Max-Q-Technologien für Notebooks | NVIDIA

Was ist Max-Q?

Source

Videos

(Nvidia Control Panel Optimal Setting)

Windows 10 Graphics Settings ⚡️ How to Enable Discrete Graphics Card on Laptop

?HOW TO CONFIGURE AND OPTIMIZE NVIDIA VIDEO CARD / INCREASE FPS IN GAMES [2022]

Proper setting of Nvidia video card driver on maximum performance and FPS02 in games games? There is a solution! Nvidia GeForce setup.

How to enable a powerful video card on a laptop? Switching video cards!

How to raise FPS by 50{a4532ba0a44cc87d61b1e887602ef60331ba437e420acb54472cd391f3ddeed0} on GeForce graphics cards on Windows 10 — GPU Planning!

How to select a graphics card in Windows 10. Switching graphics cards on laptops

How to enable GPU scheduling with hardware acceleration windows 10 | #windows10

Intel hd graphics 6000 GPU — Build-Tweak

| 3DMark06 | 8091 |

| 3DMark11 | 1458 |

| Cinebench R11.5 OpenGL | 22.5 |

| 3DMark06 | |

|---|---|

| 184. NVIDIA Quadro K610M | 8157 |

| 185. NVIDIA Quadro FX 3600M | 8136 |

| 186. Intel HD Graphics 6000 | 8091 |

| 187. ATI Mobility Radeon HD 5830 | 8050 |

188. NVIDIA GeForce 710M NVIDIA GeForce 710M |

7993 |

| Rating of all mobile video cards | |

The Intel HD Graphics 6000 is a Broadwell generation integrated graphics card that was released in the first quarter of 2015. At the moment, it can be found in ULV SoCs (15W) such as Core i5-5250U or Core i7-5650U. The GT3 GPU has 48 execution units, slightly more than the HD 5000 (40 EU). Depending on the processor model, the maximum GPU frequency ranges from 950 to 1000 MHz.

The

Broadwell GPU is based on the Intel Gen8 architecture and has been optimized in some ways compared to the previous Gen7.5 (Haswell). In particular, shader arrays have been reorganized and now offer 8 execution units each. The three sub-fragments form a total of 24 EUs. Combined with other improvements such as a larger L1 cache and an optimized interface, the integrated GPU is faster and more efficient than its predecessor.

The HD Graphics 6000 is the top version of the Broadwell family and consists of two parts with 48 EUs. In addition, there are low-end (GT1, 12 EU), mid-range (GT2, 24 EU) and high-end (GT3e, 48 EU + EDRAM) options.

In addition, there are low-end (GT1, 12 EU), mid-range (GT2, 24 EU) and high-end (GT3e, 48 EU + EDRAM) options.

All Broadwell GPUs support OpenCL 2.0 and DirectX 11.2. Now the video engine can decode H.265 using both the functions of the chip itself and the GPU shaders. Three displays can be connected via DP 1.2/EDP 1.3 (max. 3840×2160) or HDMI 1.4a (max. 3840×2160). HDMI 2.0 is still not supported.

Depending on the processor model, the maximum GPU frequency ranges from 950-1000 MHz. However, due to the low TDP in 3D applications, the frequency can drop much lower. It is assumed that the HD Graphics 6000 outperforms the HD 5000 by 20-25% and is equal to the GeForce 820M. Games from 2014/15 tend to run exclusively on very low settings.

Using a new 14nm process, Broadwell ULV chips exhibit a TDP of 15W, making them suitable for thin ultrabooks. Power consumption is flexible enough to go down to 9.5 W, thus having a noticeable effect on overall performance.

| Manufacturer: | Intel |

| Series: | HD Graphics |

| Code: | Broadwell GT3 |

| Architecture: | Broadwell |

| Threads: | 48 — unified |

| Clock frequency: | 300 — 1000 (Boost) MHz |

| Memory bus width: | 64/128 bit |

| Total memory: | yes |

| DirectX: | DirectX 11. 2 2 |

| Technology: | 14 nm |

| Optional: | QuickSync |

| Release date: | 01/05/2015 |

* Specified clock frequencies are subject to change by the manufacturer

Intel HD 6000 is an integrated graphics card used in Broadwell processors (fifth generation Intel core i5 and core i7). This chip appeared in the first half of 2015.

Specifications

Intel HD 6000 has pretty good specifications, the adapter outperforms many other integrated solutions and even some discrete graphics cards.

48 unified processors are responsible for the operation of the video card. The clock frequency of the graphics chip on top processors reaches 1000MHz, on weaker CPUs this value will be slightly lower.

Since integrated video cards do not have their own memory (they simply have nowhere to solder the necessary chips), the amount of HD 6000 memory directly depends on the amount of RAM and the settings in the UEFI BIOS.

The performance of this video card depends on the frequency of the RAM, so to get the most power you will have to fork out for a good memory. But do not get too carried away and buy overclocker RAM sticks, they are too expensive, for their price you can buy a good discrete video card.

The memory bus width is 128 bits, in this parameter the chip is on par with some of the discrete solutions, and it even surpasses some of the external adapters.

There is support for DirectX 11.2, OpenGL 4.3, OpenCL 2.0 and proprietary Intel Quick Sync API. Therefore, the Intel HD 6000 should run any games and allow you to work in professional software. Alas, but this is only in theory, in practice the insufficient speed of the video chip will affect.

What is the Intel HD 6000 suitable for?

Mostly low-demanding tasks, such as running various programs that do not require high performance from the video card, watching movies, starting video editing, and playing old games.

Everything is clear with office programs, even the most mediocre video adapter will cope with them, so the HD 6000 will be more than enough.

Movies and multimedia

For watching movies, Intel HD Graphics 6000 is also enough in full. The graphics chip will handle video files in HD, FullHD, QuadHD (2K) and even UltraHD (4K) resolutions. It is also possible to play 3D (only movies, you can’t even dream of playing games in 3D, all you need for this is a TV or monitor that supports such a resolution. Alas, films in a specific resolution (for example 8K) HD 6000 will not pull, but with only the highest-end discrete graphics cards can handle such content.0003

With the ability to play everything rather mediocre, few games from modern projects will run with an acceptable frame rate. Even the newest models of integrated video cards cannot cope with such an impossible task, not to mention the Intel HD 6000, which is not a top-end chip. Although it will cope with many modern indie projects, because excellent graphics are rarely present there.

Fans of old games can safely use this video adapter. In such tasks, the graphics chip will be able to fully reveal itself.

The HD 6000 can provide a slight boost in video editing or graphics processing applications, although for more impressive speed you might want to buy an expensive discrete graphics card.

GPU overclocking is completely missing. Although there is an opportunity to slightly increase the performance of the video card by overclocking the RAM installed on the computer.

Drivers

The driver for Windows is nothing special, it copes with its task very well, works without any problems. Although in some aspects it is inferior to drivers from Nvidia or AMD.

Installing the software under Windows will not cause you any difficulties. Everything is standard, just download the driver from the official Intel resource and install it on your computer.

For users of systems based on the Linux kernel, things are much more complicated. You will have to make a choice of driver, because there are two of them. The manufacturer’s driver is preferred, but it’s difficult to install and is not supported on all Linux distributions.

The second driver is called «free» and is used by default in the operating system. The advantages of a free driver include no need for installation and automatic updates along with the distribution, but it will provide rather mediocre performance and compatibility with games or programs.

Which discrete graphics card is the HD 6000?

Compared to discrete graphics cards, the performance of the Intel HD 6000 is on par with the Nvidia GT 720, which is very good. If you want to buy a GT 720 or a weaker video adapter with an Intel HD 6000 integrated chip, don’t make such a stupid purchase.

Description

Intel started HD Graphics 6000 sales on January 5, 2015. This is Broadwell architecture notebook card based on 14 nm manufacturing process and primarily aimed at office use.

In terms of compatibility, this is a PCIe 2.0 x1 card. Power consumption — 15 W.

It provides weak performance in tests and games at 0.55% of the leader, which is NVIDIA GeForce GTX 1080 SLI (mobile).

How the GPU works at a low level — CoreMission

This article summarizes some of the low-level aspects of the GPU. Although the set of instructions available for GPU programming is simpler than for CPUs, the content of these instructions also does not exactly match the actions of the hardware. The reason is that we can’t just program the GPU without some kind of API which is an abstraction over its inner workings. And while more explicit APIs like DirectX 12 and Vulkan have emerged in the last few years that bridge that gap between abstraction and actual hardware operation, there are still a few low-level parts worth explaining.

Author’s Note: Although this post is not about the graphics or computational API, I will use some of the terms used in Vulkan, mainly because it is the only modern and multi-platform API.

Table of Contents

GPU Parts — Summary

Let’s list the most important parts of the GPU at the lowest possible level:0008

- L0$ (rare in consumer-grade hardware)

- L1$ shared in Shared Memory on each compute unit

- L2$ on GPU 9008 9008 9008 9008 9008 external memory (device memory)

- command scheduler

- command dispatcher

- TMU, ROP — fixed pipeline units

- …and others

All of the above, of course, are grouped into many hierarchies that ultimately make up graphics cards.

The GPU is one big asynchronous wait device

At least that’s how you can think of it in terms of the CPU. Of course, this is much more complicated than anything that can be seen from the processor side. But still the logic remains. You submit some work and wait for the results while doing other things. You need to provide some kind of synchronization between what has been done and what has not been done. Fence in Vulkan is the main mechanism for synchronizing the CPU and GPU.

Fence in Vulkan is the main mechanism for synchronizing the CPU and GPU.

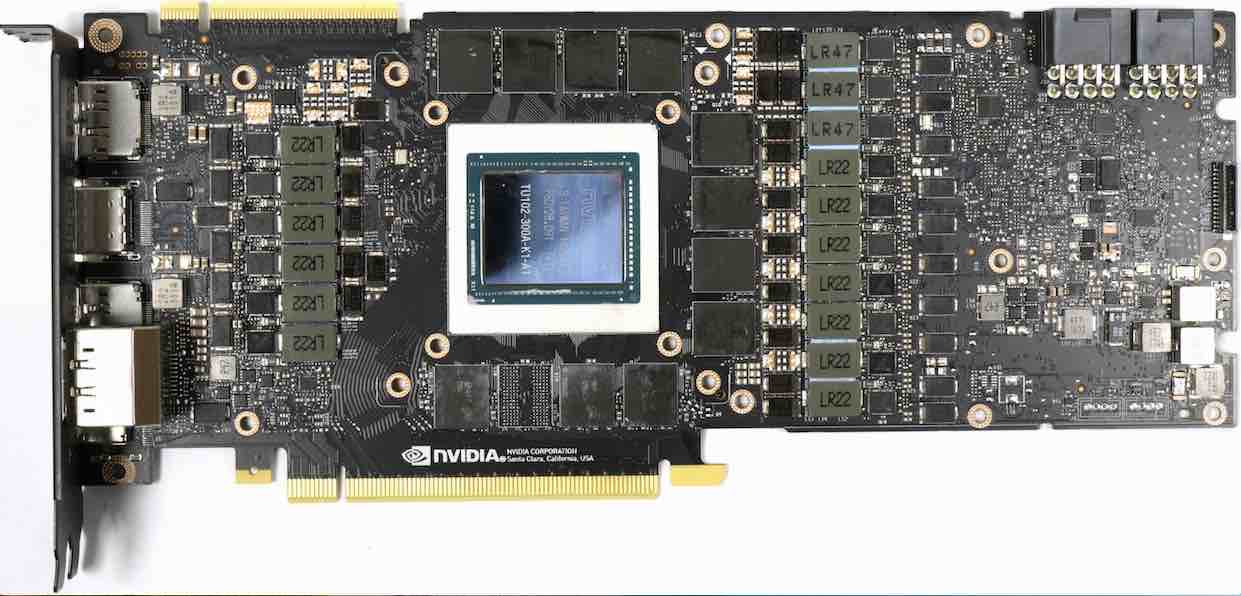

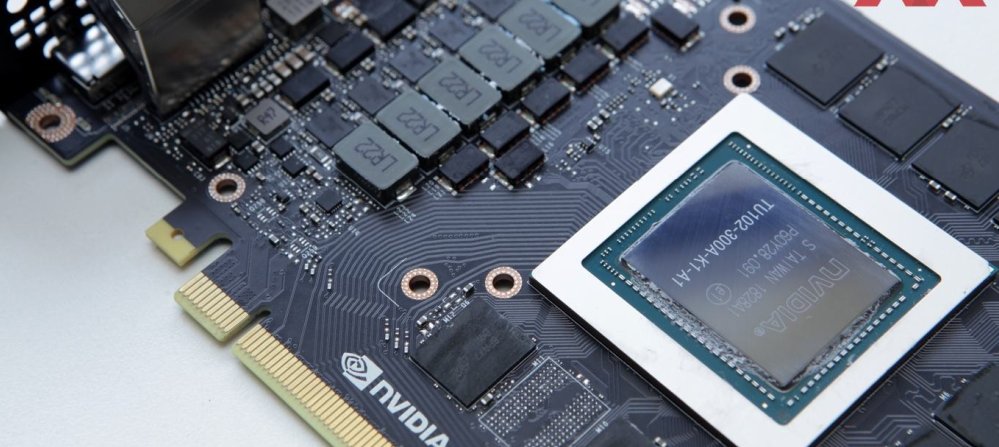

GPU cores

Well, this may come as a surprise to many, but the so-called cores in the GPU world are more of a marketing term. Reality is much more complicated. CUDA cores in Nvidia cards, or simply cores in AMD GPUs, are very simple blocks that perform specific floating point operations. 1 They can’t do fancy things like processors (eg, branch prediction, out-of-order execution, data fetching). At the same time, they cannot work independently. The kernels are attached to a group of neighbors (which will be discussed further in the Scalars and Vectors section). From now on, we will call them shading modules. While some may view the cores as very primitive ones that cannot work on their own, we should also mention the Compute Unit, a much larger hardware construct that includes many of these cores. The compute unit has a lot more functions than a regular CPU core: caches, registers, etc. There are GPU-specific elements: scheduler, dispatcher, ROP, TMU, interpolators, blenders, and more. Although all this together may somewhat resemble a CPU, none of the blocks can be considered a core. The shading block is too simple, and the computational one is much more.

There are GPU-specific elements: scheduler, dispatcher, ROP, TMU, interpolators, blenders, and more. Although all this together may somewhat resemble a CPU, none of the blocks can be considered a core. The shading block is too simple, and the computational one is much more.

Each manufacturer has its own name for the Compute Unit:

- Nvidia uses SM (Streaming Multiprocessor)

- AMD uses CU (Compute Unit) and WGP (Workgroup Processor since RDNA architecture) )

- Intel uses EU (Execution Unit) and Xe Core (starting with the new Arc architecture)

It is worth mentioning that since the Turing architecture, two new types of cores have appeared: RT cores and tensor cores (AMD also has its own cores RT since RDNA2 architecture). RT cores accelerate BVH (Bounding Volume Hierarchy, hierarchy of bounding volumes) 2 , and tensor cores speed up FMA operations on lower-precision matrices (usually we can neglect 32-bit floating point numbers for machine learning).

You can use RT cores in both AMD and Nvidia cards with universal tracing extensions.

Tensor cores in Nvidia GPUs can be used directly with the VK_NV_cooperative_matrix. It works with 32-bit and 16-bit floating point numbers.

If you are interested in knowing the number of SMs on your GPU and need to use this information in your code, there are several options (at least on Nvidia GPUs):

- VkPhysicalDeviceShaderSMBuiltinsPropertiesNV

- NVML library — Nvidia library comes with drivers, but C header file is distributed with CUDA SDK

Simultaneity and parallelism

going on inside him. I find that the concepts of concurrency and parallelism are too easily interchangeable in many articles. In general, the SPMT (Single Program Multiple Threads) approach does not allow us to use this difference: GPU manufacturers do not disclose what is inside the SM / CU (except for the size of shared memory and the size of the working group), and especially how many shading units are hidden. This is the main reason why concept substitution doesn’t matter when writing shaders. But let’s shed some light on what actually happens when submitting work to the GPU. We will use the streaming multiprocessor scheme from Nvidia’s Turing architecture: Turing SM.

This is the main reason why concept substitution doesn’t matter when writing shaders. But let’s shed some light on what actually happens when submitting work to the GPU. We will use the streaming multiprocessor scheme from Nvidia’s Turing architecture: Turing SM.

As you can see, each SM has 64 FP32 shading units. Notice how big the registers are. Now let’s use a slightly contrived example and suppose we distribute the work to 4 independent subgroups (128 threads) containing only single precision floating point numbers. The first and second subgroups (64 threads) can be executed in parallel , since there are enough hardware shading modules to cover them. The third and fourth will be loaded into the register and made to wait for the completion / stop of the previous one. If a stop occurs, the third and fourth will be executed simultaneously with the first and second. Of course, it may happen that, for example, only the 2nd subgroup stops, then the 1st and 3rd can be executed in parallel. This distinction in most cases does not give you any advantage when writing code, but by now it should be clear that both cases — simultaneous and parallel — occur on the GPU and mean different things.

This distinction in most cases does not give you any advantage when writing code, but by now it should be clear that both cases — simultaneous and parallel — occur on the GPU and mean different things.

If you come from the CPU world, then GPU concurrency is a concept similar to SMT and Hyper Threading, but on a different scale.

Smallest work block

Once we have the data ready, we can send it to the Workgroup. Workgroups are a construct that includes at least one work unit (corresponding to one hardware thread), which is represented as a single unit. It can be one operation or thousands. But it doesn’t matter to the GPU how much data we provide, because it’s all divided into groups corresponding to the underlying hardware…0003

…and these groups have different names depending on the manufacturer:

- Warp — for Nvidia

- Wave(fronts) — for AMD

- Wave — when using DX12

- Subgroup — when using Vulkan (starting from 1.

1)

1)

The length of the subgroups depends on the manufacturer. AMD used to have 64 floats on Vega cards, and now with Navi the combination is 32/64. Nvidia uses 32 floats. Intel, on the other hand, can run in 8/16/32 configurations. 3 Subgroup sizes are critical to understanding the difference: although the smallest work unit is actually a single thread, the GPU will run threads at least as large as a work group! May not seem optimal, but given that GPUs are designed for huge amounts of data, this is actually very fast. All non-optimal data combinations that do not fully fit the subgroup are mitigated by a mechanism called delay hiding.

It’s also worth mentioning that we process data in batches because raster blocks output data as quads (not individual pixels), and historically GPUs could only process graphics pipelines. Quads are needed for derivatives that will later be used by samplers (without going into details: we can use mip-mapping thanks to this pipeline). Currently, equipment manufacturers are trying to balance the size of the subgroup because:

Currently, equipment manufacturers are trying to balance the size of the subgroup because:

- smaller subgroups means less divergence cost but also less memory coalescence (efficiency)

- larger subgroups means expensive divergence but increases memory coalescence (flexibility)

Register file and cache

There is one important hardware aspect to understand: register size. If I had to quote just one fact to describe the difference between a CPU and a GPU, I would say this: GPU register files are bigger than cache! Let’s take Nvidia’s RTX 2060 as an example. There is a 256 KB register per SM, while the L1/shared memory for that particular SM is totaling 96 KB.

Hiding Latency

Knowing how large GPU registers are, we can now understand why GPUs are so efficient at processing large amounts of data. Most of the work is done at the same time. Even when only one thread in a subgroup has to wait for something (for example, a memory fetch), the GPU does not wait. The entire subgroup is marked as stopped. Another suitable subgroup is executed from the pool of subgroups stored in registers. As soon as the previous operation that caused the delay is completed, the subgroup is started to complete work on it again. And in real life scenarios, this happens to thousands of subgroups. The ability to immediately switch to a different subgroup if there is a delay is critical for the GPU. It hides the timeout by running another set of matching subgroups.

The entire subgroup is marked as stopped. Another suitable subgroup is executed from the pool of subgroups stored in registers. As soon as the previous operation that caused the delay is completed, the subgroup is started to complete work on it again. And in real life scenarios, this happens to thousands of subgroups. The ability to immediately switch to a different subgroup if there is a delay is critical for the GPU. It hides the timeout by running another set of matching subgroups.

Some equipment:

- active Subgroup, the active subgroup is the one that is running

- resident Subgroup/Subgroup in flight, the resident subgroup/subgroup in flight is stored in marked as ready to run or restart

Occupancy

Short version: ratio of how well the GPU is occupied.

Being busy is not about how well we use the GPU! This is the number of resident subgroups (option to hide the delay). ALUs can experience maximum load even at low occupancy, although usage performance is quickly degraded in this case.

Extended version: Let’s assume that a computational unit can have 32 resident subgroups (total register file capacity). Now let’s send 32 completely independent subgroups with work to fill it completely. Even if 31 of the 32 possible subgroups are stopped, there is still an opportunity to hide the delay when the 32nd one is executed. This is the ratio: how many independent subgroups went for execution out of all possible subgroups in flight.

Now suppose you send 8 workgroups of 128 threads each (covers 4 subgroups). This time, the workgroups need to be processed together (if the groups are completely independent, the driver will divide them into 4 separate subgroups, creating exactly the same situation as above). In other words, each thread now needs 4 registers. The computational block remains the same, so there are still only 32 possible subgroups in it. Therefore, it turned out to be 4 times less opportunities to hide the delay (switch to other 4 subgroups), because each working group is now 4 times larger. This means that the occupancy was only 25%. It is important to remember the following here: Increasing the use of registers for each subgroup reduces overall employment. Unless the data is exceptionally well structured, workgroup distributions almost never reach 100% employment. Extreme case: if you send one workgroup to the entire compute block register size, then there is nothing to toggle on stop (no way to hide the delay).

This means that the occupancy was only 25%. It is important to remember the following here: Increasing the use of registers for each subgroup reduces overall employment. Unless the data is exceptionally well structured, workgroup distributions almost never reach 100% employment. Extreme case: if you send one workgroup to the entire compute block register size, then there is nothing to toggle on stop (no way to hide the delay).

Lack of registers and unloading of registers

Let’s continue the previous example. What if each thread needs even more register space? Every time we increase the flow requirements, measured in the required register space, we also increase the register pressure. With a low register shortage, there’s nothing to worry about, we’ll just reduce the occupancy (which is fine too, as long as there’s always work to hide the delay). But as soon as we start to increase the use of registers per thread, we will inevitably reach a level where the driver can decide to unload registers. Register spilling is the process of moving data that would normally be stored in registers into the L1/L2 cache and/or external memory (device memory in Vulkan). The driver decides to do this if the occupancy is really low, in order to save some room for latency hiding at the cost of slower memory access.

Register spilling is the process of moving data that would normally be stored in registers into the L1/L2 cache and/or external memory (device memory in Vulkan). The driver decides to do this if the occupancy is really low, in order to save some room for latency hiding at the cost of slower memory access.

Note: while uploading to the device’s internal memory may actually improve performance, 4 this is less obvious when uploading to external memory.

Scalars and vectors

In one of the previous paragraphs it was said that we cannot do work smaller than the size of the working group. Actually, that’s not all. AMD hardware is inherently vector. This means that they have separate blocks for vector and scalar operations. 9Nvidia’s 0641s, on the other hand, are scalar in nature , which means it’s possible for subgroups to handle mixed data types (mostly a combination of F32 and INT32). So the previous paragraph was a lie and we can actually do less work than a Subgroup? The correct answer is: we can’t, but the scheduler can. The division of work to be done depends on the scheduler. This doesn’t change the fact that the scheduler will still accept a number of scalars no less than the size of the subgroup, it just won’t use it. In the same scenario, AMD should use the entire vector, but unnecessary operations will be nulled and discarded. 5 Intel went even further with this solution because the size of the vector can vary!

The division of work to be done depends on the scheduler. This doesn’t change the fact that the scheduler will still accept a number of scalars no less than the size of the subgroup, it just won’t use it. In the same scenario, AMD should use the entire vector, but unnecessary operations will be nulled and discarded. 5 Intel went even further with this solution because the size of the vector can vary!

The previous paragraphs remain valid. If you don’t micro-optimize the layout of the data that is passed to the GPU, the difference can be ignored. AMD’s approach should give really good results if the data is well structured. For more random data, Nvidia is likely in the lead.

Use of unrelated data types

We have two cases here:

- Using types larger than the default native size

- Using types smaller than the normal native size

Most consumer-grade GPUs use 32-bit floating point as the most common native size. What happens if we use a double precision floating point number (64 bits)? If not too many are sent at the same time, nothing worse will happen than maximum register usage (registers in GPUs use 32-bit elements, so 64-bit floats take up two of them). Most graphics manufacturers provide separate F64 blocks or special purpose blocks for more precise operations.

Most graphics manufacturers provide separate F64 blocks or special purpose blocks for more precise operations.

It’s more interesting to use smaller than original sizes. First, we do have hardware that can handle this (at least for the time being). Floating-point half-precision numbers have been in high demand for several years now. Not only because of machine learning, but also for specific gaming purposes. Turing has a very interesting architecture here as it separates GPUs into GTX and RTX variants. The former lacks RT cores and tensor cores, while the latter has both. RTX tensors are special FP16 blocks that can also handle INT8 or INT4 types. They specialize in FMA (Fused Multiply and Add) matrix operations. The main purpose of tensor cores is to use DLSS, 6 but I blindly assume that the driver may decide to use them for other operations as well. The GTX version of the Turing architecture (1660, 1650) does not have tensor cores, instead it has freely available FP16 blocks! They no longer specialize in matrix operations, but the scheduler can use them at will if needed.

16-bit floating point numbers are also known as half-precision numbers .

What happens if we use F16 on a GPU that has no hardware equivalent, neither in the Tensor core nor in individual FP16 modules? We’re still using FP32 blocks for processing, and more importantly, we’re wasting register space because no matter how big the number is, we’re still putting it in a 32-bit element. But there is one big improvement: less bandwidth is needed.

Branching is bad, right?

You may have heard this many times, but the correct answer depends on the circumstances. When someone speaks negatively about branching, they mean that it occurs within a subgroup. In other words, branching within a subgroup is bad! But that’s only half the story. Imagine you are using an Nvidia GPU and are submitting 64 threads of work. If 32 consecutive streams end in one path, and the remaining 32 in another, then branching is normal. In other words, perfectly branching between subgroups of is normal. But if only one thread out of the 32 packed floats is branching, then another one will be waiting for it (marked as inactive) and we will end up branching within a subgroup.

In other words, perfectly branching between subgroups of is normal. But if only one thread out of the 32 packed floats is branching, then another one will be waiting for it (marked as inactive) and we will end up branching within a subgroup.

L1 Cache, LDS, and Shared Memory

Depending on the common name, you may see LDS (Local Data Storage) and Shared Memory, in fact they both mean the same thing. LDS is the name used by AMD and Shared Memory is a term coined by Nvidia.

L1$ and LDS/Shared Memory are different things, but they both take up the same space on the hardware. We have no explicit control over the use of the L1 cache. All this is controlled by the driver. The only thing we can program is a local data store that shares space with an L1 cache (with configurable proportions). Once we start using shared memory, we might run into some problems…

Memory bank conflicts

Shared memory is divided into banks. You can think of banks as orthogonal to your data. How each bank is mapped to memory access depends on the number of banks and word size . Let’s take 32 banks with a 4-byte word as an example. If you have an array of 64 floats, then only the 0th and 32nd elements will end in bank1, the 1st and 33rd will end in bank2, and so on. Now, if each thread in the subgroup accesses a unique bank, this is the ideal scenario since we can do it in a single load instruction. A bank conflict occurs when 2 or more threads request different data from the same memory bank. This means having to serialize access to data cells, which is pure evil. In the worst case, you can end up with all threads accessing the same memory bank, but getting 32 different values, which actually means 32 times more time. 7

How each bank is mapped to memory access depends on the number of banks and word size . Let’s take 32 banks with a 4-byte word as an example. If you have an array of 64 floats, then only the 0th and 32nd elements will end in bank1, the 1st and 33rd will end in bank2, and so on. Now, if each thread in the subgroup accesses a unique bank, this is the ideal scenario since we can do it in a single load instruction. A bank conflict occurs when 2 or more threads request different data from the same memory bank. This means having to serialize access to data cells, which is pure evil. In the worst case, you can end up with all threads accessing the same memory bank, but getting 32 different values, which actually means 32 times more time. 7

Accessing a specific value from the same bank by multiple threads is not a problem. Let’s take it to the extreme: if all threads access 1 value in 1 bank, it’s called broadcasting — only one read from shared memory is performed internally, and this value is broadcast to all threads. If some (but not all) threads access 1 value from a particular bank, then we have multicast .

If some (but not all) threads access 1 value from a particular bank, then we have multicast .

Some examples:

- Optimal shared memory access:

// evenly distributed

arr[gl_GlobalInvocationID.x];

//not evenly distributed, but each thread has access to different banks

arr[gl_GlobalInvocationID.x * 3];

|

// evenly distributed arr[gl_GlobalInvocationID.x]; //not evenly distributed, but each thread has access to different banks arr[gl_GlobalInvocationID.x * 3]; |

- Bank conflict:

// «duplication» conflict

// — when multiplied by 2

// — when using double precision numbers

// — when using structures of 2 floating point numbers

arr[gl_GlobalInvocationID.x * 2];

|

// «duplication» conflict // — when multiplying by 2 // — when using double precision numbers // — when using structures of 2 floats arr[gl_GlobalInvocationID. |

- Broadcast:

arr[12]; //with constants

arr[gl_SubgroupID] //with variables

|

arr[12]; //with constants arr[gl_SubgroupID] //with variables |

Due to latency and shared memory speed hiding, bank conflicts may not actually matter. Until one subgroup detects conflicting access, the scheduler can switch to another.

Bank conflicts can only occur within subgroups! There is no such thing as bank conflicts between subgroups.

There may also be a register conflict between banks.

Compiling a shader process is like… Java

Modern APIs like Vulkan and DX12 make it possible to save shader compilation from running a graphics/computing application. We compile GLSL / HLSL into SPIR-V in advance and save it as an intermediate representation (bytecode) only for further use by the driver. But the driver takes it (perhaps with last-minute changes, such as clarification of constants) and compiles it again for the specific manufacturer and/or hardware code. In essence, this is very similar to managed C# or Java when code is compiled to IL, which is then compiled/interpreted by the CLR or JVM on the specific hardware.

But the driver takes it (perhaps with last-minute changes, such as clarification of constants) and compiles it again for the specific manufacturer and/or hardware code. In essence, this is very similar to managed C# or Java when code is compiled to IL, which is then compiled/interpreted by the CLR or JVM on the specific hardware.

Instruction Set Architecture (ISA)

If you delve into how the GPU executes instruction sets, this is a really great read. There are two companies that freely share ISAs for their specific hardware:

- AMD ISA

- Intel ISA

Bonus — vendor names for Linux drivers

My main OS has been Linux for over 6 years now. As always, the driver situation may surprise many coming from Windows, so let’s dive into the complicated political situation with Vulkan’s drivers.

For Nvidia we have 2 options:

- nouveau — can be used for normal day to day work like office or watching YouTube but not for gaming and computing.

Development is hampered by some Nvidia solutions.

Development is hampered by some Nvidia solutions. - Proprietary Nvidia driver — works flawlessly in most cases, but without code access.

AMD:

- AMDVLK is an open source version.

- Mesa 3D is a driver library that provides an open source driver (basically a copy of AMD-VLK). The most popular choice when it comes to AMD.

- AMD proprietary AMDGPU-PRO.

Intel:

- Mesa 3D — like AMD, open source driver and part of the library.

Notes

- RT Core actually does BVH traversal, rectangle intersection test, triangle intersection test, and unlike all other GPU execution patterns that are similar to SIMD, the RT core is of type MIMD.

- New architectures such as Turing may have separate execution cores for int32.

- This flexibility leads to a very interesting advantage over AMD and Nvidia.

- If shared memory/L1 or L2 caches are not sufficiently initialized.

- AMD typically compensates for this architectural choice with larger registers and caches.

/cdn.vox-cdn.com/uploads/chorus_asset/file/8634403/akrales_170518_1704_0019.jpg) x *2];

x *2];