Nvidia GeForce GTX 1080 vs Nvidia GeForce GTX Titan X: What is the difference?

Nvidia GeForce GTX 1080 (59 points) vs Nvidia GeForce GTX Titan X (51 points)

54 facts in comparison

Why is Nvidia GeForce GTX 1080 better than Nvidia GeForce GTX Titan X?

- 607MHz faster GPU clock speed?

1607MHzvs1000MHz - 2.08 TFLOPS higher floating-point performance?

8.23 TFLOPSvs6.14 TFLOPS - 32.6 GPixel/s higher pixel rate?

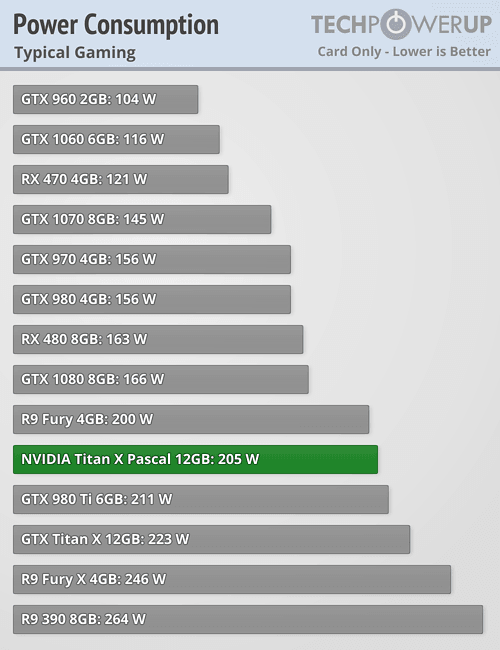

128.6 GPixel/svs96 GPixel/s - 70W lower TDP?

180Wvs250W - 747MHz faster memory clock speed?

2500MHzvs1753MHz - 2988MHz higher effective memory clock speed?

10000MHzvs7012MHz - 65.1 GTexels/s higher texture rate?

257.1 GTexels/svs192 GTexels/s - 644MHz faster GPU turbo speed?

1733MHzvs1089MHz

Why is Nvidia GeForce GTX Titan X better than Nvidia GeForce GTX 1080?

- 1.5x more VRAM: 12GB vs 8GB
- 17GB/s more memory bandwidth: 337GB/s vs 320GB/s
- 128bit wider memory bus width: 384bit vs 256bit
- 512 more shading units: 3072 vs 2560
- 800 million more transistors: 8000 million vs 7200 million
- 32 more texture mapping units (TMUs): 192 vs 160
- 16 more render output units (ROPs): 96 vs 80

User reviews

Overall Rating

Nvidia GeForce GTX 1080: 10.0/10 (1 user review)

Nvidia GeForce GTX Titan X: 0.0/10 (0 user reviews)

Features

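| Feature | Nvidia GeForce GTX 1080 | Nvidia GeForce GTX Titan X |
|---|---|---|
| Value for money | 10.0/10 (1 vote) | No reviews yet |
| Gaming | 10.0/10 (1 vote) | No reviews yet |
| Performance | 10.0/10 (1 vote) | No reviews yet |
| Fan noise | 6.0/10 (1 vote) | No reviews yet |
| Reliability | 7.0/10 (1 vote) | No reviews yet |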

Performance

1.GPU clock speed

1607MHz

1000MHz

The graphics processing unit (GPU) has a higher clock speed.

2.GPU turbo

1733MHz

1089MHz

When the GPU is running below its limitations, it can boost to a higher clock speed in order to give increased performance.

3.pixel rate

128.6 GPixel/s

96 GPixel/s

The number of pixels that can be rendered to the screen every second.

4.floating-point performance

8.23 TFLOPS

6.14 TFLOPS

Floating-point performance is a measurement of the raw processing power of the GPU.

5.texture rate

257.1 GTexels/s

192 GTexels/s

The number of textured pixels that can be rendered to the screen every second.
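The headline throughput figures above can be reproduced from the unit counts and base clocks listed on this page. The sketch below is a rough illustration, not a benchmark: it assumes the usual theoretical formulas (two floating-point operations per shading unit per clock, one pixel per ROP per clock, one texel per TMU per clock), and the `Gpu` class and function names are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    """Spec-sheet values taken from the comparison on this page."""
    name: str
    base_clock_mhz: int
    shading_units: int
    tmus: int
    rops: int


def fp32_tflops(gpu: Gpu) -> float:
    # Assumption: 2 floating-point operations (one fused multiply-add)
    # per shading unit per clock cycle.
    return 2 * gpu.shading_units * gpu.base_clock_mhz / 1_000_000


def pixel_rate_gpixels(gpu: Gpu) -> float:
    # Assumption: each ROP writes one pixel per clock cycle.
    return gpu.rops * gpu.base_clock_mhz / 1_000


def texture_rate_gtexels(gpu: Gpu) -> float:
    # Assumption: each TMU samples one texel per clock cycle.
    return gpu.tmus * gpu.base_clock_mhz / 1_000


GTX_1080 = Gpu("GTX 1080", base_clock_mhz=1607, shading_units=2560, tmus=160, rops=80)
TITAN_X = Gpu("GTX Titan X", base_clock_mhz=1000, shading_units=3072, tmus=192, rops=96)

for gpu in (GTX_1080, TITAN_X):
    print(
        f"{gpu.name}: {fp32_tflops(gpu):.2f} TFLOPS, "
        f"{pixel_rate_gpixels(gpu):.1f} GPixel/s, "
        f"{texture_rate_gtexels(gpu):.1f} GTexels/s"
    )
```

These are peak theoretical rates at base clock, which is why the GTX 1080 comes out ahead despite having fewer units: its clock advantage outweighs the Titan X's wider chip.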

6.GPU memory speed

2500MHz

1753MHz

The memory clock speed is one aspect that determines the memory bandwidth.

7.shading units

Shading units (or stream processors) are small processors within the graphics card that are responsible for processing different aspects of the image.

8.texture mapping units (TMUs)

TMUs take textures and map them to the geometry of a 3D scene. More TMUs will typically mean that texture information is processed faster.

9.render output units (ROPs)

The ROPs are responsible for some of the final steps of the rendering process, writing the final pixel data to memory and carrying out other tasks such as anti-aliasing to improve the look of graphics.

Memory

1.effective memory speed

10000MHz

7012MHz

The effective memory clock speed is calculated from the size and data rate of the memory. Higher clock speeds can give increased performance in games and other apps.

2.maximum memory bandwidth

320GB/s

337GB/s

This is the maximum rate that data can be read from or stored into memory.

3.VRAM

VRAM (video RAM) is the dedicated memory of a graphics card. More VRAM generally allows you to run games at higher settings, especially for things like texture resolution.

4.memory bus width

256bit

384bit

A wider bus width means that it can carry more data per cycle. It is an important factor of memory performance, and therefore the general performance of the graphics card.
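The bandwidth figures above follow from the effective memory speed and the bus width. As a minimal sketch, assuming peak bandwidth is simply effective clock times bus width in bytes (the function name is hypothetical):

```python
def memory_bandwidth_gbs(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth in GB/s.

    Assumption: one transfer per effective clock cycle across the full bus,
    so bandwidth = effective clock (MHz) * bus width (bytes) / 1000.
    """
    bytes_per_transfer = bus_width_bits / 8
    return effective_clock_mhz * bytes_per_transfer / 1_000


gtx_1080 = memory_bandwidth_gbs(10_000, 256)
titan_x = memory_bandwidth_gbs(7_012, 384)

print(f"GTX 1080:    {gtx_1080:.0f} GB/s")
print(f"GTX Titan X: {titan_x:.0f} GB/s")
```

This shows why the Titan X keeps a small bandwidth lead even though its memory runs slower: the 384bit bus moves 50% more data per transfer than the 256bit bus.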

5.version of GDDR memory

Newer versions of GDDR memory offer improvements such as higher transfer rates that give increased performance.

6.Supports ECC memory

✖Nvidia GeForce GTX 1080

✖Nvidia GeForce GTX Titan X

Error-correcting code memory can detect and correct data corruption. It is used when it is essential to avoid corruption, such as in scientific computing or when running a server.

Features

1.DirectX version

DirectX is used in games, with newer versions supporting better graphics.

2.OpenGL version

OpenGL is used in games, with newer versions supporting better graphics.

3.OpenCL version

Some apps use OpenCL to apply the power of the graphics processing unit (GPU) for non-graphical computing. Newer versions introduce more functionality and better performance.

4.Supports multi-display technology

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

The graphics card supports multi-display technology. This allows you to configure multiple monitors in order to create a more immersive gaming experience, such as having a wider field of view.

5.load GPU temperature

Unknown (Nvidia GeForce GTX Titan X)

A lower load temperature means that the card produces less heat and its cooling system performs better.

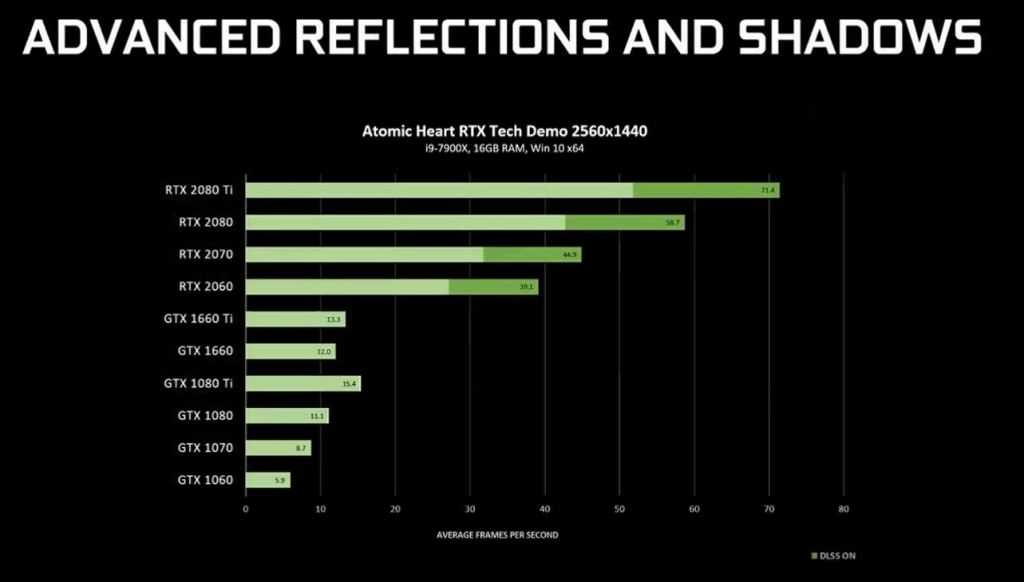

6.supports ray tracing

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Ray tracing is an advanced light rendering technique that provides more realistic lighting, shadows, and reflections in games.

7.Supports 3D

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Allows you to view in 3D (if you have a 3D display and glasses).

8.supports DLSS

✖Nvidia GeForce GTX 1080

✖Nvidia GeForce GTX Titan X

DLSS (Deep Learning Super Sampling) is an upscaling technology powered by AI. It allows the graphics card to render games at a lower resolution and upscale them to a higher resolution with near-native visual quality and increased performance. DLSS is only available on select games.

9.PassMark (G3D) result

Unknown (Nvidia GeForce GTX 1080)

Unknown (Nvidia GeForce GTX Titan X)

This benchmark measures the graphics performance of a video card. Source: PassMark.

Ports

1.has an HDMI output

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Devices with an HDMI or mini HDMI port can transfer high-definition video and audio to a display.

2.HDMI ports

More HDMI ports mean that you can simultaneously connect numerous devices, such as video game consoles and set-top boxes.

3.HDMI version

HDMI 2.0

HDMI 2.0

Newer versions of HDMI support higher bandwidth, which allows for higher resolutions and frame rates.

4.DisplayPort outputs

Allows you to connect to a display using DisplayPort.

5.DVI outputs

Allows you to connect to a display using DVI.

6.mini DisplayPort outputs

Allows you to connect to a display using mini-DisplayPort.

Nvidia Titan X Vs GTX 1080 Ti

by Sebastian Kozlowski

Nvidia has always been in pole position when it comes to providing the highest quality graphics cards money can buy. Today, we compare two of the best performing GPUs in Nvidia’s impressive lineup, the Titan X and the GTX 1080 Ti.

With the 20 series Super cards set to release in a couple of weeks, we thought it was well worth comparing some of Nvidia’s current flagship GPUs. Don’t worry; we’ll also look at how the new Super card compares to the Titan and 1080 Ti.

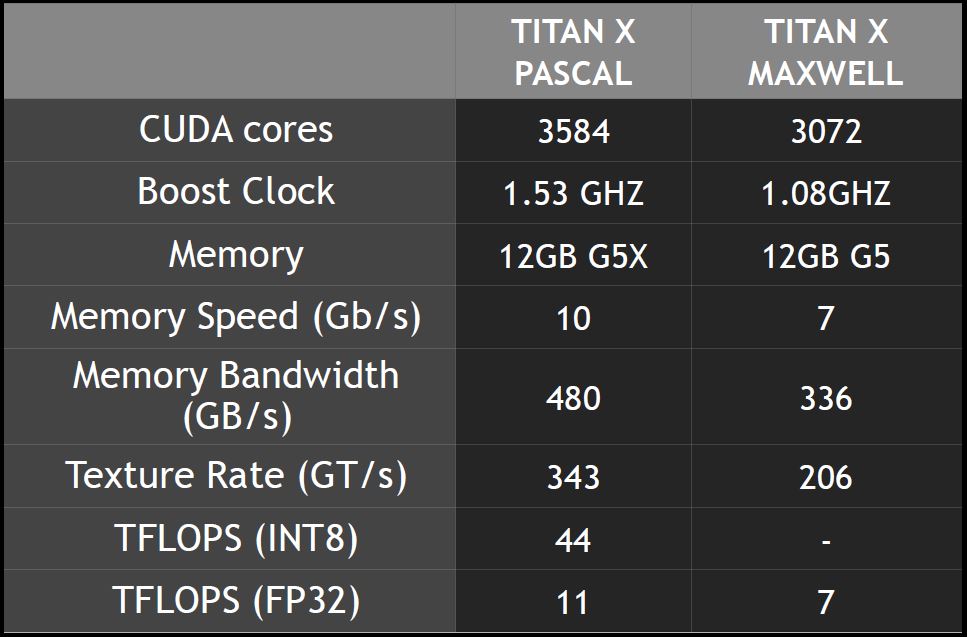

For those that aren’t up-to-speed with the Titan X, it’s a Pascal graphics card for enthusiasts which was launched back in August 2016. It’s built on the 16nm process and based upon the GP102 graphics processor. The card supports DirectX 12.0, amongst other technology, and comes with an impressive 12GB of GDDR5X memory. So in short, extremely powerful.

The Titan X is a dual-slot card, as many modern-day GPUs are, and is 10.5 inches long. It has a base clock speed of 1417MHz and a boost clock of 1531MHz.

The Titan X is up there with the quickest and most powerful GPUs currently available in today’s market. Below we have outlined the full specifications of the Titan X.

ASUS ROG STRIX GTX 1080 Ti

Clock Speed

1632 MHz

11GB GDDR5X

Length

11.73 inches

Strong factory overclock, but not the best

Best cooling performance of the bunch offers more OC headroom, plus…

Additional features like RGB lighting for extra visual flair

It’s also huge, but surprisingly not the biggest

It’s fairly expensive

NVIDIA Titan RTX Graphics Card

Clock Speed

1770 MHz Boost Clock

GDDR6 24GB

Memory Bus Width

384 Bit

Massive 24GB of GDDR6 memory capacity

Excellent build quality

Ray-tracing technology

4608 CUDA cores

Massively expensive

Titan X Tech Specs

| Feature | Titan X |
|---|---|
| CUDA Cores | 3584 |
| Base Clock | 1.42GHz |
| Boost Clock | 1.53GHz |
| Memory | 12GB GDDR5X |
| Memory Bandwidth | 480GB/s |
| TDP | 250W |
| Processor | GP102 |
| Transistors | 12bn |

As you can see, the Titan X has an impressive base clock of 1.42GHz and a boost clock of 1.53GHz, figures almost identical to those of the GTX 1080 Ti. Both the Titan and the 1080 Ti have the same 3584 CUDA cores. However, the Titan comes equipped with more memory: 12GB against the 1080 Ti’s 11GB.

The TDP for the Titan sits at 250W which, you guessed it, is identical to the GTX 1080 Ti’s once again.

So, you can see these two cards are quite close when it comes to specs, but how do they compare when it comes to real-life performance? Well, let’s have a look at Titan first and then compare the two.

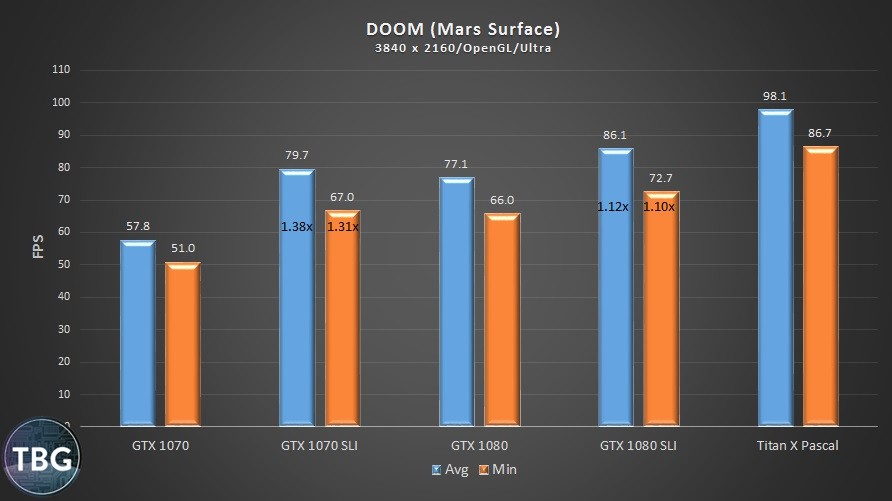

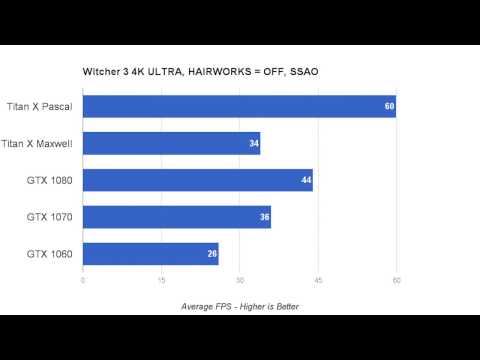

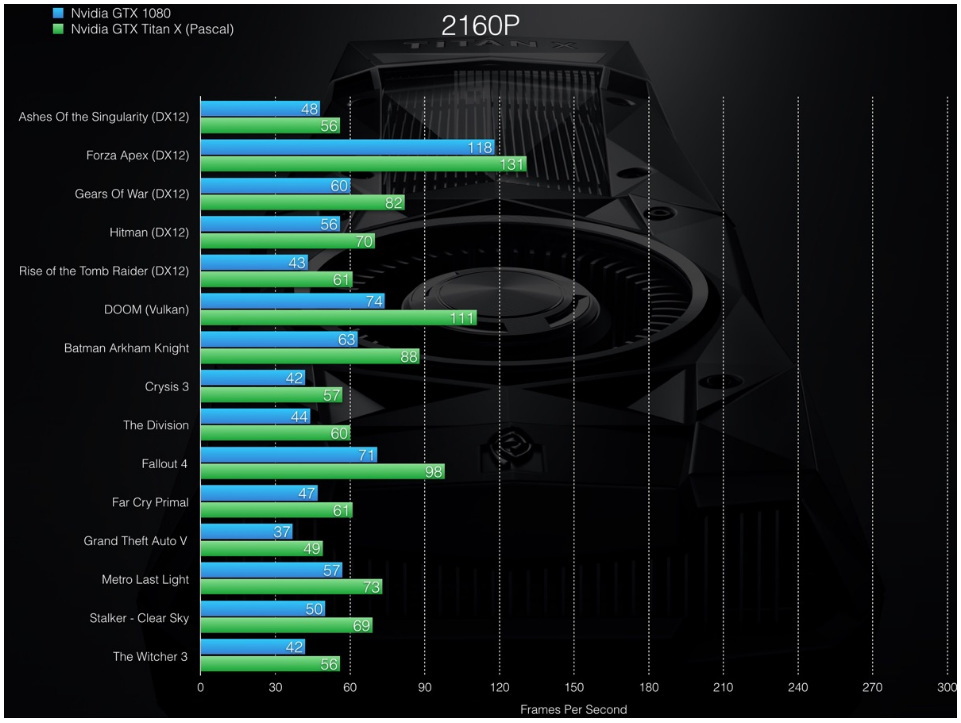

Titan X Performance

I think we can straightaway say that this card is going to absolutely smash your AAA game titles clean out of the water. The Titan X is capable of handling anything you throw at it and all in stunning 4k resolution. It’s one of the only cards which can deliver consistent 60 frames per second in most of the leading engines at ultra HD 4k.

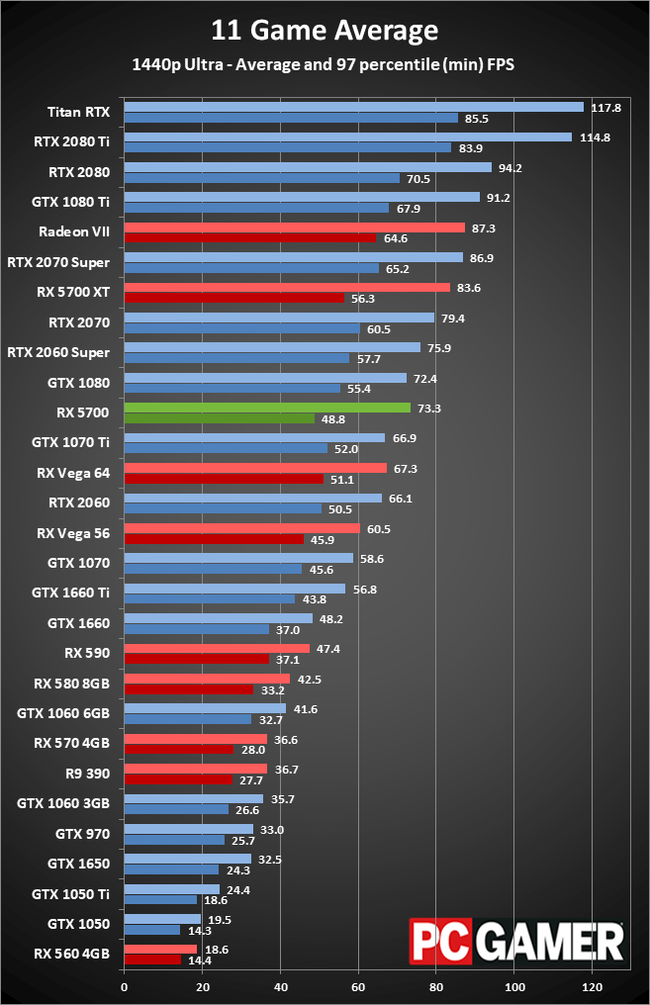

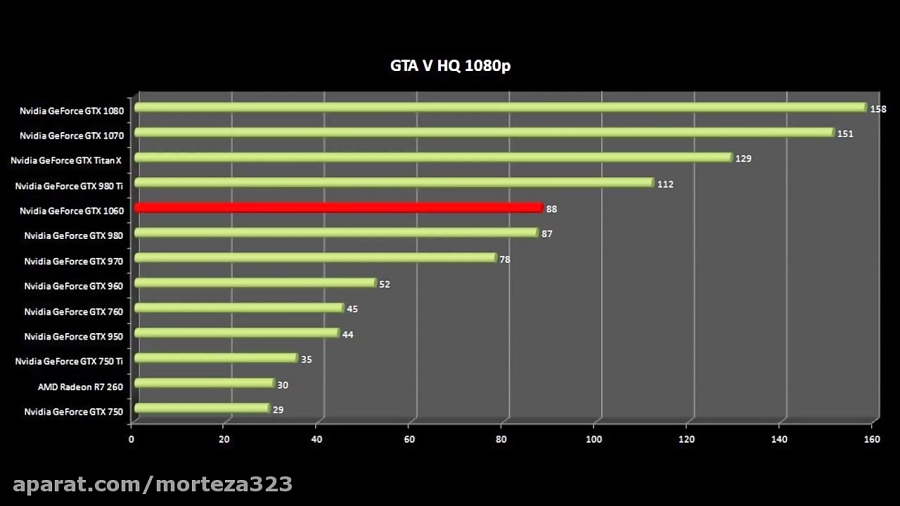

At 4K it’s safe to say that the Titan X is one of the best performing GPUs out there. It’s 20%+ faster than any custom GTX 1080, which puts it well ahead of the majority of other cards available.

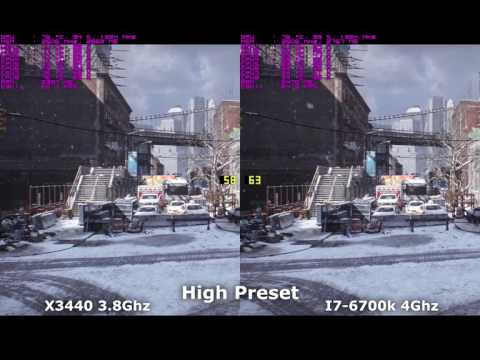

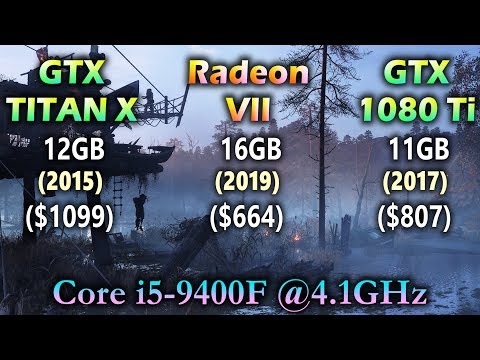

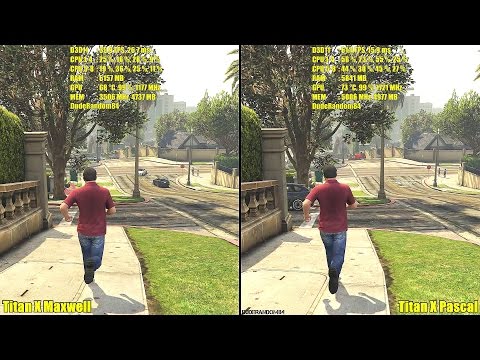

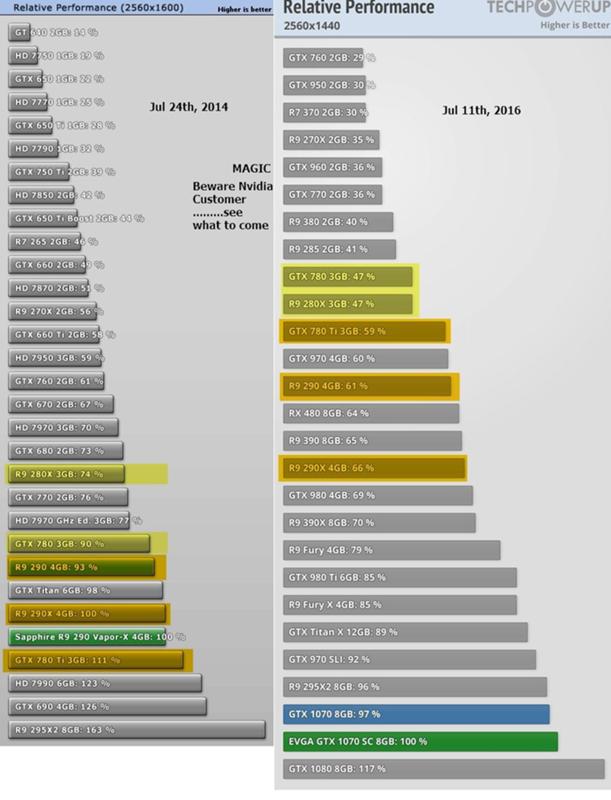

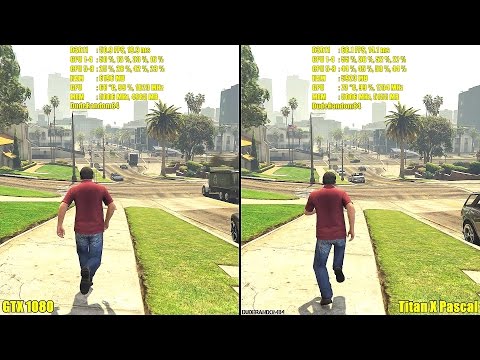

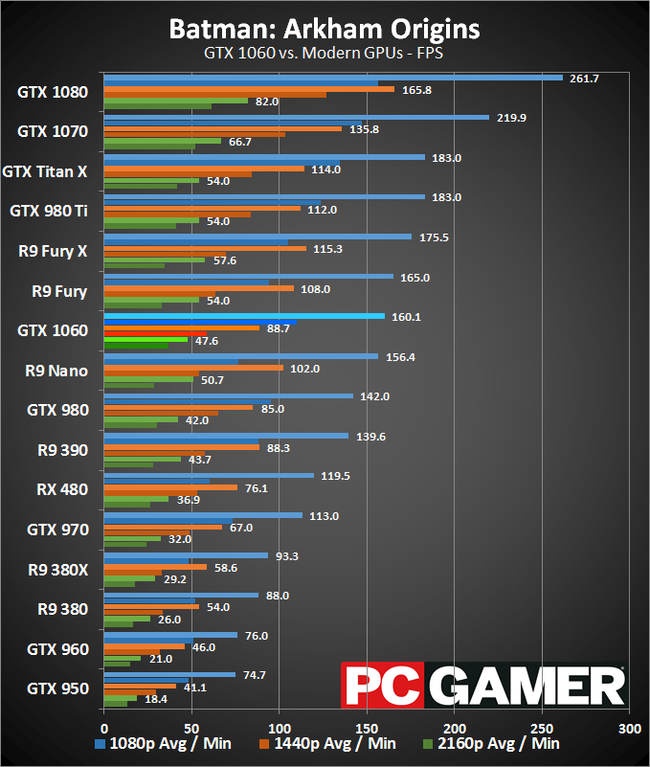

The picture underneath shows benchmarking results taken from TechPowerUp, in which the Titan X finishes 5th in an overall performance summary against some of the other market leaders.

Unfortunately for the Titan X, it gets narrowly beaten by the 1080 Ti, a trend we will see continue as the article goes on. The 1080 Ti comes in with an average of 3% better performance, not huge, but still a victory.

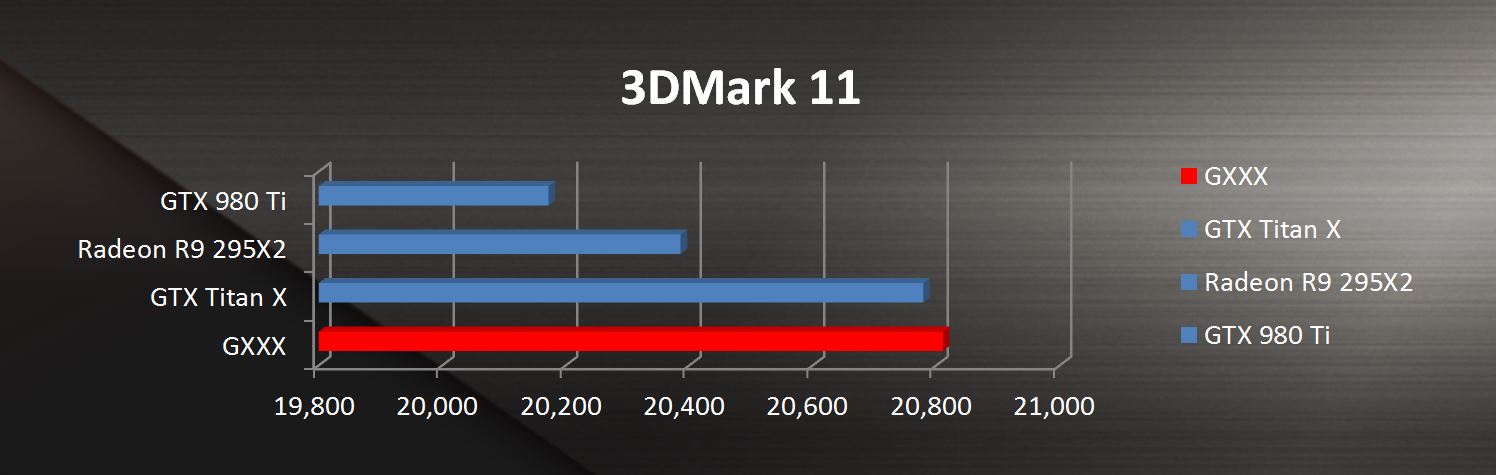

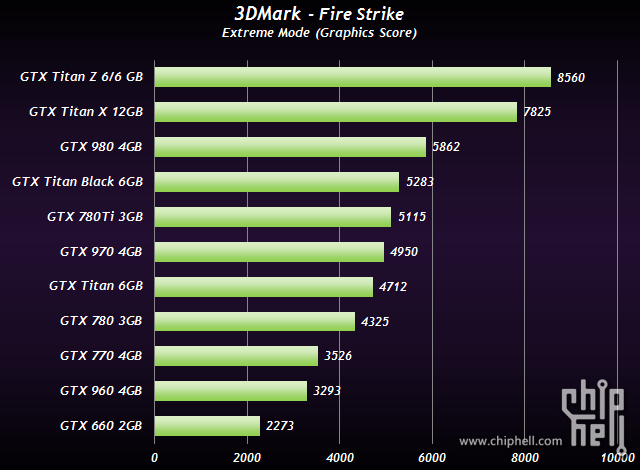

Underneath is more benchmarking that shows the Titan X’s 3DMark Fire Strike Graphics Score compared with some of the other leading cards available.

As you can see, once again the Titan X is marginally beaten by the 1080 Ti by just over 400 points. That being said, the RTX 2080 Ti (the “big daddy” as I like to call it), beats the Titan’s score quite considerably.

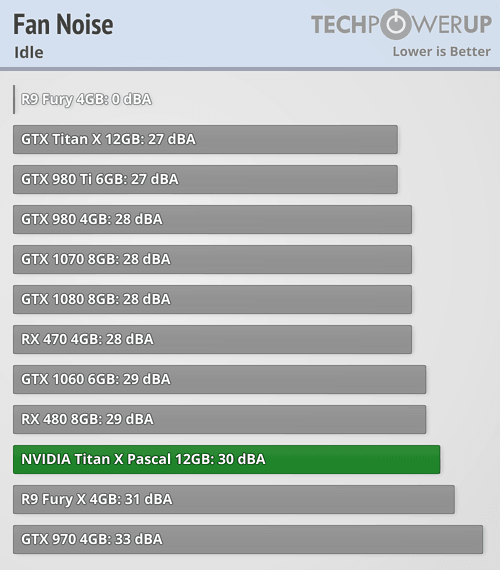

Pure benchmarking figures aside, I’d like to touch on temperatures for a moment. The Titan X has a blower-style fan. For those unaware, this means that instead of pushing the hot air around your build, like aftermarket cards usually do, a blower-style fan sucks air into the card and blows it out the back. This, in turn, results in an overall cooler build.

In stressful situations the fan will reach upwards of 50% speed, turning at 2,500RPM and creating noticeable noise. It’s nothing too off-putting, though, it must be said.

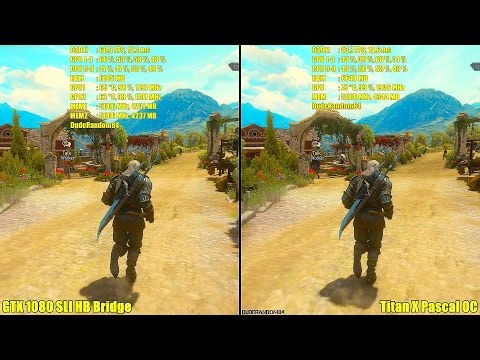

Titan X VS 1080 Ti

So, the big question a lot of people are currently asking is, how does this Titan stand up to the mighty GTX 1080 Ti? Well, we’ll do our best to try and break it down for you.

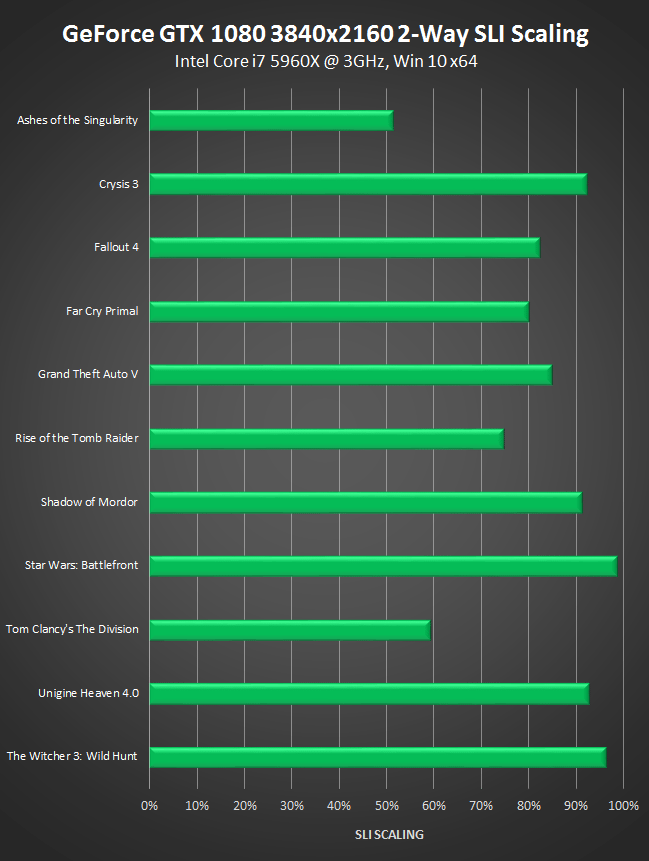

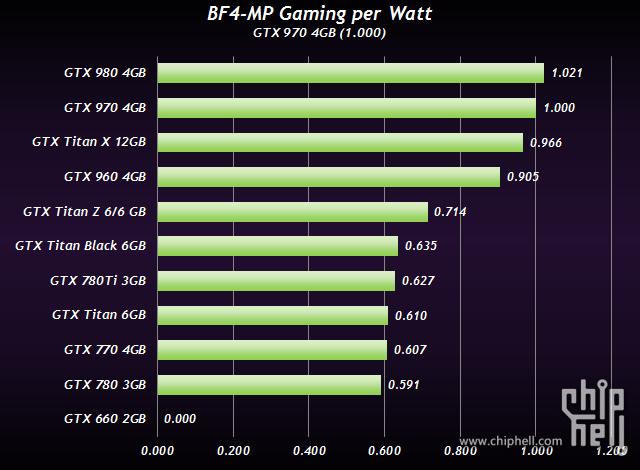

The Titan and the 1080 Ti are pretty close right across the board. Same CUDA cores, pretty similar clock speeds (1080 Ti just edging it), almost identical memory bandwidth, same TDP, processor, and transistors. One noticeable difference is the memory: the GTX 1080 Ti has 11GB, while the Titan has 12GB. But how much difference does that make?

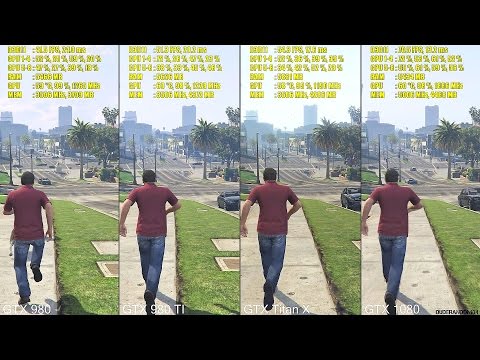

The benchmarks between both these cards are pretty close, and to be honest, that’s because they’re very close in terms of specifications. The same can be said for in-game FPS across a number of different games. Both cards break the 50 frames per second barrier in 4k ultra and in a lot of games, will break 70. The difference between the two cards, however, is very low with the most significant difference being 3-5FPS.

Closing Thoughts

So what conclusion can we take from the data collected? Well, it’s safe to say that choosing between the Titan X and the 1080 Ti is difficult, especially if you’re looking for the absolute best of the best in graphical performance. The Titan and the 1080 Ti are very closely matched in almost every aspect. That being said, the 1080 Ti probably just edges it in the benchmarks.

Ultimately, both cards perform at the highest level. However, due to the massive demand for and popularity of the 1080 Ti, I feel the Titan might lose the battle in the long run.

Which one did you decide to go for? Let us know in the comments section below!

Is the NVIDIA GeForce GTX 1080 really 2x faster than the Titan X while using 3x less power?

This has become a major talking point since NVIDIA held a press event called the NVIDIA Special Event early in the morning of May 7. NVIDIA held the event to introduce its new family of cards, which gamers around the world had been waiting for: what would the cards be called, would they look like the leaks, what would the specs be, and how fast would they be? At the event NVIDIA revealed preliminary information about the cards and also talked about some of their performance. We covered the details of the NVIDIA Special Event in a live report, and that report sparked a fair amount of debate about performance, because Jen-Hsun Huang, NVIDIA's CEO, repeated several times that

“The NVIDIA GeForce GTX 1080 will be more than 2x faster than the GTX Titan X and use up to 3x less power.”

We reported that point live, alongside the press event itself, simply relaying what happened there. This follow-up article takes that claim and analyzes it: how plausible is it, is it really possible, or put simply, is the NVIDIA GeForce GTX 1080 really as outrageously fast as NVIDIA's CEO said? What follows is my analysis, based on all the surrounding information available from both before and after the press event.

2x Perf and 3x Efficiency vs Titan X ?

Let's get straight to it. NVIDIA claims the GTX 1080 is 2x faster than the Titan X and uses 3x less power, or is 3x more efficient. NVIDIA did not elaborate much on what kind of performance this refers to. It said only that the figure comes from a comparison between the GTX 1080 and the GTX Titan X in a VR workload, using the Barbarian Demo benchmark. Now look at the graph NVIDIA used in its presentation, in the image below.

The vertical axis shows Relative VR Gaming Performance and the horizontal axis shows Power, that is, power consumption. I drew a red line for the Titan X and a yellow line for the GTX 1080. Looking at performance, the GTX 1080 sits at about 7, while the GTX Titan X sits at about 3.5, so the difference is clearly the 2x that NVIDIA stated. When it comes to power consumption, though, it may be confusing how that works out to 3x relative to the Titan X.

Here NVIDIA is talking in terms of efficiency, meaning how effectively the card does its work. So NVIDIA's "3x" means that for the Titan X to be as fast as the GTX 1080, it would have to use about 3x as much power, measured per unit of performance delivered. First we need to look at how much power each card needs for each unit of performance (the vertical axis) equal to 1.

For the GTX 1080, a performance level of 7 takes 180W, so each unit of performance costs 25.71W (180/7 = 25.71). For the GTX Titan X, each unit of performance costs 71.43W (250/3.5 = 71.43). At equal performance, then, the GTX Titan X uses more power than the GTX 1080 by a ratio of 71.43/25.71 = 2.78, or roughly 3x, as NVIDIA claims.
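The same arithmetic can be written out as a short script. This is only a sketch of the calculation described above: the performance values (7 and 3.5) are my readings from NVIDIA's graph rather than measured numbers, and the function name is made up for this example.

```python
def watts_per_perf_unit(power_w: float, relative_perf: float) -> float:
    """Power needed for each unit of relative performance."""
    return power_w / relative_perf


# Values read off NVIDIA's graph (approximate), with each card's TDP as the power figure.
gtx_1080 = watts_per_perf_unit(power_w=180, relative_perf=7.0)
titan_x = watts_per_perf_unit(power_w=250, relative_perf=3.5)

efficiency_ratio = titan_x / gtx_1080

print(f"GTX 1080:    {gtx_1080:.2f} W per unit of performance")
print(f"GTX Titan X: {titan_x:.2f} W per unit of performance")
print(f"Efficiency advantage of the GTX 1080: {efficiency_ratio:.2f}x")
```

Note that the roughly 3x figure depends entirely on the 2x performance reading: if the performance gap were smaller, the efficiency ratio would shrink with it.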

That explains the performance figures NVIDIA presented. Keep in mind that this is VR performance, where the GPU has to render at very high resolution, which works differently from playing games on a Full HD, 2K, or 4K monitor. With that in mind, let's move on to Gaming Performance, for which NVIDIA published a graph on the GeForce website some time after the press event ended. We have already published an overview of that as well.

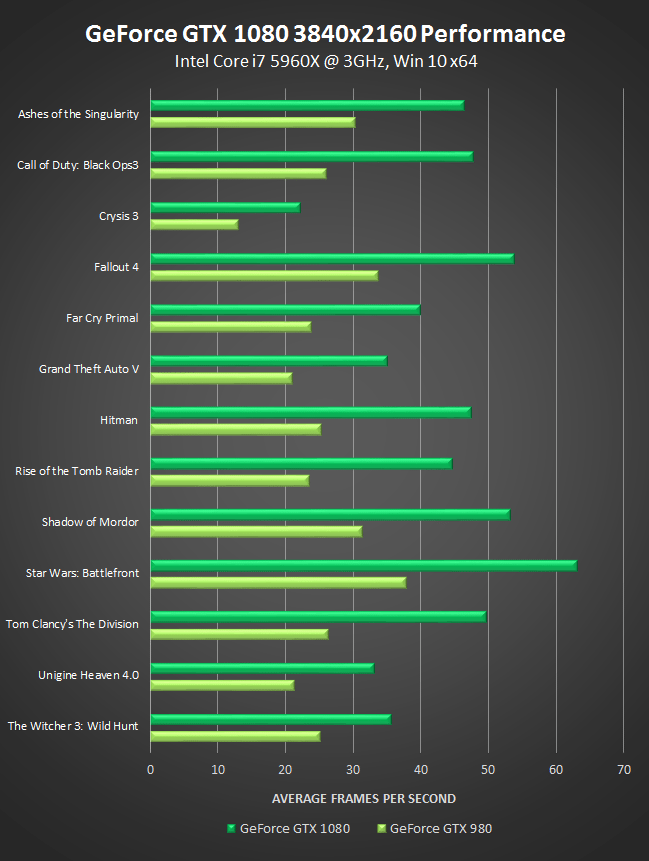

Gaming Performance

Here NVIDIA gave only rough information: the GTX 1080 can deliver up to 3x the gaming performance of current-generation GPUs, adds new technology for upcoming games, and offers a never-before-seen VR experience. The performance graph, however, shows the difference relative to the GTX 980 (whereas the VR comparison above was against the Titan X), with the results shown in the image below.

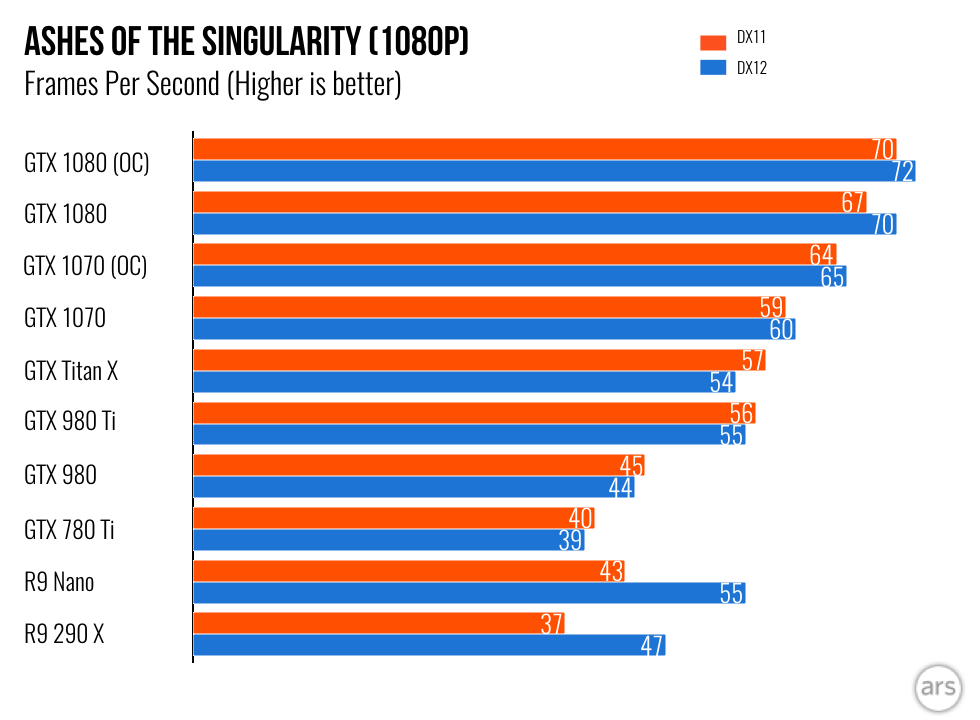

In the actual results, only the VR test shows the roughly 3x difference that NVIDIA claimed. In Rise of the Tomb Raider running on the DirectX 12 API, the GTX 1080 is only about 1.7x faster than the GTX 980, and in The Witcher 3: Wild Hunt it is about 1.6x faster. Ratios like these can be hard to picture, but converting NVIDIA's ratios into estimated FPS should make things clearer. As a reference I will use Guru3D's test results for the GTX 980 at 2K resolution. The FPS figures are as follows:

The Witcher 3: Wild Hunt

- GTX 980 Ref – 2560x1440p = 48FPS

- GTX 980 Ti Ref – 2560x1440p = 61FPS

- GTX 980 Ti HOF – 2560x1440p = 67FPS

So, if we take NVIDIA's claim that the GTX 1080 is about 1.6x faster than the GTX 980 in The Witcher 3: Wild Hunt, or 60% more, the estimated frame rate for the GTX 1080 is 76.8FPS (48×1.6). Comparing that estimate against the reference GTX 980 Ti and the overclocked version listed above gives:

- GTX 1080 (76.8fps) would be about 25% faster than the GTX 980 Ti (61fps), or 1.25x

- GTX 1080 (76.8fps) would be about 15% faster than the GTX 980 Ti HOF (67fps), or 1.15x

Looking at raw frame rates, the GTX 1080 would deliver about 15.8FPS more than the GTX 980 Ti and about 9.8FPS more than the GTX 980 Ti HOF. Please understand, though, that this is only derived from the scale NVIDIA provided. We do not know what resolution or what system specs NVIDIA used in its own testing.
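For anyone who wants to rerun the estimate with different inputs, here is a minimal sketch of the calculation. It assumes NVIDIA's 1.6x ratio applies directly to Guru3D's 2560x1440 result for the reference GTX 980, which is exactly the unverified assumption this analysis rests on; the variable names are made up for this example.

```python
# Guru3D reference results for The Witcher 3: Wild Hunt at 2560x1440 (FPS).
GTX_980_REF_FPS = 48
COMPARISON_CARDS = {
    "GTX 980 Ti Ref": 61,
    "GTX 980 Ti HOF": 67,
}

# NVIDIA's claimed speed-up of the GTX 1080 over the GTX 980 in this game.
CLAIMED_SPEEDUP = 1.6

estimated_1080_fps = GTX_980_REF_FPS * CLAIMED_SPEEDUP
print(f"Estimated GTX 1080: {estimated_1080_fps:.1f} FPS")

for card, fps in COMPARISON_CARDS.items():
    ratio = estimated_1080_fps / fps
    lead_fps = estimated_1080_fps - fps
    print(f"vs {card}: {ratio:.2f}x, {lead_fps:+.1f} FPS")
```

Computed exactly, the lead over the reference GTX 980 Ti comes out slightly above the rounded 25% quoted in the list, at about 1.26x, while the lead over the HOF card is about 1.15x.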

3DMark Fire Strike Extreme Performance

Next, let's analyze the leaked 3DMark results for the GTX 1080 from a few days ago. In 3DMark Fire Strike Extreme the card achieved an overall score of 8959 and a graphics score of 10102.

For comparison, take the GTX 980 Ti HOF as tested by Guru3D: its overall score is 8881 and its Graphics Score is 9191 (see the image below).

Comparing the GTX 1080 with the GTX 980 Ti HOF, the GTX 1080 is only very slightly ahead on overall score, by about 1%. On graphics score the difference is clearer, with the GTX 1080 about 10% ahead, which is close to the estimate from in-game frame rates above, where the GTX 1080 led the GTX 980 Ti HOF by about 15%.
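The percentage leads quoted here come from a simple relative difference between the two sets of scores. A small sketch, using the leaked GTX 1080 scores and Guru3D's GTX 980 Ti HOF scores from the text (the helper name is made up for this example):

```python
def lead_percent(score: float, baseline: float) -> float:
    """How far `score` is ahead of `baseline`, as a percentage of the baseline."""
    return (score - baseline) / baseline * 100


# 3DMark Fire Strike Extreme: (GTX 1080 leaked result, GTX 980 Ti HOF from Guru3D).
SCORES = {
    "overall": (8959, 8881),
    "graphics": (10102, 9191),
}

for label, (gtx_1080, gtx_980_ti_hof) in SCORES.items():
    print(f"{label} score lead: {lead_percent(gtx_1080, gtx_980_ti_hof):.1f}%")
```

The overall score also reflects the CPU used in each test system, so the graphics score is the fairer comparison between the two cards.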

Conclusion

In conclusion: I cannot say whether this analysis is correct, because everything rests on the information we could find at this point. Things may really turn out this way, or they may not. Please use your own judgment as you read and think it through.

From what I have tried to lay out, NVIDIA's claim that the card is 2x faster and uses less power, or is 3x more efficient than the Titan X, cannot be settled yet, because it is only an unsupported claim from NVIDIA's presentation, with no test results clearly shown. What we do know is that the GTX 1080 will draw up to around 180W, which really is less than the Titan X. Nothing confirms the 2x performance claim. But if it truly is 2x faster as claimed, the power efficiency ratio follows. That does not mean it directly uses 3x less power. Rather, at equal performance it uses less power, or at equal power the GTX 1080 would be even faster than shown.

As for gaming performance, NVIDIA's graph compares against the reference GTX 980, which as we know runs at fairly low clock speeds. Still, this is a fair and valid comparison on NVIDIA's part, because it measures against its own standard card. NVIDIA claims the GTX 1080 is about 1.6x faster in games than the GTX 980, or about 60% faster. If that ratio holds, then against the GTX 980 Ti, in both reference and overclocked versions, the gap shrinks to only 15-25%, which is the kind of gap we have often seen when new cards launch. Looking at the leaked 3DMark result, the gap is about 10%, close to our estimate from games. So it is quite likely that the GTX 1080 will be about 10-25% faster than the GTX 980 Ti, depending on the game and the test. What I expect to really stand out on the GTX 1080 is power consumption, because the card needs only a single PCIe 8-pin connector for external power.

To wrap up, I think you can now see that if we look closely at what NVIDIA presented, or break it down carefully, the card is not as outrageously fast as we thought (if our analysis holds). Many people wondered why NVIDIA compared the GTX 1080 against the GTX 980 rather than the GTX 980 Ti. Seeing this, you can probably understand: compared against the GTX 980 Ti the performance gain is not that large, so it would not be as persuasive or get as much attention. Once again, though, what is presented here is only an analysis, and I cannot confirm how accurate it is. For the most accurate and clearest answer, we have to wait until the card officially launches and is widely tested. Then we will know for certain how fast the GTX 1080 really is. It won't be long now: in about a month we will have the answer everyone is waiting for.

PlayStation 5 vs Xbox Series X vs Nintendo Switch

(Pocket-lint) — We love a good old-fashioned console war, which is great because it seems as though we’re permanently in the middle of one, with Xbox, PlayStation and Nintendo all constantly locking horns in the market.

The Nintendo Switch has been out for a long while now, although its OLED model is newer, and the PlayStation 5 and Xbox Series X have also now been heading up their respective console lineups for a couple of years, so if you’re in the market for some new hardware you might be wondering which one’s best for you. Here are all the details and comparisons you need to know about.

Performance

How does each console stack up against the others in terms of performance?

Nintendo Switch

There’s an elephant in the room when you compare these three consoles that we’ll address head-on — the Nintendo Switch is far less powerful than the PS5 or Xbox Series X.

With a maximum resolution of 720p while handheld and 1080p while docked, something that many big games don’t even manage on Switch, you won’t be gaming in crisp 4K on Nintendo’s hardware.

However, it also has the major upside of being portable, which neither of the other two can manage, especially if you don’t want to try remote play on your phone with either.

The Switch OLED version has a 7-inch screen to play on when handheld, though, and games do look absolutely gorgeous on it, and while its modified Nvidia Tegra X1 platform might be showing its age, Nintendo’s first-party games keep looking great on it nonetheless.

Plenty of its top games run at 60FPS thanks to Nintendo’s rigorous quality control, but even if they’re at 30FPS you can generally rely on solid performance from Nintendo’s own games. Ports of games from other consoles tend to be more variable.

PlayStation 5

Sony’s PS5 translates its huge size into great performance, with 4K gaming now pretty regularly available.

That said, you will find that most big PS5 games have graphical options to let you get your chosen frame rate since 4K gaming tends to render 60FPS difficult for graphically-intensive titles.

This means you might find yourself gaming in 1440p or even 1080p a lot of the time, but with variable refresh rate now added and native 1440p rendering available, this can actually be really great on a good display.

The PS5 is capable of incredibly solid performance, then, and it also stays whisper-quiet without getting too warm in operation, both of which we appreciate.

Xbox Series X

Things are similar for the Xbox Series X, just with a little added beef: this is the most powerful console available in raw terms, although in practice cross-platform titles tend to perform very similarly to how they do on the PS5.

That means you can again expect rock-solid performance in challenging games, and 4K resolution is often there as an option if you want it.

Like the PS5, we more commonly prioritise a higher frame rate over this raw resolution, but right now the Series X is still feeling like a console with power to spare.

Games

A huge part of any console’s prowess comes down to the exclusive games it boasts over its rivals.

Nintendo Switch

Nintendo continues to have a totally enviable roster of first-party games that can’t be played anywhere but the Switch, making it an amazing choice for anyone looking for a family console in particular.

The likes of The Legend of Zelda: Breath of the Wild and Super Mario Odyssey are instant classics, some of the best games ever released, but there are constant additions to augment this further.

The Fire Emblem series, multiple Pokemon titles, Mario Kart 8 Deluxe, the titan that is Super Smash Bros. Ultimate — the list can go on, and on.

The Switch earns the very highest marks for its exclusive titles, not least because there are just so many that you can’t play anywhere else, including on PC.

Check out our list of the very best Switch games here for more detail.

PlayStation 5

The PS5 had a strong launch and its exclusive lineup continues to thrill, with games like Demon’s Souls and Returnal really feeling like they showcase what makes the console unique thanks to new haptic controller feedback and unbelievable visuals.

A lot of its best games can be played on the PS4 as well, in fairness, but they’re always at their best on the newer hardware, as showcased by Horizon: Forbidden West, which is a new beast on the PS5.

If you open the field up to include multi-platform games, there are hordes of magical titles to enjoy, from Elden Ring to Dying Light 2, all of them looking as good as they can on the new hardware.

The PS5 might not have as many exclusives as the Switch, but it’s also a few years behind, so we’ll give it time.

We’ve got a list of the best PS5 games you can peruse here, too.

Xbox Series X

Xbox is probably in third place on exclusives thanks to a slightly sluggish release schedule since the Xbox Series X and S debuted, but it’s still had some great moments.

Halo Infinite has a blockbuster campaign to enjoy, while Forza Horizon 5 is a stunning open-world driving game, to name two.

The real feather in this console’s cap comes in the form of Xbox Game Pass, which lets you sample a wide range of interesting and sometimes unknown games at a low monthly cost, making it way more potentially affordable to game regularly.

It’s a genius system that means you can explore the Xbox ecosystem with significantly more ease, and makes the Series X feel like a potentially smart investment.

Browse our list of the best Xbox Series X titles for more information.

Media apps and streaming

This is an area where Nintendo takes a bit of a beating — its Switch consoles do have YouTube, that much we can say. Basically every other major streaming platform is missing, though, so they’re really not media hubs in any meaningful sense.

By contrast, the PS5 and Xbox Series X both have access to a wealth of slick and generally responsive apps from the likes of Netflix, Prime Video, Disney+ and the BBC, getting you access to both live and on-demand services at will. These services are largely available in 4K and with HDR, making for a great experience in general.

Provided you don’t pick up a Digital Edition of the PS5, both consoles also have a disc drive that can play 4K Blu-Rays, so if you’ve got a collection of DVDs or Blu-Rays you’ll be able to watch both on your console, saving you another bit of hardware.

Price

Another major variable when you think about buying a console is obviously how expensive (and readily available) it is.

The Switch exists in three versions but to take its top-range option as the main choice, the Switch OLED can be had for £309.99 or $349.99.

By comparison, the Xbox Series X retails at £449.99 or $499.99, while the PlayStation 5 comes in at £479.99 or $499.99.

That makes calling which one is best value a little complex, but the Switch OLED is clearly more affordable (and the standard Switch or Switch Lite even more so), which should be remembered.

However, the price difference between the PS5 and Xbox Series X is either pretty slim or nonexistent depending on where you are. The bigger difference is that while the Series X is now fairly easy to find, it can still be a challenge finding the PS5 in stock, something that could hold you up a bit.

Conclusion

The judgement between these three consoles is actually very similar to the one you would have made five years ago, swapping in the PlayStation 4 and Xbox One instead of their successors, meaning it’s still a hard call.

If you want family-friendly games and a huge roster of amazing first-party titles you can’t play anywhere else, we think the Switch OLED is a superb choice that can’t be topped, with couch co-op also a strength. However, if you’re keen to also watch movies or want modern and impressive graphics, this isn’t the one for you.

If that’s your priority, and you don’t mind spending some more money, we lean decidedly toward the PlayStation 5 for its stronger lineup of exclusive games, given that most multi-platform titles perform very similarly on both its hardware and the Xbox Series X. The latter has a strong argument in the form of Xbox Game Pass, but we still think Sony’s games trump it, with more to come.

The good news is that there’s no clear wrong answer here — all three consoles are in a really good spot, so you’re likely to end up happy whichever you pick.

Writing by Max Freeman-Mills.

Which one is right for you?

The Dell Latitude lineup includes some of the best and most popular business laptops out there, and there’s something for everyone here. Even just within Dell’s offerings, there are very different laptops to choose from, so it’s important to find the right one for you. We’re here to help with that, and in this article, we’ll be comparing the Dell Latitude 7430 to the Latitude 5430 to help you pick one of the two.

Right off the bat, these are aimed at slightly different crowds. The Dell Latitude 7430, like the rest of the 7000 series, is more of a premium product (though not quite as premium as the 9000 series), while the Latitude 5430 is more geared towards the mainstream. That means there are some differences in design that may sway you one way or the other. Let’s take a closer look.

Navigate this article:

- Specs

- Performance

- Display

- Design

- Ports and connectivity

- Final thoughts

Dell Latitude 7430 vs Dell Latitude 5430: Specs

| | Dell Latitude 7430 | Dell Latitude 5430 |
|---|---|---|
| Operating system | | |
| CPU | | |
| Graphics | | |
| Display | | |
| Storage | | |
| RAM | | |
| Battery | | |
| Ports | | |
| Audio | | |
| Camera | | |
| Windows Hello | | |
| Connectivity | | |
| Color | | |
| Size (WxDxH) | | |
| Weight | | |
| Price | Starting at $1,969 (MSRP) | Starting at $1,419 (MSRP) |

One thing to note about these configurations is that some are only available through the normal purchasing pages on Dell’s website, while others are only available by going to the advanced configurations page. You may not see them all right away.

Performance: The Dell Latitude 7430 has optional P-series processors

Looking at the spec sheet above, you can see that these two laptops share many of the same CPU configurations. However, the Dell Latitude 7430 does have a few options that the Latitude 5430 lacks. Specifically, you can get the Latitude 7430 with Intel’s P-series processors, whose higher TDP and additional cores and threads can result in faster performance. As an example, here’s how an Intel Core i7-1270P compares to a Core i7-1265U in the Geekbench 5 benchmark:

| | Dell Latitude 7430 Intel Core i7-1270P | Dell Latitude 5430 Intel Core i7-1265U |
|---|---|---|
| Geekbench 5 (single-core/multi-core) | 1,726 / 9,163 | 1,778 / 7,462 |

Benchmarks aren’t the end-all-be-all of performance, though, and the actual performance may vary a little because you also have to consider things like thermals. One trend we’ve seen a lot in 2022 is that many laptops that used to ship with U-series processors (with a 15W TDP) now have P-series processors, without big changes to the design to accommodate the need for better cooling, so performance can throttle under heavy loads.
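To put the Geekbench 5 scores quoted above in perspective, the relative gap between the two chips can be worked out directly. This is only a sketch of the arithmetic: the scores are the ones in the table, and the helper name `percent_difference` is made up for illustration.

```python
# Geekbench 5 scores from the table above: (single-core, multi-core).
scores = {
    "Core i7-1270P (Latitude 7430)": (1726, 9163),
    "Core i7-1265U (Latitude 5430)": (1778, 7462),
}


def percent_difference(a: float, b: float) -> float:
    """Return how much larger a is than b, as a percentage of b."""
    return (a - b) / b * 100


p_single, p_multi = scores["Core i7-1270P (Latitude 7430)"]
u_single, u_multi = scores["Core i7-1265U (Latitude 5430)"]

# Positive values mean the P-series chip is ahead.
single_gap = percent_difference(p_single, u_single)
multi_gap = percent_difference(p_multi, u_multi)

print(f"Single-core: {single_gap:+.1f}%")
print(f"Multi-core:  {multi_gap:+.1f}%")
```

On these numbers the P-series chip is roughly 23% ahead in multi-core work and about 3% behind in single-core, so the benefit depends heavily on whether your workloads use many threads.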

Another thing to consider is battery life. P-series processors use a lot more power than U-series models, and that means they chew through the battery more quickly. Both the Dell Latitude 7430 and 5430 have up to 58Whr batteries, but if you choose a P-series processor in the former, it won’t last as long as it would with a U-series one. Thankfully, you can choose, so this isn’t necessarily an advantage for the Latitude 5430 – just for U-series processors in general.

The Dell Latitude 5430 uses slotted RAM, meaning you can upgrade it.

What is a clear advantage for the Dell Latitude 5430 is the upgradeability of the RAM. The Latitude 7430 comes with soldered RAM, so whatever configuration you choose out of the box, that’s what you’re stuck with. You can choose 8GB, 16GB, or 32GB if you go with a U-series processor, but the P-series models are limited to 16GB, so you lose some freedom there. Meanwhile, the Latitude 5430 can be configured with up to 64GB of RAM, and that RAM uses SODIMM slots. That means you can save some money by buying a cheaper configuration now, then upgrade the RAM later if you want more. Both laptops come with DDR4 RAM, though the P-series models of the Latitude 7430 actually use LPDDR5, which is a potential advantage.

As for storage, both laptops have M.2 SSD storage, and the configurations are similar. The big difference is that you can configure the Latitude 5430 with up to 2TB, while the 7430 caps out at 1TB.

Display: Both are relatively basic

Moving on to the display, the two laptops definitely have some noteworthy differences, but neither of them is all too special. The Dell Latitude 7430 definitely has an advantage, starting with a Full HD (1920 x 1080) display in the base configuration, which should be more than good enough for general office use. If you have money to spend, Dell also offers an Ultra HD (3840 x 2160) option that’s incredibly sharp for this size, and it should look great.

Dell Latitude 7430

The Dell Latitude 5430 is much less impressive in this regard. For starters, the base configuration comes in a measly 1366 x 768 resolution, which is really not ideal for an expensive laptop in 2022. You can upgrade to Full HD and get a similar experience to the Dell Latitude 7430, but that’s as far as you can go. There’s no Ultra HD or even Quad HD option, so if you want an extra sharp screen, the Dell Latitude 7430 is for you.

Both laptops offer touchscreens as an optional upgrade, but one thing to note is that the Dell Latitude 7430 is also available as a 2-in-1 laptop, so it not only supports touch, you can actually use it as a tablet.

Dell Latitude 5430

Dell has been one of the slower companies when it comes to adopting better webcams, so in both of these laptops, we’re looking at a base configuration with a 720p camera. Thankfully, you can upgrade to a 1080p camera and get much better image quality. Most Full HD webcam configurations also include an IR camera for Windows Hello facial recognition, which makes it that much more convenient to unlock your PC. On that note, both laptops also have optional fingerprint readers, if you prefer that.

As for sound, each of the laptops has two speakers powered by Waves MaxxAudio, and dual-array microphones for calls and meetings. These should provide a solid enough experience, but the speakers aren’t going to be the most immersive out there.

Being the more premium laptop means the Dell Latitude 7430 has more of an emphasis on design, and that entails a few things. For one thing, it’s more compact, measuring 17.27mm in thickness and weighing 2.69lbs for the clamshell model. Even the 2-in-1 variant weighs 2.97lbs, which still makes it slightly lighter than the Latitude 5430.

Indeed, that model starts at 3.01lbs, and it’s also noticeably thicker, at 19.3mm. That’s an expected trade-off with a cheaper laptop, though, and in this case, you get something in return for that. The extra space in the chassis means the RAM is upgradeable, as we’ve mentioned, plus you get some extra ports.

The Latitude 7430 comes in aluminum or carbon fiber variants.

As for looks – which are admittedly a bit more subjective – we’d still give extra points to the Dell Latitude 7430 for giving you more options. You can choose the aluminum model, which comes in Titan Grey (which is to say silver), or the carbon fiber model that comes in a nearly black colorway. Meanwhile, the Latitude 5430 is only available in aluminum, and it comes in a dark silver shade.

Ports and connectivity: Business laptops are very versatile

Finally, let’s talk about ports, which is something business laptops typically excel at. Both the Dell Latitude 7430 and 5430 have an excellent supply of ports, but being the larger laptop, the Latitude 5430 actually has an advantage here. The Dell Latitude 7430 comes with two Thunderbolt 4 (USB-C) ports, one USB Type-A port, HDMI 2.0, a headphone jack, and a microSD card reader. You can also get an optional Smart Card reader built-in.

The Dell Latitude 5430 has a nearly identical setup, but with two extra ports – another USB Type-A port, and RJ45 Ethernet. If you rely on a wired internet connection, this one is a pretty big deal since you won’t need any adapters to get more reliable internet.

Beyond the physical ports, there’s also wireless connectivity to talk about, and here the Dell Latitude 7430 pulls ahead. Both laptops support Wi-Fi 6E and Bluetooth 5.2, as you’d expect, but the Latitude 7430 has better cellular options. You can choose between 4G LTE or 5G support if you want to be a bit more future-proof, both powered by Qualcomm modems. Meanwhile, the Latitude 5430 only offers 4G LTE, which is powered by an Intel XMM 7360 modem. 5G support isn’t a huge advantage in terms of speed, though, so this isn’t a necessary upgrade at all.

Dell Latitude 7430 vs Latitude 5430: Final thoughts

As with anything, choosing between these two comes down to your personal priorities, but there are clear advantages and disadvantages to each of them. Right off the bat, if you value the 2-in-1 form factor, the Dell Latitude 7430 is your only option. That means you can use it as a laptop or a tablet or in a few different modes for media consumption and browsing the web, so you definitely get a bit more versatility.

The Dell Latitude 7430 also has configurations with faster performance thanks to the P-series processors, an option for a 4K display, and optional 5G support if you want to be on the bleeding edge. It’s also a more compact laptop and it comes in two colors to choose from, which is great if you want to choose a look that’s more your style.

On the other hand, you might appreciate the Dell Latitude 5430 because it offers upgradeable RAM, which means you can save money now and upgrade later as your budget allows. You can also configure it with more storage out of the box. This one also has more ports, including Ethernet, so it might be a bit more convenient.

One final thing to consider is the price, especially because the Dell Latitude 5430 starts at a considerably lower price than the 7430. These days, you can find both laptops with some discounts, but the Latitude 5430 starts at around $1,400 at writing time, while the Latitude 7430 starts at around $1,679. You get a bit more room for upgrades with the cheaper model.

Regardless of your choice, you can buy either laptop using the links below. Otherwise, consider checking out the best Dell laptops to see some other options that aren’t as business-oriented. We also have a list of the best laptops overall if you want to look beyond Dell.

Dell Latitude 7430

-

The Dell Latitude 7430 is available with 12th-generation Intel Core processors and other top-notch specs for business users.

- See at Dell

Dell Latitude 5430

-

The Dell Latitude 5430 is a highly configurable business laptop with 12th-gen Intel processors and a premium design.

- See at Dell

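Why is Nvidia GeForce GTX 1080 better than Nvidia GeForce GTX Titan X?

- 607MHz faster GPU clock speed? 1607MHz vs 1000MHz
- 2.08 TFLOPS higher floating-point performance? 8.23 TFLOPS vs 6.14 TFLOPS
- 32.6 GPixel/s higher pixel rate? 128.6 GPixel/s vs 96 GPixel/s
- 70W lower TDP? 180W vs 250W
- 747MHz faster memory clock speed? 2500MHz vs 1753MHz
- 2988MHz higher effective memory clock speed? 10000MHz vs 7012MHz
- 65.1 GTexels/s higher texture rate? 257.1 GTexels/s vs 192 GTexels/s
- 644MHz faster GPU turbo speed? 1733MHz vs 1089MHz

Why is Nvidia GeForce GTX Titan X better than Nvidia GeForce GTX 1080?

- 1.5x more VRAM? 12GB vs 8GB
- 17GB/s more memory bandwidth? 337GB/s vs 320GB/s
- 128bit wider memory bus? 384bit vs 256bit
- 512 more stream processors? 3072 vs 2560
- 800million more transistors? 8000 million vs 7200 million
- 32 more texture units (TMUs)? 192 vs 160
- 16 more ROPs? 96 vs 80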

What are the most popular comparisons?

Nvidia GeForce GTX 1080

vs

Nvidia GeForce RTX 3060

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce RTX 3090

Nvidia GeForce GTX 1080

vs

Nvidia Geforce GTX 1660 Super

Nvidia GeForce GTX Titan X

vs

Nvidia Titan V

Nvidia GeForce GTX 1080

vs

Nvidia GeForce RTX 2060

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce GTX 1070

Nvidia GeForce GTX 1080

vs

Nvidia GeForce RTX 3080

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce RTX 3050 Laptop

Nvidia GeForce GTX 1080

vs

Nvidia GeForce RTX 3050 Ti Laptop

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce GTX 1650

Nvidia GeForce GTX 1080

vs

Nvidia GeForce GTX 1650 Super

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce RTX 2080 Ti Founders Edition

Nvidia GeForce GTX 1080

vs

Nvidia GeForce RTX 3070 Ti

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce RTX 3060 Laptop

Nvidia GeForce GTX 1080

vs

Nvidia GeForce GTX 1650

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce GTX Titan Z

Nvidia GeForce GTX 1080

vs

AMD Radeon RX 580

Nvidia GeForce GTX Titan X

vs

Nvidia GeForce GTX Titan Black

Nvidia GeForce GTX 1080

vs

Nvidia GeForce GTX 1070

User Reviews

Overall Rating

Nvidia GeForce GTX 1080: 10.0 /10 (1 user review)

Nvidia GeForce GTX Titan X: no reviews yet

Category ratings (Nvidia GeForce GTX 1080, 1 vote each; no votes yet for the Nvidia GeForce GTX Titan X)

- Performance: 10.0 /10
- Fan noise: 6.0 /10
- Reliability: 7.0 /10

Performance

1. GPU clock speed

1607MHz

1000MHz

The graphics processing unit (GPU) has a higher clock speed.

2. GPU turbo

1733MHz

1089MHz

When the GPU is running below its limits, it can jump to a higher clock speed to increase performance.

3.pixel rate

128.6 GPixel/s

96 GPixel/s

The number of pixels that can be displayed on the screen every second.

4. floating-point performance

8.23 TFLOPS

6.14 TFLOPS

FLOPS is a measurement of GPU processing power.

5. texture rate

257.1 GTexels/s

192 GTexels/s

Number of textured pixels that can be displayed on the screen every second.

6.GPU memory speed

2500MHz

1753MHz

Memory speed is one aspect that determines memory bandwidth.

7. shading units

Shading units (or stream processors) are small processors in a graphics card that are responsible for processing various aspects of an image.

8. texture mapping units (TMUs)

TMUs take textures and map them to the geometry of a 3D scene. More TMUs generally means texture information is processed faster.

9. render output units (ROPs)

ROPs are responsible for some of the final steps of the rendering process, such as writing the final pixel data to memory and performing other tasks such as anti-aliasing to improve the appearance of graphics.
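The headline throughput figures on this page can be reproduced from the unit counts and the base GPU clock. The sketch below assumes the usual theoretical-peak formulas (two floating-point operations per shading unit per cycle, one pixel per ROP per cycle, one texel per TMU per cycle); the page itself does not state how its numbers were derived, and the `Gpu` class is a made-up helper for illustration.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str
    clock_mhz: float      # base GPU clock as listed on this page
    shading_units: int
    tmus: int
    rops: int

    def tflops(self) -> float:
        # Assumption: 2 floating-point ops (one fused multiply-add)
        # per shading unit per clock cycle.
        return self.shading_units * 2 * self.clock_mhz / 1e6

    def pixel_rate(self) -> float:
        # Assumption: one pixel per ROP per cycle, in GPixel/s.
        return self.rops * self.clock_mhz / 1e3

    def texture_rate(self) -> float:
        # Assumption: one texel per TMU per cycle, in GTexels/s.
        return self.tmus * self.clock_mhz / 1e3


# Unit counts and clocks are the ones listed on this page.
gtx_1080 = Gpu("GTX 1080", 1607, shading_units=2560, tmus=160, rops=80)
titan_x = Gpu("GTX Titan X", 1000, shading_units=3072, tmus=192, rops=96)

for gpu in (gtx_1080, titan_x):
    print(
        f"{gpu.name}: {gpu.tflops():.2f} TFLOPS, "
        f"{gpu.pixel_rate():.1f} GPixel/s, "
        f"{gpu.texture_rate():.1f} GTexels/s"
    )
```

Under these assumptions the results line up with the listed 8.23 vs 6.14 TFLOPS, 128.6 vs 96 GPixel/s and 257.1 vs 192 GTexels/s, which shows how the GTX 1080's higher clock more than offsets its lower unit counts.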

Memory

1. effective memory clock speed

10000MHz

7012MHz

The effective memory clock frequency is calculated from the size and data transfer rate of the memory. A higher clock speed can give better performance in games and other applications.
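As a quick check on how the effective figure relates to the plain memory clock, the snippet below divides one by the other for both cards. The factor it finds is inferred purely from the numbers listed on this page, not from any memory specification.

```python
# (memory clock MHz, effective memory clock MHz) as listed on this page.
listed = {
    "GTX 1080": (2500, 10000),
    "GTX Titan X": (1753, 7012),
}

# Ratio of effective clock to memory clock for each card.
multipliers = {
    name: effective / clock for name, (clock, effective) in listed.items()
}

for name, multiplier in multipliers.items():
    print(f"{name}: effective clock = {multiplier:g} x memory clock")
```

For both cards the listed effective clock works out to four times the listed memory clock.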

2.max memory bandwidth

320GB/s

337GB/s

This is the maximum rate at which data can be read from or stored in memory.

3.VRAM

VRAM (video RAM) is the dedicated memory of the graphics card. More VRAM usually allows you to run games at higher settings, especially for things like texture resolution.

4.memory bus width

256bit

384bit

Wider memory bus means it can carry more data per cycle. This is an important factor in memory performance, and therefore the overall performance of the graphics card.
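Bus width and effective memory clock combine into the bandwidth figure listed earlier. A minimal sketch, assuming the standard peak-bandwidth formula (effective clock times bus width, divided by 8 bits per byte); the function name is hypothetical.

```python
def bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    Each cycle of the effective clock moves bus_width_bits bits;
    dividing by 8 converts bits to bytes.
    """
    bytes_per_second = effective_clock_mhz * 1e6 * bus_width_bits / 8
    return bytes_per_second / 1e9


# Effective clocks and bus widths as listed on this page.
gtx_1080_bw = bandwidth_gb_s(10000, 256)
titan_x_bw = bandwidth_gb_s(7012, 384)

print(f"GTX 1080:    {gtx_1080_bw:.0f} GB/s")
print(f"GTX Titan X: {titan_x_bw:.0f} GB/s")
```

This reproduces the listed 320GB/s and 337GB/s: the Titan X's wider bus outweighs the GTX 1080's faster memory, but only narrowly.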

5. GDDR memory versions

Later versions of GDDR memory offer improvements such as higher data transfer rates, which improve performance.

6. Supports ECC memory

✖Nvidia GeForce GTX 1080

✖Nvidia GeForce GTX Titan X

Error-correcting code (ECC) memory can detect and correct data corruption. It is used where corruption must be avoided, such as in scientific computing or when running a server.

Functions

1.DirectX version

DirectX is used in games, with newer versions supporting better graphics.

2. OpenGL version

The newer the OpenGL version, the better the graphics quality in games.

3. OpenCL version

Some applications use OpenCL to harness the power of the graphics processing unit (GPU) for non-graphical computing. Newer versions introduce more functionality and better performance.

4. Supports multi-monitor technology

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

The video card has the ability to connect multiple screens. This allows you to set up multiple monitors at the same time to create a more immersive gaming experience, such as a wider field of view.

5. GPU temperature at load

Unknown. Help us by suggesting a value. (Nvidia GeForce GTX Titan X)

A lower load temperature means the card generates less heat and its cooling system works better.

6.supports ray tracing

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Ray tracing is an advanced light rendering technique that provides more realistic lighting, shadows and reflections in games.

7. Supports 3D

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Allows you to view in 3D (if you have a 3D screen and glasses).

8.supports DLSS

✖Nvidia GeForce GTX 1080

✖Nvidia GeForce GTX Titan X

DLSS (Deep Learning Super Sampling) is an AI based scaling technology. This allows the graphics card to render games at lower resolutions and upscale them to higher resolutions with near-native visual quality and improved performance. DLSS is only available in some games.

9. PassMark result (G3D)

Unknown. Help us by suggesting a value. (Nvidia GeForce GTX 1080)

Unknown. Help us by suggesting a value. (Nvidia GeForce GTX Titan X)

This test measures the graphics performance of a graphics card. Source: PassMark.

Ports

1.has HDMI output

✔Nvidia GeForce GTX 1080

✔Nvidia GeForce GTX Titan X

Devices with HDMI or mini HDMI ports can stream HD video and audio to an attached display.

2.HDMI connectors

More HDMI connectors allow you to connect multiple devices at the same time, such as game consoles and TVs.

3. HDMI version

HDMI 2.0

HDMI 2.0

New HDMI versions support higher bandwidth, resulting in higher resolutions and frame rates.

4. DisplayPort outputs

Allows connection to a display using DisplayPort.

5.DVI outputs

Allows connection to a display using DVI.

6. mini DisplayPort outputs

Allows connection to a display using Mini DisplayPort.

Which graphics cards are better?

Which is better: the NVIDIA GeForce GTX 1080 Ti or the NVIDIA Titan X?

NVIDIA GeForce GTX 1080 Ti

NVIDIA Titan X

GPU base clock speed

1481MHz

max 2457

Average: 938 MHz

1417MHz

max 2457

Average: 938 MHz

GPU memory frequency

This is an important factor in calculating memory bandwidth.

1376MHz

max 16000

Average: 1326.6 MHz

1251MHz

max 16000

Average: 1326.6 MHz

FLOPS

FLOPS is a measure of a processor’s computing power.

11.3TFLOPS

max 1142.32

Average: 92.5 TFLOPS

9.72TFLOPS

max 1142.32

Average: 92.5 TFLOPS

Turbo GPU

When the GPU is running below its limits, it can switch to a higher clock speed to improve performance.

1582MHz

max 2903

Average: 1375.8 MHz

1531MHz

max 2903

Average: 1375.8 MHz

Texture rate

The number of textured pixels that can be rendered to the screen every second.

332 GTexels/s

max 756.8

Average: 145.4 GTexels/s

317 GTexels/s

max 756.8

Average: 145.4 GTexels/s

Architecture name

Pascal

No data

Graphic processor name

GP102

Memory bandwidth

This is the rate at which a device stores or reads information.

484.4GB/s

max 2656

Average: 198.3 GB/s

480GB/s

max 2656

Average: 198.3 GB/s

Effective memory speed

The effective memory clock speed is calculated from the size and information transfer rate of the memory. The performance of the device in applications depends on the clock frequency. The higher it is, the better.

11008MHz

max 19500

Average: 6984.5 MHz

10008 MHz

max 19500

Average: 6984.5 MHz

RAM

11GB

max 128

Average: 4.6 GB

12GB

max 128

Average: 4.6 GB

GDDR Memory Versions

The latest GDDR memory versions provide higher data transfer rates, which improves overall performance.

5

Average: 4.5

5

Average: 4.5

Memory bus width

A wide memory bus means that it can transfer more information in one cycle. This property affects the performance of the memory as well as the overall performance of the device’s graphics card.

352bit

max 8192

Average: 290.1bit

384bit

max 8192

Average: 290.1bit

Release date

2017-02-28 00:00:00

n/a

Heat dissipation (TDP)

The lower the TDP, the less power will be consumed.

250W

Average: 140.4 W

250W

Average: 140.4 W

Process technology

A smaller semiconductor process size indicates a newer-generation chip.

16 nm

Average: 47.5 nm

16 nm

Average: 47.5 nm

Number of transistors

The higher their number, the more processor power it indicates

11800 million

max 80000

Average: 5043 million

12000 million

max 80000

Average: 5043 million

PCIe version

PCI Express (PCIe) is a high-speed expansion card standard that connects a computer to its peripherals. Newer versions offer higher throughput and better performance.

3

Mean: 2.8

3

Mean: 2.8

Width

266.7mm

max 421.7

Average: 242.6mm

267mm

max 421.7

Average: 242.6mm

Height

111.2mm

max 180

Average: 119.1mm

111.1mm

max 180

Average: 119.1mm

Purpose

Desktop

n.a.

DirectX version

12

max 12.2

Average: 11.1

12

max 12.2

Average: 11.1

OpenCL version

Used by some applications to enable GPU power for non-graphical calculations. The newer the version, the more functional it will be

3

max 4.6

Average: 1.7

1.2

max 4.6

Average: 1.7

OpenGL version

Later versions provide better game graphics

4.6

max 4.6

Average: 4

4.5

max 4.6

Average: 4

Shader model version

6.4

max 6.6

Average: 5.5

max 6.6

Average: 5.5

Vulkan version

1.3

No data

CUDA version

6.1

No

HDMI Output

HDMI outputs can transmit video and audio to the display.

Yes

Yes

HDMI version

Newer versions provide more signal bandwidth, supporting more audio channels, more frames per second, etc.

2

max 2.1

Average: 2

2

max 2.1

Average: 2

DisplayPort

Allows connection to a display using DisplayPort

3

Average: 2

3

Average: 2

Number of HDMI sockets

The more there are, the more devices can be connected at the same time (for example, game consoles or TV set-top boxes).

1

Average: 1.1

1

Average: 1.1

HDMI

Yes

Yes

Passmark score

17693

max 29325

Average: 7628.6

max 29325

Average: 7628.6

3DMark Cloud Gate GPU benchmark score

139640

max 1

Average: 80042.3

max 1

Average: 80042.3

3DMark Fire Strike Score

19224

max 38276

Average: 12463

max 38276

Average: 12463

3DMark Fire Strike Graphics test score

27013

max 49575

Average: 11859.1

max 49575

Average: 11859.1

3DMark 11 Performance GPU score

36919

max 57937

Average: 18799.9

max 57937

Average: 18799.9

3DMark Ice Storm GPU score

386800

max 533357

Average: 372425.7

max 533357

Average: 372425.7

SPECviewperf 12 test score — Solidworks

67

max 202

Average: 62.4

max 202

Average: 62.4

SPECviewperf 12 test score — specvp12 sw-03

67

max 202

Average: 64

max 202

Average: 64

SPECviewperf 12 evaluation — Siemens NX

10

max 212

Average: 14

max 212

Average: 14

SPECviewperf 12 test score — specvp12 showcase-01

146

max 232

Average: 121.3

max 232

Average: 121.3

SPECviewperf 12 score — Showcase

146

max 175

Average: 108.4

max 175

Average: 108.4

SPECviewperf 12 test score — Medical

57

max 107

Average: 39.6

max 107

Average: 39.6

SPECviewperf 12 test score — specvp12 medical-01

57

max 107

Average: 39

max 107

Average: 39

SPECviewperf 12 test score — Maya

172

max 177

Average: 129.8

max 177

Average: 129.8

SPECviewperf 12 test score — specvp12 maya-04

172

max 180

Average: 132.8

max 180

Average: 132.8

SPECviewperf 12 test score — Creo

59

max 153

Average: 49.5

max 153

Average: 49.5

SPECviewperf 12 test score — specvp12 creo-01

59

max 153

Average: 52.5

max 153

Average: 52.5

SPECviewperf 12 test score — specvp12 catia-04

103

max 189

Average: 91.5

max 189

Average: 91.5

SPECviewperf 12 evaluation — Catia

103

max 189

Average: 88.6

max 189

Average: 88.6

SPECviewperf 12 test score — specvp12 3dsmax-05

145

max 316

Average: 189.5

max 316

Average: 189.5

SPECviewperf 12 test score — 3ds Max

143

max 269

Average: 169.8

max 269

Average: 169.8

GeForce GTX 1080 Ti video card preview

Traditionally, TITAN X series video cards have offered higher performance than GTX series devices. The new GeForce GTX 1080 Ti breaks this rule: it practically puts the more modest, consumer-oriented GTX series on a level with the ultimate high-end TITAN X models.

Graphics capabilities of new products from NVIDIA

The heart of the GeForce GTX 1080 Ti is a slightly cut-down version of the GP102 GPU, which is also used in the GeForce TITAN X line. It retains the same number of CUDA cores and texture units (3584 and 224, respectively), but the number of active raster units (ROPs) is reduced to 88 (the TITAN X has 96).

Another difference between the new card and the premium model is its non-standard memory bus width: 352 bits (384 bits in the TITAN X). The amount of onboard memory has also been reduced from 12 to 11 GB, but at the same time, the effective GDDR5X frequency has been increased from 10,008 MHz to 11,008 MHz. Given the higher operating frequencies of both the GPU and the memory chips, the new card should not be inferior in performance to the higher-end GeForce TITAN X. And the significantly lower cost of the GTX 1080 Ti will certainly make it a top solution for home users.
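The trade-off between the narrower bus and the faster memory can be checked with a little arithmetic. This sketch assumes the standard peak-bandwidth formula (effective clock times bus width, divided by 8 bits per byte) and uses the bus widths and memory frequencies quoted in this article; the helper name is hypothetical.

```python
def peak_bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    return effective_clock_mhz * 1e6 * bus_width_bits / 8 / 1e9


# Figures quoted in this article.
ti_bw = peak_bandwidth_gb_s(11008, 352)      # GTX 1080 Ti
titan_bw = peak_bandwidth_gb_s(10008, 384)   # TITAN X

bus_change = (352 / 384 - 1) * 100           # narrower bus
clock_change = (11008 / 10008 - 1) * 100     # faster memory
net_change = (ti_bw / titan_bw - 1) * 100    # combined effect

print(f"GTX 1080 Ti: {ti_bw:.1f} GB/s, TITAN X: {titan_bw:.1f} GB/s")
print(f"Bus width {bus_change:+.1f}%, memory clock {clock_change:+.1f}%, "
      f"bandwidth {net_change:+.1f}%")
```

The roughly 8% narrower bus is offset by roughly 10% faster memory, leaving the GTX 1080 Ti with slightly more theoretical bandwidth (about 484.4 GB/s against about 480.4 GB/s).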

As usual, reference-design models were the first to be offered, so let’s start our acquaintance with the reference version, the GTX 1080 Ti Founders Edition. The video card is packaged in a beautifully designed medium-sized box. In addition to the video card itself, the package includes a DisplayPort to DVI adapter and a printed quick-start guide. The external design remains the same as in previous reference models from NVIDIA: the same aluminum top cover with sharply faceted, high-quality edges, and the cooling fins are clearly visible through a transparent window on the top panel.

The base of the heatsink is a rather massive vapor chamber, and a single centrifugal fan blows air through the radiator. The advantage of this design is that almost all the hot air is exhausted from the case. This powerful video adapter option is considered the best solution for compact or poorly ventilated cases. With a larger vapor chamber and a more open rear interface panel, the cooler is more efficient than the TITAN X cooler.

The GTX 1080 Ti has four video outputs (one HDMI 2.0b and three DisplayPort 1.4). The developers placed all interface connectors in a single row. To save panel area, the DVI-D connector that would otherwise occupy a second row has been dropped; if necessary, a monitor can be connected via the included DisplayPort-to-DVI adapter. This connector layout made it possible to significantly enlarge the ventilation grille (it occupies about ⅔ of the mounting plate), which simplifies heat dissipation and increases the overall efficiency of the cooling system.

For the GTX 1080 Ti, the manufacturer used the same circuit board as for the GeForce TITAN X, supplemented by a GPU power stabilization subsystem. Because the VRM includes seven dual phases (paired dualFET chips), it not only delivers the required power but also improves the efficiency of the converter. The graphics card uses Micron GDDR5X memory chips arranged around the GPU package in a U-shape. The GeForce GTX 1080 Ti has 11 chips of 1 GB each. All of them receive additional cooling from the massive frame of the common cooler through thermal pads.
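The chip count also explains the unusual 352-bit bus. A minimal sketch, assuming each GDDR5X chip is attached through its own 32-bit channel (that per-chip width is an assumption not stated in the text, as is the 12-chip layout used for the TITAN X):

```python
CHIP_INTERFACE_BITS = 32  # assumption: one 32-bit channel per GDDR5X chip
CHIP_CAPACITY_GB = 1      # from the text: chips of 1 GB each


def memory_config(chip_count: int) -> tuple[int, int]:
    """Return (bus width in bits, capacity in GB) for a given number of chips."""
    return chip_count * CHIP_INTERFACE_BITS, chip_count * CHIP_CAPACITY_GB


print(memory_config(11))  # GTX 1080 Ti, 11 chips
print(memory_config(12))  # TITAN X, assumed to carry 12 chips
```

With 11 chips this gives a 352-bit bus and 11 GB. Adding a twelfth chip gives the 384 bits and 12 GB quoted for the TITAN X.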

To supply additional 12-volt power, the card has two auxiliary power connectors, one 8-pin and one 6-pin. Both are located on the top edge of the card. According to the manufacturer, the thermal design power (TDP) of the new video card is about 250 watts. The TITAN X has the same value, since both cards are based on the same GPU with 12 billion transistors. For the previous model, the GeForce GTX 1080, built on the simpler GP104 chip, the TDP is 180 watts. But the increase in energy consumption is justified by the increased performance. NVIDIA recommends a power supply of at least 600 watts for systems with a GTX 1080 Ti graphics card.
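As a rough check that this connector combination covers the stated TDP, the sketch below adds up the nominal power limits commonly quoted from the PCI Express specification. These limits (75 W from the slot, 75 W per 6-pin, 150 W per 8-pin) are not given in the article and are used here as assumptions.

```python
# Nominal PCI Express power limits in watts (assumed, not taken from the article)
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150

TDP_W = 250  # TDP of the GTX 1080 Ti as stated in the text

available_w = PCIE_SLOT_W + SIX_PIN_W + EIGHT_PIN_W
headroom_w = available_w - TDP_W

print(f"Available: {available_w} W, TDP: {TDP_W} W, headroom: {headroom_w} W")
```

On these figures the slot plus the 6-pin and 8-pin connectors can nominally deliver 300 W, which leaves about 50 W of headroom over the 250 W TDP.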

To protect the PCB surface from damage, the manufacturer covered the reverse side of the board with a massive backplate. The backplate is made of two parts, so one section can easily be removed. This widens the gap between neighboring devices and improves the flow of cold air to the cooler of a second video card or another device in the adjacent slot.

The top panel of the video adapter is decorated with the GeForce GTX logo, complemented by the company’s traditional green backlight, which turns on when the system is running. The length of the video card does not differ from the dimensions of other top models from NVIDIA — 267 mm.

First impressions of the GeForce GTX 1080 Ti

At idle (with the case cover open), the GPU temperature did not exceed 32 degrees, and the fan speed was about 1100 rpm. Although the cooling system does not support a hybrid (fan-stop) mode and the fan spins constantly, the card remains very quiet under minimal graphics load. As the load increased, the GPU temperature rose to 84 degrees and the fan sped up to 2400 rpm. At peak load, the noise level of the card can be described as average.

With built-in GPU Boost 3.0, the internal frequency of the GP102 graphics chip in the reference GTX 1080 Ti constantly changes in response to the chip’s power consumption and current temperature. When running some games, the GPU frequency reaches 1800-1850 MHz, but in other operating situations it can drop to 1700 MHz or increase to 1886 MHz (at peak load).

Gaming performance

When we experimented with overclocking this GPU, its clock under real gaming load reached 1923-1974 MHz, with the actual frequency approaching 2000 MHz. However, the dynamic boost system does not allow stable operation at that frequency. In this mode, heat output and power consumption increase significantly: the GPU temperature rises to 85 degrees, and the fan speed exceeds 3000 rpm.

Conclusions

Contrary to NVIDIA's established practice when introducing top GPU models in previous generations, this time the developer did everything possible to ensure that the GeForce GTX 1080 Ti is not inferior in performance to its main rival, the TITAN X, and even gains a number of additional benefits. The GPU has a slightly reduced set of functional blocks: it lacks one of the memory controllers, eight ROPs and 1 GB of the 12 GB of memory. This has practically no effect on gaming performance, and it is compensated by a slight increase in the GPU clock speed and the use of new GDDR5X chips with an effective frequency of 11 GHz.

These minor specification differences serve more to reduce the cost of the device than to differentiate the GeForce GTX 1080 Ti from its older sister, the TITAN X. The performance of the GTX 1080 Ti also benefits from the improved physical parameters of the card: a more efficient cooling system and a more efficient power subsystem. To date, the GTX 1080 Ti is the first GeForce device fully capable of gaming at 4K resolution. And its relatively low price makes it more cost-effective than any multi-GPU configuration.

Contents

- Introduction

- Features

- Tests

- Games

- Key differences

- Conclusion

- Comments

Introduction

We compared two graphics cards: NVIDIA TITAN Xp vs NVIDIA GeForce GTX 1080 Ti. On this page, you will learn about the key differences between them, as well as which one is the best in terms of features and performance.

The NVIDIA TITAN Xp is a Pascal-based GeForce 10 generation graphics card released on Apr 6th, 2017. It comes with 12 GB of GDDR5X memory running at 1426 MHz, has 1x 6-pin + 1x 8-pin power connectors and consumes up to 250 W.

The NVIDIA GeForce GTX 1080 Ti is a Pascal-based GeForce 10 generation graphics card released on Mar 10th, 2017. It comes with 11 GB of GDDR5X memory running at 1376 MHz, has 1x 6-pin + 1x 8-pin power connectors and consumes up to 250 W.

354.4 GFLOPS (1:32)

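Clock Speeds

| Specification | TITAN Xp | GeForce GTX 1080 Ti |
|---|---|---|
| Base frequency | 1405 MHz | 1481 MHz |
| Boost frequency | 1582 MHz | |

Render Config

| Specification | TITAN Xp | GeForce GTX 1080 Ti |
|---|---|---|
| Shading units | 3840 | 3584 |
| Texture units | 240 | 224 |
| Raster units | | 88 |
| SM count | 30 | 28 |

Graphics Features

| Specification | TITAN Xp | GeForce GTX 1080 Ti |
|---|---|---|
| DirectX | 12 (12_1) | 12 (12_1) |
| OpenGL | 4.6 | 4.6 |
| OpenCL | 3.0 | 3.0 |
| CUDA | 6.1 | 6.1 |
| Vulkan | 1.2 | 1.2 |

Board Design

| Specification | TITAN Xp | GeForce GTX 1080 Ti |
|---|---|---|
| Power connectors | 1x 6-pin + 1x 8-pin | 1x 6-pin + 1x 8-pin |
| Slot width | Dual-slot | Dual-slot |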
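The shading-unit counts and base frequencies listed above are enough for a rough estimate of single-precision throughput. The sketch below assumes the common rule of thumb of two floating-point operations per shading unit per clock (one fused multiply-add). The function name is illustrative, and the results are theoretical figures at base clock, not measurements.

```python
def fp32_tflops(shading_units: int, clock_mhz: float) -> float:
    """Theoretical FP32 throughput, assuming 2 FLOPs per shading unit per clock."""
    return 2 * shading_units * clock_mhz * 1e6 / 1e12


# Shading units and base frequencies from the specification list
titan_xp = fp32_tflops(3840, 1405)
gtx_1080_ti = fp32_tflops(3584, 1481)

print(f"TITAN Xp:    {titan_xp:.2f} TFLOPS at base clock")
print(f"GTX 1080 Ti: {gtx_1080_ti:.2f} TFLOPS at base clock")

# Both cards use the same SM layout: shading units divided by SM count
print(3840 // 30, 3584 // 28)
```

On these assumptions the two cards differ by under 2% at base clock (about 10.79 against 10.62 TFLOPS), since the higher base frequency of the GTX 1080 Ti largely offsets its smaller number of shading units.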

Benchmarks

3DMark Graphics

3DMark is a benchmarking tool designed and developed by UL to measure the performance of computer hardware. Upon completion, the program gives a score, where a higher value indicates better performance.

NVIDIA TITAN Xp

+4%

NVIDIA GeForce GTX 1080 Ti

Blender bmw27

Blender is a popular 3D content creation program. It has its own benchmark, which is widely used to measure the rendering speed of processors and video cards. We chose the bmw27 scene. The result of the test is the time taken to render the scene, so a lower value is better.

NVIDIA TITAN Xp

NVIDIA GeForce GTX 1080 Ti

+1%

Th200 RP

Th200 RP is a test created by Th200. It measures the raw power of the components and gives a score, with a higher value indicating better performance.

NVIDIA TITAN Xp

+10%

NVIDIA GeForce GTX 1080 Ti

Games

1920×1080, Ultra

| Game | TITAN Xp | GeForce GTX 1080 Ti |
|---|---|---|
| Anno 1800 | | |
| Assassin’s Creed Odyssey | | |
| Battlefield V | | |
| DOOM Eternal | | |
| Far Cry 5 | | |
| Hitman 2 | | |
| Metro Exodus | | |
| Red Dead Redemption 2 | | |
| Shadow of the Tomb Raider | | |
| The Witcher 3 | | |
| Average | | 110.04 fps |

2560×1440, Ultra