The Best Graphics Cards for Compact PCs in 2022

Editor’s Note: Before you dive into this guide, as you’ll see from some of the prices above, the availability and pricing situation for GPUs is anything but «normal» right now, and has been skewed since the start of the pandemic. If you plan to buy a card soon, also see this buying-strategies guide for advice on finding cards at a fair price. If you want to wait it out a bit longer, check out this how-to tutorial on getting the most performance from the GPU you already own.

The Best Graphics Card Deals This Week*

*Deals are selected by our commerce team

- MSI GeForce RTX 3090 24GB Graphics Card: $979.99 (List Price $2,009.99)

- MSI GeForce RTX 3090 Ti 24GB Graphics Card: $1,269.60 (List Price $1,400)

- EVGA GeForce RTX 3090 FTW3 Ultra Graphics Card: $1,126.99 (List Price $1,919.99)

- Zotac Gaming RTX 3060 Twin Edge OC 12GB Graphics Card: $420.57 (List Price $549.99)

- Zotac Gaming RTX 3080 Ti Trinity OC Graphics Card: $869.99 (List Price $1,149.99)

More About Our Picks

Zotac GeForce GTX 1650 Super Twin Fan

4.0 Excellent

Best Compact Graphics Card for Budget 1080p Play

Bottom Line:

Zotac’s punchy GeForce GTX 1650 Super Twin Fan is markedly better than the non-«Super» GTX 1650 and a solid, small version of this mainstream GPU for budget-focused 1080p gaming.

Pros

- Much faster than original non-Super GeForce GTX 1650 in 1080p and 1440p gaming.

- Runs quiet.

- Priced competitively.

- Impressively small in our Zotac test sample.

Cons

- Underperforms on some games.

- Runs hotter than the non-Super GTX 1650.

Read Our Zotac GeForce GTX 1650 Super Twin Fan Review

Zotac GeForce GTX 1660 Super Twin Fan

4.0 Excellent

Best Compact Graphics Card for Mainstream 1080p Play

Bottom Line:

If you’ve been holding off on a mainstream video card for 1080p gaming, the GeForce GTX 1660 Super could be your trigger to buy: It’s a solid-playing, popularly priced waypoint between the GTX 1660 and GTX 1660 Ti.

Pros

- Solid price-to-performance ratio for 1080p gaming.

- Surprisingly good overclocking ceiling.

Cons

- Some driver wrinkles in a few test games show scant improvement over GTX 1660.

Read Our Zotac GeForce GTX 1660 Super Twin Fan Review

EVGA GeForce RTX 3050 XC Black Gaming 8G

4.0 Excellent

Best Compact Graphics Card for High-Refresh 1080p Play (Plus Ray-Tracing)

Bottom Line:

The GeForce RTX 3050 is a strong junior entry into Nvidia’s peerless lineup of «Ampere»-powered RTX 30 Series GPUs, and this EVGA XC Black card is a corker for 1080p play at a near-budget price.

Pros

- Compact, twin-fan design

- Full array of video ports in our test sample

- Good price-to-performance ratio for its segment

- Strong results in ray-tracing benchmarks

- High overclock ceiling

Cons

- Not as far ahead of AMD’s Radeon RX 6500 XT in some tests as we would have hoped

- Relatively high power consumption for its class

Read Our EVGA GeForce RTX 3050 XC Black Gaming 8G Review

XFX Speedster SWFT 210 AMD Radeon RX 6600

3.5 Good

Best Compact Graphics Card for Budget 1440p Play

Bottom Line:

The XFX Speedster SWFT 210, based on AMD’s midrange Radeon RX 6600 GPU, is an able-enough short card for lower-end 1440p gaming with newer AAA games.

Pros

- Competitive with GeForce RTX 3060 in frame rates and list price

- Lower power requirements

Cons

- Better with newer games than old

- No significant overclocking headroom

- Ran hot during our stress testing

Read Our XFX Speedster SWFT 210 AMD Radeon RX 6600 Review

EVGA GeForce RTX 3060 XC Black Gaming 12G

3.5 Good

Best Compact Graphics Card for Mainstream 1440p Play

Bottom Line:

Suited to 1080p and 1440p gaming, EVGA’s XC Black Gaming 12G version of the GeForce RTX 3060 is an able performer, though Nvidia’s own RTX 3060 Ti outshines it on value.

Pros

- Fast frame rates in multiplayer titles

- Compact card design

- Reasonable overclocking performance

Cons

- Nontrivial performance drop versus the GeForce RTX 3060 Ti

- Minor gains over previous (RTX 2060) generation

- Pricey for its performance class

Read Our EVGA GeForce RTX 3060 XC Black Gaming 12G Review

Zotac GeForce RTX 2060 Amp

4.0 Excellent

A Solid Previous-Gen Alternative to the GeForce RTX 3060

Bottom Line:

A fine value in the previous-generation GeForce RTX 20 lineup, the RTX 2060 is a worthy-enough 1440p pick if you can’t land an RTX 3060.

Pros

- Faster than the GeForce GTX 1070 for less money.

- Compact two-slot design.

- Quiet fans.

- Headroom for mild overclocking.

Cons

- Unlike Nvidia RTX 2060 Founders Edition, lacks a VirtualLink USB Type-C port.

- Priced higher than the GeForce GTX 1060 it replaces.

- 8GB of video memory would have been ideal.

Read Our Zotac GeForce RTX 2060 Amp Review

Nvidia GeForce RTX 3060 Ti Founders Edition

4.5 Outstanding

Best Semi-Compact Graphics Card for High-Refresh 1440p Play

Bottom Line:

If you want the best marriage of price, performance, and features for 4K and 1440p gaming, Nvidia’s GeForce RTX 3060 Ti Founders Edition is matched only by its own step-up RTX 3070 sibling.

Pros

- Beats the RTX 2080 Super in most benchmarks

- Great price-to-performance ratio

- Stable launch drivers

- Runs cool

- Short PCB, redesigned cooling system make for a compact card

Cons

- RTX 3070 gives an extra margin for 4K today and tomorrow

Read Our Nvidia GeForce RTX 3060 Ti Founders Edition Review

Nvidia GeForce RTX 3070 Founders Edition

5.0 Exemplary

Best Semi-Compact Graphics Card for 4K Gaming

Bottom Line:

A fierce follow-up to its killer GeForce RTX 3080, Nvidia’s beautifully engineered RTX 3070 Founders Edition is a practically perfect graphics card. Gamers aiming for high-resolution, high-refresh 1440p or 4K play will find today’s best price-for-performance engine right here.

Pros

- Incredibly fast for the price

- Beautiful design

- Great 4K gaming results

- Innovative cooling system

- Doesn’t run too hot

Cons

- Expect a run on this card to rival the one on the RTX 3080

Read Our Nvidia GeForce RTX 3070 Founders Edition Review

AMD Radeon RX 6700 XT

3.5 Good

Best Graphics Card for 4K Power in Recent AAA Games (If It Fits!)

Bottom Line:

AMD’s Radeon RX 6700 XT is a solid competitor to Nvidia’s lineup of midrange GPUs—if you stick to recent, optimized titles.

Pros

- Competitive frame rates, in games that are optimized

- SAM testing sees frame-rate gains in select titles

- Debut MSRP is $20 below that of Nvidia’s GeForce RTX 3070

Cons

- DirectX 11-based games may run significantly slower than on competing Nvidia cards

- Higher temperatures under stress than competing Nvidia cards

- Radeon reference design is underwhelming compared with Nvidia’s RTX 30 Series Founders Editions

- Coil whine under load in our sample card

Read Our AMD Radeon RX 6700 XT Review

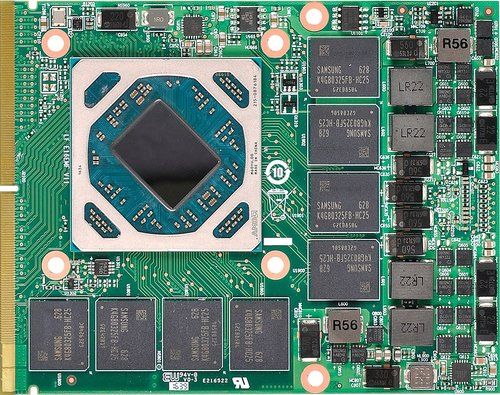

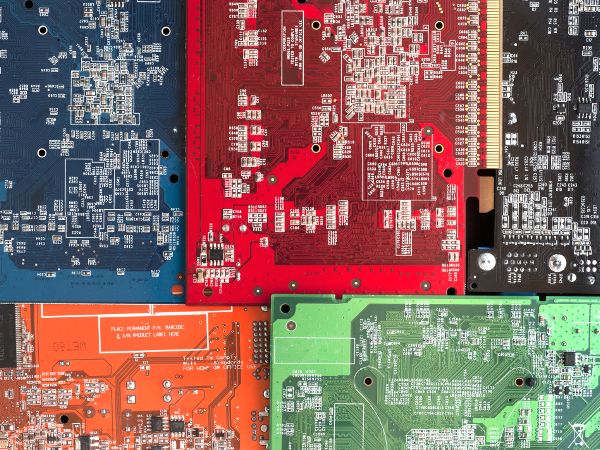

A hulking, full-tower PC is always your best option if you want room for multiple graphics cards, general upgrades, or all the terabytes of storage you could ever reasonably afford. But these days, you don’t necessarily need one if you want a powerful PC that can handle editing high-def or 4K video, playing new AAA games at 4K, and powering virtual-reality (VR) headsets.

As more PC enthusiasts and boutique-PC builders have shown interest in compact performance systems, many case makers have offered comparatively compact chassis with room for midsize or even full-size graphics cards. For instance, the shoebox-shaped SilverStone Sugo 14 has room enough for a graphics card over a foot long. That means the largest and most powerful cards available, such as the Nvidia GeForce RTX 3080, should have just enough clearance in that case.

A handful of similarly compact PC cases have an even smaller footprint, yet enough room for beastly graphics cards. (You’ll want to check the specs.) Just note that specialized compact cases like these usually require a MicroATX or Mini-ITX motherboard, rather than a more standard (and often less expensive) full-size ATX motherboard. Their unusual proportions mean that they may be able to take big video cards, but not big mainboards.

It’s possible to build a gaming-ready system even smaller, using a truly trim case such as the Cooler Master Elite 110 (which is just 11 by 10 by 11 inches). It can include an upper-end processor and a graphics card like the Zotac GeForce GTX 1650 Super Twin Fan or one based on the GeForce GTX 1660 or GTX 1660 Super. Those cards aren’t extreme performers, but they do support VR gaming and can handle today’s hardiest non-VR titles on high settings at 1080p (1,920-by-1,080-pixel) resolution. (Interested in which cards are best for your VR headset? Check out our roundup of those GPUs for the best picks.)

Measure Twice: How to Tell Which Graphics Cards Will Fit Your Case

For graphics-card considerations specifically (which is likely why you landed here), the most important thing is how much card clearance the PC chassis that you own (or are considering buying) has available. This is often listed on the chassis’ product page under «specifications,» or in an online manual. But in a pinch, you can pop off the side panel and do a rough estimate yourself, with a tape measure or ruler.

Inside the case, find the area where the PCI Express card expansion brackets are, usually at the back. This is the spot where the video-output ports on a graphics card will show through the back of the chassis. Measure from there, parallel to the PCI Express slot into which you’ll install your card, to the first obstruction you run across. That’s your maximum card length, assuming your card does not have power connectors sticking out of its trailing end. If it does, you’ll need to compensate for that. (Most of the time, these days, the connectors are on the top edge of the card.)

(Photo: Zlata Ivleva)

If you want to fit a high-end gaming card, also be sure there’s room for mounting the card across two expansion slots, because just about all such cards occupy at least two bracket positions across. Some very compact PC cases may not have very much space between the PCI Express slot you’ll use for a card and the nearby case wall, which might prevent you from installing a card at all. If you’re upgrading an existing system, at least an eyeball check is much advised.

The distance between the bracket area and the nearest object on the other side of the case (often a hard drive mount or bay, or the wall of the case itself) will determine how long a graphics card your system can handle. If the case has 10.5 inches of clearance or more, you’ll be able to fit at least some high-end cards, including a subset of ultra-high-end cards based on, say, Nvidia’s GeForce RTX 3070 or GeForce RTX 3080. This also includes AMD’s high-end options like the Radeon RX 6800 and RX 6800 XT, which both measure in at exactly 10.5 inches each in reference versions. But some third-party versions of these cards are longer (much longer), so you need to check the details. Example: We’ve seen a few GeForce RTX 3070 cards under 10 inches, and some RTX 3060 Ti cards above 12 inches. Check those specs.
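
The length check described above can be sketched in a few lines of Python. This is a minimal illustration rather than a sizing tool: the function name `card_fits`, the `rear_connector_in` allowance, and the `margin_in` safety margin are assumptions introduced here, and the 10.5-inch figures in the usage lines are simply the ones quoted in the text.

```python
def card_fits(clearance_in, card_length_in, rear_connector_in=0.0, margin_in=0.0):
    """Return True if a card of the given length fits the measured clearance.

    clearance_in      -- distance from the expansion bracket to the first obstruction
    card_length_in    -- card length from the manufacturer's spec sheet
    rear_connector_in -- extra room for power plugs on the card's trailing end
                         (0 if the connectors sit on the top edge)
    margin_in         -- optional safety margin (hypothetical; pick your own)
    """
    needed = card_length_in + rear_connector_in + margin_in
    return needed <= clearance_in


# A 10.5-inch reference card in a case with exactly 10.5 inches of clearance
print(card_fits(10.5, 10.5))
# The same card with 1 inch reserved for rear-mounted power plugs
print(card_fits(10.5, 10.5, rear_connector_in=1.0))
```

Because third-party cards based on the same GPU can vary by several inches, the spec-sheet length for the exact model should go into `card_length_in`, not the reference-card figure.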

(Photo: Zlata Ivleva)

For smaller PC cases that still have room for a full-height graphics card, though, you’ll need an air-cooled card that may be 8 inches long or less. These are often Mini-ITX-mainboard cases that are very tight on space. That 8 inches may sound generously big enough, but as gaming-class graphics cards go, that’s not much wiggle room. But even here these days, you have a few options on that front.

Can Your Power Supply Handle a New Video Card?

Another factor to consider when building a compact PC is power supply (PSU) cable routing. While many cards on the market keep their power connectors on top of the card (and just as many compact or Mini-ITX cases are made to support this design), a few put the connector on the rear-facing edge of the card instead.

If you’re already working in a constrained space and every inch of the total card measurement matters, you’ll want to be sure that wherever your card plugs into the PSU (if it does at all, more on that below) is accommodating to all the other parts and pieces you’re trying to squeeze into a confined area.

That said, many compact cards won’t even ask you for a dedicated PSU power connection in the first place. Several options we have tested, including the 75-watt Zotac GeForce GTX 1650 OC, don’t need an external connection because they already pull all the watts they need from the PCI Express slot they’re plugged into.

Keep in mind that these cards have limitations on the amount of graphical horsepower and overclocking capacity they can support without an external power source. But if those aren’t major concerns of yours, then you might want to go this route to avoid any cabling issues in the first place.
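
As a rough sketch of that rule of thumb: a PCI Express x16 slot is specified to deliver up to 75 watts, so a card rated at or below that figure can usually run without a PSU cable. The helper name `needs_external_power` and the 130-watt example are assumptions for illustration; the card's spec sheet remains the authority.

```python
PCIE_SLOT_LIMIT_W = 75  # maximum power a PCI Express x16 slot is specified to supply


def needs_external_power(board_power_w, slot_limit_w=PCIE_SLOT_LIMIT_W):
    """Return True if the card's rated board power exceeds what the slot can supply."""
    return board_power_w > slot_limit_w


print(needs_external_power(75))   # a 75-watt card, like the GTX 1650 mentioned above
print(needs_external_power(130))  # a hypothetical 130-watt card
```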

Low-Profile Graphics Cards: A Low-Clearance Alternative

You’ll note that we’ve talked about card length, but not much about card height. A few thin PC cases (usually flat, broad ones meant for home-theater-PC use) accept only what are called «low-profile» expansion cards, among them low-profile PCI Express graphics cards. These cards are much shorter in the vertical dimension than an ordinary video card, and they can be outfitted with what’s known as a low-profile or «half-height» bracket. (You twist a screw or two to install the shorter bracket in place of the ordinary one.) This enables the card to mount on the vertically smaller PCI-slot frame.

There’s usually room for just two ports on a half-height bracket, or in a few cards we’ve seen, the half-height bracket is two slots wide, allowing for a third port. So know that multi-display connectivity is often a bit compromised on these cards, especially with the low-profile bracket attached.

Because low-profile boards are much smaller in surface area (and thus the room for a graphics chip, power circuitry, and cooling apparatus is reduced), they are budget-minded, basic cards, meant as a step up from CPU-integrated graphics or to add support for multiple displays.

So, Which Compact Graphics Card Should I Buy?

As always, size and features for video cards based on a given graphics chip can vary significantly, depending on the model and the card maker. Nvidia and AMD make «reference cards» based on their graphics processors. Third-party partners—MSI, Sapphire, EVGA, Asus, and many others—then make and sell their own branded cards, some of which adhere to the design of these reference boards. They also offer «custom» versions with slight differences in shape and size, the configuration of the ports, the amount and speed of onboard memory, and the cooling fans or heat sinks installed.

Compact video cards fall into that custom class. Because of that, if you’re shopping for a card for a compact system, or you have a particular case in mind, be sure the size, power, and cooling demands of the card you’re buying match up with the chassis you’re planning on putting it in. Few things in the gaming world are more frustrating than getting a promising new graphics card in the mail, or carting it home from the local superstore, only to find out it doesn’t fit in your PC, or your power supply doesn’t have the juice (or requisite connectors) to get it going.

If you have a bit more room to play with in your PC case, check out our roundup of the best graphics cards for 4K gaming, which will be bigger cards. (Also check out our master guide to the best graphics cards overall, heedless of size.) And complete your custom build with one of the top M.2 solid-state drives we’ve tested. These tiny SSDs are a perfect match if you’re space-strapped.

Cost Per Frame: Best Value Graphics Cards Right Now

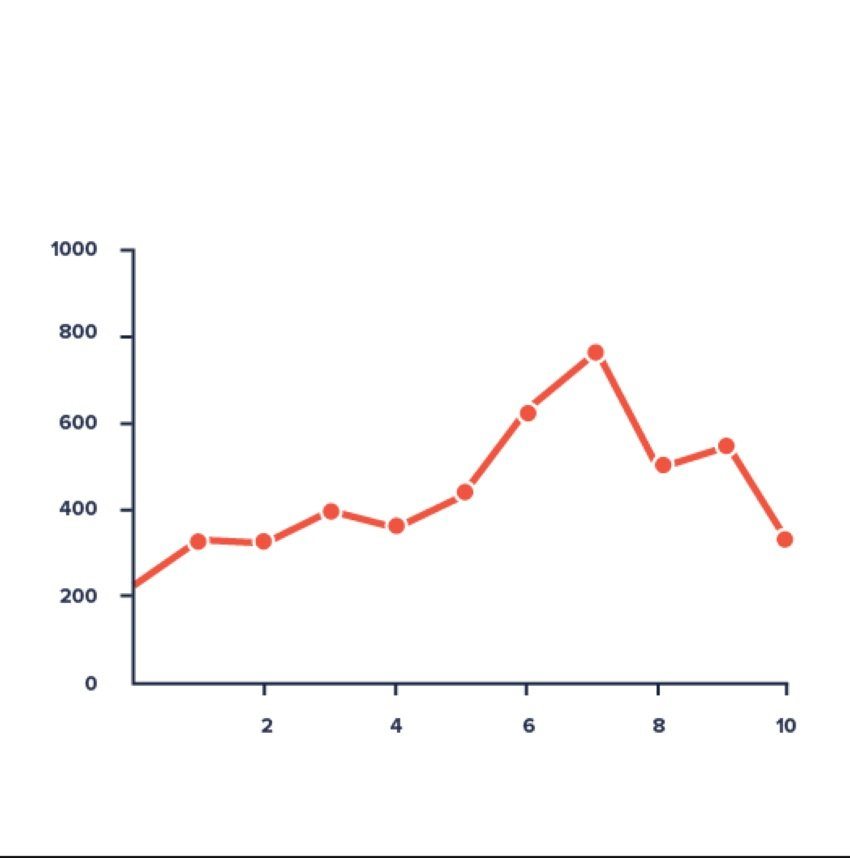

For those who missed it, we recently published our GPU pricing update for April 2022, which saw prices for graphics cards continue to trend in the right direction… downward. We’ve been monitoring GPU prices for well over a year now and it’s great to see prices getting closer and closer to the MSRP.

But as we mentioned in that feature, it’s hard to say just how exciting that is for prospective buyers considering most of these products are now 18 months old and will likely be superseded by the end of this year by much more powerful next-gen hardware. Then again, we do believe that if gamers had the opportunity of buying an RTX 3070 for $730 or an RX 6700 XT for $570 earlier this year, they would have jumped at it. So while pricing still sucks compared to 2019, it could be worse, a lot worse. If you need evidence of that, just go back and check our GPU pricing update for May 2021 when the 3070 was closer to $1,600(!).

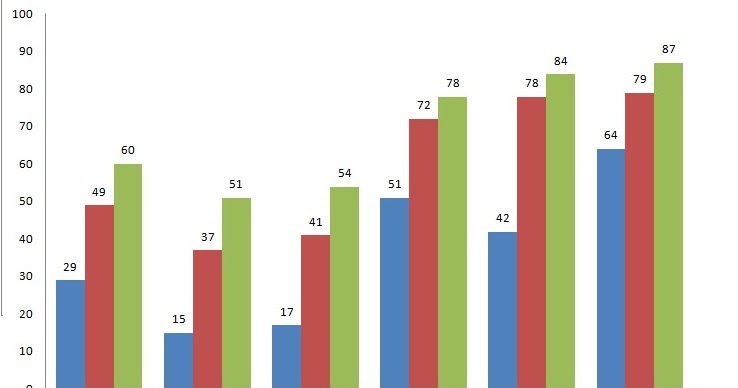

Anyway, for those of you who are now ready to buy, and aren’t willing to hold out for next gen products to arrive — because let’s be honest, pricing will almost certainly be inflated and availability will be poor around launch day — what should you buy now? For that, we’ve come up with some fresh data and created a cost per frame analysis.

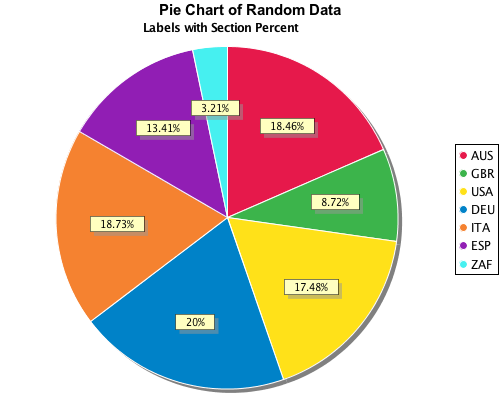

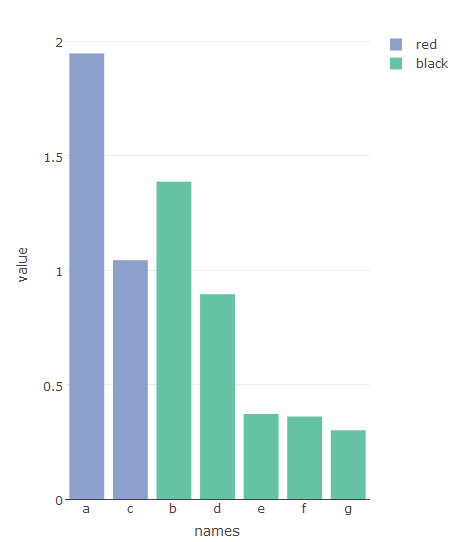

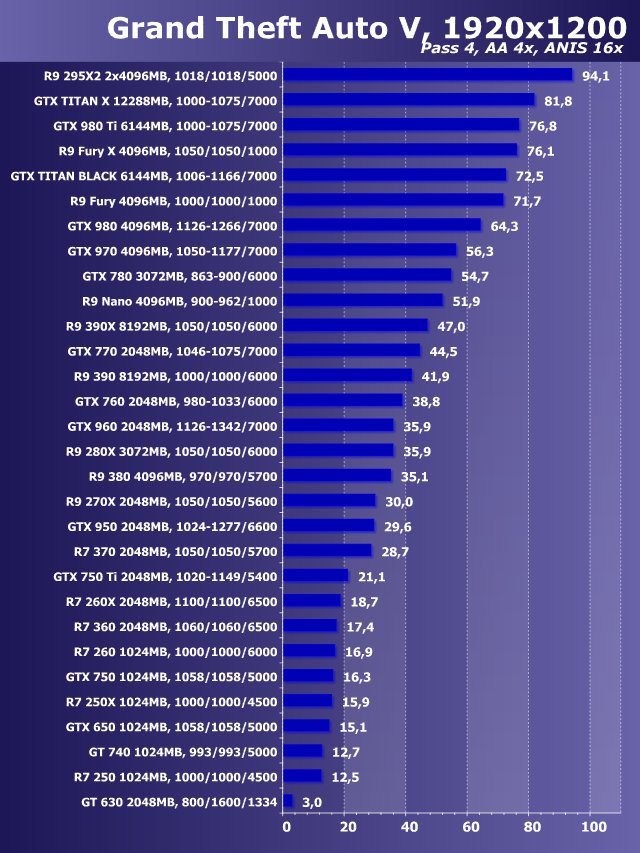

The goal today is to test all current-generation AMD and Nvidia GPUs to establish FPS performance and, using that data, create some cost per frame comparisons. In total, there are 17 current-generation GPUs (or 18 if you include the RX 6400, but we don’t have one of those yet), and we don’t think we’re missing out on anything of true value there.

Because that’s a ton of GPUs to cover, we’ve only tested them in 6 games, but we’ve chosen the titles carefully based on recent 50 game benchmarks. The titles include Red Dead Redemption 2, Rainbow Six Siege, Far Cry 6, Hitman 3, Dying Light 2 and Shadow of the Tomb Raider.

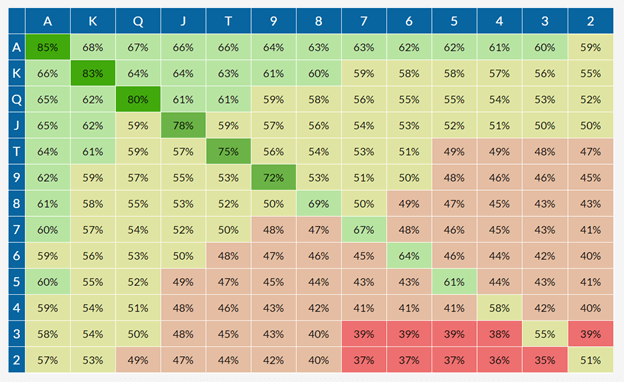

Instead of going through the individual game data, we’ve calculated the geomean for the six games and will be using that to report cost per frame. The reason for using medium quality settings in almost all the games was to allow the entry-level models to achieve reasonable levels of performance, and then at the high-end we can look at the 4K data.
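
For readers who want to reproduce that averaging step, here is a minimal sketch. The geometric mean is the n-th root of the product of n values, which keeps a single very high-FPS title from dominating the average. The function name and the frame rates below are made-up placeholders, not measured results.

```python
import math


def geomean(values):
    """Geometric mean: exp of the average of the logs (all values must be > 0)."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geomean needs a non-empty list of positive numbers")
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Placeholder per-game average FPS for one GPU across six games (not real results)
fps_by_game = [60, 240, 80, 120, 70, 110]
print(round(geomean(fps_by_game), 1))
```

Python 3.8 and later also ship `statistics.geometric_mean`, which does the same job.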

We tested at 1080p, 1440p, and 4K resolutions using the Ryzen 7 5800X3D with DDR4-3600 CL16 memory and Resizable BAR enabled. We used pricing information gathered on April 20, which means that some price movement is to be expected, though the bulk of the data should be good.

With that said, the most valuable information here is the frame rate data. Simply select a performance tier you’re interested in, take the current pricing you’re looking at and divide that by the frame rate to get your cost per frame. Even if you are not in the United States, as we usually make recommendations based on pricing from that market, you can check pricing of the relevant products in your region and using the formula above you can easily come up with the option that makes the most sense for you.
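
The formula above can be sketched as follows. The card names, prices, and frame rates are hypothetical stand-ins for whatever you find in your own region; only the division of price by frame rate comes from the text.

```python
def cost_per_frame(price, avg_fps):
    """Price divided by average frame rate; lower means better value."""
    if avg_fps <= 0:
        raise ValueError("avg_fps must be positive")
    return price / avg_fps


# Hypothetical local prices and frame rates for two cards in the same tier
candidates = {
    "Card A": (330.0, 110.0),
    "Card B": (400.0, 125.0),
}

# Sort from best value (lowest cost per frame) to worst
ranked = sorted(candidates.items(), key=lambda item: cost_per_frame(*item[1]))
for name, (price, fps) in ranked:
    print(f"{name}: {cost_per_frame(price, fps):.2f} per frame")
```

The currency does not matter as long as every card in the comparison is priced in the same one.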

Best Value at 1080p

The best value 1080p GPU right now is the Radeon RX 6600, coming in at a cost of $2.98 per frame in our testing. That makes it 16% cheaper than the 6500 XT per frame. What’s shocking about this data is that the 6500 XT would need to be priced at ~$170 just to match the cost per frame of the RX 6600, and it would be doing so with half the VRAM, half the PCIe bandwidth, no hardware encoding, and a complete absence of AV1 decoding.
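
The break-even figure above follows directly from the cost-per-frame formula: a card matches a rival's value when its price equals the rival's cost per frame multiplied by its own frame rate. The sketch below uses the $2.98 figure from the text; the 57 fps value for the 6500 XT is an assumption back-calculated from the ~$170 break-even price, not a measured result, and the function name is illustrative.

```python
def break_even_price(rival_cost_per_frame, own_avg_fps):
    """Price at which a card's cost per frame equals the rival's."""
    return rival_cost_per_frame * own_avg_fps


RX_6600_COST_PER_FRAME = 2.98   # from the text
ASSUMED_6500_XT_FPS = 57.0      # assumption: implied by the ~$170 break-even figure

print(round(break_even_price(RX_6600_COST_PER_FRAME, ASSUMED_6500_XT_FPS)))
```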

In other words, even as the Radeon 6500 XT approaches its MSRP, it continues to be a horrible product that should have never sold for a dollar over $150 — in fact, $100 or less is far more fitting for this class of product. It’s crazy to think this GPU was selling for $270+ just months ago and some reviewers were recommending it simply because it was the cheapest new GPU you could buy.

The Radeon RX 6600 also makes a mockery of the RTX 3050 as the GeForce GPU costs 26% more per frame. It might be $10 cheaper, according to our pricing data, but because it’s 20% slower, it’s not a great deal.

The GeForce RTX 3060 Ti stacks up better despite costing more per frame. It’s $10 more than the 6700 XT and is only ~5% slower, so you could argue features such as DLSS help to offset that margin. To compare higher-end graphics cards, let’s move to 1440p.

Best Value at 1440p

The margin between the Radeon 6700 XT and GeForce RTX 3060 Ti remains about the same at 1440p with the GeForce GPU costing roughly 7% more per frame. As we move beyond the mid-range though towards the higher-end models, AMD stacks up well.

The Radeon RX 6800 XT is offering 5% more performance than the 12GB RTX 3080, which suggests that the 6 game sample is slightly more favorable towards AMD than our 50 game testing. We’re only talking about a 5% discrepancy, but do keep that in mind.

In terms of overall FPS performance, the 6800 XT, RTX 3080, 3080 12GB, 3080 Ti and even the 3090 are all pretty similar. The 3080 Ti and 6800 XT are a good match up here as they both averaged 157 fps in our testing, but the Radeon GPU is currently 24% cheaper in the US, making it more appealing in terms of cost per frame.

Best Value at 4K

As we’ve come to expect, Nvidia RTX Ampere GPUs stack up better at the higher 4K resolution and now the 6800 XT is on par with the 12GB 3080. With a wider range of games the RTX 3080 would pull ahead by around a 5-7% margin, and we know that because we recently tested them, but for the purpose of this feature it was not feasible to test 17 graphics cards across 50 games.

The data for the mid-range to lower end GPU models was spot on with what we saw across our 50 game testing, so we suspect the lower quality settings is what’s favoring AMD’s high-end models a little more in this scenario.

Here we’re seeing half a dozen AMD and Nvidia GPUs in that 90-105 FPS range, which includes the Radeon RX 6800 XT, 6900 XT, GeForce RTX 3080, 3080 12GB, 3080 Ti and 3090. The most affordable GeForce option is the original RTX 3080 for $1,000, whereas the 6800 XT is down at $920. That’s not a huge saving, so as usual it will come down to the importance you put on extra features, namely ray tracing performance and DLSS.

Where the GeForce range becomes ridiculous is with the RTX 3090 series. The 3090 at $1,700 is dumb and the 3090 Ti at $2,000 is equally silly. The high-end battle is fought out between the 6900 XT and RTX 3080 Ti: both offer a similar level of performance, while the GeForce GPU costs 21% more.

Back in February we compared the 6900 XT and 3080 12GB head to head in 50 games, and at the time the GeForce GPU was priced between $1,600 and $1,800, while the 6900 XT was closer to $1,500 to $1,600. The 3080 12GB was $100 to $200 more expensive, which is still the case today. We think ray tracing and DLSS allow the GeForce GPU to command a 10% premium, but 20% is getting a bit too steep for us.

Best Value at 1440p (Australia)

Although a big portion of our audience is US based, we thought it’d be interesting to check out pricing trends in a few other regions, so let’s start with Australia. The best value option here is the 6600 XT, narrowly beating out the RX 6600; basically, both Radeon 6600 series GPUs represent a similar level of value. Then we have the 6700 XT, which just beat the 6500 XT in terms of value, but you know we take the 6500 XT’s cost per frame with a grain of salt given all the issues with that product. We’re also testing it with PCIe 4.0, meaning it would stack up far worse had we used PCIe 3.0.

The best value GeForce GPU in Australia is the RTX 3060 Ti or 3060. The 3060 Ti costs just 2% more than the 6700 XT per frame, so depending on the features you’re interested in the GeForce GPU could be a better value choice.

For those after a high-end GPU in Australia, the Radeon 6800 XT appears to be the way to go at $1350 AUD as it’s 23% cheaper than the RTX 3080 Ti for the same level of performance. Even if you go off our 50 game data where the 3080 12GB and 6800 XT delivered the same level of performance, the 6800 XT would amount to $9 per frame, making it 16% cheaper per frame than the 3080 12GB. That’s a large premium for superior ray tracing performance and DLSS support, but of course, it’s up to you to decide if those features are worth it.

Best Value at 1440p (Europe)

We also have some Euro pricing data, and here we see some significant changes in pricing trends. Using prices from Mindfactory, we see that the 6500 XT offers the lowest cost per frame at €210, but of course, the RX 6600 is significantly better value despite costing 86% more.

Interestingly, the RTX 3060 Ti ranks very well here and is the best value mid tier product, offering 6700 XT-like performance at a 10% discount. The RTX 3060 is also competitive with the 6600 XT.

As we get to the high-end parts, AMD does stack up well. The 6800 XT can be had for €950, while the original RTX 3080 costs €1140, making it a whopping 36% more expensive per frame. Then when compared to the RTX 3080 12GB, we see that the GeForce GPU is fetching almost 50% more per frame, and if we go off the 50 game data and say that these two GPUs are a match in terms of performance, the 12GB 3080 still comes out costing 41% more per frame.
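
A quick way to sanity-check percentages like these is to compute the sticker-price gap on its own. With the two Mindfactory prices quoted above, that gap works out to about 20%; any larger per-frame gap comes from the frame-rate difference between the cards. The helper name `percent_more` is an illustrative assumption.

```python
def percent_more(a, b):
    """How much larger a is than b, in percent."""
    return (a / b - 1.0) * 100.0


rtx_3080_eur = 1140.0    # price quoted in the text
rx_6800_xt_eur = 950.0   # price quoted in the text

premium = percent_more(rtx_3080_eur, rx_6800_xt_eur)
print(f"Sticker-price premium: {premium:.0f}%")
```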

Based on Mindfactory’s pricing, without question you would purchase the 6800 XT over any of the 3080 or 3090 series GPUs from Nvidia.

Closing Notes

That’s where things stand on the GPU front as of April 2022. Of course, pricing is highly volatile at the moment and may have changed for some models by the time you read this. Our advice is to work out which performance tier you’re after and then compare current pricing for those products.

The numbers here serve as a rough guide, but if you want highly accurate data for certain matchups, make sure to check out our 50 game benchmarks. We’ve updated the data for most GPUs at this point. The only graphics card we strongly recommend you avoid is the Radeon RX 6500 XT, and possibly the new RX 6400, which we might look at soon.

The Radeon RX 6600 is among the best value you are going to get right now for a budget graphics card, and we wouldn’t go with anything below it. On the other side of the spectrum, the RTX 3090 and 3090 Ti should also be avoided, not because they’re bad products, but because their prices are still inflated and they do not offer great value. We hope this guide is helpful to those of you buying a new graphics card right now.

Shopping Shortcuts:

- AMD Radeon RX 6700 XT on Amazon

- AMD Radeon RX 6800 XT on Amazon

- AMD Radeon RX 6600 on Amazon

- Nvidia GeForce RTX 3060 on Amazon

- Nvidia GeForce RTX 3070 on Amazon

- Nvidia GeForce RTX 3080 on Amazon

- Nvidia GeForce RTX 3090 on Amazon

GPUCards | Scientific Volume Imaging

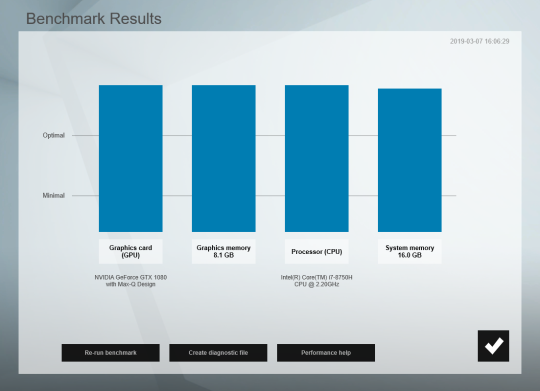

This page gives an overview of NVIDIA cards that can be used in combination with the Small, Medium, Large or Extreme GPU option in the latest version of Huygens for GPU acceleration. Over time this page will be refreshed. For a category overview of cards working with older versions of Huygens, please contact us.

For cards that are not included on this page, the option required in your license depends on the number of CUDA cores and the amount of RAM the card has, according to the following specifications:

Small GPU option: for cards that have up to 1024 CUDA cores and up to 6 GB of video RAM (included with every Huygens license Free of Charge)

Medium GPU option: for cards that have up to 3072 CUDA cores and up to 8 GB of video RAM.

Large GPU option: for cards that have up to 8192 CUDA cores and up to 24 GB of video RAM.

Extreme GPU option: for cards that have up to 24576 CUDA cores and up to 64 GB of video RAM.
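
The thresholds above can be sketched as a small lookup. This is an illustration under two assumptions: that a card must satisfy both the CUDA-core limit and the video-RAM limit of an option, and that the smallest qualifying option is the one required. The function and constant names are hypothetical, and cards listed explicitly in the tables on this page should be looked up there instead.

```python
# (option name, max CUDA cores, max video RAM in GB), smallest option first
GPU_OPTIONS = [
    ("Small", 1024, 6),
    ("Medium", 3072, 8),
    ("Large", 8192, 24),
    ("Extreme", 24576, 64),
]


def required_gpu_option(cuda_cores, vram_gb):
    """Return the smallest option whose core and RAM limits both cover the card."""
    for name, max_cores, max_vram in GPU_OPTIONS:
        if cuda_cores <= max_cores and vram_gb <= max_vram:
            return name
    return None  # exceeds the Extreme limits listed above


print(required_gpu_option(640, 4))     # Small
print(required_gpu_option(2304, 8))    # Medium
print(required_gpu_option(10496, 24))  # Extreme (core count exceeds the Large limit)
```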

For the ideal combination with your CPU power check the various Performance Options in which we bundle your GPU and CPU power to let Huygens run extremely fast through your biggest data.

Huygens GPU acceleration is supported for Nvidia cards on Windows and Linux, but not on Mac OS. If you have a valid GPU card that is not recognized by Huygens, check out the GPU checklist.


Small GPU cards

The Small GPU option is required in your Huygens license in order to use small GPU cards. A small GPU option is included in every Huygens base license from 15.10 and later. Download the latest Huygens now.

| GPU card | CUDA cores | VRAM |

|---|---|---|

| GeForce GTX 1660 Ti | 1536 | 6GB |

| GeForce GTX 1660 Super | 1408 | 6GB |

| GeForce GTX 1660 | 1408 | 6GB |

| GeForce GTX 1650 Super | 1280 | 4GB |

| GeForce GTX 1650 | 1024 | 4GB |

| GeForce GTX 1650 | 896 | 4GB |

| GeForce GTX 1060 3GB | 1280 | 3GB |

| GeForce GTX 1060 6GB | 1280 | 6GB |

| GeForce GTX 1050 Ti | 768 | 4GB |

| GeForce GTX 1050 (3GB) | 768 | 3GB |

| GeForce GTX 1050 (2GB) | 640 | 2GB |

| GeForce GTX 960 | 1024 | 2GB |

| GeForce GTX 950 | 768 | 2GB |

| GeForce GTX 780 Ti | 2880 | 3GB |

| GeForce GTX 780 | 2304 | 3GB |

| GeForce GTX 750 Ti | 640 | 2GB |

| GeForce GTX 750 | 512 | 1GB or 2GB |

| Quadro P2000 | 1024 | 5GB |

| Quadro P1000 | 640 | 4GB |

| Quadro M2000 | 768 | 4GB |

| Quadro K2200 | 640 | 4GB |

| Quadro T2000 | 1024 | 4GB |

| Quadro T1000 | 768 | 4GB |

Medium GPU cards

The Medium GPU option is required in your Huygens license in order to use medium GPU cards. Request Quote


| GPU card | CUDA cores | VRAM |

|---|---|---|

| GeForce RTX 3070 | 5888 | 8GB |

| GeForce RTX 2080 SUPER | 3072 | 8GB |

| GeForce RTX 2080 | 2944 | 8GB |

| GeForce RTX 2070 SUPER | 2560 | 8GB |

| GeForce RTX 2070 | 2304 | 8GB |

| GeForce RTX 2060 SUPER | 2176 | 8GB |

| GeForce RTX 2060 | 1920 | 6GB |

| GeForce GTX 1080 | 2560 | 8GB |

| GeForce GTX 1070 Ti | 2432 | 8GB |

| GeForce GTX 1070 | 1920 | 8GB |

| GeForce GTX 980 Ti | 2816 | 6GB |

| GeForce GTX 980 | 2048 | 4GB |

| GeForce GTX 970 | 1664 | 4GB |

| Quadro RTX 4000 | 2304 | 8GB |

| Quadro P4000 | 1792 | 8GB |

| Quadro M5000 | 2048 | 8GB |

| Quadro M4000 | 1664 | 8GB |

| Quadro P2200 | 1280 | 5GB |

| Tesla K20 | 2496 | 5GB |

Large GPU cards

The Large GPU option is required in your Huygens license in order to use large GPU cards. Request Quote


| GPU card | CUDA cores | VRAM |

|---|---|---|

| Titan RTX | 4608 | 24GB |

| Titan V | 5120 | 12GB |

| Titan-Xp (Pascal Generation) | 3584 | 12GB |

| GeForce GTX Titan-X | 3072 | 12GB |

| GeForce RTX 3090 | 10496 | 24GB |

| GeForce RTX 3080 | 8704 | 10GB |

| GeForce RTX 3080 12GB | 8960 | 12GB |

| GeForce RTX 3080 Ti | 10240 | 12GB |

| GeForce RTX 2080 Ti | 4352 | 11GB |

| GeForce GTX 1080 Ti | 3584 | 11GB |

| Tesla V100 (16GB version) | 5120 | 16GB |

| Tesla P100 | 3584 | 16GB |

| Tesla P100 NVLINK | 3584 | 16GB |

| Tesla P40 | 3840 | 24GB |

| Tesla M60 | 4096 | 16GB |

| Tesla M40 | 3072 | 12GB or 24GB |

| Tesla K80 | 4992 | 2 x 12GB |

| Tesla K40 | 2880 | 12GB |

| Quadro GP100 | 3584 | 16GB |

| Quadro RTX A5000 | 8192 | 24GB |

| Quadro RTX A4000 | 6144 | 16GB |

| Quadro RTX 6000 | 4608 | 24GB |

| Quadro RTX 5000 | 3072 | 16GB |

| Quadro P6000 | 3840 | 24GB |

| Quadro P5000 | 2560 | 16GB |

| Quadro M6000 24GB | 3072 | 24GB |

| Quadro M6000 | 3072 | 12GB |

| Quadro K6000 | 2880 | 12GB |

Extreme GPU cards

The Extreme GPU option is required in your Huygens license in order to use the GPU cards below. Request Quote


| GPU card | CUDA cores | VRAM |

|---|---|---|

| Tesla V100 (32GB version) | 5120 | 32GB |

| Quadro RTX 8000 | 4608 | 48GB |

| Quadro GV100 | 5120 | 32GB |

| Titan V CEO Edition | 5120 | 32GB |

| RTX A6000 | 10752 | 48GB |

Huygens 20.04

Huygens versions up to and including 20.04 support NVIDIA graphics cards with a Compute Capability of 3.0 or higher and a CUDA Toolkit version of 7.0 or higher.

Huygens 20.10

Compute Capability lower than 3.5 and CUDA Toolkit versions older than 8.0 are now deprecated. Huygens 20.10 no longer supports these for GPU acceleration. CPU computation and display on a monitor via these cards will continue to be supported.

Affected cards that are still supported in Huygens 20.04 but not in Huygens 20.10:

GeForce GTX 770, GeForce GTX 760, GeForce GT 740, GeForce GTX 690, GeForce GTX 680, GeForce GTX 670, GeForce GTX 660 Ti, GeForce GTX 660, GeForce GTX 650 Ti BOOST, GeForce GTX 650 Ti, GeForce GTX 650, GeForce GTX 880M, GeForce GTX 780M, GeForce GTX 770M, GeForce GTX 765M, GeForce GTX 760M, GeForce GTX 680MX, GeForce GTX 680M, GeForce GTX 675MX, GeForce GTX 670MX, GeForce GTX 660M, GeForce GT 750M, GeForce GT 650M, GeForce GT 745M, GeForce GT 645M, GeForce GT 740M, GeForce GT 730M, GeForce GT 640M, GeForce GT 640M LE, GeForce GT 735M;

Quadro K5000, Quadro K4200, Quadro K4000, Quadro K2000, Quadro K2000D, Quadro K600, Quadro K420, Quadro K500M, Quadro K510M, Quadro K610M, Quadro K1000M, Quadro K2000M, Quadro K1100M, Quadro K2100M, Quadro K3000M, Quadro K3100M, Quadro K4000M, Quadro K5000M, Quadro K4100M, Quadro K5100M, NVS 510, Quadro 410;

Tesla K10, GRID K340, GRID K520.
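The version rules above can be sketched as a small check. This is only an illustration: the function name and the tuple encoding of versions are hypothetical, and the sketch assumes that releases after 20.10 keep the 20.10 minimums, which this page does not state.

```python
def gpu_acceleration_supported(huygens_version, compute_capability, cuda_toolkit):
    """Rough check of the minimums quoted above.

    All three arguments are (major, minor) tuples, e.g. (20, 10) for
    Huygens 20.10 or (3, 5) for Compute Capability 3.5.
    """
    if huygens_version <= (20, 4):
        # Up to and including 20.04: Compute Capability 3.0+, CUDA Toolkit 7.0+.
        min_cc, min_toolkit = (3, 0), (7, 0)
    else:
        # 20.10 (and, by assumption, anything newer): 3.5+ and 8.0+.
        min_cc, min_toolkit = (3, 5), (8, 0)
    return compute_capability >= min_cc and cuda_toolkit >= min_toolkit


if __name__ == "__main__":
    # A card with Compute Capability 3.0 passes on 20.04 but not on 20.10.
    print(gpu_acceleration_supported((20, 4), (3, 0), (8, 0)))
    print(gpu_acceleration_supported((20, 10), (3, 0), (8, 0)))
```

Tuples are used instead of floats so that a version such as 20.10 is not confused with 20.1.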

See also the Wikipedia page on CUDA.

Besides the GPU options for Huygens, SVI also offers Performance options. Multi-GPU acceleration requires the Performance Plus, Mega or Extreme option. Huygens version 16.10.0p8 introduced multi-GPU support in the Batch Processor and Huygens Core to perform deconvolution of a queue of images on multiple GPU devices simultaneously. Huygens 17.10 (Linux) and Huygens 18.04 (Windows) added support for running a single image deconvolution on multiple GPUs in Huygens Professional. We offer the following Performance Packages to get the most out of your workstation:

Performance Option (Standard): Included in Huygens. Allows you to use up to 16 CPU cores (32 logical cores when hyper-threaded) and 1 Small GPU card.

Performance Plus: Allows you to use up to 32 CPU cores (64 logical cores when hyper-threaded) and 2 Large GPU cards.

Performance Mega: Allows you to use up to 64 CPU cores (128 logical cores when hyper-threaded) and 4 Large GPU cards.

Performance Extreme: Allows you to use up to 128 CPU cores (256 logical cores when hyper-threaded) and 8 Large GPU cards.
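As a rough illustration, the four packages above can be treated as a lookup on physical CPU cores and number of GPU cards. The names `PACKAGES` and `smallest_package` are hypothetical, and the sketch deliberately ignores the Small/Large card distinction, so it should be read as a simplification rather than as licensing advice.

```python
from typing import Optional

# (package name, max physical CPU cores, max GPU cards), smallest first.
# The limits are copied from the package descriptions above.
PACKAGES = [
    ("Standard", 16, 1),
    ("Performance Plus", 32, 2),
    ("Performance Mega", 64, 4),
    ("Performance Extreme", 128, 8),
]


def smallest_package(cpu_cores: int, gpu_cards: int) -> Optional[str]:
    """Return the first package that covers both the core and the GPU count."""
    for name, max_cores, max_gpus in PACKAGES:
        if cpu_cores <= max_cores and gpu_cards <= max_gpus:
            return name
    return None  # the workstation exceeds the largest package


if __name__ == "__main__":
    # A hypothetical workstation with 24 cores and 2 GPU cards.
    print(smallest_package(24, 2))
```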

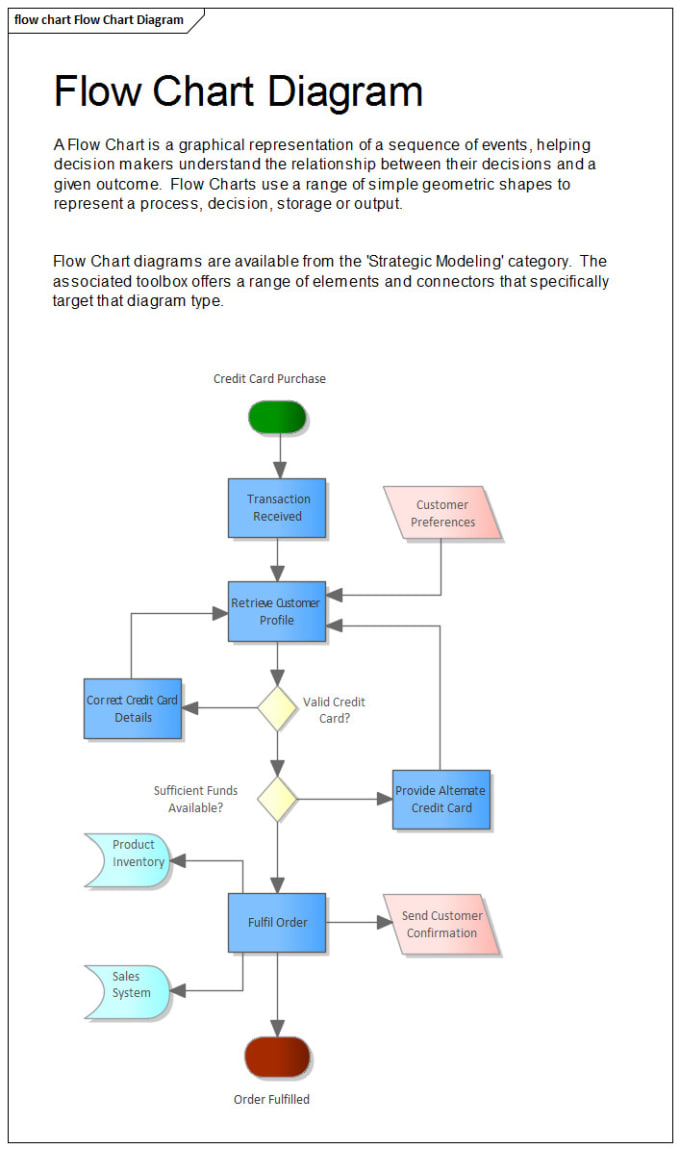

Below is a table that shows which options and commands in Huygens can use single GPU acceleration or multi-GPU acceleration in batch mode.

| Accelerated with GPU (CUDA) | |

|---|---|

| Deconvolution algorithms | |

| CMLE deconvolution | + Multi GPU support: Operation Window, Batch Processor & Scripting |

| QMLE deconvolution | + Multi GPU support: Operation Window, Batch Processor & Scripting |

| GMLE deconvolution | + Multi GPU support: Operation Window, Batch Processor & Scripting |

| Tikhonov-Miller deconvolution | |

| Workflow Processor | + Multi GPU support |

| Deconvolution Wizard | |

| Deconvolution Express | |

| Batch Feeder | |

| Localization algorithms for Huygens Localizer | |

| MLE particle fitting | |

| LSQ particle fitting | |

| Center of mass particle fitting | |

| Registration & Deconvolution | |

| Object Stabilizer | + Multi GPU support: Batch Processor |

| Drift correction | + Multi GPU support: Batch Processor |

| Stitching & Decon Wizard | + Multi GPU support: HuCore/HRM |

| Light-Sheet Decon & Fusion Wizard | |

| Image processing commands | |

| Image processing filters (min, max, variance ppu, avg, gauss) | |

| Image properties functions (hist, stat, range) | |

| Image processing commands 1 (estbg, phaseCorr) | |

| Image processing commands 2 (getpix, setpix, cp, slice, multiMip, miniMip, sum) | |

| Image processing commands 3 (shift, resample, mirror, hist2ch, label, perc, optrep) | |

| Visualization & Analysis | |

| 2D slice renderers | |

| 3D SFP renderer | Since v.19.04 |

| 3D MIP renderer | Since v.19.04 |

| 3D Surface renderer | Since v.19.04 |

| Colocalization Analysis | + Multi GPU support: HuCore/HRM |

| Object Analyzer | GPU support for labeling and rendering |

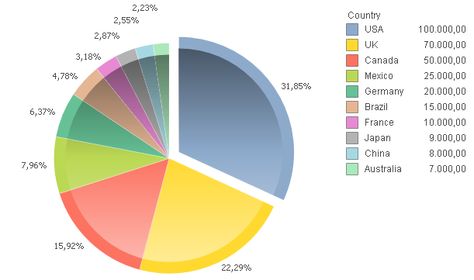

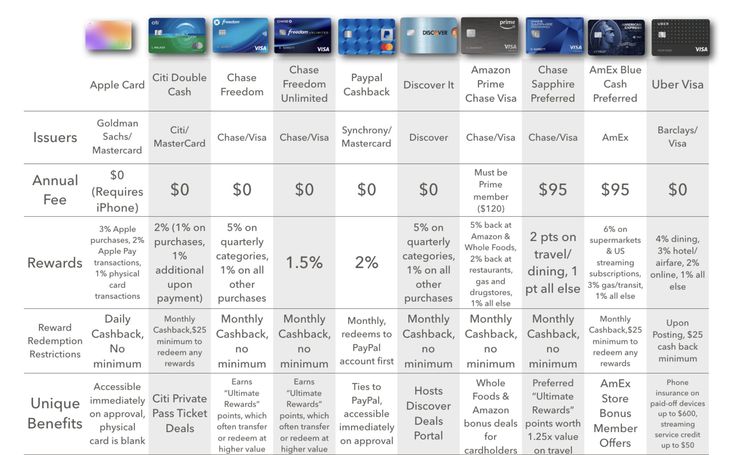

GeForce Graphics Cards Now 14% Over MSRP, Radeon at Just 6% Over MSRP

The latest NVIDIA GeForce and AMD Radeon GPU pricing update has been published by 3DCenter, and it once again shows that we are a step closer to MSRP prices for gaming graphics cards.

NVIDIA GeForce & AMD Radeon GPU Prices Now Just 10% Over MSRP: Graphics Cards In Stock Everywhere!

In the latest report by 3DCenter, we can see that GPU prices for both NVIDIA GeForce and AMD Radeon graphics cards continue to fall, which shouldn’t be a surprise as that’s the trend we have witnessed since the end of 2021. NVIDIA GeForce RTX 30 series prices now average around 14% over MSRP, while AMD’s Radeon RX 6000 series averages a selling price of 6% over MSRP.

AMD Radeon & NVIDIA GeForce Graphics cards are back to normal MSRP prices & GPU availability is better than ever. (Image Credits: 3DCenter)

In addition to that, GPU supply is abundant, and there is currently no retail outlet in the world that doesn’t have graphics cards on its store shelves (which wasn’t the case a few quarters back). The red and green teams have also announced various promos: NVIDIA has its ‘Restocked and Reloaded’ campaign, and AMD has announced that its Radeon RX 6000 series cards are available at MSRP-level prices.

AMD Radeon & NVIDIA GeForce Graphics Card Price Trend (Image Credits: 3DCenter):

| | Dec 12 | Jan 2 | Jan 23 | Feb 13 | Mar 6 | Mar 27 | Apr 17 | May 8 |
|---|---|---|---|---|---|---|---|---|
| AMD Radeon RX 6000 | +83% | +78% (-5PP) | +63% (-15PP) | +45% (-18PP) | +35% (-10PP) | +25% (-10PP) | +12% (-13PP) | +6% (-6PP) |
| NVIDIA GeForce RTX 30 | +87% | +85% (-2PP) | +77% (-8PP) | +57% (-20PP) | +41% (-16PP) | +25% (-16PP) | +19% (-6PP) | +14% (-5PP) |
| Only Radeon RX 6700 XT, 6800 & 6800 XT and GeForce RTX 3060, 3060 Ti, 3070 & 3080-10GB | +105% | +101% (-4PP) | +88% (-13PP) | +68% (-20PP) | +55% (-13PP) | +38% (-17PP) | +26% (-12PP) | +22% (-4PP) |
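The "PP" figures in the trend table are percentage-point differences between consecutive markups over MSRP, which is not the same thing as the relative drop in the actual selling price. A minimal sketch of both calculations follows, using the AMD row from the table; the function names are illustrative and not part of 3DCenter's methodology.

```python
def pp_changes(markups):
    """Percentage-point change between consecutive markups over MSRP."""
    return [later - earlier for earlier, later in zip(markups, markups[1:])]


def relative_price_change(old_markup, new_markup):
    """Relative change in selling price implied by two markups (in percent).

    A markup of +83% means the price is 183% of MSRP, so the price ratio
    is (100 + new) / (100 + old).
    """
    return (100 + new_markup) / (100 + old_markup) - 1


# AMD Radeon RX 6000 markups over MSRP from the table, Dec 12 to May 8.
amd = [83, 78, 63, 45, 35, 25, 12, 6]

if __name__ == "__main__":
    print(pp_changes(amd))
    print(f"{relative_price_change(amd[0], amd[-1]):.1%}")
```

Going from +83% to +6% is a 77-point drop in markup, but the average selling price itself fell by roughly 42% over that period.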

Talking about AMD prices first, once again, almost all graphics cards within the Radeon RX 6000 series are now available at between 1% and 10% over MSRP. Only the Radeon RX 6800 series cards are still selling for an average of 30-35% over MSRP. This has been the case with these cards since their launch.

AMD Radeon RX 6000 Series Graphics Card Prices (RDNA 2 GPUs) via 3DCenter:

| | 6400 | 6500XT | 6600 | 6600XT | 6700XT | 6800 | 6800XT | 6900XT |
|---|---|---|---|---|---|---|---|---|
| Geizhals | 182-230€ | 199-320€ | 359-480€ | 425-570€ | 599-1199€ | 889-1209€ | 929-1470€ | 1114-1949€ |
| Alternate | N/A | 220-299€ | 374-439€ | 479-579€ | 629-799€ | 925€ | 1019-1099€ | 1129-1399€ |
| Caseking | 188-211€ | 228-305€ | 399-438€ | 498-575€ | 640-957€ | 907-1145€ | 989-1207€ | 1199-1679€ |
| Computeruniverse | 186-211€ | 215-260€ | 375-446€ | 479-660€ | 630-752€ | 937-1080€ | 966-1335€ | 1349-1767€ |
| Hardwarecamp24 | N/A | 224€ | 389-449€ | 458-494€ | 769€ | 959€ | 1129-1149€ | 1249-1259€ |
| MediaMarkt | 190€ | 205-310€ | 420-435€ | 450-560€ | 649-900€ | 1039€ | 1132-1229€ | 1206-1349€ |
| Mindfactory | 182-195€ | 199-260€ | 359-412€ | 425-556€ | 599-690€ | 889-949€ | 929-1031€ | 1114-1339€ |
| Notebooksbilliger | 199€ | 199-269€ | 369-509€ | 449-529€ | 629-819€ | 889-999€ | 999-1180€ | 1160-1949€ |
| ProShop | 193-230€ | 222-309€ | 399-428€ | 514-575€ | 683-840€ | 950-1127€ | 1016-1349€ | 1300-1820€ |
| List Price | $179 | $199 | $329 | $379 | $479 | $579 | $649 | $999 |
| Surcharge | from -10% | from -11% | from -3% | from -1% | from +11% | from +36% | from +27% | from -1% |
| Change since April 17 | N/A | -6PP | +2PP | -7PP | -3PP | -5PP | -4PP | -1PP |
| Availability | ★★★☆☆ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★☆ | ★★★★☆ | ★★★★★ |

The NVIDIA lineup averages around 14% over MSRP, and only three cards at the moment, the RTX 3050, RTX 3060 Ti, and RTX 3070, are priced more than 20% over MSRP. The enthusiast RTX 3080 Ti can actually be found below MSRP, which is impressive given that it offers performance similar to the RTX 3090 Ti, which costs several hundred dollars more.

NVIDIA GeForce RTX 30 Series Graphics Card Prices (Ampere GPUs) via 3DCenter:

| | 3050 | 3060 | 3060Ti | 3070 | 3070Ti | 3080-10GB | 3080Ti | 3090 |
|---|---|---|---|---|---|---|---|---|
| Geizhals | 348-500€ | 420-790€ | 620-917€ | 679-1079€ | 749-1491€ | 899-1491€ | 1299-1976€ | 1839-3074€ |
| Alternate | 369-419€ | 449-499€ | 619€ | 789-799€ | 799-949€ | 999-1079€ | 1299-1699€ | 1999-2499€ |
| Caseking | 358-419€ | 486-651€ | 623-733€ | 796-898€ | 829-928€ | 965-1294€ | 1379-1850€ | 1999-2168€ |
| Computeruniverse | 378-429€ | 469-790€ | 590-749€ | 799-961€ | 806-1491€ | 941-1140€ | 1416-1903€ | 1889-3164€ |
| Hardwarecamp24 | 418€ | N/A | 649€ | 779-899€ | 788-869€ | 999-1279€ | 1399-1529€ | 1879-1994€ |
| MediaMarkt | 359-421€ | 450-620€ | 811€ | 700€ | 799-1039€ | 960-1249€ | 1300-1869€ | 1800-2699€ |
| Mindfactory | 372-398€ | 449-479€ | N/A | 745-769€ | 789-899€ | 969-1064€ | 1298-1498€ | 1839-1990€ |
| Notebooksbilliger | 358-500€ | 449-729€ | 630-710€ | 679-899€ | 749-1049€ | 899-1199€ | 1329-1532€ | 1879-2099€ |
| ProShop | 369-497€ | 470-624€ | 675-701€ | 770-983€ | 825-975€ | 1099-1200€ | 1399-1949€ | 2000-2249€ |
| List Price | $249 | $329 | $399 | $499 | $599 | $699 | $1199 | $1499 |
| Surcharge | from +24% | from +13% | from +31% | from +21% | from +11% | from +14% | from -4% | from +6% |
| Change since April 17 | +7PP | -5PP | -1PP | -6PP | -7PP | -7PP | -5PP | -8PP |
| Availability | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ |

We recently saw the AMD Radeon RX 6900 XT being sold at $100 US below its MSRP over at Newegg US. A few cards that are currently in demand will take a few more months to hit their MSRP figures, but the vast majority of the NVIDIA GeForce RTX 30 and AMD Radeon RX 6000 lineup is currently being sold at normal prices. This, along with prices now coming back to or below MSRP, means that the GPU market is past the worst and that prices and availability can now go back to normal.


Best GeForce RTX 3080 Ti Graphics Cards Available — Which One To Get?

Q3 is almost finished and we are slowly but surely moving to Q4. Still, graphics card prices continue to fall. We have seen the RTX 3090 Ti selling for MSRP, but for a high-end graphics card, I think the GeForce RTX 3080 Ti is still the best. That said, some of the best RTX 3080 (non-Ti) cards are not bad either. The GeForce RTX 3080 Ti is a cheaper version of the RTX 3090. It is also said to be a better buy than the RTX 3090, especially if you don’t need 24GB of VRAM. So, if you are in the market and wondering which RTX 3080 Ti you should get, we have listed some of the best RTX 3080 Ti graphics cards here.

UPDATE: Note that as of September 17, 2022, EVGA has announced that they will no longer work with NVIDIA, ending the partnership immediately. They will continue to support and sell the RTX 30 series cards until all their stocks are sold. EVGA will also keep parts on hand for replacement and warranty purposes. Once all are consumed, they are finished with the graphics card business.

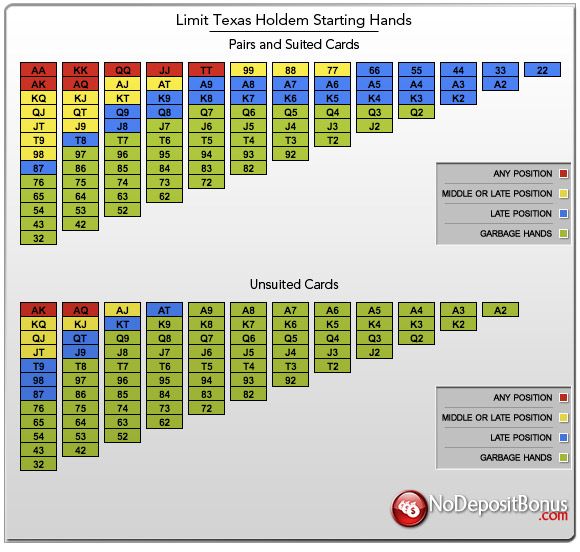

Best RTX 3080 Ti Graphics Cards Today

All GeForce RTX 3080 Ti graphics cards on the market are powered by the same 8nm GA102 GPU based on the Ampere architecture. It has 10240 CUDA cores, 320 TMUs, 112 ROPs, and 12GB of GDDR6X memory running on a 384-bit memory interface. These specifications are common to all RTX 3080 Ti graphics cards.

The main difference lies in the cooler design and PCB design. Some AIB partners advertise or highlight the boost clock speed of their graphics cards. But at the end of the day, all of them will boost higher than the rated clock thanks to NVIDIA’s GPU Boost technology. The graphics card only needs sufficient power and enough cooling to keep the GPU’s temperature in check. With those conditions met, the GPU will boost higher.

Below is a summary of all the graphics cards mentioned in this list. It is in alphabetical order. Later in the article, I’ll be categorizing them according to their (unique) features. I’ll also show you some performance numbers and answer some frequently asked questions regarding the RTX 3080 Ti GPU.

| Model | Boost Clock | Cooler Design | PCI Slots | Power Connectors | Availability |

|---|---|---|---|---|---|

| ASUS ROG Strix GeForce RTX 3080 Ti OC Edition | 1815 MHz | Tri-fan air cooling | 2.9 | 3x 8-pin | Check here |

| ASUS ROG Strix LC GeForce RTX 3080 Ti OC Gaming | 1860 MHz | Hybrid (Liquid + Air) | 2.6 | 3x 8-pin | Check here |

| ASUS TUF Gaming GeForce RTX 3080 Ti OC Edition | 1785 MHz | Tri-fan air cooling | 2.7 | 2x 8-pin | Check here |

| EVGA GeForce RTX 3080 Ti FTW3 Ultra Gaming | 1800 MHz | Tri-fan air cooling | 2.75 | 3x 8-pin | Check here |

| EVGA GeForce RTX 3080 Ti XC3 Ultra Gaming | 1725 MHz | Tri-fan air cooling | 2.2 | 2x 8-pin | Check here |

| EVGA GeForce RTX 3080 Ti XC3 Ultra Hybrid Gaming | 1725 MHz | Hybrid (Liquid + Air) | 2 | 2x 8-pin | Check here |

| Gigabyte Aorus GeForce RTX 3080 Ti Master 12G | 1770 MHz | Tri-fan air cooling | 3.5 | 3x 8-pin | Check here |

| Gigabyte GeForce RTX 3080 Ti Gaming OC 12G | 1710 MHz | Tri-fan air cooling | 2.7 | 2x 8-pin | Check here |

| Gigabyte GeForce RTX 3080 Ti Vision OC 12G | 1710 MHz | Tri-fan air cooling | 2.7 | 2x 8-pin | Check here |

| MSI GeForce RTX 3080 Ti Gaming X Trio 12G | 1770 MHz | Tri-fan air cooling | 2.7 | 3x 8-pin | Check here |

| MSI GeForce RTX 3080 Ti Ventus 3X 12G OC | 1695 MHz | Tri-fan air cooling | 2.7 | 2x 8-pin | Check here |

| MSI GeForce RTX 3080TI SUPRIM X 12G | 1830 MHz | Tri-fan air cooling | 2.75 | 3x 8-pin | Check here |

| NVIDIA GeForce RTX 3080 Ti Founders Edition | 1670 MHz | Dual Fan Flow-Through | 2 | 2x 8-pin | Check here |

GeForce RTX 3080 Ti Performance

To give you an idea of how fast the RTX 3080 Ti is, here are some performance benchmarks at 4K resolution with the maximum or ultra graphics preset. Note that some RTX 3080 Ti cards will perform slightly faster than others, and some will be slightly slower. GPU cooling, power, and the silicon lottery are also at play. But at the end of the day, all of the RTX 3080 Ti cards will perform within the same range. Most of the time, the difference is minimal and negligible. NVIDIA controls how these GPUs perform.

RTX 3080 Ti FAQs

What RTX 3080 Ti has the best cooler and great cooling performance?

From the list, the Asus ROG Strix LC and EVGA XC3 Ultra Hybrid definitely have the better cooling solutions. The GPU is liquid-cooled, while the other components are air-cooled. Unfortunately, they are costly compared to air-cooled RTX 3080 Ti cards. The good news is that most RTX 3080 Ti cards with a tri-fan cooling system will offer anywhere from sufficient to excellent cooling performance.

What RTX 3080 Ti is the fastest out of the box?

Out of the box, the Asus ROG Strix LC RTX 3080 Ti, EVGA RTX 3080 Ti FTW3, and MSI RTX 3080 Ti SUPRIM X have the highest boost clock speeds. They come with factory overclock settings. But as I mentioned earlier, if the graphics card has enough power and the GPU is sufficiently cooled, it will boost higher. My MSI Suprim X can reach around 2,000MHz without additional tweaking or manual overclocking.

Is it worth it to buy an expensive RTX 3080 Ti graphics card?

Yes and no. It depends on how you define “worth”. Some people are okay with spending more on design, looks, and aesthetics. But even a liquid-cooled RTX 3080 Ti will not perform significantly better than an air-cooled one. It will certainly have an advantage since it has a better cooling solution. But is it worth it for you?

Most of the time, even the simplest RTX 3080 Ti, like the NVIDIA RTX 3080 Ti Founders Edition, is already enough.

What’s the best CPU for the RTX 3080 Ti?

Generally speaking, you can pair the RTX 3080 Ti with any CPU. But if you are using a slower or entry-level CPU, its performance may be poor. So, since you are willing to spend thousands of dollars for an RTX 3080 Ti, it would only be wise not to skimp on the CPU.

I would recommend AMD’s Ryzen 9 5950X, 5900X, or Ryzen 7 5800X. For Intel, the latest 12th Gen Intel Core CPUs, like the Core i9-12900(K/F) and Core i7-12700(K/F) are currently some of the best in the market, especially in gaming. The older 10th gen and 11th gen CPUs are not bad either. But if you’re building a new system, I recommend that you jump to the latest 12th Gen Intel CPU since the performance difference from its predecessor is substantial.

Should I get an RTX 3080 Ti if I have a 1080p monitor?

No, definitely not! As we experienced in our RTX 3080 review, the GPU is bottlenecked at 1080p resolution. There’s performance left on the table, and the card is simply not designed for lower resolutions.

What’s the best monitor for the RTX 3080 Ti?

4K (2160p) and 2K (1440p) would be the ideal resolutions for the RTX 3080 Ti. You can check out our article on the best 4K gaming monitors here.

So, which RTX 3080 Ti should I get?

The RTX 3080 Ti graphics cards listed here are all good. Which one you pick really comes down to your preference and your budget.

Best GeForce RTX 3080 Ti Graphics Cards in General

EVGA GeForce RTX 3080 Ti FTW3 Ultra Gaming

EVGA’s RTX 3080 Ti FTW3 Ultra Gaming is a beast of a graphics card. It offers a higher factory overclock setting and a thick, substantial aluminum heatsink to cool the GPU and VRAM. The heatsink is cooled by three fans, and there is RGB lighting on the front-side portion.

EVGA RTX 3080 Ti FTW3 Ultra Gaming available on Amazon.com here

For UK, check at Amazon UK here

Asus ROG Strix GeForce RTX 3080 Ti OC Edition

Aside from the EVGA FTW3 Ultra graphics cards, Asus’ ROG Strix series is also popular. And the Asus ROG Strix GeForce RTX 3080 Ti OC Edition is no slouch when it comes to being one of the best 3080 Ti cards on the market. It has a 2.9-slot design with three Axial-tech fans and nice-looking RGB lighting, and its aesthetics are simply a head-turner. It also feels premium in the hand, and its cooling solution is quite effective.

Asus ROG Strix RTX 3080 Ti OC Edition check on Amazon.com here.

MSI GeForce RTX 3080TI SUPRIM X 12G

Just like the EVGA FTW3, the MSI RTX 3080 Ti SUPRIM X is also a beast of a graphics card. It also requires three 8-pin power connectors and features a 20-phase power design. There’s a switch near the power connector that toggles between “silent” and “gaming” mode. Finally, it has some RGB lighting and this card feels heavy and premium on hand as well.

MSI GeForce RTX 3080 Ti SUPRIM X available on Amazon.com here.

For UK, check at Amazon UK here.

NVIDIA GeForce RTX 3080 Ti Founders Edition

The NVIDIA GeForce RTX 3080 Ti Founders Edition is one of the best RTX 3080 Ti cards on the market. Not only does it come at the base price, but it’s also professional-looking, sleek, and clean. The flow-through design is excellent at cooling the GPU and other components. As expected from a Founders Edition, it does feel premium in the hand.

NVIDIA RTX 3080 Ti Founders Edition available on Amazon.com here

For UK, check at Amazon UK here

Gigabyte Aorus GeForce RTX 3080 Ti Master 12G

This is definitely the “thiccest” RTX 3080 Ti on this list. The Aorus RTX 3080 Ti Master has three fans, with the middle one spinning in the opposite direction. It also has RGB lighting, but the unique features of the RTX 3080 Ti Master are its dual BIOS, six output ports, and the (mini) LCD display near the rear end of the card. Aside from displaying the GPU temperature, users can upload a GIF and customize what is displayed on the screen.

Aorus GeForce RTX 3080 Ti Master 12G available on Amazon.com here

For UK, check on Amazon UK here.

Also Good RTX 3080 Ti Graphics Card Alternatives

Below are more RTX 3080 Ti graphics cards that are not as premium as the cards mentioned above but are generally cheaper and good options as well. These three feature tri-fan cooling solutions and perform as expected. The Gigabyte Vision is the only card here that stands out, since it has an all-white color scheme. Just choose the one you prefer when it comes to aesthetics.

- MSI GeForce RTX 3080 Ti Gaming X Trio 12G available on Amazon.com here or Amazon UK here

- Gigabyte GeForce RTX 3080 Ti Gaming OC 12G available on Amazon.com here

- Gigabyte GeForce RTX 3080 Ti Vision OC 12G available on Amazon.com here

Best RTX 3080 Ti with No (RGB) Lighting

Understandably, not all of us are fans of RGB lighting. Some users don’t like RGB lighting or any kind of lighting at all. While you can disable the RGB lighting on the graphics cards mentioned above, here are three RTX 3080 Ti cards that don’t have RGB lighting. The only exception is the Asus TUF RTX 3080 Ti, since the TUF logo on the rear end has a small amount of RGB lighting. Nevertheless, all of these cards feature an all-black color scheme.

- ASUS TUF Gaming GeForce RTX 3080 Ti OC Edition available on Amazon.com here or Amazon UK here

- EVGA GeForce RTX 3080 Ti XC3 Ultra Gaming available on Amazon.com here

- MSI GeForce RTX 3080 Ti Ventus 3X 12G OC available on Amazon.com here

Best Water-cooled RTX 3080 Ti Graphics Cards

For those who don’t mind spending more and simply want the coolest-running and best-performing RTX 3080 Ti, these two liquid-cooled cards are your options.

ASUS ROG Strix LC GeForce RTX 3080 Ti OC Edition Gaming

The Asus ROG Strix LC RTX 3080 Ti OC features a hybrid cooling solution. Its GPU is cooled by a 240mm radiator and the tube connecting the radiator and GPU copper plate is 560mm long. It also has RGB lighting on the fans and on the graphics card itself. In addition to the liquid cooling, there’s a blower-type fan that cools the aluminum heatsink that is in contact with the VRM and other components.

ASUS ROG STRIX LC RTX 3080 Ti OC available on Amazon.com here.

For UK, check on Amazon UK here.

EVGA GeForce RTX 3080 Ti XC3 Ultra Hybrid Gaming

EVGA’s RTX 3080 Ti XC3 Ultra Hybrid is somewhat similar to Asus’ ROG Strix LC. But the XC3 Ultra Hybrid doesn’t have head-turning RGB lighting. Only the logos on its side and at the back have RGB lighting. It generally has an all-black aesthetic, including the two 120mm fans cooling the radiator.

And unlike Asus’ liquid-cooled RTX 3080 Ti, the EVGA RTX 3080 Ti XC3 Ultra Hybrid occupies only two PCI slots and requires only two 8-pin PCIe power connectors.

EVGA RTX 3080 Ti XC3 Ultra Hybrid check on Amazon.com here

For UK, check on Amazon UK here.

There you have it. Which do you think is the best RTX 3080 Ti graphics card for your use case? If you think the RTX 3080 Ti is too expensive and out of your budget, you may be interested in checking out some of the best RTX 3080 graphics cards instead. On the other hand, AMD’s flagship Radeon RX 6900 XT is also a 4K-capable graphics card, not to mention that it is cheaper than the RTX 3080 Ti. Check out some of the best RX 6900 XT cards here.

| NVIDIA GeForce RTX 3080 Ti |

Ravencoin NVIDIA GeForce RTX 3080 Ti Payback 74mo. Hashrate 58.9 Mh/s Mining Profit 24h 0.54 $ 15.21 RVN |

1 200 $ | 0.54 $15.21 RVN | 74mo. | KAWPOW | 58.9 Mh/s | RavencoinPools listed: 3 |

| GPU | Price | Mining profit (24h) | Payback | Algorithm | Hashrate | Coin | Pools listed |
| --- | --- | --- | --- | --- | --- | --- | --- |
| NVIDIA GeForce RTX 3090 | $1,500 | $0.48 (13.43 RVN) | 105 mo. | KAWPOW | 52 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 3080 | $860 | $0.43 (12.14 RVN) | 66 mo. | KAWPOW | 47 MH/s | Ravencoin | 3 |
| AMD Radeon VII | $1,000 (used) | $0.39 (10.85 RVN) | 87 mo. | KAWPOW | 42 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 3070 Ti | $780 | $0.37 (10.33 RVN) | 71 mo. | KAWPOW | 40 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 2080 Ti | $700 | $0.33 (9.299 RVN) | 71 mo. | KAWPOW | 36 MH/s | Ravencoin | 3 |
| AMD Radeon RX 6800 | $790 | $0.30 (8.447 RVN) | 88 mo. | KAWPOW | 32.7 MH/s | Ravencoin | 3 |
| AMD Radeon RX 6800XT | $800 | $0.30 (8.447 RVN) | 89 mo. | KAWPOW | 32.7 MH/s | Ravencoin | 3 |
| AMD Radeon RX 6900XT | $900 | $0.30 (8.447 RVN) | 100 mo. | KAWPOW | 32.7 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 3060 Ti | $530 | $0.28 (7.879 RVN) | 63 mo. | KAWPOW | 30.5 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 3070 | $660 | $0.28 (7.827 RVN) | 79 mo. | KAWPOW | 30.3 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 2080 | $600 | $0.28 (7.749 RVN) | 73 mo. | KAWPOW | 30 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1080 Ti | $250 (used) | $0.23 (6.458 RVN) | 36 mo. | KAWPOW | 25 MH/s | Ravencoin | 3 |
| AMD Radeon Vega 64 | $450 | $0.23 (6.458 RVN) | 65 mo. | KAWPOW | 25 MH/s | Ravencoin | 3 |
| AMD Radeon Vega 56 | $350 | $0.23 (6.458 RVN) | 51 mo. | KAWPOW | 25 MH/s | Ravencoin | 3 |
| AMD Radeon RX 5700 | $390 | $0.23 (6.406 RVN) | 57 mo. | KAWPOW | 24.8 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 2070 Super | $520 | $0.22 (3.010 AE) | 78 mo. | CuckooCycle | 8.22 Gps | Aeternity | 2 |
| NVIDIA GeForce RTX 3060 | $440 | $0.21 (5.864 RVN) | 70 mo. | KAWPOW | 22.7 MH/s | Ravencoin | 3 |
| AMD Radeon RX 6700XT | $490 | $0.21 (5.864 RVN) | 78 mo. | KAWPOW | 22.7 MH/s | Ravencoin | 3 |
| NVIDIA GeForce RTX 2070 | $500 | $0.20 (2.747 AE) | 82 mo. | CuckooCycle | 7.5 Gps | Aeternity | 2 |
| NVIDIA GeForce RTX 2060 Super | $280 | $0.20 (5.735 RVN) | 46 mo. | KAWPOW | 22.2 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1070 Ti | $180 (used) | $0.17 (2.271 AE) | 36 mo. | CuckooCycle | 6.2 Gps | Aeternity | 2 |
| NVIDIA GeForce GTX 1080 | $200 (used) | $0.16 (2.197 AE) | 41 mo. | CuckooCycle | 6 Gps | Aeternity | 2 |
| NVIDIA P104-100 | $170 (used) | $0.16 (2.161 AE) | 35 mo. | CuckooCycle | 5.9 Gps | Aeternity | 2 |
| NVIDIA GeForce RTX 2060 | $260 | $0.16 (2.161 AE) | 54 mo. | CuckooCycle | 5.9 Gps | Aeternity | 2 |
| NVIDIA GeForce GTX 1070 | $160 (used) | $0.14 (0.007 BTG) | 38 mo. | Equihash 144_5 | 55.5 Sol/s | Bitcoin Gold | 7 |
| AMD Radeon RX 5600XT | $280 | $0.14 (3.890 RVN) | 68 mo. | KAWPOW | 15.06 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1660 Ti | $270 | $0.14 (3.875 RVN) | 65 mo. | KAWPOW | 15 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1660 Super | $240 | $0.13 (3.668 RVN) | 61 mo. | KAWPOW | 14.2 MH/s | Ravencoin | 3 |
| AMD Radeon RX 6600XT | $300 | $0.12 (3.358 RVN) | 84 mo. | KAWPOW | 13 MH/s | Ravencoin | 3 |
| AMD Radeon RX 590 | $180 (used) | $0.11 (3.100 RVN) | 54 mo. | KAWPOW | 12 MH/s | Ravencoin | 3 |
| AMD Radeon RX 580 | $160 (used) | $0.10 (2.841 RVN) | 53 mo. | KAWPOW | 11 MH/s | Ravencoin | 3 |
| AMD Radeon RX 480 | $110 (used) | $0.10 (2.841 RVN) | 36 mo. | KAWPOW | 11 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1660 | $220 | $0.10 (1.355 AE) | 73 mo. | CuckooCycle | 3.7 Gps | Aeternity | 2 |
| AMD Radeon RX 570 | $150 (used) | $0.09 (2.583 RVN) | 54 mo. | KAWPOW | 10 MH/s | Ravencoin | 3 |
| AMD Radeon RX 470 | $100 (used) | $0.09 (2.583 RVN) | 36 mo. | KAWPOW | 10 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1060 | $120 (used) | $0.09 (0.004 BTG) | 46 mo. | Equihash 144_5 | 35 Sol/s | Bitcoin Gold | 7 |
| NVIDIA GeForce GTX 1650 | $210 | $0.07 (2.092 RVN) | 94 mo. | KAWPOW | 8.1 MH/s | Ravencoin | 3 |
| NVIDIA GeForce GTX 1050 Ti | $100 (used) | $0.06 (1.808 RVN) | 52 mo. | KAWPOW | 7 MH/s | Ravencoin | 3 |
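The payback figures in the listing above look roughly consistent with dividing the card price by the 24-hour profit and converting days to months. The following is a minimal sketch of that arithmetic, not the listing site's actual method: the function name `payback_months` and the 30-day month are assumptions, and the listing does not say whether its profit figures already account for electricity, so the sketch uses them as given. Small differences from the listed months are expected because the listed profit is rounded to cents.

```python
def payback_months(price_usd: float, profit_per_day_usd: float,
                   days_per_month: float = 30.0) -> float:
    """Estimate months needed for daily mining profit to repay the card price.

    Assumption: profit stays constant and a month has `days_per_month` days.
    """
    if profit_per_day_usd <= 0:
        raise ValueError("daily profit must be positive to ever pay back")
    return price_usd / profit_per_day_usd / days_per_month


# Price and 24h profit values copied from the listing.
cards = [
    ("NVIDIA GeForce RTX 3080 Ti", 1200, 0.54),
    ("NVIDIA GeForce RTX 3060 Ti", 530, 0.28),
    ("NVIDIA GeForce GTX 1080 Ti (used)", 250, 0.23),
]

for name, price, profit in cards:
    print(f"{name}: about {payback_months(price, profit):.1f} months")
```

Under these assumptions, even the fastest-repaying cards in the listing need about three years of uninterrupted mining at constant profit to break even.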

List-table of Nvidia GeForce video cards


Here is a detailed list-table of Nvidia GeForce video cards. The newest models are listed at the top, the oldest at the bottom.

List table of Nvidia GeForce(GF) RTX4000 Series video cards

Nvidia GeForce RTX4000 video cards are based on 5nm Ada Lovelace architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| RTX4090 | AD102-300 | 2235MHz / 2520MHz | 24GB GDDR6X | 4.0 | 384 | 16384 | 82.6 TFLOPs | 450 |
| RTX4080 | AD103-300 | 2205MHz / 2508MHz | 16GB GDDR6X | 4.0 | 256 | 9728 | 48.8 TFLOPs | 320 |
| RTX4080 12GB | AD104-400 | 2310MHz / 2610MHz | 12GB GDDR6X | 4.0 | 192 | 7680 | 40.1 TFLOPs | 285 |

List table of Nvidia GeForce(GF) RTX3000 Series video cards

Nvidia GeForce RTX3000 video cards are based on the 8nm Ampere architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| RTX3090Ti | GA102-350 | 1560MHz / 1860MHz | 24GB GDDR6X | 4.0 | 384 | 10752 | 40 TFLOPs | 450 |
| RTX3090 | GA102-300 | 1395MHz / 1695MHz | 24GB GDDR6X | 4.0 | 384 | 10496 | 35.6 TFLOPs | 350 |
| RTX3080Ti | GA102-225 | 1365MHz / 1665MHz | 12GB GDDR6X | 4.0 | 384 | 10240 | 34.1 TFLOPs | 350 |
| RTX3080 | GA102-200 | 1440MHz / 1710MHz | 10GB GDDR6X | 4.0 | 320 | 8704 | 29.8 TFLOPs | 320 |
| RTX3070Ti | GA104-400 | 1575MHz / 1770MHz | 8GB GDDR6X | 4.0 | 256 | 6144 | 21.75 TFLOPs | 290 |
| RTX3070 | GA104-300 | 1500MHz / 1725MHz | 8GB GDDR6 | 4.0 | 256 | 5888 | 20.3 TFLOPs | 220 |
| RTX3060Ti | GA104-200 | 1410MHz / 1665MHz | 8GB GDDR6 | 4.0 | 256 | 4864 | 16.2 TFLOPs | 200 |
| RTX3060 | GA106-300 | 1320MHz / 1777MHz | 12GB GDDR6 | 4.0 | 192 | 3584 | 12.7 TFLOPs | 170 |
| RTX3050 | GA106-150 | 1552MHz / 1777MHz | 8GB GDDR6 | 4.0 | 128 | 2560 | 9.1 TFLOPs | 130 |
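The FP32 column in these tables can be cross-checked against the other columns. As a rough sketch, peak FP32 throughput is commonly estimated as two floating-point operations (one fused multiply-add) per CUDA core per clock at the boost frequency. The helper name `fp32_tflops` is hypothetical, and the result is a theoretical peak rather than measured performance.

```python
def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPs.

    Assumption: each CUDA core retires one fused multiply-add
    (counted as 2 floating-point operations) per clock at boost frequency.
    """
    flops_per_core_per_clock = 2
    # cores * MHz gives millions of core-cycles per second; divide by 1e6 for tera.
    return flops_per_core_per_clock * cuda_cores * boost_mhz / 1e6


# CUDA core counts and boost clocks taken from the RTX3000 table.
for name, cores, boost in [
    ("RTX3090", 10496, 1695),
    ("RTX3080", 8704, 1710),
    ("RTX3070", 5888, 1725),
]:
    print(f"{name}: {fp32_tflops(cores, boost):.1f} TFLOPs")
```

The same check is a quick way to spot rows where a clock speed or core count was copied from a neighboring row, since the computed figure will no longer match the listed one.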

List table of Nvidia GeForce(GF) RTX2000 Series video cards

Nvidia GeForce RTX2000 video cards are based on 12nm Turing architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| RTX2080Ti | TU102-300 | 1350MHz / 1545MHz | 11GB GDDR6 | 3.0 | 352 | 4352 | 13.4 TFLOPs | 250 |
| RTX2080 Super | TU104-450 | 1650MHz / 1815MHz | 8GB GDDR6 | 3.0 | 256 | 3072 | 11.1 TFLOPs | 250 |
| RTX2080 | TU104-400 | 1515MHz / 1710MHz | 8GB GDDR6 | 3.0 | 256 | 2944 | 10.1 TFLOPs | 215 |
| RTX2070 Super | TU104-410 | 1605MHz / 1770MHz | 8GB GDDR6 | 3.0 | 256 | 2560 | 9.1 TFLOPs | 215 |
| RTX2070 | TU106-400 | 1410MHz / 1620MHz | 8GB GDDR6 | 3.0 | 256 | 2304 | 7.5 TFLOPs | 175 |
| RTX2060 Super | TU106-410 | 1470MHz / 1650MHz | 8GB GDDR6 | 3.0 | 256 | 2176 | 7.2 TFLOPs | 175 |
| RTX2060 12GB | TU106-300 | 1470MHz / 1650MHz | 12GB GDDR6 | 3.0 | 192 | 2176 | 7.2 TFLOPs | 185 |
| RTX2060 | TU106-300 | 1365MHz / 1680MHz | 6GB GDDR6 | 3.0 | 192 | 1920 | 6.5 TFLOPs | 160 |

List table of Nvidia GeForce(GF) GTX1600 Series video cards

Nvidia GeForce GTX1600 video cards are based on 12nm Turing architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GTX1660Ti | TU116-400 | 1500MHz / 1770MHz | 6GB GDDR6 | 3.0 | 192 | 1536 | 5.4 TFLOPs | 120 |
| GTX1660 Super | TU116-300 | 1530MHz / 1785MHz | 6GB GDDR6 | 3.0 | 192 | 1408 | 5.1 TFLOPs | 125 |
| GTX1660 | TU116-300 | 1530MHz / 1785MHz | 6GB GDDR5 | 3.0 | 192 | 1408 | 5.0 TFLOPs | 120 |
| GTX1650 Super | TU116-250 | 1530MHz / 1725MHz | 4GB GDDR6 | 3.0 | 128 | 1280 | 4.4 TFLOPs | 100 |
| GTX1650 | TU117-300 | 1485MHz / 1665MHz | 4GB GDDR5 | 3.0 | 128 | 896 | 3.0 TFLOPs | 75 |
| GTX1630 | TU117-150 | 1740MHz / 1785MHz | 4GB GDDR6 | 3.0 | 64 | 512 | 1.83 TFLOPs | 75 |

List table of Nvidia GeForce(GF) GTX1000 Series video cards

Nvidia GeForce GTX1000 video cards are based on 16nm Pascal architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GTX1080Ti | GP102-350 | 1480MHz / 1582MHz | 11GB GDDR5X | 3.0 | 352 | 3584 | 11.3 TFLOPs | 250 |
| GTX1080 | GP104-400 | 1607MHz / 1733MHz | 8GB GDDR5X | 3.0 | 256 | 2560 | 8.9 TFLOPs | 180 |
| GTX1070Ti | GP104-300 | 1607MHz / 1683MHz | 8GB GDDR5 | 3.0 | 256 | 2432 | 8.2 TFLOPs | 180 |
| GTX1070 | GP104-200 | 1506MHz / 1683MHz | 8GB GDDR5 | 3.0 | 256 | 1920 | 6.5 TFLOPs | 150 |
| GTX1060 6GB | GP106-400 | 1506MHz / 1708MHz | 6GB GDDR5 | 3.0 | 192 | 1280 | 4.4 TFLOPs | 120 |
| GTX1060 5GB | PG410 | 1506MHz / 1708MHz | 5GB GDDR5 | 3.0 | 160 | 1280 | 4.3 TFLOPs | 120 |
| GTX1060 3GB | GP106-300 | 1506MHz / 1708MHz | 3GB GDDR5 | 3.0 | 192 | 1152 | 3.9 TFLOPs | 120 |
| GTX1050Ti | GP107-400 | 1291MHz / 1392MHz | 4GB GDDR5 | 3.0 | 128 | 768 | 2.1 TFLOPs | 75 |
| GTX1050 3GB | PG210 | 1392MHz / 1518MHz | 3GB GDDR5 | 3.0 | 128 | 768 | 2.3 TFLOPs | 75 |
| GTX1050 | GP107-300 | 1354MHz / 1455MHz | 2GB GDDR5 | 3.0 | 128 | 640 | 1.9 TFLOPs | 75 |
| GT1030 | GP108-300 | 1227MHz / 1468MHz | 2GB GDDR5 | 3.0 | 64 | 384 | 1.1 TFLOPs | 30 |

List table of Nvidia GeForce(GF) GTX900 Series video cards

Nvidia GeForce GTX900 video cards are based on 28nm Maxwell architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GTX980Ti | GM200-310 | 1000MHz / 1076MHz | 6GB GDDR5 | 3.0 | 384 | 2816 | 6.1 TFLOPs | 250 |
| GTX980 | GM204-400 | 1126MHz / 1216MHz | 4GB GDDR5 | 3.0 | 256 | 2048 | 5.0 TFLOPs | 165 |
| GTX970 | GM204-200 | 1051MHz / 1178MHz | 3.5GB GDDR5 | 3.0 | 224 | 1664 | 3.9 TFLOPs | 145 |
| GTX960 | GM206-300 | 1127MHz / 1178MHz | 2GB GDDR5 | 3.0 | 128 | 1024 | 2.4 TFLOPs | 120 |
| GTX950 | GM206-250 | 1024MHz / 1188MHz | 2GB GDDR5 | 3.0 | 128 | 768 | 1.8 TFLOPs | 90 |

List table of Nvidia GeForce(GF) GTX700 Series video cards

Nvidia GeForce GTX700 video cards are based on 28nm Kepler architecture.

| GeForce | GPU Name | Base / Boost clock | Memory | PCIe | Bus width (bits) | CUDA Cores | FP32 | TDP (W) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GTX780Ti | GK110-425 | 875MHz / 928MHz | 3GB GDDR5 | 3.0 | 384 | 2880 | 5.4 TFLOPs | 250 |
| GTX780 | GK110-300 | 863MHz / 900MHz | 3GB GDDR5 | 3.0 | 384 | 2304 | 4.2 TFLOPs |