BFG Tech GeForce 6600 GT OC (Solo and SLI)

Bjorn3D.com Reviewer

March 31, 2005

Hardware, Reviews & Articles


One of the coolest things you can offer customers is a warrantied overclock, and BFG continues to do just that with its “OC” line of video cards. Check out this review of BFG’s 6600 GT OC; I cover the performance of the card by itself and in SLI mode. See how much of a difference the slight overclock makes when compared to a competitor’s non-overclocked card.

INTRODUCTION

With its lifetime warranty, it’s easy to recommend the BFG Tech brand of video cards to people who ask for purchasing advice. A warranty alone isn’t enough to sell a product, though. BFG also consistently offers great performance, and often top performance, thanks to its “OC” line of graphics cards, which ship with warrantied out-of-the-box overclocked speeds.

Today, I’m going to take a look at BFG’s entry-level SLI-capable card, the 6600 GT OC. This card comes with a 25MHz overclock on both the core and memory clocks. It is also a dual-DVI card with HDTV support. Read on to see how the card performs by itself and teamed up with a twin for some SLI action.

FEATURES, SPECIFICATIONS and BUNDLE

Features

- PCI Express™

- NVIDIA SLI multi-GPU ready

- Superscalar GPU architecture

- NVIDIA CineFX 3.0 engine

- High Speed GDDR3 memory interface

- Microsoft DirectX 9.0 Shader Model 3.0 support

Specifications

- BFG GeForce™ 6600 GT OC

- PCI Express™

- Memory 128MB GDDR3

- Core Clock 525MHz (vs. 500MHz standard)

- Memory Clock 1050MHz (vs. 1000MHz standard)

- RAMDAC Dual 400MHz

- Microsoft® DirectX® 9.0 and lower, OpenGL 1.5 and lower for Microsoft® Windows®

- Connectors Dual DVI-I, HDTV

- S-Video Out

- 393 million vertices/sec setup

- 16.8GB/second memory bandwidth
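
The 16.8GB/second figure in the list above can be sanity-checked from the memory clock. The sketch below assumes a 128-bit memory bus, which is not stated in BFG's specification list, and the function name `memory_bandwidth_gb_s` is made up for illustration.

```python
def memory_bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8           # bus width in bytes
    transfers_per_second = effective_clock_mhz * 1e6  # effective (DDR) clock
    return transfers_per_second * bytes_per_transfer / 1e9


# Assumption: 128-bit memory bus (not listed in the specifications above).
BUS_WIDTH_BITS = 128

oc_bandwidth = memory_bandwidth_gb_s(1050, BUS_WIDTH_BITS)     # BFG OC memory clock
stock_bandwidth = memory_bandwidth_gb_s(1000, BUS_WIDTH_BITS)  # standard memory clock

print(f"OC card:    {oc_bandwidth:.1f} GB/s")
print(f"Stock card: {stock_bandwidth:.1f} GB/s")
```

Under that assumption, the listed bandwidth follows directly from the 1050MHz memory clock, and the extra 50MHz accounts for the whole difference from a standard card.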

Bundle

- BFG GeForce™ 6600 GT OC graphics card

- Quick install manual

- DVI-I to VGA dongle x 2

- HDTV / Video-Out Cable

- Driver CD, which includes:

- NVIDIA® ForceWare™ unified graphics drivers

- NVIDIA® demos

- Full installation manual .pdf

- NVIDIA® NVDVD™ 2.0 multimedia software

- Windowblinds™ BFG/NVIDIA® skins

The most exciting thing about the BFG 6600 GT OC is that you get a fully warrantied overclock out of the box, which means you know you are getting better performance than any of the competition’s stock 6600 GT’s. The bundle is not very exciting, although BFG does throw in NVDVD 2.0 and some Windowblinds BFG/NVIDIA skins. Many of us would like to see a good game or two in a bundle, but BFG typically does not follow that path. Rather, the company’s focus is performance, hence the entire “OC” line of cards.


CLOSER LOOK

The BFG 6600 GT OC is noticeably heavier than the Leadtek WinFast PX6600 GT that I just reviewed due to all the copper used for the cooler. Copper weighs more than aluminum, and the Leadtek 6600 GT’s cooler is all aluminum. I think the copper actually looks a bit more distinctive, especially on BFG’s typical blue PCB. As you can see below, copper covers every chip on the board. Additionally, the cooler moves air with a nice-looking clear fan.

Since it is the PCI Express version, this BFG 6600 GT OC is SLI multi-GPU ready. It also features 128MB of GDDR3 and support for Shader Model 3.0. BFG opted to go with the dual DVI layout instead of the more common VGA + DVI configuration. That’s a good choice considering the continual increase in LCD monitor popularity. Never fear though if you have no use for DVI connections; BFG includes two DVI-to-VGA adapters in the box.

In addition to the blue PCB and copper cooler to provide good looks, the BFG 6600 GT OC also features a blue LED to light up your case. You can check out the pictures below to get an idea of how bright the LED is. In the pictures, you can see what the SLI setup looks like.


TEST SYSTEM, OVERCLOCKING and BENCHMARKS

Test System

My test system features the DFI LANPARTY NF4 SLI-DR, which I recently reviewed, and an Athlon 64 3200+. I’ve also included a gig of RAM and used Windows XP SP2.


The result charts on the following pages will show you how the BFG 6600 GT OC performs in single and dual-GPU mode compared to the following video card configurations: single Leadtek 6600 GT, dual Leadtek 6600 GT’s in SLI, and a single reference NVIDIA GeForce 6800 GT. I have also included the percentage difference between a single 6600 GT OC’s performance and the 6600 GT OC SLI’s performance on the charts. Here are the components that make up my test system.


Overclocking

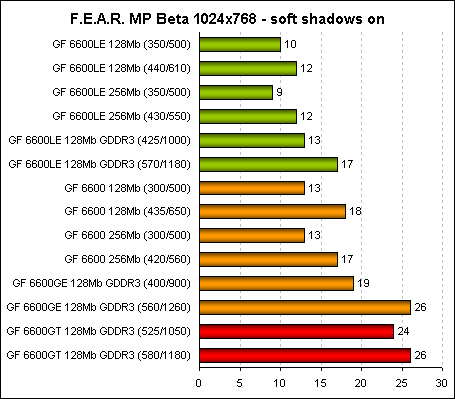

When you buy an “OC” card from BFG, you get overclocking right out of the box. In the case of the 6600 GT OC, you get an additional 25MHz over stock on both the core and memory clock. Rather than the reference clocks of 500MHz for the core and 500MHz (1.0GHz effective GDDR3) for the memory, the 6600 GT OC runs at 525MHz for the core and 525MHz (1.05GHz effective GDDR3) for the memory. BFG’s overclock is typically on the conservative side, so I figured I could get some more out of this card. I was able to overclock the 6600 GT OC to 580MHz core (16% higher than the 500MHz reference) and 560MHz/1120MHz memory (12% higher than the 500MHz reference). The performance increase that resulted from this overclock was between 4% and 11%.
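
The percentages quoted above are easy to reproduce. The following is only a sketch: the helper name `percent_over` is made up for illustration, and the clocks are the ones quoted in this section.

```python
def percent_over(base_mhz: float, new_mhz: float) -> float:
    """Return how far new_mhz sits above base_mhz, as a percentage."""
    return (new_mhz - base_mhz) / base_mhz * 100.0


# Reference core clock and (pre-DDR) memory clock quoted in the review.
REFERENCE_MHZ = 500

clocks_mhz = {
    "BFG factory core/memory": 525,
    "manual core overclock": 580,
    "manual memory overclock": 560,
}

for label, mhz in clocks_mhz.items():
    gain = percent_over(REFERENCE_MHZ, mhz)
    print(f"{label}: {mhz}MHz is {gain:.0f}% over {REFERENCE_MHZ}MHz")
```

Measured against BFG's own 525MHz factory clocks instead of the 500MHz reference, the same manual overclock is a smaller step: roughly 10% on the core and 7% on the memory.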

Benchmarks

- 3DMark03 v3.6.0 – default settings

- 3DMark05 v1.2.0 – default settings

- AquaMark3 – NoAA / NoAF and 4xAA / 8xAF, both with drivers set to application controlled

- Counter-Strike: Source – Video Stress Test – NoAA / 2xAF and 4xAA / 8xAF, both with highest details set in game with drivers set to application controlled

- Doom 3 1.1 (demo1) – NoAA / High Quality and 4xAA / High Quality, both with highest details set in game with drivers set to application controlled

- Far Cry 1.3 – NoAA / 1xAF and 4xAA / 4xAF, both with highest details set in game with drivers set to application controlled

Note about customer support:

I was having issues when running the test system with two 6600 GT OC’s in SLI mode. During benchmarks, the NVIDIA Sentinel warning system kicked in and indicated that not enough power was being fed to the cards. I checked the cards to make sure they were inserted fully, and I did some other minor trial-and-error troubleshooting. Nothing I did seemed to fix the problem, and I wasn’t worried about the power supply since this system has a 680W unit in it. I decided to just go ahead and call tech support at about 6:30pm to see if they had any reports of issues with the cards or maybe even the PSU or motherboard I was using. After a very short wait on hold, I talked to a very nice support person who suggested that I use Driver Cleaner and reinstall the drivers (he suggested the 71.90 drivers from guru3d.com). I tried the suggested steps but went with the 66.93 drivers instead since I needed to use those for the comparison to previous benchmarks. The Sentinel system hasn’t popped up again since then. It’s really great to have such quick and easy-to-use 24×7 tech support available after purchasing a product.


PERFORMANCE – 3DMARK

Futuremark’s 3DMark benchmarking applications need no introduction. Although many people question their relevance, their popularity and ease of use are undeniable. As long as people seem to love comparing their 3DMark results, I’ll probably include them in my reviews since they provide a good reference point.

Synthetic benchmarks paint SLI in a very good light, but we have to take these results with a grain of salt since they don’t directly indicate how the cards would perform in any particular game or game engine. You can get a good feel for how much of an edge the BFG 6600 GT OC has over the stock-clocked Leadtek card though.


PERFORMANCE – AQUAMARK3

The AquaMark3 benchmark is based on an actual game engine, and it can really stress even the most modern cards. I ran the benchmark with AA and AF disabled and also with 4xAA and 8xAF in the application and “Application Preference” set in the driver control panel.

Once again, the BFG card shows us what a little overclocking can do by outperforming the Leadtek card by around two frames per second across the board.


PERFORMANCE – SOURCE STRESS TEST

The Source Video Stress Test can be accessed within the Counter-Strike: Source game menu. The benchmark is rather short but involves rendering some very complex imagery. The final result is shown in frames per second (FPS). I ran the test with two different AA / AF settings in the game’s options — NoAA / 2xAF and 4xAA / 8xAF. Also, I selected high details for all quality options.

Not much is surprising here. The BFG SLI pair is the top performer overall with NoAA / 2xAF, and it competes closely with the 6800 GT when 4xAA / 8xAF is set.


PERFORMANCE – DOOM 3

While it can play fairly well on low-end systems at lower resolutions, Doom 3 can really punish a system if you crank up the details and resolution. I ran the included demo1 timedemo with quality set to high and AA turned off and also with quality set to high and AA set to 4x in the game.

Doom 3 is a game that definitely benefits from SLI, especially at higher resolutions and with AA and AF enabled. The BFG cards offer a slight advantage over the Leadtek 6600 GT, and the BFG SLI configuration overcomes the 6800 GT by an impressive amount.


PERFORMANCE – FAR CRY

Far Cry is currently one of the most pipeline-punishing PC games available. Playing at the highest resolution with eye candy maxed out and still getting playable frame rates is not really possible for even the most powerful systems and graphics cards. The demo I have chosen to use with Far Cry is the PCGH_VGA Timedemo from 3dcenter.

SLI seems to act kind of weird here for some reason. I’m not sure if it’s a Far Cry thing or if it’s just this test situation or a test system limitation. By itself though, the BFG 6600 GT OC offers a nice extra bit of performance over the Leadtek 6600 GT.


CONCLUSION

For the out-of-the-box warrantied overclock, you will have to pay a slight price premium over stock speed 6600 GT’s, but if you want one of the highest performing 6600 GT’s available, then BFG has you covered with its 6600 GT OC. It’s a great card with cool looks and a mediocre bundle. As always, though, I have to emphasize BFG’s lifetime warranty and 24×7 tech support. Those two things are very big selling points to me and offer a lot of comfort you just can’t get from many other companies.

Obviously since the 6600 GT OC offers great performance by itself, it will also provide some of the best performance numbers (if not the best) in SLI mode when you set up a pair of them on your SLI motherboard. If performance and support are your top concerns, then look no further than BFG. The “OC” line continues to offer top performance.


My main concern about the BFG 6600 GT OC was how hot it seems to run. Every time I checked the temperature in the NVIDIA driver control panel, the GPU was indicating a temperature between 60C and 75C (higher if I had just exited a game or benchmark). This is actually hotter than the Leadtek 6600 GT’s that I’ve referred to several times in this article. The all-copper solution is also quite a bit heavier than aluminum solutions.

Pros:

+ Lifetime warranty

+ 24×7 tech support

+ Top 6600 GT performance

+ No power connection required

+ SLI capable

+ Already overclocked

Cons:

– Copper cooler makes it relatively heavy for a small card

– Runs a little hot (although still in acceptable ranges)

Final Score: 9 out of 10 and the Bjorn3D Seal of Approval


NVIDIA’s GeForce 6600GT on AGP

Overclocking:

We took the time to overclock this video card, to give an idea of how much extra performance there is under the hood. As usual, this may not represent realistic clocks that could be achieved by all GeForce 6600GT’s on AGP, but it should give a good indication. We managed to overclock the video card from its default clock speeds up to 556/1100MHz, which isn’t too bad when you consider that the memory is only rated to 1000MHz — we’ve gained 200MHz on the memory clocks and a healthy 56MHz on the core.

The increase in clock speeds allowed us to increase the resolution in Doom 3, from 1280×1024 2xAA 8xAF to 1600×1200 0xAA 8xAF, while still delivering an average frame rate of around 50 frames per second, while also keeping a very playable minimum frame rate of 25 frames per second in our test system. We could now achieve a smooth and enjoyable gaming experience in Doom 3 at 1600×1200. Also, in Far Cry, it allowed us to increase the resolution to 1280×1024 with 4xAF at the same in-game detail settings that we used for our manual run through on this video card.


Final Thoughts:

We have slightly mixed feelings about the GeForce 6600GT on AGP at the moment, due to a number of rather telling issues that we came across. These issues fall under the heading of driver bugs: several of them cause poor rendering quality, and we see fixes for them as required.

The hitching issue that we discussed in our FarCry 1.3 evaluation is still there in the new ForceWare 66.93 drivers, which can prove to be an annoying problem. There is also the over-bright issue on the roof of the factory; we still feel that this doesn’t look quite right on either vendor’s video driver. Neither the last two versions of ATI’s Catalyst driver nor those of NVIDIA’s ForceWare driver have improved the quality of the scene being rendered.

Also, we discussed the poor and blurred image quality in Need For Speed: Underground 2. The blurring was only apparent on the GeForce 6600GT, but we have noticed it before on the two GeForce 6800GT’s that we evaluated last week. We will investigate this matter further, as we feel there is a possibility that the game is forcing the drivers to use the out-of-favour Quincunx Anti-Aliasing, which actually provides a rather blurred scene. There was also the issue of a poor-quality sky on both video cards. This isn’t anything new, though, as we came across it during our high-end roundup; we can hope that this is a demo-specific image quality problem and that it will be fixed in the upcoming release of the full game.

There are questions to be asked about the reduced memory clocks, and we are more than a little curious about the reasoning behind this. There are several explanations behind NVIDIA’s decision to reduce the memory clocks by 100MHz on the AGP version of the GeForce 6600GT. The obvious reason would be for cost, due to the additional board components like the HSI bridge chip, which inevitably increase the costs to manufacture the video card.


While we would expect something to be cut back on, there doesn’t appear to be anything apart from the clock speed deficit. The memory that has been used is the same 2.0ns memory that can be found on the PCI-Express version of this card, so the physical costs for the modules will be the same in both cases. The other major explanation behind the reduced memory clocks could be NVIDIA’s way of dangling the proverbial PCI-Express carrot to its customers — they will only get the highest performance by moving over to PCI-Express. This could prove to be an incentive for users to upgrade to the PCI-Express platform; however, we are still awaiting motherboards on the Athlon 64 front, so we feel that the PCI-Express uptake isn’t going to move as fast as both ATI and NVIDIA would hope, at least for the moment.

It will be interesting to see what board partners decide to do, as there are likely to be a few board partners who differ from NVIDIA’s reference clock speeds. We may well see AGP versions of the GeForce 6600GT clocked to 500/1000MHz, but only time will tell at this early stage.


Final, Final Thoughts:

On the whole, the performance delivered by the GeForce 6600GT is class leading, but due to a number of poor image quality issues, we found that the real-world gaming experience delivered by the GeForce 6600GT wasn’t everything that it could be. Game play was smooth in most titles, with the exception of FarCry, where our hitching issues came back to haunt us in the new release of the ForceWare driver. The real downer was the poor image quality in NFS: Underground 2; at present we are not 100% sure of the cause for the blurred appearance, but we will be investigating this further. Look out for some image quality examinations in the discussion thread over the next day or so.

The GeForce 6600GT on AGP has all the ingredients to take the lead as the best bang for buck video card in the mainstream. It does suffer from the image quality issues that we have mentioned above, and we hope that new drivers will fix these bugs and confirm the GeForce 6600GT’s position as the king of mainstream AGP video cards. With a serious lack of presence from ATI’s own bridge chip, RIALTO, and therefore no AGP Radeon X700 XT, we expect the GeForce 6600GT to hold on to the mainstream AGP top spot for a good while yet — it has the performance advantage over the Radeon 9800 Pro, and we feel that it is only a matter of time for the image quality in Need For Speed: Underground 2 to catch up. On the grounds that this will be fixed over the coming weeks, we feel that the GeForce 6600GT AGP is well worth your hard-earned cash in favour of the ageing Radeon 9800 Pro — it will deliver a consistently better gaming experience.


GeForce 6600 GT: 128 or 256 MB?

The first article on whether 256 MB makes sense for budget video cards concluded that memory clock speed matters more than memory size. Now we will check whether that conclusion holds for mid-range products, using boards based on the GeForce 6600 GT chip as an example.

Let’s help

Previously, GeForce 6600 GT accelerators were produced only with 128 MB of memory, but versions with 256 MB are already on sale. Because there is no official specification or reference design for a 256 MB board, several versions of the GeForce 6600 GT 256 MB have appeared, differing in clock speeds and in the type and placement of the memory chips. At the moment there are cards with GDDR3 chips running at 1000 MHz as well as cards with GDDR2 (800 MHz), but other options are possible, so you should carefully check the specifications of a video adapter before buying.

| Leadtek 6600 GT |

| Point of View GeForce 6600GT |

Now for the participants in today’s testing. The 128 MB version is represented by the Leadtek Winfast PX6600 GT TDH Extreme. This video card has higher than nominal core/memory clocks (550/1125 MHz), so to simulate the results of a standard GeForce 6600 GT 128 MB these were lowered to 500/1000 MHz. The 256 MB variant is represented by the Point of View GeForce 6600 GT 256 MB at 500/1000 MHz. This graphics accelerator was also tested at 500/800 MHz (to evaluate the performance of the junior 256 MB model) and at 550/1125 MHz (to compare with the overclocked version from Leadtek). Note that on this board the additional memory is located on the back, which, in our opinion, complicates overclocking the video card, so an auxiliary fan may be required for the best results.

Before moving on to the test results, let’s talk a little about the changes in the programs used and in the process itself. First, in addition to testing with 1 GB of RAM, we also measured performance with 512 MB. Second, the list of tests now includes Serious Sam 2, which has a fairly high level of visual effects, as well as 3DMark06, the new version of Futuremark’s synthetic package. Third, the RivaTuner program used to measure video memory load has been updated to version 15.8, which makes it possible to obtain information not only about the use of the accelerator’s local video memory but also about the amount of system RAM allocated for the needs of the video card (non-local video memory). Unfortunately, due to some features of the ForceWare drivers, this data can only be obtained for software that uses the Direct3D API; on the other hand, the number of OpenGL programs is not too large.

Let’s look at the test results, starting with the case where 512 MB of RAM was used. In almost all applications the cards with 256 MB have an advantage; only in F.E.A.R. does doubling the amount of video memory still make no noticeable difference (it is within the measurement error). Given that prices for a 6600 GT 128 MB from a well-known manufacturer start at $140 and for a 6600 GT 256 MB at $160, the 256 MB version of the card (even one with a lower memory clock) is the better purchase for owners of computers with 512 MB of RAM. On the other hand, a 512 MB PC3200 module costs about $40-45, and when comparing a 6600 GT 256 MB with 512 MB of RAM against a 6600 GT 128 MB with 1 GB of RAM, the second option always wins, so we think it is the more reasonable choice in this case. Of course, it is better still to buy both additional RAM and a video card with 256 MB.

If the system has 1 GB of RAM, the situation is similar to what we described in the previous article: Far Cry and F.E.A.R. are insensitive (in the selected graphics quality modes) to additional video memory, while DOOM 3 and 3DMark show a slight advantage for the card with 256 MB of video memory, which, in our opinion, is not worth paying extra for. The Serious Sam 2 tests give quite different results from the other games: the additional 128 MB yields a performance increase of 8 to 33%! Moreover, on the card with 128 MB we observed periodic hard disk access during the demo runs, during which the speed dropped to 1-2 frames per second; this did not happen on the adapter with 256 MB of video memory. So far the number of such games is small, but there will be more of them in the future, primarily strategy games, RPGs and MMORPGs, which are less demanding on the performance of the video chip but often have many textures. It is rather difficult to say exactly when that moment will come, though, and whether the speed of the GeForce 6600 GT 256 MB will still be sufficient then.

Tables with test results for each game

In addition to the data presented in the diagrams, there are tables with all the test results for each game, in which the relative performance of the cards with 128 and 256 MB of video memory is calculated as a percentage for the same and for different amounts of RAM, as well as a table of memory load in all applications. The information there is almost self-explanatory, except in the case of Serious Sam 2: here “Lows, with (fps)” shows the minimum number of frames per second recorded during the test (in brackets) and the time for which this fps value was observed. In the memory usage table, the figures in parentheses in the Serious Sam 2 section are the memory load with 512 MB of RAM; in all other games the results did not differ between amounts of RAM.

To sum up: while the recommendations in the previous article were unequivocal, in the case of the GeForce 6600 GT the choice depends on the configuration of your existing or future computer. In any case, buying additional megabytes of video memory is not a waste of money. On the other hand, by adding a little more to the amount spent on a GeForce 6600 GT 256 MB you can buy a faster ATI Radeon X800 GTO 256 MB, or a GeForce 6600 GT 128 MB plus one more RAM module. Which of these options to choose is up to you, based on personal preferences and available funds.

| | Serious Sam 2 | F.E.A.R. |
|---|---|---|
| GeForce 6600 GT 128 MB | | |
| local | 117 | 117 |
| non-local | 247 (183) | 110 |
| total | 364 (300) | 227 |
| GeForce 6600 GT 256 MB | | |
| local | 245 | 210 |
| non-local | 148 | 5 |
| total | 393 | 215 |
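
The totals in the memory table above are simply local plus non-local memory, and the split shows how much of each game's working set spills into system RAM. The sketch below copies the main (non-parenthesised) figures from the table; the units are assumed to be MB, and the helper names are made up for illustration.

```python
# (local, non_local) video memory use, copied from the table above.
# Assumption: all figures are in MB.
usage_mb = {
    "GeForce 6600 GT 128 MB": {"Serious Sam 2": (117, 247), "F.E.A.R.": (117, 110)},
    "GeForce 6600 GT 256 MB": {"Serious Sam 2": (245, 148), "F.E.A.R.": (210, 5)},
}


def total_mb(local: int, non_local: int) -> int:
    """Total memory in use: on-card (local) plus system RAM (non-local)."""
    return local + non_local


def non_local_share(local: int, non_local: int) -> float:
    """Percentage of the total that lives in system RAM instead of on the card."""
    return non_local / total_mb(local, non_local) * 100.0


for card, games in usage_mb.items():
    for game, (local, non_local) in games.items():
        print(
            f"{card}, {game}: total {total_mb(local, non_local)}, "
            f"{non_local_share(local, non_local):.0f}% non-local"
        )
```

Read this way, the 128 MB card keeps well over half of the Serious Sam 2 data in system RAM, which fits the periodic slowdowns described for that card.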

Unlock GeForce 6200 | KV.


Manufacturers give you a chance

Our users love all sorts of overclocking tricks and modifications to computer components. The desire to get more features and speed for less money is natural. The need to tinker with the hardware, even when that is unsafe and voids the warranty, rarely stops anyone. Enthusiasts pay special attention to cases where manufacturers leave a loophole for turning cut-down versions of their products into full ones. This doesn’t happen all that often.

Companies that make CPUs and GPUs first release an expensive version of a new product. They count on wealthy enthusiasts and professionals willing to pay a deliberately inflated price for the latest technology. This category of users is small, however, and its interest in a new product quickly dries up. The manufacturer then starts offering more affordable products for the mass market. Until production of a dedicated inexpensive series of dies is ready, budget products are built on the same dies, with artificial changes made to reduce performance.

This is when savvy users have their finest hour. Talking on specialist forums, they look for ways around the limits the manufacturer has introduced. The fight against reduced clock speeds, for example, has been going on for a long time, and motherboard and video card manufacturers themselves help users overclock. Since overclocking makes no changes to the processor itself, this method does not void the warranty and requires no soldering skills.

Another relatively safe method that requires no physical modification of the product is unlocking disabled processor blocks. The manufacturer may disable perfectly good blocks, so the user has a real chance of ending up with a fully working product after the modification.

Alas, overclocking and unlocking are most often possible only for the first batches of budget chips. Over time the manufacturer either implements a reliable hardware lock or releases a truncated version of the chip. But by then overclocking and modding enthusiasts have already found a new object for their experiments...

GeForce 6200

Today (spring 2005) the GeForce 6200 video card is one of the objects of interest for unlocking enthusiasts. The point is that this video card is based on the NVIDIA NV43 chip, which also underlies higher-class video cards: the GeForce 6600/6600GT.

The chip is produced on a 0.11-micron process (low power consumption, good overclocking potential), has two pixel rendering pipelines with 4 branches each and three vertex processors, and supports the PCI Express bus. The last fact keeps GeForce 6-series video cards from becoming as widespread as they deserve, because motherboards with PCI Express support are still too expensive. NVIDIA has started producing video cards with an additional PCIe-AGP bridge, which, however, are noticeably more expensive.

Interestingly, the first versions of the GeForce 6200 and 6600 video cards are built on the same design, and even their clock speeds match completely: 300 MHz for the chip and 250 (500) or 275 (550) MHz for the memory. Both video cards use DDR memory connected via a 128-bit bus. The budget version, the GeForce 6200, is obtained in the simplest way: the second pixel pipeline is disabled in software.
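The clock and bus figures above translate into rough theoretical throughput numbers. The sketch below is only back-of-the-envelope arithmetic: it assumes one pixel per clock for each of the 4 or 8 pixel paths (the 4×1 and 8×1 layouts named in the test table) and ignores every other limit inside the chip, so the results are upper bounds rather than measurements. The function names are made up for this illustration.

```python
# Rough theoretical throughput for the clocks quoted in the article.
# Assumption: one pixel per pixel path per clock; real-world limits are ignored.

CORE_MHZ = 300          # chip clock from the article
BUS_WIDTH_BITS = 128    # memory bus width from the article


def fill_rate_mpixels(pixel_paths, core_mhz=CORE_MHZ):
    """Theoretical pixel throughput in megapixels per second."""
    return pixel_paths * core_mhz


def memory_bandwidth_gbs(effective_mhz, bus_bits=BUS_WIDTH_BITS):
    """Theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_bits / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    print("locked, 4 pixel paths:  ", fill_rate_mpixels(4), "Mpixels/s")
    print("unlocked, 8 pixel paths:", fill_rate_mpixels(8), "Mpixels/s")
    for effective in (500, 550):
        print("memory at", effective, "MHz effective:",
              memory_bandwidth_gbs(effective), "GB/s")
```

Under these assumptions, unlocking doubles the theoretical pixel throughput while memory bandwidth stays the same, which is one reason the real gain in games can be well below 100%.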

Turning on the second pipeline

The most reliable way to turn on the second pipeline is to replace the video card's BIOS. The GeForce 6200 is flashed with the BIOS from a GeForce 6600 or with a modified original BIOS, and as a result the budget video card starts working just like the more expensive version. This method is fairly complex and potentially dangerous for the video card: flashing a «foreign» BIOS does not always end successfully.

The second, safer way is to use the NVStrap driver from the RivaTuner utility (look for it at www.nvworld.ru). This software module starts at the very beginning of operating system initialization, before the video drivers start. It reprograms the registers of the graphics chip so that both pipelines become enabled, and then exits without giving the drivers a chance to detect it. The drivers think they are dealing with a real GeForce 6600 and use both pipelines. As a result, performance improves.

The unlock procedure is described in detail in the RivaTuner documentation. Here is a brief summary:

- After starting RivaTuner, look at the information provided by the video card's BIOS. To do this, enable the «NVIDIA VGA BIOS Information» option in RivaTuner's «Graphic subsystem diagnostic» module. If the resulting listing contains the line «SW masked units» with the item «pixel 01b» (software-disabled units: pixel pipeline), the likelihood of a successful unlock is very high.

- In the «Low-level system tweaks» module, click the «Install» button to install the NVStrap module.

- In the same program module, the last section, «Graphics processor configuration», will become available. Select «custom» in the dropdown list and click the «Customize» button.

- In the video card controller register editor that opens, enable the disabled pipeline. Put a check mark next to the «Pixel Unit» line whose «Status» field reads «Disabled» (this is the pipeline disabled by software).

- Now put a check mark next to «Allow enabling hardware masked units» and click «OK».

- After rebooting, use RivaTuner to check whether both pipelines are turned on. Going into the same menus, make sure that all the «Pixel Unit» lines in the controller register editor contain «Enabled» in the «Status» field.
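The check in the first step comes down to reading a small bit mask. The sketch below is a hypothetical illustration, not RivaTuner code: it assumes the value after «pixel» is a string of binary digits followed by the letter «b» (as in «01b»), with one digit per pixel unit and a 1 marking a unit that is masked, that is, disabled. The function names are invented for this example.

```python
def masked_units(mask_text):
    """Return one flag per unit; True means the unit is masked (disabled).

    Assumption: the value looks like '01b', binary digits plus a 'b' suffix,
    and a set bit marks a masked unit.
    """
    text = mask_text.strip().lower()
    if text.endswith("b"):
        text = text[:-1]
    if not text or any(ch not in "01" for ch in text):
        raise ValueError("not a binary mask: %r" % mask_text)
    return [ch == "1" for ch in text]


def software_unlock_candidate(sw_pixel_mask):
    """True if at least one pixel unit is disabled by software."""
    return any(masked_units(sw_pixel_mask))


if __name__ == "__main__":
    print(masked_units("01b"))               # one of two units is masked
    print(software_unlock_candidate("01b"))  # a software unlock may be possible
    print(software_unlock_candidate("00b"))  # nothing is masked by software
```

A mask with no set bits would mean there is nothing for NVStrap to re-enable by software.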

If you run into problems with booting the OS or with the operation of the video card, manually delete the nvstrap.sys file from the windows/system32 folder.

What we got

To assess the effect of turning on the second pipeline, a little testing was carried out. An inexpensive Leadtek PX6200TD video card was chosen, fitted with an unlockable NV43 chip, revision A2. The test system consisted of an Athlon 64 3500+ processor (2.2 GHz, 512 KB L2 cache), a board based on the nForce4 Ultra chipset, and 512 MB of memory (dual-channel mode).

| Leadtek PX6200TD GeForce 6200 (300/275) | Original state 4×1, 3vp | Unlocked 8×1, 3vp | Gain, % |
|---|---|---|---|
| OpenGL (SPECViewperf 8) | | | |
| 3Ds max | 14.36 | 14.4 | 0.3% |
| Catia | 12.12 | 12.2 | 0.7% |
| Ensight | 8.257 | 8.3 | 0.5% |
| Light | 11.28 | 11.4 | 1.1% |
| Maya | 23.7 | 23.9 | 0.8% |
| ProEng | 17.36 | 17.7 | 2.0% |
| SW | 10.75 | 10.8 | 0.5% |
| Ugs | 4.24 | 4.24 | 0.0% |
| 3D games (1024×768, max. details) | | | |
| Doom 3 | 41.3 | 45.6 | 10.4% |
| UT2004 | 100.2 | 130.6 | 30.3% |
| Call of Duty | 155 | 179.7 | 15.9% |
| Halo | 31.3 | 45.8 | 46.3% |
| Tomb Raider | 27.8 | 37.3 | 34.2% |
| Unreal II | 83.4 | 93.6 | 12.2% |
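The percentage column in the table above is the relative gain of the unlocked result over the original one. A minimal sketch of that arithmetic, using the game results from the table (the helper name `gain_percent` is made up for this example):

```python
def gain_percent(before, after):
    """Relative gain of `after` over `before`, in percent, to one decimal place."""
    return round((after - before) / before * 100, 1)


# (original, unlocked) frame rates taken from the table
GAME_RESULTS = {
    "Doom 3": (41.3, 45.6),
    "UT2004": (100.2, 130.6),
    "Call of Duty": (155, 179.7),
    "Halo": (31.3, 45.8),
    "Tomb Raider": (27.8, 37.3),
    "Unreal II": (83.4, 93.6),
}

if __name__ == "__main__":
    for game, (before, after) in GAME_RESULTS.items():
        print("%-13s %5.1f%%" % (game, gain_percent(before, after)))
```

The same function applied to the SPECViewperf rows gives the small gains listed in the upper half of the table.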

In the professional OpenGL applications whose fragments are part of the SPECViewperf 8 package, no significant changes were noted: the gain did not exceed 1-2%. Popular 3D games are another matter.
