GeForce FX 5200 [in 1 benchmark]

NVIDIA

GeForce FX 5200

- Interface AGP 8x

- Core clock speed 250 MHz

- Max video memory 128 MB

- Memory type DDR

- Memory clock speed 400 MHz

- Maximum resolution

Summary

NVIDIA started GeForce FX 5200 sales on 6 March 2003 at a recommended price of $69.99. This is a Celsius architecture desktop card based on a 150 nm manufacturing process and primarily aimed at gamers. It carries 128 MB of DDR memory clocked at 0.4 GHz, which together with the 128-bit memory interface yields a bandwidth of 6.4 GB/s.

Compatibility-wise, this is a single-slot card attached via an AGP 8x interface.

We have no data on GeForce FX 5200 benchmark results.

General info

Some basic facts about GeForce FX 5200: architecture, market segment, release date etc.

| Place in performance rating | not rated | |

| Architecture | Celsius (1999−2005) | |

| GPU code name | NV18 C1 | |

| Market segment | Desktop | |

| Release date | 6 March 2003 (19 years ago) | |

| Launch price (MSRP) | $69.99 | |

| Current price | $95 (1.4x MSRP) | of 49999 (A100 SXM4) |

Technical specs

GeForce FX 5200’s general performance parameters such as number of shaders, GPU base clock, manufacturing process, texturing and calculation speed. These parameters indirectly speak of GeForce FX 5200’s performance, but for precise assessment you have to consider its benchmark and gaming test results.

| Core clock speed | 250 MHz | of 2610 (Radeon RX 6500 XT) |

| Number of transistors | 29 million | of 14400 (GeForce GTX 1080 SLI Mobile) |

| Manufacturing process technology | 150 nm | of 4 (GeForce RTX 4080 Ti) |

| Texture fill rate | 1.000 | of 959.6 (Radeon RX 7900 XTX) |
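The texture fill rate in the table can be roughly cross-checked against the core clock. The sketch below is illustrative only: it assumes 4 pixel pipelines (the figure quoted for the 5200 further down this page), one texture unit per pipeline (an assumption not stated in the table), and that the table value is in GTexel/s (the unit is not given). The function name is hypothetical.

```python
def texture_fill_rate_gtexel_s(core_clock_mhz, pipelines, tmus_per_pipeline=1):
    """Theoretical peak texture fill rate in GTexel/s.

    Assumes every texture unit samples one texel per core clock.
    """
    texels_per_clock = pipelines * tmus_per_pipeline
    return core_clock_mhz * 1e6 * texels_per_clock / 1e9


# 250 MHz core clock from the table; 4 pipelines x 1 TMU is an assumption.
fill_rate = texture_fill_rate_gtexel_s(core_clock_mhz=250, pipelines=4)
print(f"{fill_rate:.3f} GTexel/s")
```

Under these assumptions the result matches the 1.000 listed above; a different pipeline or texture-unit count would scale it proportionally.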

Compatibility, dimensions and requirements

Information on GeForce FX 5200’s compatibility with other computer components. Useful when choosing a future computer configuration or upgrading an existing one. For desktop graphics cards it’s interface and bus (motherboard compatibility), additional power connectors (power supply compatibility).

| Interface | AGP 8x | |

| Width | 1-slot | |

| Supplementary power connectors | None |

Memory

Parameters of memory installed on GeForce FX 5200: its type, size, bus, clock and resulting bandwidth. Note that GPUs integrated into processors have no dedicated memory and use a shared part of system RAM instead.

| Memory type | DDR | |

| Maximum RAM amount | 128 MB | of 128 (Radeon Instinct MI250X) |

| Memory bus width | 128 Bit | of 8192 (Radeon Instinct MI250X) |

| Memory clock speed | 400 MHz | of 22400 (GeForce RTX 4080) |

| Memory bandwidth | 6.4 GB/s | of 14400 (Radeon R7 M260) |
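The bandwidth figure follows from the bus width and memory clock in the table. A minimal sketch of that arithmetic, assuming the 400 MHz value is the effective (double data rate) transfer rate and that 1 GB means 10^9 bytes; the function name is hypothetical:

```python
def memory_bandwidth_gb_s(bus_width_bits, effective_clock_mhz):
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8           # 128 bits -> 16 bytes
    transfers_per_second = effective_clock_mhz * 1e6  # assumed effective rate
    return bytes_per_transfer * transfers_per_second / 1e9


# Values from the table: 128-bit bus, 400 MHz memory clock.
bandwidth = memory_bandwidth_gb_s(bus_width_bits=128, effective_clock_mhz=400)
print(f"{bandwidth:.1f} GB/s")
```

If the 400 MHz figure were instead the physical clock of the DDR chips, the effective rate and the resulting bandwidth would be twice as high, so the listed 6.4 GB/s is only consistent with the effective-rate reading.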

Video outputs and ports

Types and number of video connectors present on GeForce FX 5200. As a rule, this section is relevant only for desktop reference graphics cards, since for notebook ones the availability of certain video outputs depends on the laptop model, while non-reference desktop models can (though not necessarily will) bear a different set of video ports.

| Display Connectors | 1x DVI, 1x VGA, 1x S-Video |

API support

APIs supported by GeForce FX 5200, sometimes including their particular versions.

| DirectX | 8.0 | |

| OpenGL | 1.3 | of 4.6 (GeForce GTX 1080 Mobile) |

| OpenCL | N/A | |

| Vulkan | N/A |

Benchmark performance

Non-gaming benchmark performance of GeForce FX 5200. Note that overall benchmark performance is measured in points in 0-100 range.

Passmark

This is probably the most ubiquitous benchmark, part of the Passmark PerformanceTest suite. It gives the graphics card a thorough evaluation under various loads, providing four separate benchmarks for Direct3D versions 9, 10, 11 and 12 (the last being done in 4K resolution if possible), and a few more tests engaging DirectCompute capabilities.

Benchmark coverage: 26%

FX 5200: 7

Recommended processors

These processors are most commonly used with GeForce FX 5200 according to our statistics.

- Pentium N4200: 4.1%
- Pentium 4415U: 3.1%
- Pentium 4 2.4 GHz: 2.1%
- Celeron N4020: 2.1%
- Pentium Silver N5030: 2.1%
- Pentium D 945: 1.6%
- Pentium 4 HT 631: 1.6%
- Pentium 4 P4 3.0: 1.6%
- Core i3 3220: 1.6%
- Pentium Dual Core E2140: 1.6%

NVIDIA GeForce FX 5200 — review. GPU Benchmark & Specs

The NVIDIA GeForce FX 5200 graphics card (also called a GPU) is not rated in the performance rating. It runs at a core clock speed of 250 MHz. The manufacturer has equipped it with 128 MB of memory clocked at 400 MHz, giving a bandwidth of 6.4 GB/s.

The card is built on a 150 nm fabrication process. Below you will find the main data on compatibility, dimensions, technologies and gaming performance test results. You can also read and leave comments.

Let’s take a closer look at the most important specifications of the graphics card. To get a good idea of which graphics card is best, we recommend using the comparison service.

Hitesti score: 3.8 out of 20

General info

This basic information covers the NVIDIA GeForce FX 5200’s release date and intended platform (laptops or PCs), as well as its price at release and its average current price. It also includes the architecture used by the manufacturer and the chip’s code name.

| Place in performance rating: | not rated | |||

| Value for money (0-100): | 0.11 | |||

| Architecture: | Celsius | |||

| Code name: | NV18 C1 | |||

| Type: | Desktop | |||

| Release date: | 6 March 2003 (18 years ago) | |||

| Launch price (MSRP): | $69.99 | |||

| Price now: | $107 (1.5x MSRP) | |||

Technical specs

This information defines the graphics card’s capability. A smaller manufacturing process is generally better. The core clock frequency correlates directly with the card’s speed, while the transistors carry out the signal processing (the more transistors, the faster the computations).

| Core clock speed: | 250 MHz | |||

| Transistor count: | 29 million | |||

| Manufacturing process technology: | 150 nm | |||

| Texture fill rate: | 1.000 | |||

Compatibility, dimensions and requirements

Today there are numerous form factors for PC cases, so it is extremely important to know the length of the graphics card and the types of its connection. This will help facilitate the upgrade process.

| Interface: | AGP 8x | |||

| Supplementary power connectors: | None | |||

Memory

The card’s onboard memory stores data while computations are carried out. Contemporary games and professional graphics applications place high demands on memory capacity and bandwidth; the higher these parameters, the more powerful and faster the graphics card. The memory type, capacity and bandwidth of the NVIDIA GeForce FX 5200 are listed below.

| Memory type: | DDR | |||

| Maximum RAM amount: | 128 MB | |||

| Memory bus width: | 128 Bit | |||

| Memory clock speed: | 400 MHz | |||

| Memory bandwidth: | 6.4 GB/s | |||

Video outputs and ports

As a rule, all contemporary graphics cards feature several connection types and additional ports. Knowing these peculiarities is crucial for avoiding problems with connecting the graphics card to the monitor or other peripheral devices.

| Display Connectors: | 1x DVI, 1x VGA, 1x S-Video | |||

API support

All APIs supported by the NVIDIA GeForce FX 5200 are listed below.

| DirectX: | 8.0 | |||

| OpenGL: | 1.3 | |||

Overall gaming performance

All tests are based on an FPS counter. Let’s look at where the NVIDIA GeForce FX 5200 places in the gaming performance test (the calculation follows the game developers’ recommended system requirements and can differ from real-world results).

| Game | low 1280×720 | med. 1920×1080 | high 1920×1080 | ultra 1920×1080 | QHD 2560×1440 | 4K 3840×2160 |

| Horizon Zero Dawn (2020) | | | | | | |

| Death Stranding (2020) | | | | | | |

| F1 2020 (2020) | | | | | | |

| Gears Tactics (2020) | | | | | | |

| Doom Eternal (2020) | | | | | | |

| Legend | |

| 5 | Stutter – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to stutter and show low frame rates. |

| | May Stutter – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to stutter and show low frame rates. |

| 30 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 25fps or more |

| 40 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 35fps or more |

| 60 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 58fps or more |

| | May Run Fluently – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to show fluent frame rates. |

| ? | Uncertain – The testing of this graphics card on this game showed unexpected results. A slower card might be able to produce higher and more consistent frame rates when running the same benchmark scene. |

| | Uncertain – The performance of this graphics card with this game is not well explored yet. No reliable data interpolation can be made based on the performance of similar cards of the same category. |

| | The value in the fields reflects the average frame rate across the entire database. |

AMD equivalent

AMD Radeon R9 390 X2

Benchmark

Benchmarks help determine the performance of the NVIDIA GeForce FX 5200 in standard tests. We have listed the world’s best-known benchmarks so that you can obtain accurate results in each (see the description). Preliminary testing of a graphics card is especially important under high load, so that the user can see how well the graphics processing unit copes with computation and data processing.

Overall benchmark performance

Benchmark Passmark: Graphic cards performance test result. Check Passmark test results of GPUs on hitesti.com

NVIDIA Quadro NVS 280 PCI

NVIDIA GeForce FX 5500

NVIDIA GeForce FX 5200

Characteristics of NVIDIA GeForce FX 5200 / Overclockers.ua

- 5800 (Ultra): NV30. High-end replacement for Ti4800

- 5600 (Ultra): NV31. Middle class, replacement for Ti4200

- 5200 (Ultra): NV34. Low-end replacement for MX440

- 0.13 Micron Process Technology: allows more semiconductor elements to be placed on a chip and the frequency of the 256-bit core to be increased. The FX5200 series uses a 0.15 micron process.

- Intellisample Technology: a new anti-aliasing technology that is claimed to eliminate jagged, stair-stepped edges 50% better than before. It also allows the color gamut to be adjusted to account for the difference between how the eye perceives light and color and how the monitor reproduces them. In addition, this technology uses new and improved anisotropic filtering, which reduces texture distortion by adjusting the image dynamically. The FX 5200 lacks the Z-compression and hardware color support of this technology, and could not have them: the chip is simply not powerful enough to implement such technologies.

- 8 Pixel Pipelines: output up to 8 pixels per clock. In our (5200) case, only 4.

- 400 MHz RAMDAC: on the 5200 series, the digital-to-analog converter operates at 350 MHz.

- DDR II memory: instead of the more advanced DDR II memory, the FX 5200 uses conventional DDR.

2GeForce GTX 260GeForce GTS 250GeForce GTS 240GeForce GT 240GeForce GT 230GeForce GT 220GeForce 210Geforce 205GeForce GTS 150GeForce GT 130GeForce GT 120GeForce G100GeForce 9800 GTX+GeForce 9800 GTXGeForce 9800 GTSGeForce 9800 GTGeForce 9800 GX2GeForce 9600 GTGeForce 9600 GSO (G94)GeForce 9600 GSOGeForce 9500 GTGeForce 9500 GSGeForce 9400 GTGeForce 9400GeForce 9300GeForce 8800 ULTRAGeForce 8800 GTXGeForce 8800 GTS Rev2GeForce 8800 GTSGeForce 8800 GTGeForce 8800 GS 768MBGeForce 8800 GS 384MBGeForce 8600 GTSGeForce 8600 GTGeForce 8600 GSGeForce 8500 GT DDR3GeForce 8500 GT DDR2GeForce 8400 GSGeForce 8300GeForce 8200GeForce 8100GeForce 7950 GX2GeForce 7950 GTGeForce 7900 GTXGeForce 7900 GTOGeForce 7900 GTGeForce 7900 GSGeForce 7800 GTX 512MBGeForce 7800 GTXGeForce 7800 GTGeForce 7800 GS AGPGeForce 7800 GSGeForce 7600 GT Rev.2GeForce 7600 GTGeForce 7600 GS 256MBGeForce 7600 GS 512MBGeForce 7300 GT Ver2GeForce 7300 GTGeForce 7300 GSGeForce 7300 LEGeForce 7300 SEGeForce 7200 GSGeForce 7100 GS TC 128 (512)GeForce 6800 Ultra 512MBGeForce 6800 UltraGeForce 6800 GT 256MBGeForce 6800 GT 128MBGeForce 6800 GTOGeForce 6800 256MB PCI-EGeForce 6800 128MB PCI-EGeForce 6800 LE PCI-EGeForce 6800 256MB AGPGeForce 6800 128MB AGPGeForce 6800 LE AGPGeForce 6800 GS AGPGeForce 6800 GS PCI-EGeForce 6800 XTGeForce 6600 GT PCI-EGeForce 6600 GT AGPGeForce 6600 DDR2GeForce 6600 PCI-EGeForce 6600 AGPGeForce 6600 LEGeForce 6200 NV43VGeForce 6200GeForce 6200 NV43AGeForce 6500GeForce 6200 TC 64(256)GeForce 6200 TC 32(128)GeForce 6200 TC 16(128)GeForce PCX5950GeForce PCX 5900GeForce PCX 5750GeForce PCX 5550GeForce PCX 5300GeForce PCX 4300GeForce FX 5950 UltraGeForce FX 5900 UltraGeForce FX 5900GeForce FX 5900 ZTGeForce FX 5900 XTGeForce FX 5800 UltraGeForce FX 5800GeForce FX 5700 Ultra /DDR-3GeForce FX 5700 Ultra /DDR-2GeForce FX 5700GeForce FX 5700 LEGeForce FX 5600 Ultra (rev.

2GeForce GTX 260GeForce GTS 250GeForce GTS 240GeForce GT 240GeForce GT 230GeForce GT 220GeForce 210Geforce 205GeForce GTS 150GeForce GT 130GeForce GT 120GeForce G100GeForce 9800 GTX+GeForce 9800 GTXGeForce 9800 GTSGeForce 9800 GTGeForce 9800 GX2GeForce 9600 GTGeForce 9600 GSO (G94)GeForce 9600 GSOGeForce 9500 GTGeForce 9500 GSGeForce 9400 GTGeForce 9400GeForce 9300GeForce 8800 ULTRAGeForce 8800 GTXGeForce 8800 GTS Rev2GeForce 8800 GTSGeForce 8800 GTGeForce 8800 GS 768MBGeForce 8800 GS 384MBGeForce 8600 GTSGeForce 8600 GTGeForce 8600 GSGeForce 8500 GT DDR3GeForce 8500 GT DDR2GeForce 8400 GSGeForce 8300GeForce 8200GeForce 8100GeForce 7950 GX2GeForce 7950 GTGeForce 7900 GTXGeForce 7900 GTOGeForce 7900 GTGeForce 7900 GSGeForce 7800 GTX 512MBGeForce 7800 GTXGeForce 7800 GTGeForce 7800 GS AGPGeForce 7800 GSGeForce 7600 GT Rev.2GeForce 7600 GTGeForce 7600 GS 256MBGeForce 7600 GS 512MBGeForce 7300 GT Ver2GeForce 7300 GTGeForce 7300 GSGeForce 7300 LEGeForce 7300 SEGeForce 7200 GSGeForce 7100 GS TC 128 (512)GeForce 6800 Ultra 512MBGeForce 6800 UltraGeForce 6800 GT 256MBGeForce 6800 GT 128MBGeForce 6800 GTOGeForce 6800 256MB PCI-EGeForce 6800 128MB PCI-EGeForce 6800 LE PCI-EGeForce 6800 256MB AGPGeForce 6800 128MB AGPGeForce 6800 LE AGPGeForce 6800 GS AGPGeForce 6800 GS PCI-EGeForce 6800 XTGeForce 6600 GT PCI-EGeForce 6600 GT AGPGeForce 6600 DDR2GeForce 6600 PCI-EGeForce 6600 AGPGeForce 6600 LEGeForce 6200 NV43VGeForce 6200GeForce 6200 NV43AGeForce 6500GeForce 6200 TC 64(256)GeForce 6200 TC 32(128)GeForce 6200 TC 16(128)GeForce PCX5950GeForce PCX 5900GeForce PCX 5750GeForce PCX 5550GeForce PCX 5300GeForce PCX 4300GeForce FX 5950 UltraGeForce FX 5900 UltraGeForce FX 5900GeForce FX 5900 ZTGeForce FX 5900 XTGeForce FX 5800 UltraGeForce FX 5800GeForce FX 5700 Ultra /DDR-3GeForce FX 5700 Ultra /DDR-2GeForce FX 5700GeForce FX 5700 LEGeForce FX 5600 Ultra (rev. 
2)GeForce FX 5600 Ultra (rev.1)GeForce FX 5600 XTGeForce FX 5600GeForce FX 5500GeForce FX 5200 UltraGeForce FX 5200GeForce FX 5200 SEGeForce 4 Ti 4800GeForce 4 Ti 4800-SEGeForce 4 Ti 4200-8xGeForce 4 Ti 4600GeForce 4 Ti 4400GeForce 4 Ti 4200GeForce 4 MX 4000GeForce 4 MX 440-8x / 480GeForce 4 MX 460GeForce 4 MX 440GeForce 4 MX 440-SEGeForce 4 MX 420GeForce 3 Ti500GeForce 3 Ti200GeForce 3GeForce 2 Ti VXGeForce 2 TitaniumGeForce 2 UltraGeForce 2 PROGeForce 2 GTSGeForce 2 MX 400GeForce 2 MX 200GeForce 2 MXGeForce 256 DDRGeForce 256Riva TNT 2 UltraRiva TNT 2 PRORiva TNT 2Riva TNT 2 M64Riva TNT 2 Vanta LTRiva TNT 2 VantaRiva TNTRiva 128 ZXRiva 128

2)GeForce FX 5600 Ultra (rev.1)GeForce FX 5600 XTGeForce FX 5600GeForce FX 5500GeForce FX 5200 UltraGeForce FX 5200GeForce FX 5200 SEGeForce 4 Ti 4800GeForce 4 Ti 4800-SEGeForce 4 Ti 4200-8xGeForce 4 Ti 4600GeForce 4 Ti 4400GeForce 4 Ti 4200GeForce 4 MX 4000GeForce 4 MX 440-8x / 480GeForce 4 MX 460GeForce 4 MX 440GeForce 4 MX 440-SEGeForce 4 MX 420GeForce 3 Ti500GeForce 3 Ti200GeForce 3GeForce 2 Ti VXGeForce 2 TitaniumGeForce 2 UltraGeForce 2 PROGeForce 2 GTSGeForce 2 MX 400GeForce 2 MX 200GeForce 2 MXGeForce 256 DDRGeForce 256Riva TNT 2 UltraRiva TNT 2 PRORiva TNT 2Riva TNT 2 M64Riva TNT 2 Vanta LTRiva TNT 2 VantaRiva TNTRiva 128 ZXRiva 128

You can simultaneously select

up to 10 video cards by holding Ctrl

nVidia GeForce FX5200 (NV34) / Graphics cards

Author: Artyom Semenkov

The NV30 was announced long ago. But video cards based on the nVidia GeForce FX 5800 (NV30) are expensive: many buyers simply cannot afford them, and others do not want to overpay for speed they do not need. In such cases, stripped-down versions usually follow shortly after the flagship, which eventually lets the manufacturer cover all sectors of the market.

As of May 2003, nVidia has three chips based on the FX architecture in production. To be as accurate as possible, FX 5600 video cards will go on mass sale only next month; right now stocks are being built up. And to be absolutely strict, as we predicted, the NV30 will never enter the mass market and has been reoriented exclusively to the professional Quadro line, where price matters less than in the consumer market. The top NV30 chip has already been replaced by the NV35 in all video card manufacturers' roadmaps. Nevertheless, our comparison table lists the NV30, since a lot of water will flow under the bridge before the NV35 goes on sale.

FX family comparison table:

| Level | High End | Middle | Low end |

| Chip (regular / Ultra) | NV30 / NV30 Ultra | NV31 / NV31 Ultra | NV34 / NV34 Ultra |

| Description | FX 5800 | FX 5600 | FX 5200 |

| Technology, micron | 0.13 | 0.13 | 0.15 |

| Transistors, million | 125 | 75 | 45 |

| Pixel pipelines | 4 | 2 | 2 |

| Texture blocks | 8 | 4 | 4 |

| Core frequency, MHz (regular / Ultra) | 400 / 500 | 300 / 350 | 250 / 325 |

| Memory bus frequency, MHz | | | |

| Memory bus, bit | 128 (DDR II) | 128 (DDR) | 128 (DDR) |

| RAMDAC, MHz | 2×400 | 2×400 | 2×400 |

| Power supply | Mandatory | Desirable | Optional |

Note: The recommended core and memory frequencies for NV31 and NV34 chips have changed several times.

Today we present benchmarks for the nVidia GeForce FX 5200 (NV34) only, but this will be fixed soon: an article about the mid-range GeForce FX 5600 (NV31) will follow.

NV34 Chip Differences

Currently, nVidia offers a line of three FX family GPUs for three market sectors, and each chip comes in two versions, regular and Ultra.

As you can see, nVidia stopped using the MX label to designate their low-end products. You can forget about MX…

Reducing the cost of the chip and card requires sacrifices. The main differences between the older NV30 and the NV34, that is, what the NV34 lacks, are as follows.

nVidia GeForce FX 5200 chip

The chip has 45 million transistors and is manufactured using a 0.15 micron manufacturing process.

The GeForce FX 5200 has 2 pixel pipelines and 4 texture units. However, these figures are conditional: today it is difficult to judge a video card by its pipeline count alone, because the driver configures the operation of the chip separately for each scene of a particular game.
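As a rough illustration of what these unit counts mean on paper, the theoretical peak fill rate can be estimated from the figures quoted above. This is only a minimal sketch in Python, assuming each pixel pipeline writes one pixel per clock and each texture unit samples one texel per clock; the function name is ours, and real throughput depends on how the driver configures the chip.

```python
def peak_fill_rates(core_mhz, pixel_pipelines, texture_units):
    """Return (Mpixels/s, Mtexels/s), assuming one pixel or texel per unit per clock."""
    return core_mhz * pixel_pipelines, core_mhz * texture_units


# GeForce FX 5200 figures from the article: 250 MHz core, 2 pipelines, 4 texture units
mpixels, mtexels = peak_fill_rates(250, 2, 4)
print(f"{mpixels} Mpixels/s, {mtexels} Mtexels/s")
```

These are upper bounds, not measurements; the fill rate tests later in the article show how far practice can be from them.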

In general, we can say that the 3D capabilities of the NV34 do not differ from those of the NV30/31. The NV34 supports the DirectX 9 API and hence Shaders 2.0 and 2.0+. However, there is a difference: the GeForce FX 5200 chip does not have IntelliSample optimization.

The memory interface of the GeForce FX 5200 also differs from that of its older brother, the NV30. The chip uses a standard DDR memory controller, which in theory should cause a significant drop in performance, especially when anisotropic filtering and full-screen anti-aliasing are enabled.

The chips of the GeForce FX 5200 family do not support HDTV, but they do have a built-in TV codec, a TMDS transmitter, and two built-in 350 MHz RAMDACs, though today this no longer surprises anyone.

Over its long development and short time on the market, the GeForce FX 5200 family has changed its "recommended" core and memory clocks ten times. The problem is that the first tests of working samples showed such amazingly low performance that releasing cards in that state would have been not just unreasonable but fatal. We hesitate to publish those very first results, since that would not be fair to nVidia, but believe us, they were significantly lower than those of the MX440. The main reasons were Detonator drivers not yet tuned for a completely new chip architecture and the really low clock frequencies of the new chips. Gradually the situation leveled off: the standard recommended frequencies had to be raised, each new driver version improved the performance of the cards, and the yield of good chips gradually reached reasonable levels. In our comparison table at the beginning of the article we gave the "original" core and memory clock speeds; what you can actually buy in your nearest store may be completely different. Video card manufacturers are not shy about varying these values over a wide range.

The direct competitors of the new chips are the Radeon 9000 and 9000 Pro, and the Radeon 9200 and 9200 PRO that will soon replace them.

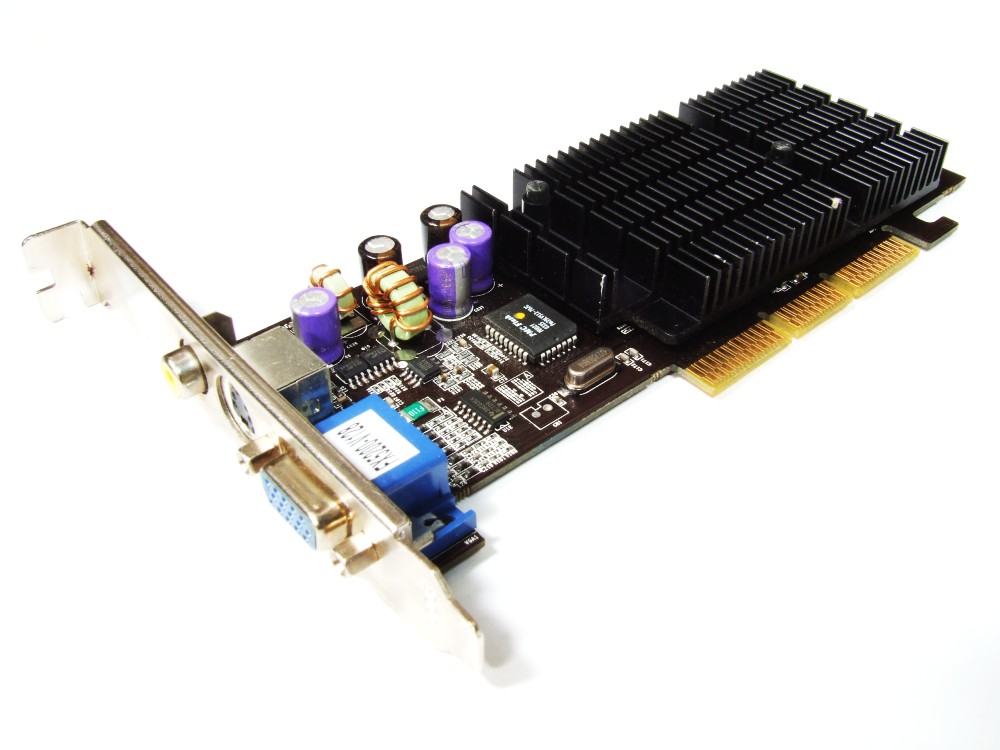

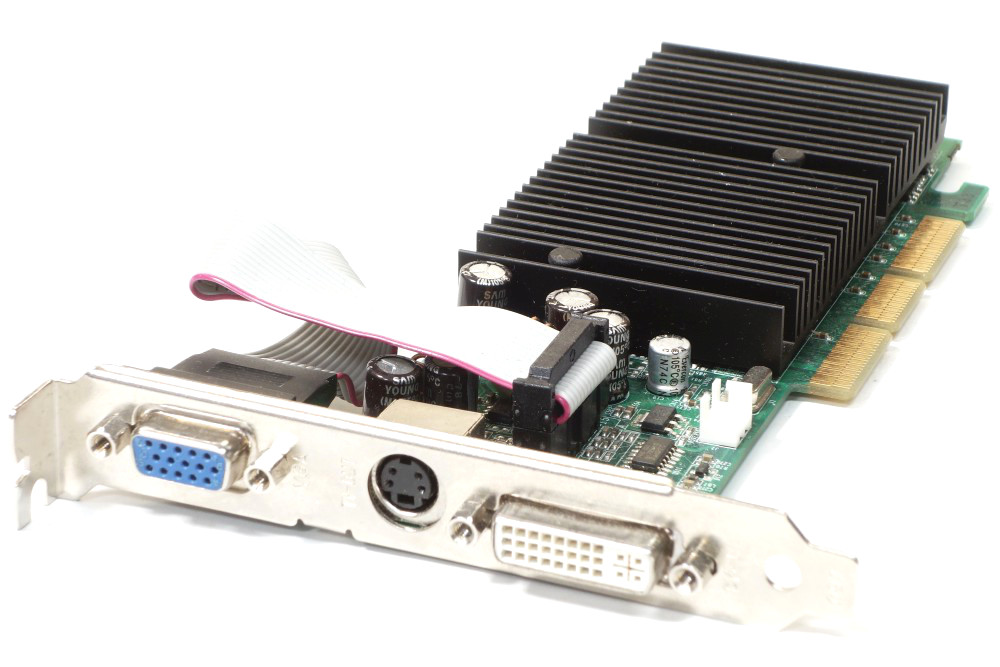

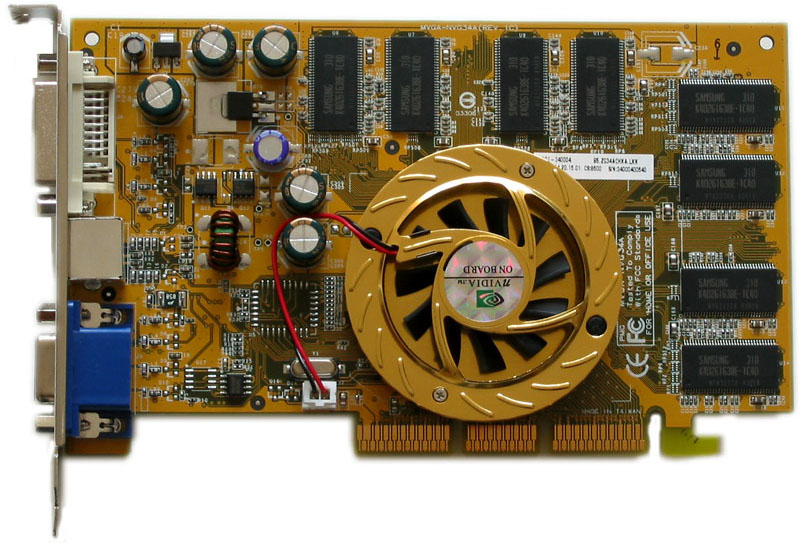

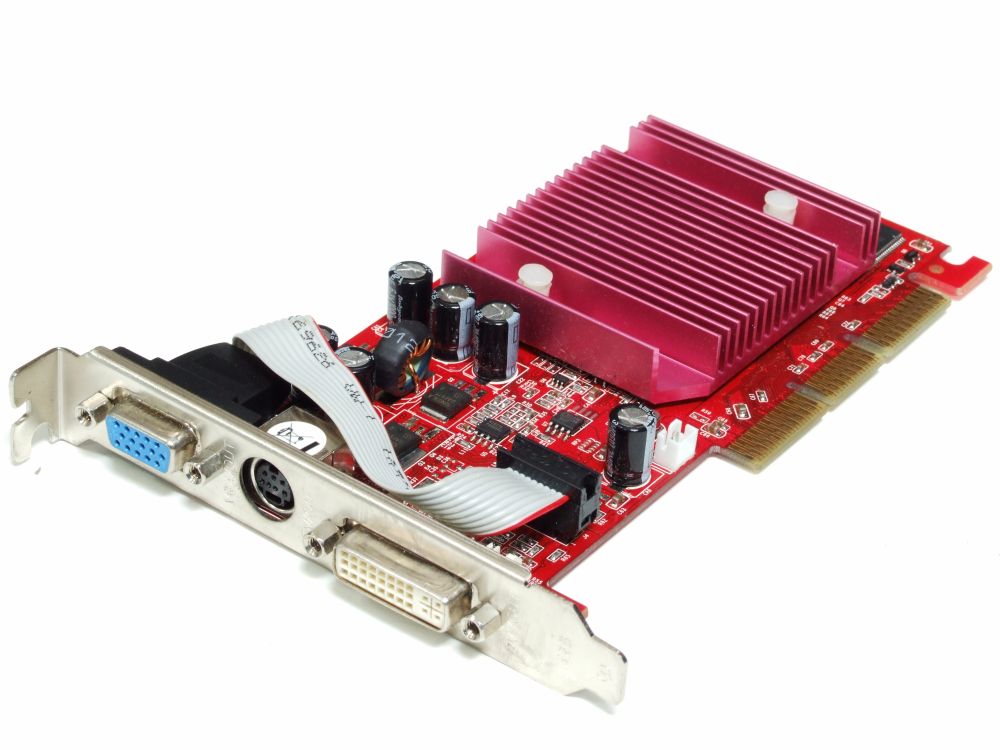

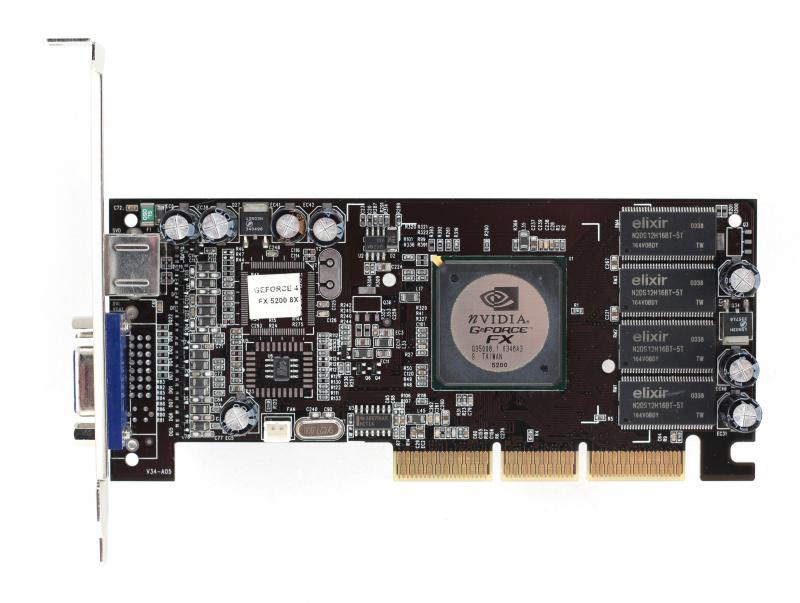

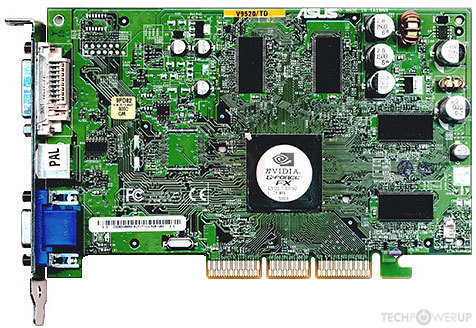

Video card Daytona GEF FX5200

Video cards from this manufacturer have always been characterized by low prices and mediocre workmanship.

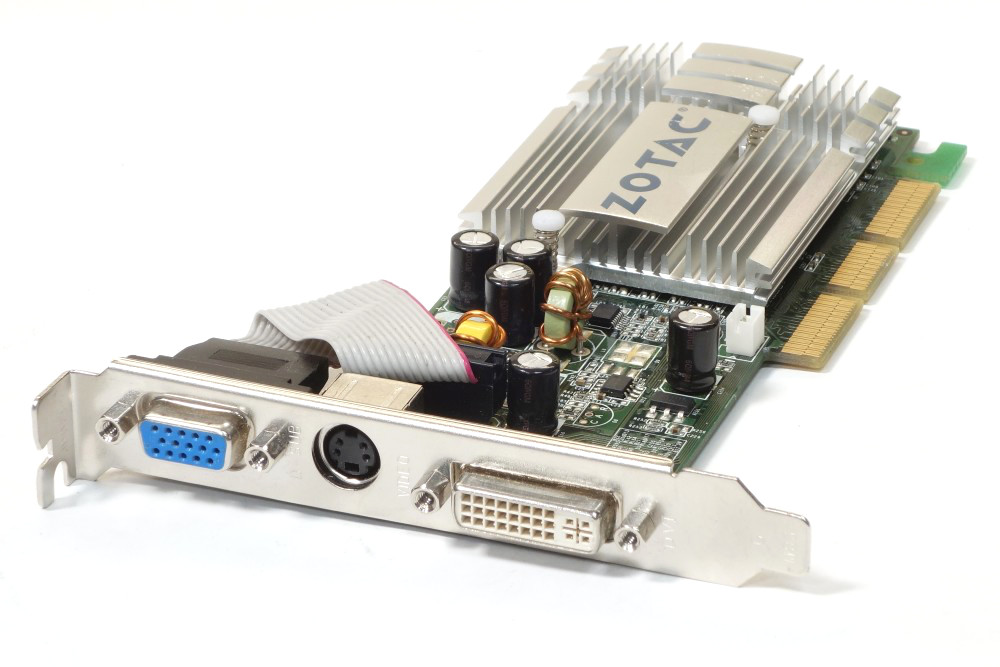

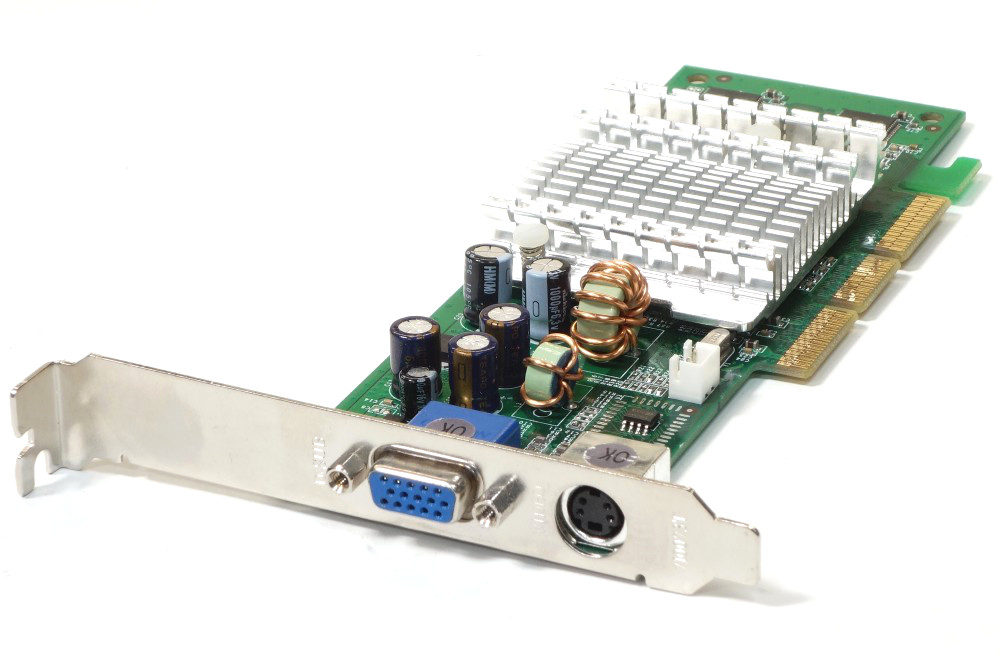

The video card has an AGP 2x/4x/8x interface. The layout is non-standard, which is not surprising: Daytona boards usually have their own layout and design. Cooling is standard and passive: a medium-sized pin heatsink.

The effectiveness of such cooling on this chip is debatable: the video card still got quite hot, and for overclocking we strongly recommend replacing the heatsink with a proper fan. The memory chips are not covered by heatsinks.

The video card has 128 MB of memory with a 6 ns access time. The memory, manufactured by PMI, is marked HP58C2128164SAT-6. This explains the lack of memory cooling: the slow 6 ns memory does not heat up.
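To put the 6 ns figure in perspective, it can be converted into a nominal clock rating. The sketch below is an approximation in Python and assumes that the quoted 6 ns is the chip's minimum clock cycle time, which is how such memory markings are commonly read; the function name is hypothetical.

```python
def rated_clock_mhz(cycle_time_ns):
    """Nominal clock in MHz for a given cycle time (assumed to be one clock period)."""
    return 1000.0 / cycle_time_ns


clock = rated_clock_mhz(6)
print(f"{clock:.1f} MHz real, {2 * clock:.1f} MHz effective DDR")
```

Under that assumption, 6 ns memory is rated for roughly 166 MHz, or about 333 MHz effective with DDR signalling.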

The Daytona GEF FX5200 core and memory frequencies are 250 MHz and 150 MHz (300 MHz DDR) respectively. Attention! The memory frequency of this board is lower than the latest nVidia recommendations call for (the recommended frequency is 200 MHz, or 400 MHz DDR).
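The cost of the lower memory clock can be estimated with simple arithmetic. The following Python sketch computes peak memory bandwidth as bus width in bytes times the effective transfer rate, using the 128-bit bus from the comparison table; the function name is ours, and the result is a theoretical peak rather than a measured value.

```python
def memory_bandwidth_gbs(bus_width_bits, effective_mhz):
    """Peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_mhz * 1e6 / 1e9


board = memory_bandwidth_gbs(128, 300)        # Daytona card: 150 MHz, 300 MHz DDR
recommended = memory_bandwidth_gbs(128, 400)  # nVidia recommendation: 200 MHz, 400 MHz DDR
shortfall = (1 - board / recommended) * 100
print(f"{board:.1f} GB/s vs {recommended:.1f} GB/s ({shortfall:.0f}% lower)")
```

On paper, then, this board gives up a quarter of the recommended memory bandwidth, which should be kept in mind when reading the test results.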

The card has a standard set of outputs: analog, digital (DVI) and TV-OUT. TV-OUT is implemented using the GeForce FX 5200 chip itself, as it already has integrated tools for implementing TV-OUT.

The card sometimes ships in a retail box, but the bundle is the same as in the OEM version: the Daytona GEF FX5200 video card and a driver disk.

Testing

| Test stand: | |

| Motherboard: | FIC AD11 (AMD 761+ VIA 686B) |

| Processor: | AMD Athlon XP 2000+ (Tbred) |

| Memory: | 512MB PC2100 NCP DDR SDRAM CL 2.0 |

| Hard disk: | Maxtor Fireball 3 30 GB; |

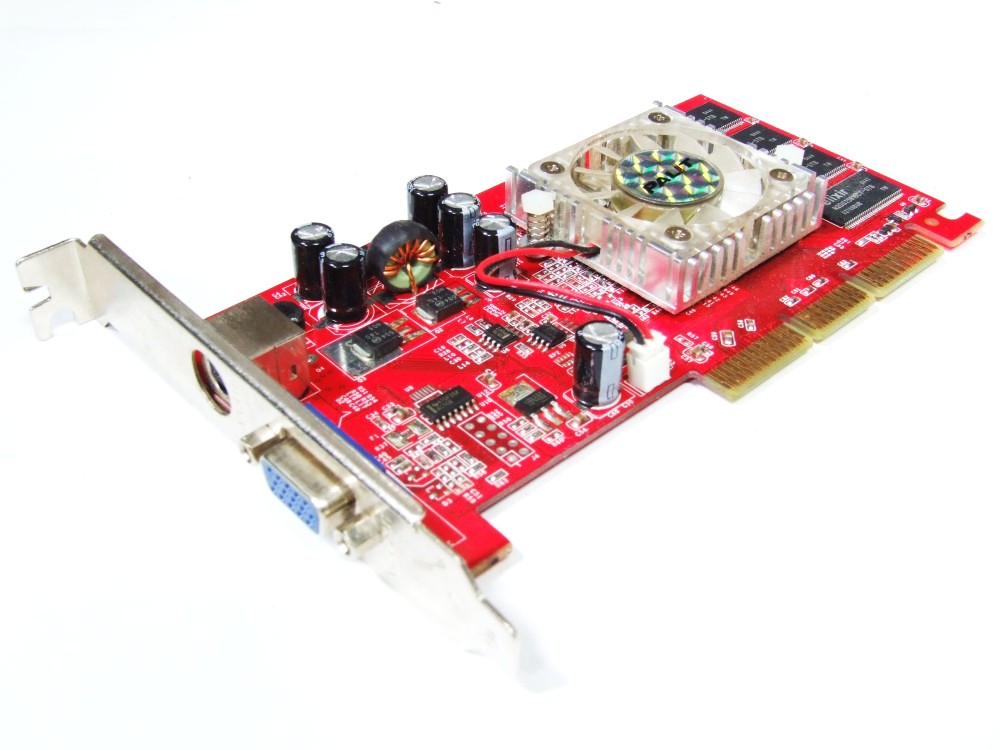

| Video cards: | Daytona GEF FX5200 (nVidia GeForce FX 5200) Chaintech (nVidia GeForce 4 MX480) Sapphire Radeon 9000 (ATI Radeon 9000) |

| Operating system, test programs and drivers | |

| OS | Microsoft Windows XP SP1 |

| Driver for ATI graphics cards: | Catalyst 3.1 |

| Driver for nVidia video cards: | Detonator 41.09 |

| Test programs: | — MadOnion 3DMark2001 SE; — MadOnion 3DMark2003; — Codecreatures; — Unreal Tournament 2003; — Serious Sam The Second Encounter (OpenGL). |

Test results

Unreal Tournament 2003

The FX 5200 loses significantly both to its competitor, the ATI Radeon 9000, and to its predecessor, the GeForce 4 MX480. The ATI Radeon 9000 and nVidia GeForce 4 MX480 show approximately the same results.

In the botmatch test the ranking stays the same. The gap between the nVidia GeForce FX and the ATI Radeon 9000 / nVidia GeForce 4 MX480 has narrowed but is still not small: 4-8 fps on average.

Serious Sam The Second Encounter

The nVidia GeForce FX lags behind the ATI Radeon 9000 and loses quite significantly to the nVidia GeForce 4 MX480. The nVidia GeForce 4 MX480 wins this test, finishing well ahead of its competitor, the ATI Radeon 9000.

3D Mark 2001 SE

nVidia GeForce FX lags behind ATI Radeon 9000 and loses to nVidia GeForce 4 MX480.

3D Mark 2001 detailed results

Game Test 4 — Nature

Test results for the nVidia GeForce FX are almost identical to those of the ATI Radeon 9000. The nVidia GeForce 4 MX480 cannot run this test because it lacks pixel shader support.

Fill rate

The nVidia GeForce FX 5200 clearly loses the fill rate tests, which made us doubt that FX 5200 based video cards really use a 2×2 pixel pipeline design.

High poly count — 8 lights

The results are clearly not in favor of the nVidia GeForce FX 5200.

Vertex program speed

Here the ranking is the same: 1st place for the nVidia GeForce 4 MX480, 2nd for the ATI Radeon 9000 and 3rd for the nVidia GeForce FX 5200.

Pixel program speed

The nVidia GeForce FX 5200 does much better with pixel programs.

Speed of advanced pixel programs

There is no confusion with the results here: the nVidia GeForce FX 5200 is very far behind its competitor, the ATI Radeon 9000. The fact is that video cards optimized for DirectX 9 support pixel programs version 1.4; if a card does not support version 1.4, this test uses pixel programs version 1.1, which require more passes. It is not yet clear what causes this result: the driver or the 3DMark test package itself.

Codecreatures

This benchmark uses DirectX 8.1 generation pixel programs, and the nVidia GeForce FX handles them better than the ATI Radeon 9000. The gap is not large, but it is there. The nVidia GeForce 4 MX480 cannot run this test at all, as it does not support pixel programs.

Codecreatures — average number of polygons

These test results let you compare the average number of polygons per second.

Image quality

The nVidia GeForce FX 5200 is slightly behind the nVidia GeForce 4 MX480; things would have looked much better if the nVidia GeForce FX 5200 had not been stripped of the IntelliSample engine. ATI Radeon 9000 test results could not be captured due to ATI driver issues with Unreal Tournament 2003.

Conclusion

The GeForce FX 5200 leaves a mixed impression. On the one hand, its performance is no better than that of the GeForce4 MX, and is sometimes much lower than that of its competitors and its predecessor.

On the other hand, it offers a low price of ~$90 (although the price should be lower still, since cards based on this graphics chip can really compete only with the GeForce4 MX and RADEON 9000), good DirectX 9 optimizations and excellent work with pixel programs. There were some incidents, though, such as the test results in the advanced pixel programs scene; most likely these are driver problems that will be fixed soon.

In general, the FX 5200 is the previous MX with extra functionality in the form of DX9 support. But who will buy a weak video card for demanding DX9 games that do not yet exist, only to end up watching a slideshow on it? Gamers don't need it, since they need more serious solutions, and everyone else doesn't really need DX9 support.