Gigabyte GeForce RTX 2080 Ti Gaming OC Review

Manufacturer: Gigabyte

UK price (as reviewed): £1,109.99 (inc. VAT)

US price (as reviewed): Currently unavailable

As manufacturers come around to the idea that RGB is pointless or at least very questionable without a neutral backdrop to act as a “blank canvas”, components are starting to become pretty uniform aesthetically speaking. The first wave of Threadripper boards were quite an obvious indication of this, and now the same is coming true for graphics cards. Take the RTX 2080 Ti Gaming OC from Gigabyte here, for instance, and compare it to the RTX 2080 Duke OC from MSI or the RTX 2080 Ti Amp Edition from Zotac. Still, skin-deep similarities do not mean much, so it’s worth seeing what features partners are bringing to the table for the RTX cards, especially as the new generation of Founders Edition cards are themselves very respectable.

This is currently Gigabyte’s most premium GPU, as it is the higher-specced of the two RTX 2080 Ti cards in its lineup. Like many RTX 2080 Ti cards, stock for this SKU is very thin on the ground, with the vast majority of stores only accepting pre-orders at the time of writing, and prices could easily vary at the same store by £100 or more within a day or two. As such, we’ve fallen back on the MSRP, which is £1,099, i.e. the same as the Founders Edition (also out of stock). This puts it £100 above the MSRP for reference boards, but most MSRPs are pretty meaningless for this GPU right now. Regardless, for £1,100 it’s a real shame not to see an all-metal shroud, with Gigabyte instead relying as usual on plastic. It is solid enough, but it feels like a pretty standard graphics card next to the premium craftsmanship of the FE cards. The metal backplate, meanwhile, is certainly welcome, but it’s plain and unexciting, and you don’t get a matching cover for the NVLink SLI connector either.

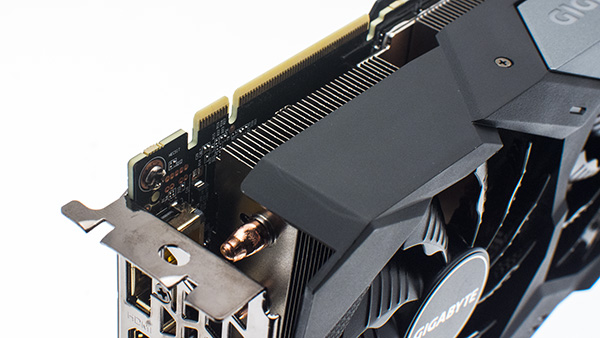

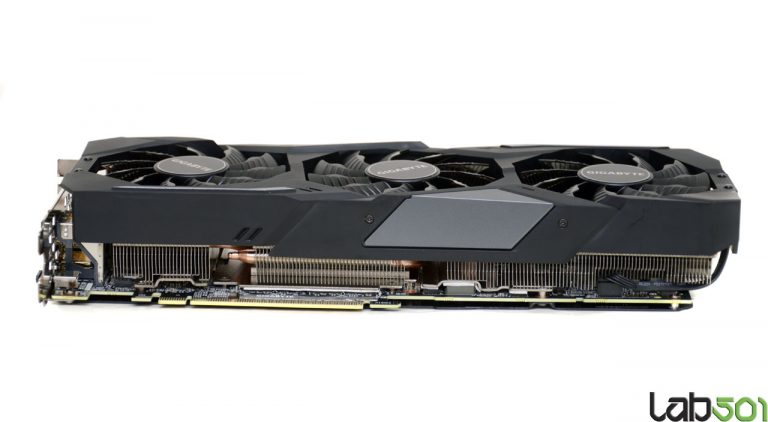

The cooler extends the card’s length beyond the PCB edge a little to a total of 287mm, which actually isn’t that long by today’s standards, though this overhang is not covered by the backplate. It’s a 2.5-slot design (triple-slot, effectively speaking), but the height of the card is standard. No anti-sag mechanism is supplied, though it doesn’t need it as much as some even bulkier cards do.

As you would hope, the RTX 2080 Ti Gaming OC comes with a factory overclock. Specifically, the card ships with a boost clock of 1,650MHz, a mere 15MHz more than the FE but 105MHz more than any cards that happen to have reference clocks. With software, you can activate the card’s OC Mode, which raises the boost clock to 1,665MHz, but this is not how the card runs out of the box. As usual, the 11GB of GDDR6 memory is left at the default speed of 14Gbps in both modes.

The display connectors here are in keeping with the reference design, and more than suitable for a premium GPU in 2018.

Likewise, the dual eight-pin PCIe plugs are what you get on reference and Founders Edition designs. Combined with the PCIe slot, you get 375W of peak available power, so there’s no need for anything more. The plugs are top-mounted and easy to access, and there are white LEDs that will blink in case of abnormal power supply; when everything is okay they stay off. A cable that converts two six-pin PCIe connectors to one eight-pin PCIe plug is supplied, and the cabling is all black for a consistent finish.
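The 375W figure is the sum of the nominal limits in the PCI Express specifications: 75W from the x16 slot and 150W per eight-pin connector (a six-pin connector is rated at 75W). A minimal sketch of that sum, assuming those nominal ratings; the function and dictionary names are illustrative only:

```python
# Nominal power limits from the PCI Express specifications, in watts.
SLOT_W = 75
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def peak_board_power(connectors):
    """Slot limit plus the nominal rating of each auxiliary PCIe connector."""
    return SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


# RTX 2080 Ti Gaming OC: dual eight-pin plugs
print(peak_board_power(["8-pin", "8-pin"]))  # 375
```

The same arithmetic gives 300W for the eight-pin plus six-pin arrangement Gigabyte uses on its RTX 2080 Gaming OC 8G. These are nominal delivery limits, not measured power draw.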

Also along the top edge is the only area that gets RGB lighting: the Gigabyte logo. This is supported by the RGB Fusion ecosystem, although when we tried it on our system we could only pick a static colour, and the “off” setting refused to work. It’s advertised as supporting extra colour modes, so we suspect this is a software bug.

The Windforce 3X cooler uses three 11-blade 82mm fans, with the central one spinning in the opposite direction to the other two, which Gigabyte claims reduces turbulence, the idea being that where one fan edge meets another, airflow will be in the same direction rather than opposing. The fan blades have a triangular edge to “split” airflow, while grooves on the outer surface are designed to guide the air down in an even spread. The fans also support switching off under a certain temperature, so you can expect silence when the GPU is idle or under low load only.


The cooler itself relies on six 6mm copper heat pipes. Sadly, these do not have a nickel coating, but that’s more of an aesthetic bonus. They all make direct contact with the GPU and twist and turn in order to feed the three separate aluminium fin stacks. Additionally, contact plates that are bonded directly to the fins ensure that all VRM components and memory packages are directly cooled via thermal pads, which are also used to help dissipate some heat to the backplate.

The PCB is pretty much a clone of reference models that are used on the Founders Edition cards, although Gigabyte claims to have certified the chokes and capacitors on the 13+3 phase power system as ‘Ultra Durable’.

While the standard warranty with this card is three years and thus in line with the FE variant, Gigabyte allows customers to extend it to four years free of charge if they simply register the card within 30 days of purchase.

- Graphics processor Nvidia GeForce RTX 2080 Ti, 1,350MHz (1,650MHz boost) (1,365MHz/1,665MHz in OC Mode)

- Pipeline 4,352 stream processors, 544 Tensor Cores, 68 RT Cores, 272 texture units, 88 ROPs

- Memory 11GB GDDR6, 14Gbps effective

- Bandwidth 616GB/sec, 352-bit interface

- Compatibility DirectX 12, Vulkan, OpenGL 4.5

- Outputs 3 x DisplayPort 1.4a, 1 x HDMI 2.0b, 1 x USB-C VirtualLink

- Power connections 2 x eight-pin PCIe, top-mounted

- Size 287mm long, 115mm tall, 50.2mm thick (~2.5-slot)

- Warranty Three years (user-extendable to four years if registered within 30 days of purchase)
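The 616GB/sec bandwidth figure follows directly from the memory specs: each of the 352 data lines moves 14 gigabits per second, and dividing by eight converts bits to bytes. A minimal sketch of that arithmetic (the function name is ours, for illustration only):

```python
def memory_bandwidth_gbs(data_rate_gbps, bus_width_bits):
    """Peak bandwidth in GB/s: per-pin data rate x bus width / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


# RTX 2080 Ti: 14Gbps GDDR6 on a 352-bit interface
print(memory_bandwidth_gbs(14, 352))  # 616.0
```

The same formula explains why a 256-bit card with identical 14Gbps GDDR6, such as the RTX 2080, tops out at 448GB/sec.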


Gigabyte GeForce RTX 2080 Gaming OC 8G Review: Turing Goes Semi-Passive

Tom’s Hardware Verdict

Gigabyte’s GeForce RTX 2080 Gaming OC 8G offers great performance at 2560×1440 on high-refresh displays, the luxury of a semi-passive fan mode, and a quiet cooling solution. Now that it costs less than Nvidia’s sturdily-built Founders Edition model, we’re willing to consider it a viable alternative for enthusiasts looking to save some money.


Pros

- Runs cooler than Nvidia’s Founders Edition design
- Higher GPU Boost rating than Nvidia’s Founders Edition design
- Semi-passive fan mode facilitates silence and low power consumption at idle
- Configurable lighting via bundled RGB Fusion app
- Lowest price among third-party GeForce RTX 2080s


Gigabyte GeForce RTX 2080 Gaming OC 8G Review

Update, 11/23/18: Due to depleted inventory of GeForce GTX 1080 Ti cards and falling prices on third-party Turing-based models, we are revisiting our impressions of Gigabyte’s GeForce RTX 2080 Gaming OC 8G and updating value comparisons throughout the review.

Just weeks after the launch of Nvidia’s GeForce RTX 2080 and 2080 Ti cards, previous-gen 1080 Ti boards based on the Pascal architecture have almost completely disappeared.

Perhaps that’s alright, though. The least-expensive GeForce RTX 2080 now sells for $750 (£585 in the UK), which is still higher than the price Nvidia told us to expect when its Turing-based line-up debuted in the U.S., but substantially less expensive in the UK. And the Gigabyte GeForce RTX 2080 Gaming OC 8G we’re reviewing today even includes a copy of Battlefield V. Clearly, Nvidia’s board partners are trying harder to drum up interest in GeForce RTX 2070 and 2080 (the 2080 Ti remains woefully overpriced).

When we originally published our review of the GeForce RTX 2080 Gaming OC 8G, it was priced around $830 (£750 in the UK). In the weeks that followed, however, it fell to $750 (£585 in the UK). Those prices are far more attractive compared to Nvidia’s own Founders Edition design. After all, Gigabyte offers a highly-capable Windforce 3X thermal solution, a semi-passive fan mode for absolute silence at idle, configurable lighting, and a four-year warranty.


Meet The GeForce RTX 2080 Gaming OC 8G

Despite the GeForce RTX 2080 Gaming OC 8G’s commanding size, it’s not that heavy a graphics card. Nvidia’s Founders Edition weighs 2.8 pounds (1.3 kg), and the Zotac Gaming GeForce RTX 2080 AMP comes in at 2.5 pounds (1.2 kg). Meanwhile, our scale claims the Gigabyte card weighs just 2.12 pounds (0.98 kg). Less heft usually means a lighter heat sink cooling the GPU, but good fans can help counter a lack of mass.

The GeForce RTX 2080 Gaming OC 8G measures 11.3 x 4.4 x 2 inches (28.7 x 11.3 x 5 cm), meaning it occupies three expansion slots’ worth of space on your motherboard (along with a bit of room above/behind the card due to its backplate). For some enthusiasts, this isn’t an issue. The extra width is used for a taller heat sink, which improves cooling. Gamers with smaller cases or multiple GPUs will have a harder time accommodating such a configuration though, especially when it exhausts waste heat out the card’s top and down toward the motherboard through vertically-oriented fins.
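The three-slot figure can be sanity-checked against the standard 0.8-inch (20.32mm) spacing between expansion slots. This sketch makes a simplifying assumption: it divides the card’s total thickness by the slot pitch and ignores the exact offset of the PCB and bracket, so treat it as a rough estimate. The function name is illustrative.

```python
import math

SLOT_PITCH_MM = 20.32  # 0.8-inch spacing between adjacent expansion slots


def slots_occupied(thickness_mm):
    """Return (fractional slot width, whole slots the card blocks)."""
    fractional = thickness_mm / SLOT_PITCH_MM
    # Any slot the cooler intrudes into, even partially, is unusable.
    return round(fractional, 2), math.ceil(fractional)


print(slots_occupied(50))  # (2.46, 3)
```

At 5cm, the card spans just under two and a half slot pitches, and because a partially covered slot can’t host another card, it effectively blocks three.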


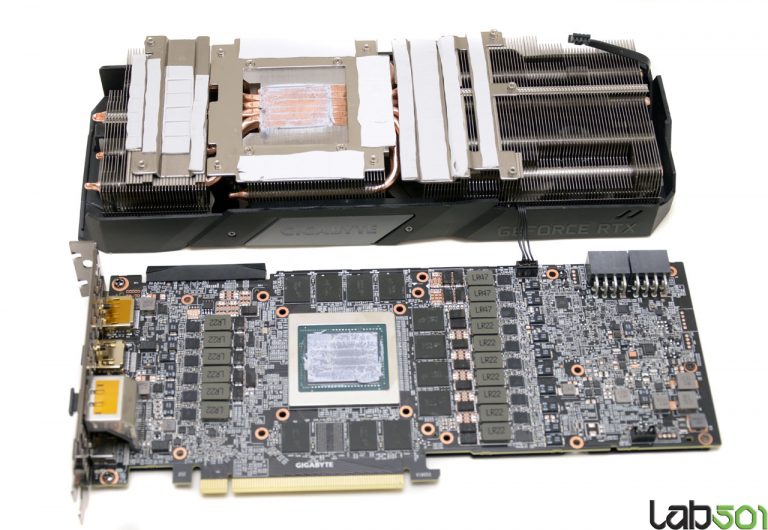

Gigabyte uses plastic gratuitously across its shroud, which houses three 82mm fans. These fans blow down through an array of aluminum fins split into three sections. The section closest to the display outputs sits up over the PCB. It doesn’t make contact with any on-board components, but rather helps dissipate thermal energy from four heat pipes touching the TU104 processor. The middle section rests on top of Nvidia’s GPU. Six pipes cross through it. Below the fins and heat pipes, a plate mounts to the PCB. Thermal pads between it and the GDDR6 modules help cool Micron’s memory. The third section is the largest, extending from the power circuitry out over the PCB’s back edge by almost a centimeter. It also has four pipes passing through, along with a shaped metal plate underneath drawing heat from the VRMs through pads.

The trio of fans, the six copper heat pipes, and the direct-touch sink combine to form what Gigabyte calls its Windforce 3X cooling system. As part of this system, the outside fans spin counter-clockwise, while the middle fan rotates clockwise. Gigabyte claims this keeps turbulence to a minimum, since adjacent fans push less opposing airflow against each other. Then, at idle, the fans stop spinning altogether through a feature Gigabyte calls 3D Active Fan. Enthusiasts who prefer lower idle temperatures can disable 3D Active Fan through Gigabyte’s Aorus Engine software. Frankly, the fans make so little noise at idle that we’d prefer to keep them spinning (even though the semi-passive mode is one of this card’s competitive advantages).
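Gigabyte doesn’t publish how 3D Active Fan decides when to stop, so the sketch below is a generic semi-passive controller, not Gigabyte’s firmware. Every threshold in it (60°C start, 50°C stop, 30% minimum duty, 100% at 85°C) is a hypothetical value chosen for illustration. The key idea is hysteresis: separate start and stop temperatures keep the fans from toggling on and off around a single threshold.

```python
from dataclasses import dataclass


@dataclass
class SemiPassiveFan:
    """Hypothetical semi-passive fan controller. All thresholds are illustrative
    assumptions, not Gigabyte's actual 3D Active Fan values."""
    start_c: float = 60.0   # assumed: fans spin up at or above this GPU temperature
    stop_c: float = 50.0    # assumed: fans stop at or below this temperature
    max_c: float = 85.0     # assumed: temperature at which duty reaches 100%
    min_duty: int = 30      # assumed: lowest duty cycle (%) once the fans spin
    enabled: bool = True    # False mimics switching the fan-stop feature off
    spinning: bool = False

    def duty(self, temp_c: float) -> int:
        """Return the fan duty cycle (%) for the current GPU temperature."""
        if not self.enabled or temp_c >= self.start_c:
            self.spinning = True
        elif temp_c <= self.stop_c:
            self.spinning = False
        # Between stop_c and start_c the previous state is kept (hysteresis).
        if not self.spinning:
            return 0
        # Linear ramp from min_duty at stop_c to 100% at max_c, clamped.
        fraction = (temp_c - self.stop_c) / (self.max_c - self.stop_c)
        level = self.min_duty + fraction * (100 - self.min_duty)
        return int(min(100, max(self.min_duty, level)))


fan = SemiPassiveFan()
print([fan.duty(t) for t in (40, 55, 65, 55, 45)])
```

In that run, the fans stay off at 40°C and 55°C, spin up at 65°C, keep spinning when the GPU cools back to 55°C, and stop again at 45°C. Setting `enabled=False` keeps them at or above the minimum duty at all times, which is the trade-off the review describes when disabling 3D Active Fan.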

The Nvidia and Zotac cards we already reviewed are big and heavy, justifying base plates that help keep both cards rigid. Gigabyte’s doesn’t weigh as much, so it doesn’t run quite the same risk of flexing in a mobile LAN box. However, the GeForce RTX 2080 Gaming OC 8G’s thermal solution moves a lot more relative to its PCB when you press on one side of the fan shroud or the other.

Gigabyte does add a metal plate to the back of its Gaming OC card. Seven screws keep it pinned up against the PCB, with thick chunks of thermal pad behind the GDDR6 memory and power circuitry helping circumvent hot-spots.

Up top, Gigabyte’s GeForce RTX 2080 Gaming OC 8G hosts a single NVLink connection covered by a thin piece of plastic, a company logo in the middle, and GeForce RTX branding on the other end. Downloadable RGB Fusion software allows you to control the color and effect of LED back-lighting the Gigabyte logo, similar to what we saw on Zotac’s Gaming GeForce RTX 2080 AMP. Eight- and six-pin power connectors are rotated 180 degrees to avoid conflict with the form-fitted heat sink, and special white LEDs mounted to the PCB light up to tell you if something is wrong with the auxiliary power. We only saw these illuminate at boot.

Display outputs on Gigabyte’s card match the GeForce RTX 2080 Founders Edition: you get three full-sized DisplayPort 1.4 connectors, one HDMI 2.0 port, and VirtualLink support via USB Type-C. A fairly free-flowing grille isn’t functionally significant, unfortunately, since the cooler’s fins move air perpendicular to the bracket.


| | GeForce RTX 2080 Ti FE | Gigabyte GeForce RTX 2080 Gaming OC 8G | GeForce RTX 2080 FE | GeForce GTX 1080 Ti FE |
|---|---|---|---|---|
| Architecture (GPU) | Turing (TU102) | Turing (TU104) | Turing (TU104) | Pascal (GP102) |
| CUDA Cores | 4352 | 2944 | 2944 | 3584 |
| Peak FP32 Compute | 14.2 TFLOPS | 10.7 TFLOPS | 10.6 TFLOPS | 11.3 TFLOPS |
| Tensor Cores | 544 | 368 | 368 | N/A |
| RT Cores | 68 | 46 | 46 | N/A |
| Texture Units | 272 | 184 | 184 | 224 |
| Base Clock Rate | 1350 MHz | 1515 MHz | 1515 MHz | 1480 MHz |
| GPU Boost Rate | 1635 MHz | 1815 MHz | 1800 MHz | 1582 MHz |
| Memory Capacity | 11GB GDDR6 | 8GB GDDR6 | 8GB GDDR6 | 11GB GDDR5X |
| Memory Bus | 352-bit | 256-bit | 256-bit | 352-bit |
| Memory Bandwidth | 616 GB/s | 448 GB/s | 448 GB/s | 484 GB/s |
| ROPs | 88 | 64 | 64 | 88 |
| L2 Cache | 5.5MB | 4MB | 4MB | 2.75MB |
| TDP | 260W | 225W | 225W | 250W |
| Transistor Count | 18.6 billion | 13.6 billion | 13.6 billion | 12 billion |
| Die Size | 754 mm² | 545 mm² | 545 mm² | 471 mm² |
| SLI Support | Yes (x8 NVLink, x2) | Yes (x8 NVLink) | Yes (x8 NVLink) | Yes (MIO) |
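The Peak FP32 Compute row follows from two other rows in the table: each CUDA core can complete one fused multiply-add (counted as two floating-point operations) per clock, so peak throughput is cores × 2 × GPU Boost clock. A minimal Python sketch, assuming that standard two-FLOPs-per-core-per-clock convention; the dictionary and function names are illustrative:

```python
# (CUDA cores, GPU Boost clock in MHz), taken from the comparison table
cards = {
    "GeForce RTX 2080 Ti FE": (4352, 1635),
    "Gigabyte GeForce RTX 2080 Gaming OC 8G": (2944, 1815),
    "GeForce RTX 2080 FE": (2944, 1800),
    "GeForce GTX 1080 Ti FE": (3584, 1582),
}


def peak_fp32_tflops(cuda_cores, boost_mhz):
    """Cores x 2 FLOPs (one FMA) x clock in MHz gives MFLOPS; / 1e6 gives TFLOPS."""
    return cuda_cores * 2 * boost_mhz / 1e6


for name, (cores, mhz) in cards.items():
    print(f"{name}: {peak_fp32_tflops(cores, mhz):.1f} TFLOPS")
```

Rounded to one decimal place, this reproduces the table’s 14.2, 10.7, 10.6, and 11.3 TFLOPS. It also shows why Gigabyte’s 15 MHz GPU Boost bump over the Founders Edition translates into only about a 0.1 TFLOPS difference on paper.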

What lives under the GeForce RTX 2080 Gaming OC 8G’s hood is already well-known. We dug deep into the TU104 graphics processor and its underlying architecture in Nvidia’s Turing Architecture Explored: Inside the GeForce RTX 2080. Gigabyte takes the same graphics processor with 2,944 of its CUDA cores enabled and bumps the typical GPU Boost rating up slightly to 1,815 MHz in Gaming mode and 1,830 MHz in OC mode (versus the Founders Edition card’s 1800 MHz). Eight gigabytes of GDDR6 memory move data at 14 Gb/s, matching Nvidia’s reference design. As you might expect, then, performance comparisons between the two models fall within a single-digit percentage variance.


How We Tested Gigabyte’s GeForce RTX 2080 Gaming OC 8G

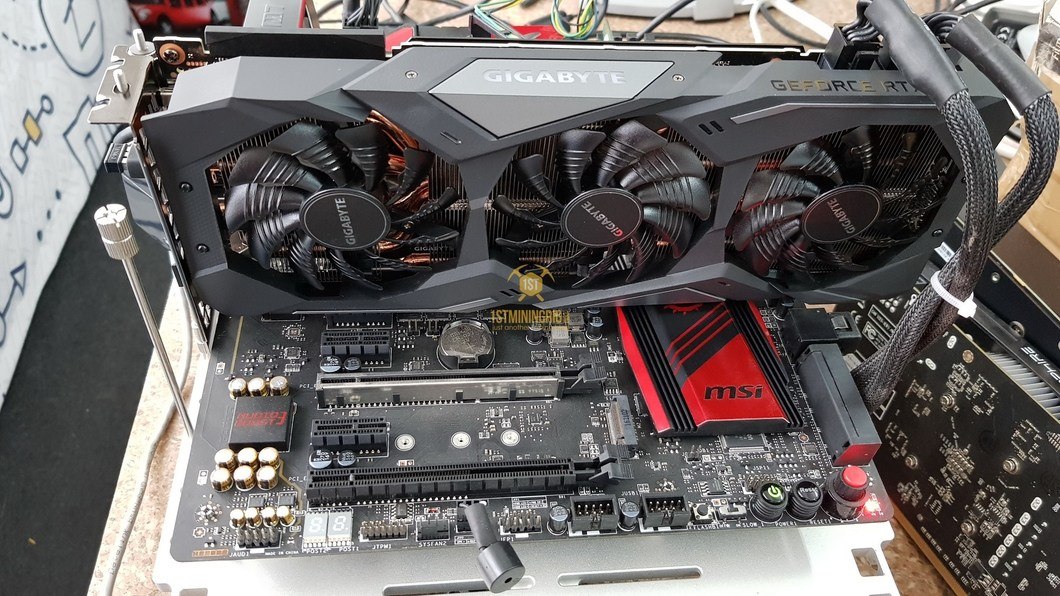

While many users will attach Gigabyte’s card to a system with the latest Intel or AMD processor, our graphics station still employs an MSI Z170 Gaming M7 motherboard with an Intel Core i7-7700K CPU at 4.2 GHz. The processor is complemented by G.Skill’s F4-3000C15Q-16GRR memory kit. Crucial’s MX200 SSD remains, joined by a 1.4TB Intel DC P3700 loaded down with games.

As far as competition goes, we can assume that GeForce RTX 2080 and all of the partner boards based on the same design are bested by GeForce RTX 2080 Ti and Titan V, both of which we have in our test pool. We also compare GeForce GTX 1080 Ti, Titan X, GeForce GTX 1080, GeForce GTX 1070 Ti, and GeForce GTX 1070 from Nvidia. AMD is represented by the Radeon RX Vega 64 and 56.

Our benchmark selection now includes Ashes of the Singularity: Escalation, Battlefield 1, Civilization VI, Destiny 2, Doom, Far Cry 5, Forza Motorsport 7, Grand Theft Auto V, Metro: Last Light Redux, Rise of the Tomb Raider, Tom Clancy’s The Division, Tom Clancy’s Ghost Recon Wildlands, The Witcher 3, and World of Warcraft: Battle for Azeroth.

The testing methodology we’re using comes from PresentMon: Performance In DirectX, OpenGL, And Vulkan. In short, these games are evaluated using a combination of OCAT and our own in-house GUI for PresentMon, with logging via AIDA64.

All of the numbers you see in today’s piece are fresh, using updated drivers. For Nvidia, we’re using build 411.51 for GeForce RTX 2080 Ti and 2080. Zotac’s Gaming GeForce RTX 2080 AMP is tested on 411.70, while Gigabyte’s GeForce RTX 2080 Gaming OC 8G employs 416.34. The other cards were tested with build 398.82. Titan V’s results were spot-checked with 411.51 to ensure performance didn’t change. AMD’s cards utilize Crimson Adrenalin Edition 18.8.1, which was the latest at test time.


Interestingly, there is a bug in The Witcher 3 that was introduced a couple of builds ago. It causes flickering through our benchmark scene, where the background appears to go white and come back. This issue doesn’t seem to affect performance, but it’s certainly distracting. Nvidia released a hotfix driver on October 28 to address it.


Chris Angelini is an Editor Emeritus at Tom’s Hardware US. He edits hardware reviews and covers high-profile CPU and GPU launches.

Gigabyte GeForce RTX 2080 Ti WindForce OC review

73 points

Gigabyte GeForce RTX 2080 Ti WindForce OC

Why is Gigabyte GeForce RTX 2080 Ti WindForce OC better than the average?

- GPU clock speed: 1350MHz vs 1279MHz
- Floating-point performance: 14.1 TFLOPS vs 9.47 TFLOPS
- Pixel rate: 142.6 GPixel/s vs 85.22 GPixel/s
- GPU memory speed: 1750MHz vs 1560.11MHz
- Effective memory speed: 14000MHz vs 10250.63MHz
- Texture rate: 440.6 GTexels/s vs 223.62 GTexels/s
- VRAM: 11GB vs 6.23GB
- Maximum memory bandwidth: 616GB/s vs 343.4GB/s


Performance

1. GPU clock speed: 1350MHz

The clock speed of the graphics processing unit (GPU); higher is better.

2. GPU turbo: 1620MHz

When the GPU is running below its limits, it can boost to a higher clock speed to increase performance.

3. Pixel rate: 142.6 GPixel/s

The number of pixels that can be rendered to the screen every second.

4. Floating-point performance: 14.1 TFLOPS

Floating-point performance is a measure of the GPU's raw processing power.

5. Texture rate: 440.6 GTexels/s

The number of textured pixels that can be rendered to the screen every second.

6. GPU memory speed: 1750MHz

The memory clock speed is one of the factors that determine memory bandwidth.

7. Shading units

Shading units (or stream processors) are small processors within the graphics card that handle different aspects of rendering the image.

8. Texture mapping units (TMUs)

TMUs take textures and map them to the geometry of a 3D scene. More TMUs typically mean that texture information is processed faster.

9. Render output units (ROPs)

ROPs handle some of the final steps of the rendering process, writing the final pixel data to memory and carrying out other tasks such as anti-aliasing to improve image quality.
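The pixel and texture rates listed above are simple products of unit counts and the 1620MHz GPU turbo clock: ROPs × clock for pixels, texture units × clock for texels. The comparison page omits the unit counts, so this sketch assumes the RTX 2080 Ti figures quoted earlier in this document (88 ROPs, 272 texture units). Names are illustrative.

```python
BOOST_MHZ = 1620  # GPU turbo figure listed above
ROPS = 88         # assumed: RTX 2080 Ti ROP count from the earlier spec list
TMUS = 272        # assumed: RTX 2080 Ti texture unit count from the same list


def fill_rate_g(units, clock_mhz):
    """Units x clock in MHz gives millions per second; / 1,000 gives billions."""
    return units * clock_mhz / 1000


print(f"Pixel rate: {fill_rate_g(ROPS, BOOST_MHZ):.1f} GPixel/s")     # 142.6
print(f"Texture rate: {fill_rate_g(TMUS, BOOST_MHZ):.1f} GTexels/s")  # 440.6
```

Both results match the listed 142.6 GPixel/s and 440.6 GTexels/s, which suggests the page derives these rates from the boost clock rather than measuring them.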

Memory

1. Effective memory speed: 14000MHz

The effective memory speed reflects how many data transfers the memory completes per clock; here, the 1,750MHz memory clock works out to 14,000MHz effective (1,750MHz × 8), i.e. 14Gbps per pin. Higher effective speeds can increase performance in games and other apps.

2. Maximum memory bandwidth: 616GB/s

This is the maximum rate at which data can be read from or written to memory.

3. VRAM: 11GB

VRAM (video RAM) is the dedicated memory of a graphics card. More VRAM generally allows you to run games at higher settings, especially for things like texture resolution.

4. Memory bus width: 352-bit

A wider bus can carry more data per cycle. It is an important factor in memory performance, and therefore in the general performance of the graphics card.

5. Version of GDDR memory

Newer versions of GDDR memory offer improvements such as higher transfer rates that increase performance.

6. Supports ECC memory: No

Error-correcting code memory can detect and correct data corruption. It is used when it is essential to avoid corruption, such as in scientific computing or when running a server.

Features

1. DirectX version

DirectX is used in games, with newer versions supporting better graphics.

2. OpenGL version

OpenGL is used in games, with newer versions supporting better graphics.

3. OpenCL version

Some apps use OpenCL to harness the graphics processing unit (GPU) for non-graphical computing. Newer versions introduce more functionality and better performance.

4. Supports multi-display technology: Yes

The graphics card supports multi-display technology, which allows you to configure multiple monitors for a more immersive gaming experience, such as a wider field of view.

5. Load GPU temperature: Unknown

A lower load temperature means that the card produces less heat and its cooling system performs better.

6. Supports ray tracing: Yes

Ray tracing is an advanced light rendering technique that provides more realistic lighting, shadows, and reflections in games.

7. Supports 3D: Yes

Allows you to view in 3D (if you have a 3D display and glasses).

8. Supports DLSS: Yes

DLSS (Deep Learning Super Sampling) is an AI-powered upscaling technology. It allows the graphics card to render games at a lower resolution and upscale them to a higher resolution with near-native visual quality and increased performance. DLSS is only available in select games.

9. PassMark (G3D) result: Unknown

This benchmark measures the graphics performance of a video card. Source: PassMark.

Ports

1. Has an HDMI output: Yes

Devices with an HDMI or mini HDMI port can transfer high-definition video and audio to a display.

2. HDMI ports

More HDMI ports mean that you can simultaneously connect numerous devices, such as video game consoles and set-top boxes.

3. HDMI version: HDMI 2.0

Newer versions of HDMI support higher bandwidth, which allows for higher resolutions and frame rates.

4. DisplayPort outputs

Allows you to connect to a display using DisplayPort.

5. DVI outputs

Allows you to connect to a display using DVI.

6. Mini DisplayPort outputs

Allows you to connect to a display using Mini DisplayPort.

Miscellaneous

1. Has USB Type-C: Yes

USB Type-C features reversible plug orientation and cable direction.

2. USB ports

With more USB ports, you can connect more devices.


Gigabyte RTX 2080 and RTX 2080 Ti Review

NVIDIA has just launched its GeForce RTX 20 series, introducing the RTX 2080 and RTX 2080 Ti. The launch comes at a time when silicon fabrication technology is no longer progressing at the pace it did about four years ago. That slowdown has disrupted the architecture roadmaps of giants like NVIDIA, pushing them to create innovative new architectures on existing process nodes.


Brute-force increases in transistor count are no longer a readily available option, so NVIDIA needed a truly notable feature to sell new GPUs. That feature is RTX technology, which is so important to the brand that it changed the naming of its consumer graphics cards from GeForce GTX to GeForce RTX with the launch of the GeForce RTX 20 Series.

While Gigabyte’s RTX 20 series lineup is arriving quickly, its AORUS series has seen a short delay. Today, though, we’re going to check out two of its extreme products: the RTX 2080 and the RTX 2080 Ti. Both feature a factory overclock on the memory and GPU, along with triple-fan coolers.

With ray tracing and DLSS support, the new Turing architecture, and a powerful performance boost, the RTX 2080 and RTX 2080 Ti are certainly exciting. Today, we’re going to see what these cards have to offer and whether they belong in your PC build.

Gigabyte RTX 2080 and RTX 2080 Ti Review – What’s in the Box?

Gigabyte RTX 2080

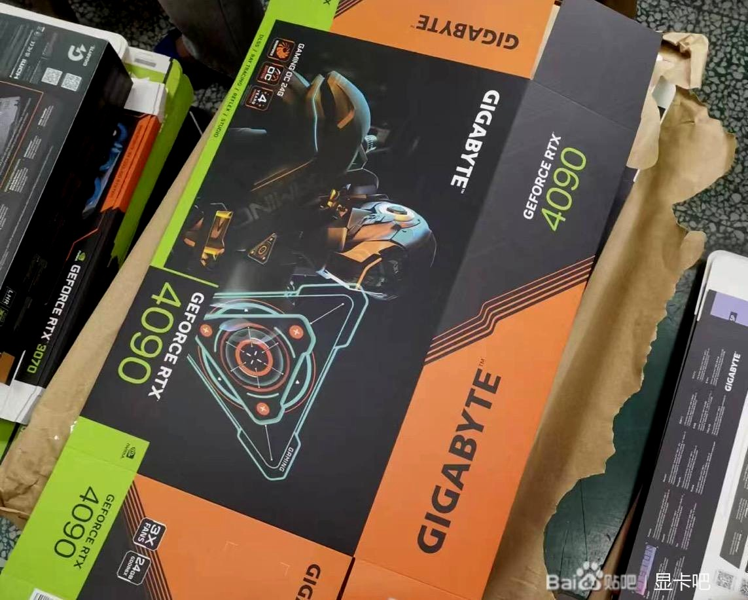

The RTX 2080 comes in glossy black packaging with a robot’s mechanical eye printed on the front. In the corner, you will find a summary of the product’s key features. One side of the box gives a few details about the extended warranty you get when you register with AORUS Care online.

The back of the box shows more details and photos of the RTX 2080’s highlights, such as its triple-fan setup with the middle fan rotating in the opposite direction. According to Gigabyte, this unifies the direction of airflow between adjacent fans, reducing turbulence and giving wider cooling coverage. Other highlights are one-click Super Overclocking and compatibility with AORUS Engine. The side of the GPU shroud also carries the RGB-lit GIGABYTE logo.


When you open the box, you will find the RTX 2080 itself together with utility and driver software and a quick-start guide. The larger black leaflet explains the additional year added to the product’s 3-year warranty. We think this is a nice touch.

Gigabyte RTX 2080 Ti

Let’s begin with the packaging of the RTX 2080 Ti. It comes in a rather large cardboard box, which is actually bigger than those of the other cards in the RTX 20 series.

The front of the box features a huge GeForce RTX logo, with the AORUS logo at the top left. You’ll also see the AORUS Xtreme series logo right at the center.

The company put a lot of emphasis on the packaging, especially on the RTX side, where the first feature listed by AIBs is ray tracing, followed by GDDR6, DirectX 12, and Ansel support. NVIDIA has bet the future of its GPUs on ray tracing. The package also highlights other features like RGB Fusion support, 8 GB of GDDR6, Windforce cooling, and the 4-year warranty.


The back of the package features the typical information and highlights: the primary features as well as the card’s specifications. The main features of AORUS’s top-notch custom cards are their excellent performance, made possible by a fully custom design, along with the lighting system, the Stack x3 fans, the Windforce cooling, and the new angular fin heatsink. The latter offers better cooling performance than the classic flat-surfaced fin heatsinks most people know.

The packaging also points buyers to GeForce.com, where you can easily download the newest drivers as well as the GeForce Experience application. These are vital for every gamer who wants to access the full feature set of a brand-new card.


On the sides of the package, you’re again greeted by large GeForce RTX branding. There’s also a mention of the 8 GB of GDDR6 memory available on these cards.

The increased bandwidth of the new GDDR6 interface should greatly boost gaming performance at higher resolutions compared with GDDR5X- and GDDR5-based graphics cards.

Now that we’re done with the outer portion of the package, it’s time for us to check the good stuff and see what’s inside the box of the RTX 2080 Ti. Within the box is another box that comes with a dotted texture plus a reflective AORUS logo at its center. AORUS is obviously aiming for the more premium and sophisticated look with this specific product, and we’re not complaining. It really does look nice and appealing.

Here, the accessory package and graphics card are both held in place by a sturdy foam container. The card comes with a couple of manuals and accessories that might not be useful to hardcore enthusiasts but will prove handy for regular gamers. The box includes the following accessories: the AORUS VGA Holder, an AORUS Metal Sticker, a quick guide, an I/O guide, the 4-year warranty registration, and a driver CD.


The Product

Gigabyte RTX 2080 and RTX 2080 Ti Review

RTX 2080

The RTX 2080's appearance is clearly inspired by the previously released G1 Gaming GTX 1080. It's made of plastic with a matte black finish, with some sections in grey. Unlike other Gigabyte cards, it has no orange accents, and the grey and black theme makes it easy to match with the colors of the rest of your build.

The triple-fan setup is part of Gigabyte's Windforce 3X cooling solution. Each fan is 82 millimeters in diameter, and as mentioned earlier, the center fan rotates in the opposite direction to the other two.


To remove the heatsink from the PCB, all you have to do is take out the seven screws on the back. Once the PCB is exposed, you'll see that it follows NVIDIA's reference design, retaining the same 8+2 power phase layout as the Founders Edition card. The eight GDDR6 chips are made by Micron.

The cooler, however, is completely different from the Founders Edition's. It uses six 6 mm copper heatpipes that contact the GPU core, and a large aluminum fin array makes up the bulk of the cooler. A cold plate with thermal pads is also present for the MOSFETs and the VRAM chips.

On the front edge of the card is a small Gigabyte logo illuminated by RGB LEDs, which you can configure with the RGB Fusion software. At the back is a full-length metal backplate that looks and feels like anodized aluminum, with Gigabyte's logo printed in white. This logo is not an RGB zone, so the back is kept simple.


Gigabyte's RTX 2080 is built on the Turing TU104 GPU, and the series is designed to succeed the GeForce GTX 1080. The card is fully custom and fitted with Gigabyte's latest Windforce 3X cooler.

Once again, the cooler uses direct-touch heatpipes, meaning the heatpipes sit directly on top of the GPU. The Windforce 3X cooler also carries an RGB LED logo on its top edge that can be animated and color-controlled.

RTX 2080 Ti

The card's thickness is split fairly evenly between the fan shroud and the heatsink. It's mostly matte black plastic, accented with silver swoops and gleaming black edges.

Beneath its decorative cover, three 100 mm fans overlap one another along the length of the cooler. Because the center fan spins in the opposite direction to its neighbors, Gigabyte says its Windforce Stack 3X system gets unimpeded airflow from each fan instead of wasteful turbulence.

Interestingly, according to the company's own testing and diagrams, the right and middle fans blow down onto the motherboard, while the middle and left fans push air out of the top of the card.

The heatsink is divided into two similarly sized sections, each shaped to accommodate the surface-mounted components directly beneath it. Near the center, the fins are cut to a uniform height to maximize surface area; across roughly the outer two-thirds of both sinks, however, the fins are angled and every other fin sits lower. Gigabyte claims this helps channel air through the fins while reducing noise.

The heatsink nearest the display outputs sits on top of NVIDIA's TU102 processor. Seven heatpipes make direct contact with the GPU, two of which bend back into the heatsink's top and bottom edges to spread heat evenly.

Five pipes also extend into the other sink, running all the way to its far edge. A spacer beneath the GPU sink presses thermal pads against the GDDR6 memory modules surrounding the TU102. Both heatsink sections also connect to the voltage-regulation circuitry on the board to mitigate hot spots.

Rather than mounting its thermal solution to a metal frame on the RTX 2080 Ti Xtreme 11G's PCB, Gigabyte bolts the backplate directly to the heatsink at 10 points. A metal frame along the top of the heatsink adds rigidity, holding the thermal solution in place and preventing it from flexing. Even so, the card is rather heavy to hang from a PCIe slot, so Gigabyte bundles a support stand that pushes up from the floor of your chassis or your bottom-mounted PSU.


Above the Fan Stop sign, you'll see a pair of 8-pin power connectors rotated 180 degrees so they don't catch on the heatsink beneath them.

LEDs above each connector help diagnose power supply problems. When they're off, all is well; if you forget to run a cable from the PSU, they light up, and if power is intermittent, they blink to signal that something is wrong. At the other end of the top edge is an NVLink interface protected by an orange plastic cover, though the built-in cover on NVIDIA's Founders Edition card looks better and classier.
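The connector LED behavior described above amounts to a simple three-state status code. As a purely illustrative sketch, the mapping can be written as a lookup table; the `LedState` enum and `diagnose` helpers are hypothetical names for this example, not part of any Gigabyte software.

```python
from enum import Enum


class LedState(Enum):
    """Hypothetical model of the status LED above each 8-pin connector."""
    OFF = "off"
    ON = "on"
    BLINKING = "blinking"


# Meanings as described in the review: off = fine, lit = no cable, blinking = intermittent power.
MEANINGS = {
    LedState.OFF: "power OK",
    LedState.ON: "no cable connected from the PSU",
    LedState.BLINKING: "intermittent power delivery",
}


def diagnose(state: LedState) -> str:
    """Return the diagnosis for a single connector LED."""
    return MEANINGS[state]


def diagnose_card(connector_states: list[LedState]) -> list[str]:
    """Return one diagnosis per 8-pin connector, in connector order."""
    return [diagnose(state) for state in connector_states]


print(diagnose_card([LedState.OFF, LedState.ON]))
```

In this example the second connector would be flagged as missing its PSU cable while the first is fine.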

Because the heatsink's fins direct air up and down, the expansion bracket doesn't really need ventilation. Instead, Gigabyte fills the two-slot bracket with seven display outputs: three HDMI ports, three DisplayPort connectors and one USB Type-C port. Of course, the TU102 can only drive four displays simultaneously, so no more than four of the seven connectors can be used at once.


At any time, either two of the DisplayPort interfaces or two of the HDMI ports are switched off. We really liked that Gigabyte gave gamers all that connectivity, which saves everyone from having to use those annoying adapters. The metal backplate also gives the two heatsink pieces something solid to screw into, and it hosts a backlit AORUS logo that can be controlled with Gigabyte's RGB Fusion 2.0 software. The plate is solid, covering the PCB from one end to the other.

The RTX 2080 Ti, on which this Gigabyte card is based, is NVIDIA's latest flagship consumer graphics card. It uses the TU102 GPU with 4,352 active shader processors, a significant step up from the GTX 1080 Ti.

The card has 11 GB of GDDR6 graphics memory on a 352-bit-wide memory bus. The full TU102 die comprises six GPCs (Graphics Processing Clusters), 36 TPCs (Texture Processing Clusters) and 72 SMs (Streaming Multiprocessors), of which the RTX 2080 Ti enables 68. Each SM has 64 CUDA cores, 4 texture units, 8 Tensor Cores and 96 KB of L1/shared memory, which can be configured for different capacities depending on graphics or compute workloads.


Ray tracing is accelerated by the new RT cores. The full TU102 has 72 of them, along with 576 Tensor Cores and 96 ROPs; the RTX 2080 Ti enables 68 RT cores, 544 Tensor Cores and 88 ROPs. As for clock frequencies, we're looking at a base clock of around 1,350 MHz with a boost clock of up to 1,635 MHz.
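The chip-level totals follow directly from the per-SM figures. The short sketch below multiplies them out for the full 72-SM TU102 and for a 68-SM configuration; the 68 comes from dividing the RTX 2080 Ti's 4,352 CUDA cores by 64 per SM, and one RT core per SM is assumed from the 72 RT cores quoted for the 72-SM die.

```python
# Per-SM resources for Turing as described above (one RT core per SM assumed).
PER_SM = {"cuda_cores": 64, "texture_units": 4, "tensor_cores": 8, "rt_cores": 1}


def chip_totals(sm_count: int) -> dict[str, int]:
    """Multiply each per-SM resource by the number of enabled SMs."""
    return {unit: per_sm * sm_count for unit, per_sm in PER_SM.items()}


full_tu102 = chip_totals(72)   # complete TU102 die
rtx_2080_ti = chip_totals(68)  # 4,352 CUDA cores / 64 per SM = 68 SMs

print(full_tu102)   # {'cuda_cores': 4608, 'texture_units': 288, 'tensor_cores': 576, 'rt_cores': 72}
print(rtx_2080_ti)  # {'cuda_cores': 4352, 'texture_units': 272, 'tensor_cores': 544, 'rt_cores': 68}
```

ROPs are left out on purpose: they are tied to the memory controllers rather than to the SMs, which is why the RTX 2080 Ti's narrower 352-bit bus goes with 88 ROPs instead of 96.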

Product Features

The Windforce 3x – Cooling System

This cooling system features three 82 mm distinct-blade fans, six composite copper heat pipes, 3D Active Fan, direct-touch heat pipes and alternate spinning fans. Together, these dissipate heat effectively for better performance at lower temperatures.

Extreme Durability + Overclocking

The graphics card uses an 8+2 power phase design that lets the MOSFETs operate at the lowest possible temperature, with load balancing and over-temperature protection for each MOSFET, plus Ultra Durable certified capacitors and chokes for a longer lifespan and excellent performance.

Alternate Spinning Patent

Gigabyte's patented "Alternate Spinning" design is, according to the company, the only solution that can effectively deal with the turbulent airflow of three fans.

Turbulence is usually the biggest problem with three-fan designs. When all the fans rotate in the same direction, the airflow of adjacent fans moves in opposite directions where they meet. This creates rough, turbulent airflow that reduces heat dissipation efficiency.

Gigabyte spins the middle fan in the opposite direction, so the airflow between it and each of the other two fans moves the same way. This reduces turbulence while increasing airflow pressure.

NVIDIA NVLink Support

Support for NVIDIA NVLink technology lets you pair two matching GPUs with a GeForce RTX NVLink Bridge for the best experience in VR or 4K gaming. You can also easily adjust the bridge's lighting effects through AORUS Engine.

Distinct Blade Fan + the 3D Active Fans

The triangular fan edge splits the airflow, which is then guided smoothly by the 3D stripe curve on the fan's surface, improving airflow. 3D Active Fan provides semi-passive cooling: the fans stay off when the GPU is under low load or running a low-power game, so you can enjoy total silence when your system is idle or under light use.


The Direct Touch Heat Pipes

The shape of the copper heat pipes maximizes their direct contact area with the GPU, improving heat transfer. The heat pipes also cover the VRAM through a large metal plate to ensure adequate cooling.

Composite Heat Pipes

The composite heat pipes combine phase transition with thermal conductivity to manage heat transfer efficiently between two solid interfaces, increasing cooling capacity.

Using the Product

RTX 2080

Are you wondering if the RTX 2080 can play games using the highest possible settings at a 1080p resolution? Well yes, it sure does, and it will be able to handle pretty much any game you can throw at it.

Another great thing about the card is that it's extremely quiet. It benefits from the "3D Active Fan" feature, which stops the fans at low load, but even with our stress tests running on loop, the card was still hardly audible. Honestly, the fact that we could barely hear the fans tells you most of what you need to know about how quiet this card is.

And while performance is a given with Gigabyte's RTX 2080, the company's continued effort to keep things cool and quiet is extremely commendable. These little details greatly affect your overall gaming experience, and we really loved that the company focused on them. Thanks to its effective cooling system, the card also overclocked well, even at higher speeds, which let every game perform better than it would at stock.

RTX 2080 Ti

As for the RTX 2080 Ti, it delivers excellent overall performance, although we saw some performance caps at lower resolutions.

The RTX 2080 Ti really begins to flex from 2560 x 1440 upwards, where, depending on the workload and title, you'll see performance gains of about 25% to 40%. Anything below that resolution is normalized by processor and driver bottlenecks.

In all honesty, we can't think of a game that won't run well at the highest image quality settings. The harder you push the card, the larger its lead over last-generation products. To make it a good fit, pair it with at least a 24-inch monitor at 2560 x 1600 or higher. All in all, the 11 GB of graphics memory is ample and should make the card very future-proof.

Another benefit of the 2080 Ti is its zero-RPM fan mode. In low-load situations, we were amazed that it stayed completely silent, and even at full load it remained quiet, measuring only 43 dBA.

Though this may not be the most attractive cooler around, the company clearly made the right choice for its new GPU. Without question, this is a fast card, and it set the second-highest scores to date in most of the synthetic benchmarks.

When it comes to 4K gaming, it's one of the best cards around, and many consider it a beast. Despite being one of the cheaper options, it ran quietly and wasn't wasteful with power. To top it off, it overclocked really well.

So after testing both products for some time, we can highly recommend both the RTX 2080 and the RTX 2080 Ti.

AORUS Engine Utility

Gigabyte bundles the RTX 2080 and RTX 2080 Ti with its own utility for overclocking, lighting and fan control. The newest version, called AORUS Engine, has a modern, intuitive interface that lets you adjust the clock speed, fan behavior, voltage and power target to suit your needs.


From the home screen, the software lets you switch between the default Gaming Mode (1815 MHz), OC Mode (1830 MHz), Silent Mode (1710 MHz) and a User Mode you can customize to your preferences. The buttons at the bottom right open RGB Fusion lighting control and hardware monitoring, and toggle the semi-passive fan mode on or off.

If you click the “Professional Mode” text at the top right, AORUS Engine switches to a more detailed interface with a memory clock slider, GPU boost control, manual fan speed, GPU voltage adjustment, and linked power target and temperature target sliders.

With the one-click overclocking feature, a single click in AORUS Engine tunes the card to meet a variety of requirements, even if you have no overclocking knowledge.

Conclusion

The RTX 2080 and RTX 2080 Ti both deliver superb gaming performance. We'd recommend the RTX 2080 over the RTX 2080 Ti if you game at a native 1440p, especially if you want to limit your spending.

On the other hand, if you're an ultra HD gamer, go for the RTX 2080 Ti. It performs excellently and is, in our view, a true enthusiast-class graphics card. As we mentioned earlier, it's really quiet and cools well.

Even under load, its cooling system keeps temperatures in check across the whole card, and its overall performance is great. Beyond ultra HD gaming, we recommend the RTX 2080 Ti if you're willing to pay its higher price. Whichever one you pick, you'll sure as heck be onto a winner.

Where to Buy

You can pick up the Gigabyte RTX 2080 for around $1200 AUD, whilst the RTX 2080 Ti can be had for around $1999 AUD. For more, head on over to the RTX 2080 and RTX 2080 Ti product pages.


AORUS RTX 2080 Ti Gaming Box Review — Cooler than Cool


Introduction

Gigabyte has upped the ante with the release of the AORUS RTX 2080 Ti Gaming Box, the very first liquid-cooled external graphics card solution. It showcases Gigabyte's commitment to offering the latest and greatest in eGFX technology. At $1,500, the RTX 2080 Ti Gaming Box is more expensive than many Thunderbolt 3 ultrabooks. This raises the question: is the performance gain worth the price of admission?

Hardware

Aorus RTX 2080 Ti Gaming Box + Logi G900 + 8bitdo N30pro

| Specifications | |
|---|---|
| Price (US$) | $1,499 |
| PSU location / type | Internal / fATX |
| PSU max power | 450W |
| GPU max power | 300W |
| Power delivery (PD) | 100W |
| USB-C controller | TI83 |
| TB3 USB-C ports | 1 |
| Size (in/mm, LxWxH) | 11.8 x 5.5 x 6.8 / 300 x 140 x 173 |
| Max GPU length (in/cm) | 12.60/32.0 |
| Weight (kg/lb) | 3.79/8.34 |
| Updated firmware | 44.44 ✔ |
| TB3 cable length (cm) | 50 |
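The power figures in the specification table leave a fairly narrow margin. As a rough sanity check, assuming the worst case where the graphics card draws its full 300W while the host laptop pulls the full 100W of Power Delivery, and ignoring PSU efficiency or derating (both simplifications made for this sketch), the remaining headroom works out as follows.

```python
# Figures from the specification table, in watts.
PSU_MAX_W = 450
GPU_MAX_W = 300
HOST_PD_W = 100


def remaining_headroom(psu_max: int, *loads: int) -> int:
    """Watts left over once every listed load is subtracted from the PSU rating."""
    return psu_max - sum(loads)


headroom = remaining_headroom(PSU_MAX_W, GPU_MAX_W, HOST_PD_W)
print(f"{headroom} W left for the rest of the enclosure")  # 50 W left for the rest of the enclosure
```

Under these assumptions, about 50W remains for the Thunderbolt 3 board, expansion ports and fans, which helps explain why the GPU's power limit is capped at 300W.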

AORUS' new Gaming Box is no longer the diminutive ITX-sized box that made its first generation a popular eGPU choice. The larger size is a necessary evolution to incorporate an AIO closed-loop liquid cooling system and, more importantly, to reduce overall noise emission. Surprisingly, this second-generation Gaming Box remains compact for the power and components it contains. Its footprint is barely larger than a 240mm radiator. For reference, here are some photos comparing the RTX 2080 Ti Gaming Box to the RX Vega 64 LC and the RX 580 Gaming Box.

AORUS RTX 2080 Ti Gaming Box vs RX Vega 64 LC

RTX 2080 Ti Gaming Box vs RX 580 Gaming Box

A unique feature of every Gaming Box is its travel case, and the latest AORUS RTX 2080 Ti version is no exception. The new bag retains the same color scheme of a bright orange liner and black exterior. There's plenty of padding to keep the enclosure protected, making it a truly portable solution. A small compartment holds the power cable and a Thunderbolt 3 cable. It would have been a nice touch if Gigabyte had included a longer 40Gbps 100W PD Thunderbolt 3 cable rather than the 0.5m one.

Aorus Rtx 2080 Ti Gaming Box Travel Bag

Top Panel & 240mm Radiator

Unlike the original Gigabyte/AORUS Gaming Box, which was rather flimsy with its thin sheet metal construction, the RTX 2080 Ti Gaming Box is much more solid, with aluminum panels reinforcing an inner metal frame. Foam padding and dust filters provide premium touches. Finished in graphite, it matches many ultrabooks that are constructed out of aluminum. Another refinement is the placement of the RGB lighting. It is now in a more appropriate location underneath the front fascia, so the lighting zone doesn't strike you straight in the eyeballs like the bare LED diodes in the 1st generation Gaming Box.


Better build quality means it’s harder to service, especially for compact units such as this Gaming Box. Gigabyte used many more screws in this new enclosure. I counted over 50 before I lost track. At the core of the second generation Gaming Box is an RTX 2080 Ti PCB and its all-in-one liquid cooler. A 240mm radiator is mounted to an L-shaped metal frame. The two 120mm cooling fans have cables that are just long enough to reach the Y-adapter that eventually connects to the graphics board. If you ever need to take this eGPU apart, make sure this fan connector is securely in place when you put it back together (ask me how I know).

AORUS RTX 2080 Ti Gaming Box Right Side

AORUS RTX 2080 Ti Gaming Box Left Side

Due to the horizontal placement of the graphics card, the Thunderbolt 3 mainboard sits vertically. The two boards when connected perpendicular to each other create enough space for an fATX power supply. The PSU is a 12V single-rail unit, producing a max 450W output. One 24-pin and one 4-pin EPS power connector go to the mainboard while two 6+2-pin PCIe power connectors go to the graphics card. This power supply is longer than the unit in the first generation Gaming Box. Another addition with the new AORUS RTX 2080 Ti Gaming Box is a power switch/reset button. We had observed power-related issues with the 1st gen that required a reset, so having a dedicated button is appreciated.


450W fATX PSU Label

PSU Cables & Connectors

One major complaint with the first-generation Gaming Box was its three tiny 40mm fans, two of which were in the front and ran constantly. Gigabyte issued a firmware update that keeps them off during light use. In this 2nd generation box, Gigabyte switched to a single 70mm enclosure fan to replace those two 40mm units. The remaining 40mm fan is inside the power supply. Overall noise from the enclosure fans has dropped greatly as a result of these changes.


Enclosure 70x10mm Fan

Rear Ports & PSU 40mm Fan

The RTX 2080 Ti itself is a delicate and expensive PCB, suspended in midair by the x16 physical PCIe slot and five tiny screws attached to the monitor output ports. Given how short the coolant hoses are, it’s unlikely this graphics card and its AIO components can be repurposed inside a standard PC case. ITX cases with a PCIe extension cable may be able to use it.

As expected in a premium eGFX, the AORUS RTX 2080 Ti Gaming Box has a dual Thunderbolt 3 controller setup to keep the expansion ports stable. The Thunderbolt 3 mainboard has a primary JHL6540 TB3 controller hosting the eGPU and a secondary JHL6340 TB3 controller hosting the expansion ports. Other crucial components found on a typical Thunderbolt 3 enclosure are the Texas Instruments TPS65983 USB-C controller and a Winbond 25XX series EEPROM firmware chip.

AORUS RTX 2080 Ti Gaming Box Board Components

Full Teardown Component Layout

Testing

Due to its dual Thunderbolt 3 controller setup, the RTX 2080 Ti Gaming Box has two Intel Alpine Ridge controllers, each with its accompanying firmware EEPROM chip. Both the primary TB3 controller, JHL6540, and the secondary TB3 controller, JHL6340, are running firmware version 44.44. Upstream Power Delivery remains at the maximum 100W to the host laptop. This allows the AORUS RTX 2080 Ti Gaming Box to charge all Thunderbolt 3 laptops, including the latest 2019 16-in MacBook Pro (96W).


AORUS RTX 2080 Ti Gaming Box macOS Thunderbolt Tree

100W Power Delivery macOS Power System Info

Firmware flashing on Thunderbolt 3 enclosures is possible through the Intel FW Update Tool in Windows. However, this process is not straightforward and was almost impossible on a Mac. We had been trying to come up with a solution for a while. With the release of the Blackmagic eGPU firmware update, @mac_editor was able to reverse-engineer it and develop a very effective solution for flashing Thunderbolt 3 enclosure firmware in macOS. Our community is also in the process of collecting firmware that is not available from the Thunderbolt vendor websites. It is our wish that Apple and Intel would release an official tool to bake our own firmware and make the most of these eGPU enclosures.

CH341A EEPROM Programmer

TBT-Flash – Thunderbolt Firmware macOS Tool

CH341A EEPROM Programmer USB EPP 24cxx25xx

The Ethernet port works plug-and-play in Windows at 1Gbps. The Network Interface Controller attaches to an x1 PCIe connection hosted by the secondary Thunderbolt 3 controller, JHL6340. This same TB3 controller also hosts 3x USB-A expansion ports via another x1 PCIe connection. As we learned with the first-generation Gaming Box, using a single TB3 controller for both the eGPU and the expansion ports can cause lag with low-latency peripherals such as a mouse and keyboard. In the two months I tested this RTX 2080 Ti Gaming Box, I didn't experience any lag.

Regarding noise and thermal emission, this 2nd generation Gaming Box is cooler and quieter than the original Gaming Box. Placement of the 70mm fan draws air primarily into the power supply. The eGPU never went above the mid-60s degrees Celsius. Overall noise level is typical for an AIO 240mm liquid cooler. When the Thunderbolt connection becomes active and powers on the Gaming Box, you hear a rush of liquid flowing through the hoses and radiator. This settles down quickly, and the next noise you hear is from the 40mm fan inside the PSU. This fan noise is a weak point in an otherwise great cooling system.

There are two software utilities to monitor and control different features of the RTX 2080 Ti Gaming Box. AORUS Engine provides monitoring and overclocking of the graphics card. RGB Fusion is the other, with the sole purpose of changing RGB settings.

Aorus Engine

I was excited to test the AORUS RTX 2080 Ti Gaming Box with the newly available Ice Lake ultrabooks. The 10th generation Intel U-series CPU has an on-die Thunderbolt 3 controller that eliminates the shared connection through the PCH found in previous iterations. The host laptop of choice, with an i7-1065G7 CPU, was a Razer Blade Stealth. The Mercury White model I got is the base configuration with no discrete graphics, which allows the CPU to run at a 25W TDP. This makes it an ideal eGPU host.

Another in-demand host laptop for the RTX 2080 Ti eGPU is the 2019 16-in MacBook Pro. I tried both the i9 and i7 models but found the 6-core i7 more tolerable in terms of thermal management and overall noise during heavy use. Because there has been no Nvidia support since macOS Mojave 10.14, we can only use GeForce graphics cards in Windows through Boot Camp. If you were wondering whether eGPU support in Boot Camp has gotten better, I hate to say that it's muddy at best.

2019 Razer Blade Stealth + RTX 2080 Ti Gaming Box

RTX 2080 Ti Gaming Box + AORUS FI27Q-P Monitor

Through Nvidia Optimus, gaming on the internal display is possible and is an overall excellent experience. Given how powerful this Gaming Box is, though, it really needs an external monitor to reach its full potential. I have a fairly capable Samsung CHG90 – 144Hz FreeSync 2 32:9 FHD monitor that is G-Sync compatible and works well with the RTX 2080 Ti eGPU. However, to do it justice, I got an Acer Predator XB273K – 144Hz G-Sync certified 16:9 4K monitor. The Gigabyte team also shipped me an AORUS FI27Q-P – 165Hz G-Sync compatible 16:9 QHD monitor for additional testing. My plan was to look for any noticeable differences between G-Sync certified and G-Sync compatible monitors with the AORUS RTX 2080 Ti Gaming Box.

The two main selling features of Nvidia Turing graphics cards are NVIDIA DLSS (deep learning supersampling) and RTX (real-time ray tracing). At launch, there were not many titles optimized for these GPUs, but the library of ray tracing games is growing. The ones I could test with the AORUS RTX 2080 Ti Gaming Box were Battlefield V, Control, and Wolfenstein: Youngblood. As seen in the one-minute gameplay video above, the AORUS RTX 2080 Ti Gaming Box enables eGPU gaming at 4K 60FPS with RTX on using an ultrabook. Testing with all three monitors mentioned above, I could not tell any difference in smoothness between G-Sync certified and G-Sync compatible.

Battlefield V Settings

Control Settings

Wolfenstein: Youngblood Settings

One thing we've learned over the years is that an eGPU can be a great solution for compute tasks. Unlike the performance loss in gaming, the loss in compute is minimal. We've seen strong interest in using eGPUs with machine learning platforms such as TensorFlow. With 4,352 CUDA cores, the RTX 2080 Ti is a perfect match for this application. Distributed computing is another great use for an external GPU. Our community recently lent a hand in the fight against COVID-19 through Folding@Home and BOINC. The SARS-CoV-2 projects on FAH specifically use graphics cards. A triple-eGPU setup I recently built can post 2,293,210 points per day.

Triple Radeon eGPUs FAH Advanced Control

RX 580 + RX 5600 XT + RX 5700 XT eGPUs Folding@home Radeon Performance Monitoring

Using an eGPU with Linux is also of great interest. My distro of choice is Pop!_OS by System76. I installed the Nvidia ISO, which includes the graphics drivers for this RTX 2080 Ti Gaming Box. Pop!_OS is based on Ubuntu, which has very good Thunderbolt hot-plug support. Gaming in Linux has gotten a lot better too. Thanks to Proton and Steam Play, we can now play many Windows titles in Linux with ease. I tried Witcher III and GTAV on this eGPU setup, and the installation process and gaming experience were no different from Windows.

Linux eGPU Switcher

Conclusion

Gigabyte's AORUS RTX 2080 Ti Gaming Box is an affordable solution relative to piecing together an RTX 2080 Ti and a premium external GPU enclosure yourself. By no means is it the best value for eGPU users. Yet it is, without question, the highest-performing eGPU available today and the only liquid-cooled option. The ultrabook and monitor you pair with it will need to be equally capable to make the most of this eGFX. Enabling eGPU gaming at 144Hz in FHD, 100Hz in QHD, and 60Hz in UHD, this Gaming Box keeps up with desktop alternatives while retaining the versatility of the ultrabook. Ultimately, to choose the AORUS RTX 2080 Ti Gaming Box is to answer Outkast's call for rebellious originality, “What's cooler than being cool?”


Gigabyte AORUS GeForce RTX 2080 Ti XTREME 11G Review

Christopher Coke

Category: Hardware Reviews

If you're ready to step into the world of high-end 4K gaming, the RTX 2080 Ti is the only way to go. Once you've decided to make the jump, your next choice is which 2080 Ti to buy. Today, we're looking at a showpiece GPU if ever we've seen one: the Gigabyte AORUS GeForce RTX 2080 Ti XTREME 11G. It features innovative triple-fan cooling, a speedy factory overclock, and one of the most impressive lighting solutions we've ever seen, but is it worth $1299? Join us as we find out.

Specifications

- Current Price: $1299

- Graphics Processing: GeForce RTX 2080 Ti

- Core Clock: 1770 MHz (Reference Card: 1545 MHz)

- RTX-OPS: 81

- CUDA Cores: 4352

- Memory Clock: 14140 MHz

- Memory Size: 11 GB

- Memory Type: GDDR6

- Memory Bus: 352 bit

- Memory Bandwidth: 616 GB/s

- Card Bus: PCI-E 3.0 x 16

- Digital max resolution: 7680x4320@60Hz

- Multi-view: 4

- Card size: L=290 W=134.31 H=59.9 mm

- PCB Form: ATX

- DirectX: 12

- OpenGL: 4.6

- Recommended PSU: 750W

- Power Connectors: 8 Pin x 2

- Output: DisplayPort 1.4 x 3, HDMI 2.0b x 3, USB Type-C™ (supports VirtualLink™) x 1

- SLI support: 2-way NVIDIA NVLink™

- Accessories:
  1. AORUS VGA holder
  2. AORUS metal sticker
  3. Quick guide
  4. 4-year warranty registration
  5. I/O guide
  6. Driver CD

Buying a graphics card today is more complicated than it’s ever been. You have a lot to consider: what resolution you play at, what kinds of games you plan to play, whether you want ray tracing, warranties, future-proofing, RGB, cooling, G-Sync, HDR… the list goes on. Thankfully, things become a whole lot simpler once you decide you’re ready to make the jump to 4K. At the moment, only the RTX 2080 and RTX 2080 Ti are true “4K cards” and, if we’re looking at new AAA games, the 2080 Ti becomes the only real option. The question then becomes which RTX 2080 Ti you should buy.

That’s where the AORUS XTREME comes in, with a suite of features that make the case for it being the 2080 Ti for you. Right off the bat, this model comes with a hefty factory overclock to a 1770 MHz boost clock, up from the reference 1545 MHz, which is one of the fastest factory overclocks I’ve seen or could find. Out-of-the-box performance is definitely enhanced, assuming the card isn’t thermal throttling.

To that end, Gigabyte has packed the RTX 2080 Ti XTREME 11G with a massive heatsink and an innovative triple-fan cooling solution. The copper heat pipes make direct contact with the GPU and MOSFETs to draw heat up into the fin array. They are hollow and use phase transition (evaporation and condensation) for more efficient heat transfer. Up top, Gigabyte uses three 100mm fans, but what makes this solution interesting is that the center fan spins counter to the other two to reduce air turbulence and improve cooling. In practice, this works quite well, as you’ll see in our performance results.

At 11.4 x 5.3 x 2.4 inches (L x W x D) and 2.5 slots thick, this is a big card, so it also features a great backplate to support the additional weight. The backplate makes direct contact with the board for modest heat dissipation but, even more importantly, adds a lot of rigidity to keep the card from flexing. Gigabyte also bundles a metal support arm with the card to ward off GPU sag. The backplate has a bright AORUS logo, too, which we’ll get to soon.

The card packs a punch. Under the hood, the AORUS features 4352 CUDA cores and 11GB of GDDR6 running at 14 Gbps. On the 352-bit bus, that gives a total memory bandwidth of 616 GB/s. With 544 Tensor cores and the factory overclock, RTX-OPS get a nice bump to 81 from the 78 of the Founders Edition. Likewise, you get an extra 1.2 TFLOPS of performance for a total of 15.4.
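To make those paper numbers concrete, here is a small Python sketch that derives peak memory bandwidth and FP32 throughput from the spec sheet. It assumes the usual definitions (bus width times per-pin data rate divided by 8, and two FLOPs per CUDA core per clock for a fused multiply-add). The 1635 MHz Founders Edition boost clock is Nvidia’s published figure and is not stated in this review, so treat it as an outside assumption.

```python
# Back-of-the-envelope peak figures for the AORUS RTX 2080 Ti XTREME 11G,
# computed from the spec sheet. These are theoretical numbers, not benchmarks.

BUS_WIDTH_BITS = 352           # memory bus width from the spec sheet
CUDA_CORES = 4352              # FP32 CUDA cores from the spec sheet
FLOPS_PER_CORE_PER_CLOCK = 2   # one fused multiply-add counts as two FLOPs
FE_BOOST_MHZ = 1635            # assumption: Nvidia's published Founders Edition boost clock


def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width times per-pin data rate, divided by 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def fp32_tflops(cores: int, boost_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS at a given boost clock."""
    return cores * FLOPS_PER_CORE_PER_CLOCK * boost_mhz * 1e6 / 1e12


if __name__ == "__main__":
    # Nominal 14 Gbps GDDR6 reproduces the 616 GB/s on the spec sheet;
    # the listed 14140 MHz effective memory clock works out slightly higher.
    print(f"Bandwidth @ 14.00 Gbps: {memory_bandwidth_gbs(BUS_WIDTH_BITS, 14.0):.1f} GB/s")
    print(f"Bandwidth @ 14.14 Gbps: {memory_bandwidth_gbs(BUS_WIDTH_BITS, 14.14):.1f} GB/s")

    aorus = fp32_tflops(CUDA_CORES, 1770)  # factory boost clock
    fe = fp32_tflops(CUDA_CORES, FE_BOOST_MHZ)
    print(f"AORUS XTREME: {aorus:.1f} TFLOPS, Founders Edition: {fe:.1f} TFLOPS, "
          f"difference: {aorus - fe:.1f} TFLOPS")
```

Run as-is, it prints 616.0 and 622.2 GB/s, then 15.4 versus 14.2 TFLOPS with a difference of 1.2, in line with the figures quoted above. Real-world clocks under GPU Boost vary with temperature and power, so these are ceilings rather than guarantees.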

What all of that really boils down to is that the AORUS GeForce RTX 2080 Ti XTREME 11G is perhaps the most capable air-cooled card on the market.

The other big element with this GPU is its lighting. Yes, yes, lighting doesn’t affect the performance of the card, I know. But still, what we have here is one of the best light shows ever seen on a GPU. The AORUS 2080 Ti XTREME is kitted out with LEDs. Each fan has a set along the fins, creating an eye-catching ring when the fans are spinning (the LEDs turn off when the fans stop). In the center is the AORUS falcon, whose color changes depending on your preset. Pictures really don’t do it justice.

The card is clearly made to look great in a vertical mount as pictured above but also looks great traditionally mounted. A logo on the side flows in RGB rather than the single-color options used on their cheaper cards. The gloss looks great too. The falcon logo on the back is also customizable and is quite bright. When mounted vertically, it washes the area behind it in colored light.

The fan lighting is also very customizable, with a lot of great-looking presets. My favorite was the tri-color preset, which blends three colors in a flowing gradient. This is the best RGB I’ve ever seen on a GPU and certainly the best I’ve seen from Gigabyte. My only qualms are that it makes you want to disable intelligent fan stop to keep the lights on, and that the falcon logo on the front is lit according to how fast the fans are spinning, so running them below 40% results in noticeable flickering. Thankfully, in any situation where you’d want the fans running for gaming, they’ll be spinning fast enough to keep the lighting consistent.

On paper, the AORUS RTX 2080 Ti XTREME 11G has the specs to be a showstopper. Let’s take a look and see how it pans out in real life.

Benchmark Results

Test System: Gigabyte X570 AORUS Master Motherboard, AMD Ryzen 9 3900X CPU, NZXT Kraken X72 AIO Cooler, G.Skill TridentZ Royal DDR4-3600MHz 16GB DRAM Kit, Gigabyte AORUS NVMe Gen4 SSD 2TB, Corsair HX-1050 1050 Watt Power Supply.

For all of our benchmark testing, we strive to provide real-world results akin to what you would see in your own PC. To that end, we stress test our cards through a series of modern games and compare them against one another. We also record peak temperatures within these games on stock fan settings. Be aware that the thermal results we report can usually be improved upon by creating a custom fan curve in programs like MSI Afterburner.

In today’s review, we’ve chosen to focus on 1440p and 4K gameplay, because the 2080 Ti is clearly not intended for 1080p and we wouldn’t recommend anyone buy a card of this caliber for FHD gameplay. Any card at this price is going to be for enthusiasts pushing high resolutions, high frame rates, or both. That said, it’s plausible that someone might choose this card for 1440p to push a 144Hz monitor to its limit for the foreseeable future.

When it comes to settings, we turn everything up with the exception of MSAA and FXAA and instead opt for the lowest level of TAA possible. In cases where that’s not possible, we enable the existing anti-aliasing option at its lowest setting. Ray tracing is also disabled for this testing as so few games currently support it.

Let’s see how it did.

Running the card through our suite of test titles shows the value of that factory overclock in real terms. Without any overclocking of your own, you get top-of-the-line performance that routinely outpaces the 2080 Ti Founders Edition (which has its own factory overclock) by 5-7 FPS.

At 1440p, the card still struggles to push these games at 144 FPS without turning down some settings, but it consistently topped the other cards we compared it against, as it should given its price and specs. It achieved triple-digit frame rates consistently, with the exception of Metro Exodus (and reducing a couple of key settings would get it there with minimal graphical degradation).

At 4K, the results are similar, with the AORUS RTX 2080 Ti XTREME 11G leading the pack. Here, the card was able to hold 4K60 with a bit of headroom to spare. Not much, however, and it’s important to note that this still isn’t the case for the latest titles. We’ll soon be refreshing our suite of test games to include them, but The Division 2, for example, regularly pushed the card into the mid-50s FPS.

What’s not reflected in those average FPS numbers is how consistent those frame rates were after multiple hours of gaming, once warm air had permeated the case. As you can see in the chart above, thermal throttling was never an issue: at peak, the card topped out at 76C and never went higher, with an ambient temperature of 23C. This reinforces that the triple-fan, alternating-spin design Gigabyte used here is effective in combination with its heat pipes and that massive heatsink.

This is especially impressive because the card was mounted vertically with choked off airflow thanks to the side glass panel. Now, usually I would mount the card horizontally but since the XTREME was so clearly intended for a vertical mount, I changed my approach. With a traditional mount, temperatures dropped another 2-3C on average.

Overclocking

I am no master overclocker, but a card of this caliber screams to be pushed. I started simply, with Gigabyte’s AORUS Engine software. Turing cards can use Nvidia’s auto-overclocking scanner to find the maximum safe overclock, and after letting AORUS Engine scan, I ended up with an 87 MHz boost to the core clock. This is modest, and the resulting impact on frame rates was minimal.

Instead, I retreated back to MSI Afterburner, my go-to overclocking program, and was able to push the card to a +115 MHz boost after raising the power limit. As is often the case with modern GPU overclocking, the gains were small but noticeable on paper with an average gain of 1-3 FPS.
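As a rough illustration of why these gains are small, the Python sketch below turns the reported offsets into nominal boost clocks and percentages. It assumes each offset is simply added to the rated 1770 MHz boost clock; in reality GPU Boost shifts the whole voltage/frequency curve, so actual in-game clocks will differ. The labels in `OFFSETS_MHZ` are illustrative names, not settings in either tool.

```python
# Nominal boost clocks implied by the overclocking offsets reported in this review.
# Assumption: each offset is simply added to the rated 1770 MHz boost clock.
# In practice GPU Boost shifts the whole voltage/frequency curve, so actual
# in-game clocks run higher or lower depending on temperature and power.

REFERENCE_BOOST_MHZ = 1545
FACTORY_BOOST_MHZ = 1770


def percent_over(clock_mhz: float, baseline_mhz: float) -> float:
    """Percentage by which clock_mhz exceeds baseline_mhz."""
    return (clock_mhz / baseline_mhz - 1) * 100


# Hypothetical labels for the three configurations discussed in the text
OFFSETS_MHZ = {
    "Factory overclock only": 0,
    "AORUS Engine auto scan": 87,
    "MSI Afterburner manual": 115,
}

for label, offset in OFFSETS_MHZ.items():
    clock = FACTORY_BOOST_MHZ + offset
    print(f"{label:<24} {clock} MHz  "
          f"+{percent_over(clock, FACTORY_BOOST_MHZ):.1f}% vs factory  "
          f"+{percent_over(clock, REFERENCE_BOOST_MHZ):.1f}% vs reference")
```

Under this simplification, the manual +115 MHz offset is only about a 6.5% bump over the factory boost clock, while the factory overclock alone already sits roughly 14.6% above reference, which helps explain why the extra tuning yielded just 1-3 FPS.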

Is it worth it? If you’re the kind of gamer looking to push your hardware to the limit (and, come on, if you buy a $1299 graphics card, that probably describes you), then yes. For the average gamer, taking the time to eke out those last few MHz isn’t going to net an FPS boost you can feel, but with the Auto Scan functionality in AORUS Engine (and other tools), overclocking your card is easier than ever. With one button press and ten minutes, the card will push itself to a modest-to-decent overclock. With that kind of system, why wouldn’t you take advantage of it?

Final Thoughts

At $1299, the Gigabyte AORUS GeForce RTX 2080 Ti XTREME isn’t going to be for everybody but, quite frankly, no 2080 Ti is for everybody. If you’re looking to make the jump to 4K, 60 FPS gameplay while also running cool and looking great, this is simply the best way to do it. The Gigabyte AORUS GeForce RTX 2080 Ti XTREME is an absolute, unequivocal BEAST of a card.

The product described in this article was provided by the manufacturer for evaluation purposes.

9.5 (Amazing)

Pros

- Outstanding performance — top of its class

- One of the best factory overclocks, if not the best

- Great thermal performance, even with low airflow

- Runs fairly quiet at 80% and lower fan speeds

- Looks outstanding — hands-down, the best looking 2080 Ti

Cons

- 2.5 slots — it’s a big boy (but comes with its own support)

- Logo can flicker at low fan speeds

- Expensive


GIGABYTE GeForce RTX 3060 GAMING OC 12G Review

Amid the current severe shortage of graphics cards, the announcement of any new product is a major event, attracting the attention of both gamers who still hope to find a suitable card for comfortable gaming and cryptocurrency miners looking to expand their mining farms. Today we review a new addition to the Ampere-based lineup: the GeForce RTX 3060 12 GB, a very promising mid-range model with plenty of memory and an attractive recommended price of $329. We evaluate the capabilities of this GA106-based card using GIGABYTE’s custom GeForce RTX 3060 GAMING OC 12G.

Contents

1. NVIDIA GeForce RTX 3060
2. Gigabyte GeForce RTX 3060 Gaming OC 12G
3. Design and components
4. In operation
5. Configuration
6. Performance at 4K
7. Performance at 2560 × 1440
8. Performance at 1920 × 1080
9. GPU compute applications
10. Overclocking
11. Power consumption

The NVIDIA GeForce RTX 3060 uses the GA106 GPU, built on the Ampere graphics architecture. Like other GPUs in the series, the chip is manufactured by Samsung on an 8nm process. The die measures 276 mm² and contains 13.3 billion transistors. Note that this die is noticeably more compact than the higher-tier GA104 used in the GeForce RTX 3060 Ti/3070 (392 mm², 17.4 billion transistors). The smaller die indirectly suggests lower manufacturing costs for these cards.
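The link between die size and cost can be illustrated with the common gross-dies-per-wafer approximation: wafer area divided by die area, minus π·d/√(2·A) for partial dies lost at the wafer edge. The Python sketch below is only a rough estimate under stated assumptions (a 300 mm wafer, with no defects, scribe lines, or edge exclusion modelled); it says nothing about yield or wafer pricing.

```python
import math

WAFER_DIAMETER_MM = 300  # assumption: standard 300 mm wafer


def gross_dies_per_wafer(die_area_mm2: float,
                         wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """Classic gross-die estimate: wafer area / die area, minus a correction for
    partial dies around the wafer edge. Ignores defects, scribe lines and edge exclusion."""
    radius = wafer_diameter_mm / 2
    wafer_area = math.pi * radius ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(wafer_area / die_area_mm2 - edge_loss)


ga106 = gross_dies_per_wafer(276)  # GA106 die size from the text
ga104 = gross_dies_per_wafer(392)  # GA104 die size from the text
print(f"GA106: {ga106} dies, GA104: {ga104} dies, ratio {ga106 / ga104:.2f}")
```

Under these assumptions, a wafer yields roughly 215 GA106 candidates versus about 146 GA104 dies, close to 1.5 times as many, which is the intuition behind the cost argument above.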

Curiously, the desktop GeForce RTX 3060 uses a GPU configuration with slightly fewer functional blocks than the mobile version of the card. The mobile part runs at noticeably lower clock speeds but has more active compute units. Presumably, some headroom has been left in the desktop GeForce RTX 3060 for a possible faster SUPER variant in the future.

| | GeForce RTX 3060 Ti | GeForce RTX 3060 | GeForce RTX 2060 |
|---|---|---|---|
| GPU | GA104-200 | GA106-300 | TU106-200A |
| Architecture | Ampere | Ampere | Turing |
| Manufacturing process | 8 nm | 8 nm | 12 nm |
| Die size | 392 mm² | 276 mm² | 445 mm² |
| Transistors | 17.4 billion | 13.25 billion | 10.8 billion |
| GPU clock (base/boost) | 1410/1665 MHz | 1320/1777 MHz | 1365/1680 MHz |
| Stream processors | 4864 | 3584 | 1920 |
| Texture units | 152 | 112 | 120 |
| ROPs | 80 | 48 | 48 |
| RT cores | 38 (2nd generation) | 28 (2nd generation) | 30 (1st generation) |
| Tensor cores | 152 (3rd generation) | 112 (3rd generation) | 240 (2nd generation) |