WD Black SN850X SSD review: Face-meltingly fast


Comes darn close to supplanting the FireCuda 530 in real-world testing as well as random ops.

By Jon Jacobi

Freelance contributor, PCWorld Aug 23, 2022 6:11 am PDT

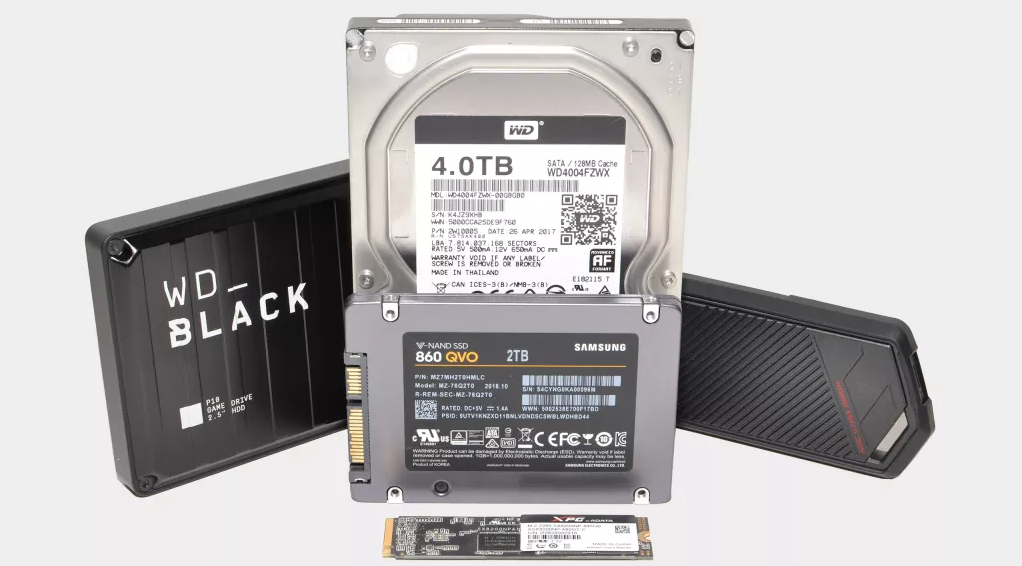

Image: WD

At a glance

Expert’s Rating

Pros

- Excellent performance

- Decently affordable given its speed

- Available up to 4TB

- Optional heatsink for 1/2TB models

Cons

- Pricey per gigabyte

- Somewhat parsimonious TBW ratings

Our Verdict

WD’s Black SN850X is an excellent and slightly more affordable alternative to Seagate’s mighty FireCuda 530. It’s also especially adept at real-world transfers and random operations.

WD’s Black SN850X lit up our test meters with outstanding real-world and random performance. It equaled or surpassed the mighty Seagate FireCuda 530 in a couple of tests, and by a rather wide margin in one.

Even if it couldn’t quite match its rival’s sequential throughput under synthetic benchmarks, the SN850X’s overall performance makes it a more than viable alternative to Seagate’s best. It’s also a bit cheaper, though its TBW ratings are far lower.

This review is part of our ongoing roundup of the best SSDs. Go there for information on competing products and how we tested them.

As you might guess given our glowing report on performance, you won’t be seeing the WD Black SN850X in the bargain bin. Indeed, while it’s less expensive than the FireCuda 530 at the moment, it’s still not cheap, with an MSRP of $160 for the 1TB model, $290 for the 2TB, and $700 for the 4TB capacity.

Add $20 if you want a heatsink (most modern motherboards provide their own) for the 1TB/2TB capacities. The 1TB and 2TB (tested) versions are single-sided, and I’m guessing the 4TB is double-sided, hence the lack of a heatsink option.

WD’s new Black SN850X NVMe SSD is very, very fast.

WD

The SSDs themselves sport the usual 2280 (22x80mm) M.2 form factor and are PCIe 4.0 x4 NVMe types. The NAND is 112-layer TLC (Triple-Level Cell/3-bit) with what the company claims is a Western Digital-designed controller.

WD’s solid state expertise comes courtesy of SanDisk, a company it purchased a while back, and that’s the name on the controller.

WD provides a generous five-year warranty, but the TBW (terabytes that may be written) ratings, while about average for cheaper drives, are a bit parsimonious for a top-shelf drive. Basically, 600TBW for every 1TB of capacity—less than half of what Seagate provides for the FireCuda 530.


TBW ratings are like the “or 50,000 miles” on an automobile warranty. If you exceed them, the warranty is over whether it’s been one year or five.
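To put the rating in perspective, here is a rough sketch of how much you could write per day, on average, before exhausting the TBW within the five-year warranty. It assumes the roughly 600TBW-per-1TB figure quoted above, decimal units (1TB = 1,000GB), and a 365-day year; the function name is purely illustrative.

```python
def daily_write_budget_gb(capacity_tb: float,
                          tbw_per_tb: float = 600.0,
                          warranty_years: float = 5.0) -> float:
    """Average GB per day that uses up the TBW rating exactly at warranty end.

    Assumes ~600 TBW per 1TB of capacity (the figure quoted in the review)
    and decimal units (1 TB = 1000 GB).
    """
    total_tbw = capacity_tb * tbw_per_tb      # rated terabytes written
    warranty_days = warranty_years * 365      # ignores leap days
    return total_tbw * 1000 / warranty_days   # TB -> GB, spread over the warranty


for capacity in (1, 2, 4):
    budget = daily_write_budget_gb(capacity)
    print(f"{capacity}TB model: about {budget:.0f} GB of writes per day")
```

For the 1TB model that works out to roughly 329GB per day, every day, for five years, which is far more than typical desktop use.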

WD Black SN850X: Performance

I’ve definitely let the cat out of the bag as regards performance, but I’m guessing you’d like some specifics. I get paid to provide them, so here goes. The SN850X was a smidge slower (mostly writing) in CrystalDiskMark 8’s sequential test. Not by a lot, mind you.


Longer bars are better.

On the other hand, it fairly clobbered its rival in random writes with 32 queues in play. The performance between the two drives (and most others) was very similar with only a single queue, so you can chalk this up to the controller.

Nice job by SanDisk on the controller. Longer bars are better.

Adding everything together, the rival FireCuda 530 comes out on top, though not by a whole lot. The SN850X’s aggregate score is the second-fastest we’ve seen.

Longer bars are better.

Real-world performance with our 48GB data sets was excellent as well for the SN850X. The aggregate time is the fastest we’ve seen on our new test bed.

Shorter bars are better.

The SN850X once again bested its main rival when writing a single large 450GB file. By a narrow margin to be sure, but a win is a win. The record for a single (non-RAID) drive is 209 seconds by the Teamgroup Cardea A440 Pro—another very fast drive that’s only a bit slower overall than the FireCuda 530 and SN850X.

A shorter bar is better.

As you can see, the SN850X lacks nothing in performance. What it didn’t show in CrystalDiskMark 8 in terms of sequential throughput, it demonstrated in the real world. As we live in the real world…

Internal drive tests currently utilize Windows 11, 64-bit running on an MSI MEG X570/AMD Ryzen 3700X combo with four 16GB Kingston 2666MHz DDR4 modules, a Zotac (Nvidia) GT 710 1GB PCIe x2 graphics card, and an Asmedia ASM3242 USB 3.2×2 card. Copy tests utilize an ImDisk RAM disk employing 58GB of the 64GB total memory.

Each test is performed on a newly formatted and TRIM’d drive so the results are optimal. Over time, as a drive fills up, performance will decrease due to less NAND for caching, and other factors. The performance numbers shown apply only to the drive of the capacity tested. SSD performance can vary by capacity due to more or fewer chips to shotgun reads/writes across and the amount of NAND available for secondary caching.

If I were choosing a drive to anchor a new test bed, it would be the WD Black SN850X. Random performance is what makes operating systems seem quick, and that is where the SN850X shines. Then again, if I were concerned about warranty/TBW or sequential throughput, the FireCuda 530 might get the nod. Six of one, half a dozen of the other. Nonetheless, an excellent product from WD.

Author: Jon L. Jacobi, Freelance contributor

Jon Jacobi is a musician, former x86/6800 programmer, and long-time computer enthusiast. He writes reviews on TVs, SSDs, dash cams, remote access software, Bluetooth speakers, and sundry other consumer-tech hardware and software.

SSD lifespan | How long does an SSD last?

The cited values are not set in stone. The lifetime of an SSD depends significantly on the write strategy used. Manufacturers use special algorithms for this, which aim for the most efficient “write management” possible. The widespread wear-leveling technology, which is managed by the built-in controller or the firmware of an SSD, distributes writes evenly across all memory blocks. Because the same block is not written over and over, wear is balanced and the aging of the SSD is delayed.
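The idea behind wear leveling can be illustrated with a toy simulation. This is only a sketch under simplifying assumptions: each write costs one erase of one block, and the "controller" simply picks the least-worn block. Real firmware is far more elaborate, and the `simulate` function is hypothetical.

```python
def simulate(num_blocks: int, writes: int, leveled: bool) -> list:
    """Return per-block erase counts after a number of writes.

    leveled=True  -> each write goes to the block with the fewest erases.
    leveled=False -> every write hits block 0 (no wear leveling at all).
    """
    erase_counts = [0] * num_blocks
    for _ in range(writes):
        if leveled:
            # Pick the least-worn block; ties resolve to the lowest index.
            block = min(range(num_blocks), key=erase_counts.__getitem__)
        else:
            block = 0
        erase_counts[block] += 1
    return erase_counts


naive = simulate(num_blocks=8, writes=1000, leveled=False)
leveled = simulate(num_blocks=8, writes=1000, leveled=True)
print("worst-case block wear without leveling:", max(naive))
print("worst-case block wear with leveling:   ", max(leveled))
```

With the same workload, the most-worn block sees an eighth of the erases once writes are spread across all eight blocks.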


Another measure to extend the lifetime of an SSD is to activate the TRIM function. The TRIM command has provided improved memory management since Windows 7 was released. If the operating system was installed directly onto the SSD, it is usually activated automatically. You can also activate the command yourself via the command line (fsutil behavior set DisableDeleteNotify 0, if TRIM is deactivated). Activation is made easier with the tools that SSD manufacturers offer online for monitoring and maintaining solid state disks free of charge.
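If you would rather check the setting from a script, the sketch below reads the same flag with `fsutil behavior query DisableDeleteNotify` and interprets the result (0 means TRIM is on, 1 means it is off). It assumes a Windows system, possibly an elevated prompt, and output that contains a `DisableDeleteNotify = 0` or `= 1` entry; the exact wording varies between Windows versions, and both helper functions are illustrative.

```python
import re
import subprocess
from typing import Optional


def parse_trim_enabled(fsutil_output: str) -> Optional[bool]:
    """Interpret fsutil output: 0 -> TRIM enabled, 1 -> TRIM disabled.

    Returns None if no DisableDeleteNotify value is found. Newer Windows
    versions print one line per file system; this reads the first one.
    """
    match = re.search(r"DisableDeleteNotify\s*=\s*([01])", fsutil_output)
    if match is None:
        return None
    return match.group(1) == "0"


def query_trim_enabled() -> Optional[bool]:
    """Run the query on Windows (not called here; may need admin rights)."""
    result = subprocess.run(
        ["fsutil", "behavior", "query", "DisableDeleteNotify"],
        capture_output=True, text=True, check=True,
    )
    return parse_trim_enabled(result.stdout)


# Example with a sample output string rather than a live query:
print(parse_trim_enabled("DisableDeleteNotify = 0"))
```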

Over-provisioning is an optional component of intelligent storage management. If the function is activated, an operational “special memory” becomes available to the SSD controller. This can then be used as a kind of cache for managing and relocating temporary data. Over-provisioning can support SSD maintenance via garbage collection, wear leveling, and bad block management, for example. When the function is activated, however, you forgo some storage capacity. Not all SSDs support this function.
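The capacity trade-off is easy to quantify. The sketch below uses the commonly cited definition of the over-provisioning ratio, spare capacity divided by user-visible capacity. The 256GB/240GB figures are a hypothetical example, not the specification of any particular drive.

```python
def over_provisioning_percent(physical_gb: float, user_gb: float) -> float:
    """Spare area as a percentage of the user-visible capacity."""
    if user_gb <= 0 or physical_gb < user_gb:
        raise ValueError("need 0 < user_gb <= physical_gb")
    return (physical_gb - user_gb) / user_gb * 100


# Hypothetical drive: 256GB of flash, 240GB exposed to the user.
spare = over_provisioning_percent(physical_gb=256, user_gb=240)
print(f"over-provisioning: {spare:.1f}%")
```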


As a user, you can also do something to increase the lifetime of the SSD. You can outsource backup directories for larger and write-intensive data backups to inexpensive HDDs. Folders for temporary files and browser profile folders, into which a lot of data is permanently written, do not have to be on an SSD. System-relevant files, which are also responsible for the performance of Windows (e.g., pagefile.sys, hiberfil.sys), should remain on the SSD in order to guarantee efficient system performance.

In addition to the most intelligent memory management possible, other factors are also decisive for the service life of the electronic memory. It is important to know how an SSD should be stored and handled. Thermal problems (e.g., high ambient temperatures) and high humidity can damage the memory or shorten its service life. Mechanical-physical influences (e.g., from falling) are less of a threat to an SSD than to an HDD, but damage from mechanical forces cannot be completely ruled out.


Electronic factors can also influence the lifetime of an SSD. The controller (meaning the control unit of an SSD) is particularly susceptible to surge damage. Data can also be lost if an SSD is left unused for a long time. As a precaution, you should check on it occasionally, use it briefly, or at least boot the device. Otherwise, a loss of cell charge can lead to data degradation. Among other things, this can result in bit errors that, despite error correction, trigger firmware corruption and thereby disable an SSD. SSDs should therefore not be used for the permanent offline archiving of data.

Other factors include defective flash semiconductor memories, incorrectly programmed firmware and firmware updates, and memory management algorithms that have not been programmed optimally. SSDs are generally technologically complex. In terms of possible sources of error, malfunctions and negative influences that can end or at least limit service life, they are inferior to the simpler, classic, magnetic storage technology of HDDs. Of course, user errors and other factors can also lead to data loss, such as corrupt files, faulty file systems and file allocation tables, viruses, accidental formatting and the unplanned deletion of files, folders, and partitions.

Results of an experiment lasting a year and a half / Sudo Null IT News

But which SSD should you choose?

A year and a half ago, a Tech Report journalist decided to conduct an experiment to identify the most reliable SSDs. He took six drives (a Corsair Neutron GTX, an Intel 335 Series, two Kingston HyperX 3K units, a Samsung 840, and a Samsung 840 Pro) and put all six through a cyclic read/write process. The memory capacity of each drive was 240-256 GB, depending on the model.


It should be said right away that all six models successfully withstood the load declared by the manufacturer. Moreover, most models have withstood more read-write cycles than stated by the developers.

However, 4 out of 6 models gave up before 1 PB of data had been “pumped” through the disk. But two drives that took part in this “iron death” endurance run (a Kingston and the Samsung 840 Pro) even withstood 2 PB, and only then failed. Of course, a sample of 6 SSDs cannot serve as an indicator of performance for all SSDs without exception, but it is still somewhat representative. The cyclic read-write procedure is also not an ideal indicator, because drives can fail for a variety of reasons. But the test results are very interesting.

One of the conclusions: manufacturers are quite conservative in setting the endurance limit for their drives. As mentioned above, all of the SSDs exceeded the rated limit for the amount of data written.

As for the models themselves, the first to fail was the Intel 335 Series. This model has one peculiarity: it stops working as soon as bad sectors appear. Immediately after that, the drive enters read-only mode, and then turns into a “brick” completely. If it weren’t for the “stop on failure” instruction, the SSD might have lasted longer. Problems with the drive began after it passed the 700 TB mark. The information on the drive remained readable until the reboot, after which the drive became a brick.

The Samsung 840 Series successfully reached the 800 TB mark, but started showing a lot of errors starting at 900 TB and failed without any warning before reaching the petabyte mark.

The Kingston HyperX 3K failed next. This model also has an instruction to stop working once a certain number of bad sectors appear. Towards the end, the device began to issue notifications of problems, signaling that the end was near. After the 728 TB mark, the drive went into read-only mode, and after a reboot it stopped responding.

The Corsair Neutron GTX was the next victim, after passing the 1.1 PB mark. By then the drive already had thousands of bad sectors, and it began to issue a large number of warnings about problems. Even after another 100 TB, the drive still allowed data to be written. But after another reboot, the device was no longer even detected by the system.

Only two drives were left, the Kingston and the Samsung 840 Pro, which heroically continued to work, even reaching the 2 PB mark.

The Kingston HyperX uses data compression whenever possible, but the tester wrote incompressible data to keep the test fair. For this he used Anvil’s Storage Utilities, a program for running read/write tests.

The disk performed well, although between 900 TB and 1 PB there were already fatal errors, plus bad sectors. There were only two errors, but it’s still a problem. After the disk failed at 2.1 PB, it was no longer detected by the system after a reboot.

The last iron soldier to fall in this battle was the Samsung 840 Pro.

The drive passed the 2.1 PB mark and failed.

Well, there is no happy ending: everyone died, unfortunately. But it could not be otherwise. The point of the test is to wait for every participating SSD to fail.

Performance

The tester performed a series of tests to determine the performance of the models that participated in the test. Here are the results:

It is worth reiterating that neither the sample nor the test is fully representative, but certain conclusions can be drawn.

SSD drive life — a detailed overview

Let’s talk about the durability of SSD drives. Let’s find out how long they live, calculate the average life of a regular SSD and an M.2 drive, and also try to extend that life. We will use calculation formulas and third-party programs. So let’s go!


Types of flash memory: what you need to know

There are several types of flash memory. The table below shows the main differences.

| Characteristic NAND | QLC |

Let’s talk about optimization to improve working speed and extend service life.


It shows the volume that is guaranteed to be overwritten without errors. Based on TBW, the utility estimates how much working time the SSD has left.
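How such an estimate might be derived can be sketched in a few lines. This assumes the utility simply extrapolates the average write rate observed so far, which is an assumption and not a description of any specific tool. The function name and the sample numbers are hypothetical.

```python
def estimated_years_left(rated_tbw: float,
                         written_tb: float,
                         days_in_use: float) -> float:
    """Linear extrapolation: remaining TBW divided by the average daily writes."""
    if written_tb <= 0 or days_in_use <= 0:
        raise ValueError("need some write history to extrapolate from")
    daily_writes_tb = written_tb / days_in_use
    remaining_tb = max(rated_tbw - written_tb, 0.0)   # never negative
    return remaining_tb / daily_writes_tb / 365


# Hypothetical drive rated for 1200 TBW, with 60 TB written in its first year.
years = estimated_years_left(rated_tbw=1200, written_tb=60, days_in_use=365)
print(f"estimated time left: about {years:.0f} years")
```

As the endurance experiment described earlier suggests, drives can outlast their rated TBW, so an estimate like this is better read as a warranty horizon than as a failure date.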


But a small error is acceptable (about 50-100 MB/s).

Click “Scan” and wait for the scan to complete.


But if instead of zero there is “1”, then it is turned off.


Each update patches old holes, improves the speed of the OS and increases stability.
