MSI Radeon R9 290X Gaming 4G Review

Review index:

- 1 – Gallery

- 2 – GPU Info: GPU Caps Viewer, GPU-Z

- 3 – Benchmarks

- 4 – Burn-in Test and Throttling

- 5 – Conclusion

I received this card several weeks ago but didn’t find the time to write about it. Even though the Radeon R9 290X was launched around a year ago, it has no successor yet. So a quick review is still worthwhile, especially to update the benchmark comparison tables for the upcoming GTX 900 reviews…

MSI R9 290X Gaming 4G is a graphics card that belongs to MSI’s Gaming Series. Here are all Series ranked from the top line (Lightning) to the bottom line (CLASSIC):

This R9 Gaming is a card for gamers. Although you can overclock it, this card is not intended for overclockers because some OC features like voltage check points are missing. These features are available on the Hawk and Lightning series.

This card is based on the Hawaii XT GPU. More information about this GPU can be found on this page.

MSI R9 290X Gaming 4G homepage can be found HERE.

1.1 – Bundle

I received the card directly from MSI without bundle… so no picture!

1.2 – The Graphics Card

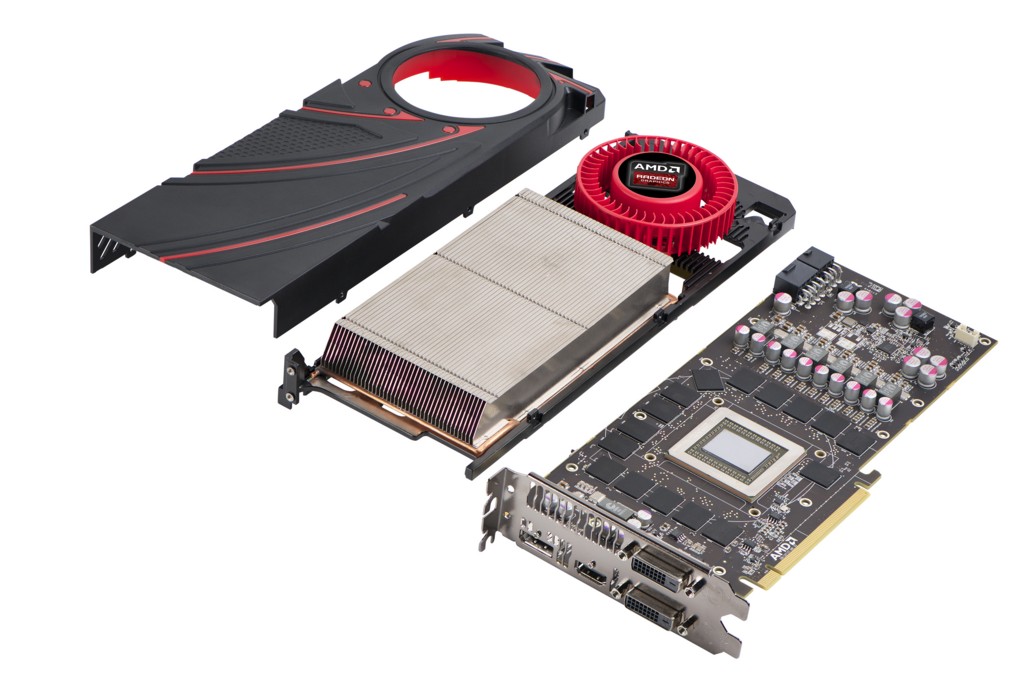

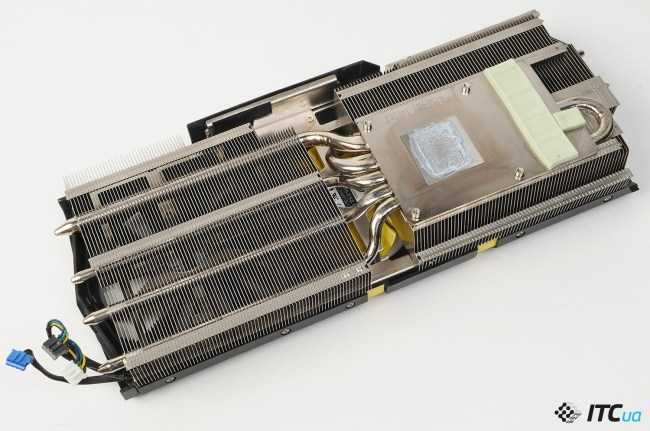

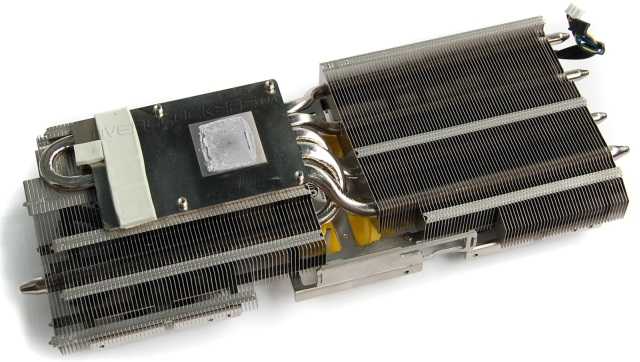

MSI has equipped its R9 290X with a custom VGA cooler based on the famous Twin Frozr IV cooler technology. This is a very efficient cooler that manages to keep temperatures low more or less quietly: at idle, the VGA cooler is nearly noiseless, while under heavy load the noise is tolerable.

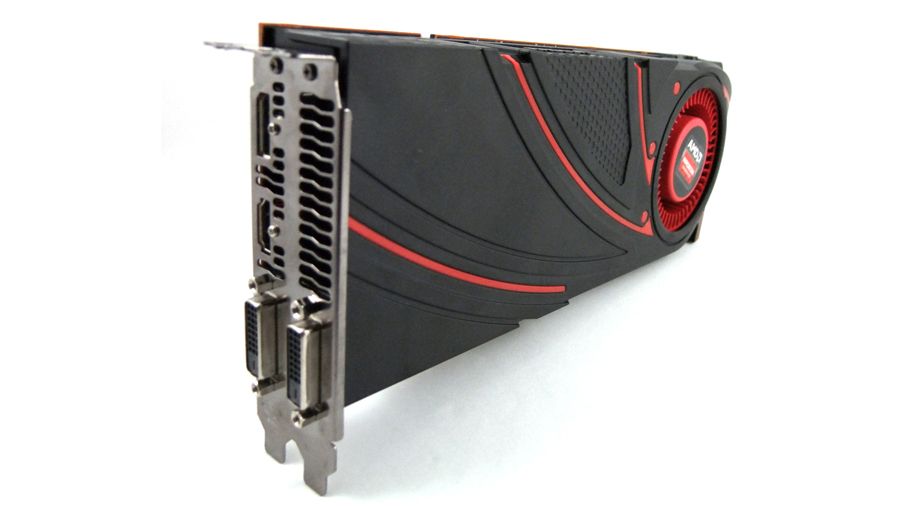

MSI R9 290X comes with a backplate which is a good point, and includes the following output connectors:

– 1 x DisplayPort 1.2

– 1 x HDMI 1.4 (see HERE for the bandwidth of the HDMI 1.4 interface).

– 2 x DVI

This card does have a small design problem, however: once the PCI-E power cables are plugged in, you can’t easily unplug them because the heat sink is too close to the power connectors:

The dirty solution: just remove/break the small tie on each power connector!

A weird design flaw. It looks like nobody at MSI’s labs tested this card before launch day… or are the PSUs at MSI all like my PSU, without the tie?

Tools:

– GPU Caps Viewer 1.22.0

– GPU Shark 0.9.2

– GPU-Z 0.8.0

This MSI R9 290X has a GPU core clock of 1030MHz (reference clock: 1000MHz). The 4GB of GDDR5 memory is not overclocked and runs at the reference clock speed of 1250MHz real speed (or 5GHz effective speed).

I benchmarked MSI’s R9 290X with several GPU tests. See THIS PAGE for all results in 1920×1080 fullscreen mode.

By default, Radeon cards work with PowerTune set to 0% in the Overdrive section of the Catalyst Control Center. With this setting, the R9 290X runs at around 960MHz when it’s stressed by FurMark. 960MHz is lower than the normal 3D clock speed (1030MHz). Why? Because PowerTune tries to keep the power consumption under a threshold defined by the PowerTune setting.

At idle, the total power consumption of the testbed is 55W and the GPU temperature is 31°C which is a nice temperature.

With a PowerTune of 0%, the total power consumption of the testbed is 412W and the GPU temperature reaches 82°C.

When FurMark is running, the CPU pulls around 25W, and the rest of the testbed accounts for about 55W (the idle figure above). The efficiency factor of the Corsair AX 860i is 0.92. An estimate of the power consumption of the R9 290X is:

P = (412-25-55) * 0.92

P = 305W
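The estimate can be reproduced with a few lines of Python. This is only a sketch of the arithmetic used in this review: the function name is made up for illustration, and the 55W subtracted for the rest of the testbed is an assumption taken from the idle reading, chosen because it is the value that reproduces the 305W result.

```python
def estimate_gpu_power(wall_watts, cpu_watts, rest_of_system_watts, psu_efficiency):
    """Rough GPU power estimate from a wall-socket reading.

    Mirrors the review's arithmetic: subtract the CPU and the rest of the
    testbed from the wall reading, then apply the PSU efficiency factor.
    """
    remaining = wall_watts - cpu_watts - rest_of_system_watts
    return remaining * psu_efficiency


# Values from the review: 412W at the wall, ~25W for the CPU, 0.92 efficiency.
# The 55W for the rest of the testbed is an assumption (the idle reading).
default_powertune = estimate_gpu_power(412, 25, 55, 0.92)
print(f"Estimated GPU power: {default_powertune:.0f} W")
```

The same function can be fed any other wall reading, for example one taken with a different PowerTune setting.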

To unleash the beast hidden behind the Twin Frozr IV cooler, you have to set the PowerTune to 20%:

Let’s see the numbers after a 5-min burn-in test session:

– total power consumption: 462W

– GPU temperature: 86°C

With a PowerTune of +20%, an estimation of the power consumption of the R9 290X is:

P = (462-25-55) * 0.92

P = 351W

351W!!! That’s an insane power consumption. But the card is designed to withstand it, and it does. The Twin Frozr IV does its job by evacuating the heat produced by the GPU. But to cool the GPU properly, the VGA cooler has to make some noise…

The R9 290X Gaming 4G has successfully passed the FurMark stress test. This card is FurMark-proof.

This R9 290X Gaming 4G is a great product by MSI. The card is built with a solid mechanical structure (backplate, Twin Frozr IV), does not suffer from throttling when it’s under heavy graphics load and has a nice design.

Final Verdict

8/10

PROS:

– 4GB of graphics memory

– nice GPU temperature at idle state (31°C)

– quiet VGA cooler at idle

– factory-overclocked

– strong power circuitry, no GPU throttling

– backplate for mechanical protection

– DisplayPort 1.2 for 4K gaming

CONS:

MSI R9 290X Lightning Review — Tom’s Hardware

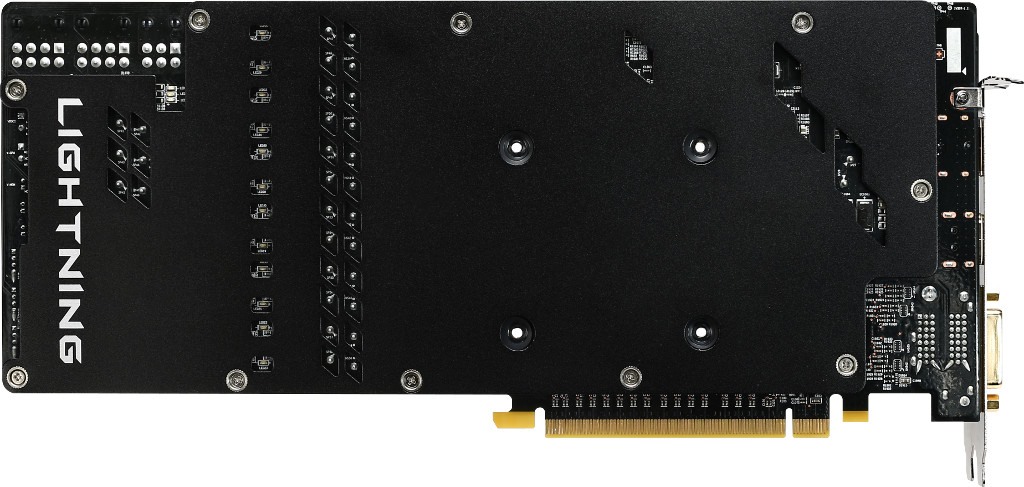

Meet The Largest, Heaviest Radeon R9 290X Of All

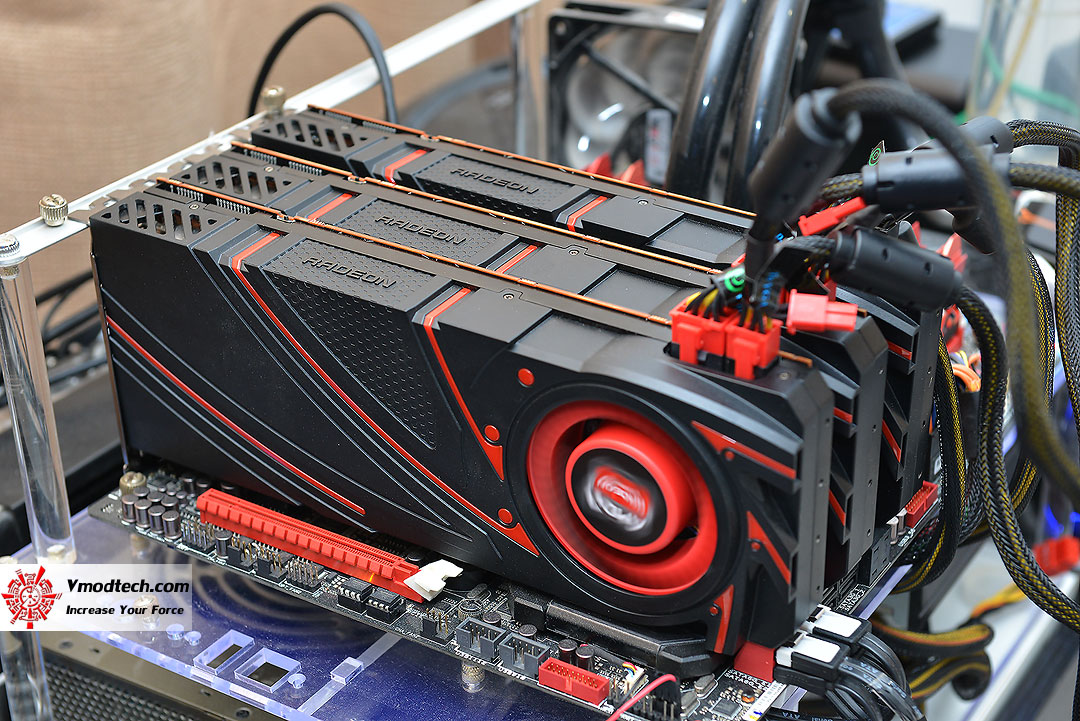

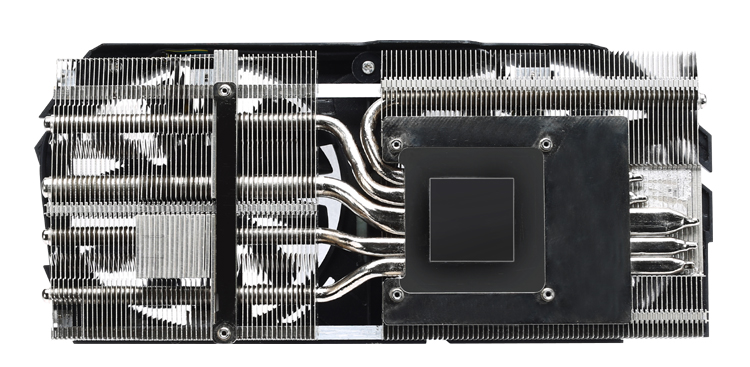

Based on the size of its R9 290X Lightning, it appears that MSI has a thing for overkill. But the company might also be onto something. As we already know, cooling AMD’s Hawaii GPU properly is what separates the men from the boys. Forget about re-purposing cooling solutions from other cards. Asus tried that and it didn’t go over well at all. Instead, MSI sent over a three-slot take on the Radeon R9 290X, which, as you can see, employs a trio of cooling fans and a lot of metal dedicated to keeping that hot graphics processor operating within its comfort zone.

This thing isn’t a toy. A $700 price tag, tied for the most-expensive Radeon on Newegg, makes you think hard before dropping nearly $150 more than the cheapest models with aftermarket cooling.

Even the Lightning’s box is massive; it includes an extra compartment with lots of accessories and a certificate of ownership. You’re dealing with a limited-edition piece of hardware, after all.

The snazzy-looking board and high-end packaging (not to mention lofty price) naturally have us expecting quite a bit out of MSI’s R9 290X Lightning. Historically, the company reserves this branding for its flagship models. Does this board live up to that standard? It’s time to break out the lab gear and find out.

Box Style and Contents

The number of accessories you get with this card is impressive, though we question the wisdom of including 6-to-8-pin adapter cables. Why solder eight-pin plugs to the card and then encourage users to fry thinner cables that might not be suitable for driving a high-end GPU? The same goes for the bundled Molex adapter. Who in their right mind would make up the balance of too-few cables by tapping into a pair of four-pin plugs? We’ve woken up to the smell of smoke in the morning; it’s not fun. Seriously. We expect folks who buy $700 graphics cards to use similarly enthusiast-oriented PSUs.

Beyond the manual and CD (which contains the drivers, MSI’s Afterburner software, and a fan control utility for the three fans), the box contains a metal plate, thermal pads, and screws. The plate fits over the DC-DC converters when you embark on an extreme overclocking mission, go the water cooling route, and remove the massive heat sink.

Lab Note about the Dimensions

The dimensions reported here don’t necessarily match the manufacturer’s official technical specifications. Rather, we measure them by hand to assure they’re correct. The image and chart below should help illustrate what each measurement actually means. Auxiliary PCI Express power connectors are not included; they have to be added depending on the power plug and cable design.

Size Comparison

MSI’s R9 290X Lightning is as long as the Sapphire R9 290X Tri-X. However, it monopolizes three expansion slots, and is a tad higher, too. As a result, we’re fairly certain it’s the bulkiest Radeon R9 290X we’ve tested thus far.

| Models | Length L | Height H | Depth D1 | Depth D2 |
|---|---|---|---|---|
| Asus R9290X-DC2OC-4GD5 R9 290X DirectCU II OC | 11.3″ / 288 mm | 5.6″ / 142 mm | 1.5″ / 38 mm | 0.16″ / 4 mm |
| Sapphire Tri-X OC R9 290X | 12.0″ / 305 mm | 4.5″ / 114 mm | 1.5″ / 38 mm | 0.16″ / 4 mm |
| Gigabyte GV-R929XOC-4GD R9 290X Windforce OC | 11.1″ / 282 mm | 4.8″ / 123 mm | 1.5″ / 38 mm | 0.16″ / 4 mm |
| HIS R9 290X IceQ X² Turbo | 11.7″ / 297 mm | 5.3″ / 135 mm | 1.4″ / 36 mm | 0.16″ / 4 mm |
| MSI R9 290X Gaming 4G | 11.0″ / 279 mm | 4.7″ / 120 mm | 1.5″ / 38 mm | 0.24″ / 6 mm |
| MSI R9 290X Lightning | 12.0″ / 305 mm | 4.8″ / 122 mm | 2.1″ / 53 mm | 0.2″ / 5 mm |

Weight Comparison

The weight of a card might be interesting if you’re trying to figure out if any additional support is needed, or to calculate the amount of stress your motherboard might be under in a CrossFire-based setup. Since MSI’s offering is by far the heaviest in this field, we want to emphasize the importance of bracing it somehow, even if you’re only able to use cable ties.

| Models | Weight |
|---|---|
| Asus R9290X-DC2OC-4GD5 R9 290X DirectCU II OC | 2.5 lbs / 1135 g |
| Sapphire Tri-X OC R9 290X | 2.25 lbs / 1022 g |
| Gigabyte GV-R929XOC-4GD R9 290X Windforce OC | 2.32 lbs / 1053 g |
| HIS R9 290X IceQ X² Turbo | 2.15 lbs / 976 g |
| MSI R9 290X Gaming 4G | 2.29 lbs / 1038 g |
| MSI R9 290X Lightning | 3.49 lbs / 1581 g |

AMD Radeon R9 290X Review

The GeForce GTX Titan blew us all away eight months ago with its mind-blowingly fast GPU, cramming 7080 million transistors into a 561mm² die to provide massive processing power and bandwidth. The catch, of course, was that Nvidia wanted (and still wants) $1,000 for it, a sum that didn’t seem to prevent cards from flying off shelves even though it’s more than our entire entry-level rig.

Nvidia followed up three months later with the equally impressive GTX 780 for a more plausible $650, where it remains today. Neither of those cards had much of an impact on AMD’s sales as the company’s most expensive offering at the time was a $450 Radeon HD 7970 GHz Edition (the 7990 arrived a few months later).

Eagerly awaiting AMD’s response, we were disappointed that the first run of Rx 200 cards were rebadges. For instance, the R7 260X and R9 270X are the Radeon HD 7790 and 7870 overclocked, while the R9 280X is an underclocked version of the HD 7970 GHz Edition — an equivalency chart can be found in our previous Radeon R7/R9 review. We were less than impressed.

You could easily argue that Nvidia did the same thing with its GTX 770 (a rebadged GTX 680) and the GTX 760 (a rebadged OEM GTX 660), but these products followed the highly impressive GTX 780 and GTX Titan, which were new parts. Moreover, the GTX 770 was not only faster than the GTX 680, but it also arrived with $100 knocked off its price.

Although AMD’s new series got off to an underwhelming start, we knew better things would eventually follow, and this week’s Radeon R9 290X is the first truly new product of its range. In a sense, the R9 290X (codenamed «Hawaii XT») could be considered AMD’s Titan, as it takes the Tahiti architecture and stuffs it with nearly 2000 million more transistors.

It’s the most complex and powerful GPU AMD has created and by no coincidence, it’s also the company’s most expensive single-GPU product to date matching the Radeon HD 7970. Before you click away, that’s «only» $550, which is substantially cheaper than Nvidia’s solution.

The question, of course, is whether the R9 290X can actually keep company with Nvidia’s flagships…

Radeon R9 290X in Detail

The R9 290X measures 27.5 cm long (10.8 in), a typical length for a modern high-end graphics card and roughly the same as the original HD 7970. Its GPU core is clocked at 1000MHz, the same frequency as the 7970 GHz Edition, while the GDDR5 memory operates at just 5GHz, 17% slower than the 7970. Still, pairing that frequency with a 512-bit memory bus gives the R9 290X a whopping theoretical bandwidth of 320GB/s, an 11% advantage over the HD 7970 GHz Edition.
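The bandwidth figures follow from the bus width and the effective data rate. The snippet below is a minimal sketch of that arithmetic; the function name is illustrative, and the 384-bit bus and 6GHz effective rate used for the HD 7970 GHz Edition are that card's published specifications rather than numbers quoted in this paragraph.

```python
def memory_bandwidth_gb_s(bus_width_bits, effective_rate_gbps):
    """Theoretical bandwidth: bits per transfer times transfers per second, in bytes."""
    return bus_width_bits * effective_rate_gbps / 8


r9_290x = memory_bandwidth_gb_s(512, 5.0)      # 512-bit bus, 5GHz effective
hd_7970_ghz = memory_bandwidth_gb_s(384, 6.0)  # assumed specs, see lead-in

advantage_percent = (r9_290x / hd_7970_ghz - 1) * 100

print(f"R9 290X: {r9_290x:.0f} GB/s")
print(f"HD 7970 GHz Edition: {hd_7970_ghz:.0f} GB/s")
print(f"Advantage: {advantage_percent:.0f}%")
```

This shows why a slower memory clock can still yield more bandwidth: the wider bus more than compensates for the lower data rate.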

While the HD 7970 had a 3GB frame buffer, the R9 290X has been upgraded to 4GB. For the most part, no games will use more than 2 to 3GB of memory at resolutions up to 2560×1600. This means you will need to play on an extreme multi-monitor setup where more than one 290X would be required to provide playable performance and take advantage of the larger memory buffer.

The R9 290X’s core configuration also differs from the 7970’s. The new card carries an incredible 2816 SPUs, 176 TAUs and 64 ROPs, up 38% from 2048 SPUs and 128 TAUs, with 100% more ROPs.

The «Hawaii XT» GPU is cooled by a massive aluminum vapor chamber heatsink with 41 fins measuring 15.0cm long, 8.5cm wide and 2.5cm tall. This is virtually the same cooler AMD used on its reference HD 7970. The vapor chamber design was first implemented by the Radeon HD 5970 and was later adopted by Nvidia’s GTX 580. Heat is dispersed by a 75x20mm fan that pulls air in from the case and pushes it out the back.

The R9 290X’s fan operates quietly for the most part, but despite the card’s impressive idle consumption, it still chugs up to 300 watts under load (20% more than the GTX Titan), so the fan does kick up during a heavy gaming session.

To feed the card enough power, AMD includes 8-pin and 6-pin PCI Express connectors — the same setup you’ll find on the HD 7970, GTX 780 and other demanding boards.

Naturally, the R9 290X supports Crossfire, but there are no bridge connectors on this card. This makes the R9 290X the first graphics card to support Crossfire without needing a hardware strap.

The only other connectors are on the I/O panel. Our AMD reference sample has a pair of dual-link DVI connectors, an HDMI 1.4a port, a DisplayPort 1.2 socket and a dual BIOS switch. The BIOS switch can be found on top of the graphics card and it lets you select between what AMD calls «Quiet Mode» and «Uber Mode».

Speaking of which, by default the R9 290X runs in what AMD calls Quiet Mode, which sets the default maximum fan speed to 40%. If you want to keep the R9 290X cooler, however, use Uber Mode, which sets the default maximum fan speed to 55%. Of course, these options can be further customized in the Catalyst Control Center, which now features new OverDrive options.

Because power and performance are now so closely related, the idea behind Uber Mode is to keep the R9 290X cooler so that it always performs to its fullest. However, in our office, which had an ambient room temperature of 21 degrees during testing, we saw no difference in performance between the Uber and Quiet modes.

The R9 290X supports a maximum resolution of 2560×1600 on up to three monitors, as well as Ultra HD (also known as UHD or 4K) over both HDMI 1.4b (low refresh) and DisplayPort 1.2.

Many Ultra HD/4K monitors can achieve a 60Hz refresh rate using a tiled display configuration. AMD Eyefinity technology can be leveraged to support these tiled displays by making two 2Kx2K tiles act as one 4Kx2K monitor. AMD has taken steps to make this easy for end users by providing an Automatic AMD Eyefinity Configuration feature. This feature allows an automatic «plug and play» configuration of supported Ultra HD/4K tiled displays when a Display Port cable is connected.

When you hot-plug a tiled 4K monitor (such as the Sharp PN-K321 or Asus PQ321Q), a 2×1 display group will be automatically created and the two tiles will be combined to act as one monitor. This configuration will be remembered and re-enabled when the display is unplugged or the system is rebooted.

It is also possible to manually disable the display group in CCC, and have the two tiles act as independent monitors. Additionally, with a multi-stream hub using the mini-DisplayPort 1.2 sockets, the card can power up to six screens.
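To make the tiled-display idea concrete, here is a small conceptual sketch of how two side-by-side tiles add up to one logical 4K surface. It is not AMD's driver logic or any real Eyefinity API; the `Tile` class, the `combine_side_by_side` helper and the 1920×2160 tile size are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tile:
    width: int
    height: int


def combine_side_by_side(tiles):
    """Merge tiles arranged in a single row into one logical display."""
    heights = {tile.height for tile in tiles}
    if len(heights) != 1:
        raise ValueError("all tiles in a row must share the same height")
    total_width = sum(tile.width for tile in tiles)
    return Tile(total_width, heights.pop())


# Hypothetical 2x1 display group: two 1920x2160 tiles (assumed tile size).
left = Tile(1920, 2160)
right = Tile(1920, 2160)
combined = combine_side_by_side([left, right])
print(f"{combined.width}x{combined.height}")
```

Disabling the display group corresponds to treating `left` and `right` as two independent monitors again.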

AMD Radeon R9 290X 4GB Review

Manufacturer: AMD

UK price (as reviewed): MSRP £449.99 (inc VAT)

US price (as reviewed): MSRP $549 (ex Tax)

The first stage of AMD’s Volcanic Islands GPU launch, covering the Radeon R7 240 up to the Radeon R9 280X, brought with it new information about technologies such as TrueAudio and Mantle. From a pure hardware standpoint, however, it was a little disappointing given that it was mostly just a rebrand of existing HD 7000 series products. Today, however, we can finally pull the wrapping off of AMD’s new flagship card, the Radeon R9 290X 4GB. It’s got a new GPU and everything.

AMD’s new top-tier single-GPU card is launching on these shores with a retail price of £450. It therefore undercuts the card it’s designed to compete with, Nvidia’s GTX 780 3GB, by around £50 at the time of writing, and AMD is opting, for now at least, to leave the somewhat ludicrous price bracket occupied by GTX Titan solely to Nvidia. AMD is also positioning the R9 290X as the card to have for gaming across multiple monitors or at 4K. It’s worth noting that the final piece of the Volcanic Islands pie, the R9 290, is still to launch, as this review is solely for the R9 290X.

Under the Hood

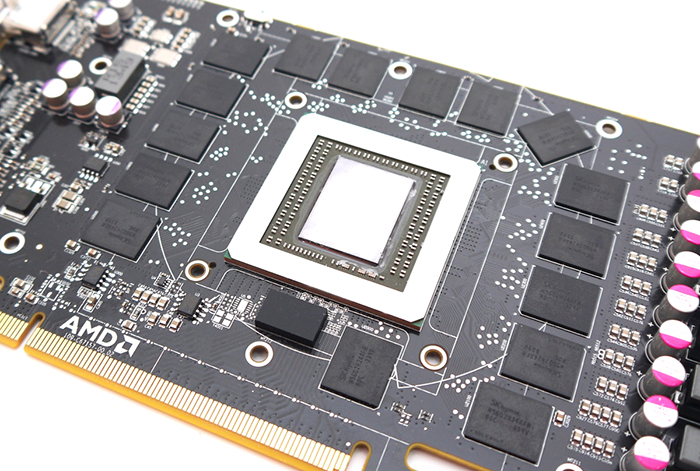

The R9 290 series Hawaii GPU is built on a 28nm process node and based on the existing Graphics Core Next architecture, which is no surprise given AMD’s commitment to it through Mantle. With 6.2 billion transistors and measuring 438mm², it’s AMD’s biggest GCN GPU yet and represents a 24 percent raw die size increase over the HD 7970. The GPU also now supports the MQSAD instruction set. This new GPU is fully enabled on R9 290X cards, with no sections left unused, and clocked at a rounded 1GHz.

At a high level, Hawaii is organised mainly into four of what AMD has termed shader engines. Each of these features its own geometry processor, which is load balanced with the others and which still contains a geometry assembler, vertex assembler and tesselator. This means that the dual front-end design of Tahiti has been upgraded to a quad front-end one here.

Compute functionality has also been expanded thanks to a quadrupling of Tahiti’s asynchronous compute engine (ACE) count from 2 to 8. These ACEs work in parallel with the main graphics command processor such that graphics and compute tasks can be processed at the same time. The ACEs have direct access to the L2 cache and global data share, and each of them can handle up to eight queues at once. AMD has evidently utilised GCN’s scalable nature to produce a more parallel GPU that should improve its multi-tasking.

Hawaii’s main processing grunt comes from its 44 Compute Units (11 per shader engine), a 37.5 percent increase over Tahiti. These are mostly unchanged, and as such each one features 16KB of L1 cache, four texture units and four of AMD’s SIMD engines. These SIMD engines are the smallest individual unit of work in the GCN architecture, and consist of 16 stream processors apiece and a 64KB register file. The total texture unit and stream processor counts of Hawaii are thus 176 and 2,816 respectively. By comparison, the GTX 780 GPU has 192 and 2,304. The R9 290 series GPU’s L2 cache is now 1MB, a 33 percent improvement, and likewise AMD boasts of a 1TB/sec maximum total L1/L2 bandwidth, again a 33 percent boost.
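The unit counts quoted here can be derived from the per-block figures. The following is a small sketch of that arithmetic; the constant names are our own, and Tahiti's 32 Compute Units is an assumption that is not stated in the text but is consistent with the 37.5 percent increase.

```python
# Per-block figures quoted in the text.
SHADER_ENGINES = 4
CUS_PER_SHADER_ENGINE = 11
SIMDS_PER_CU = 4
STREAM_PROCESSORS_PER_SIMD = 16
TEXTURE_UNITS_PER_CU = 4

# Assumption: Tahiti's Compute Unit count, not given in the text.
TAHITI_COMPUTE_UNITS = 32

compute_units = SHADER_ENGINES * CUS_PER_SHADER_ENGINE
stream_processors = compute_units * SIMDS_PER_CU * STREAM_PROCESSORS_PER_SIMD
texture_units = compute_units * TEXTURE_UNITS_PER_CU
cu_increase_percent = (compute_units / TAHITI_COMPUTE_UNITS - 1) * 100

print(f"Compute Units: {compute_units}")
print(f"Stream processors: {stream_processors}")
print(f"Texture units: {texture_units}")
print(f"Increase over Tahiti: {cu_increase_percent:.1f}%")
```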

As well as the CUs, each geometry processor again has access to its own rasteriser, which doubles Tahiti’s number. Four render back-end units per shader engine complete the graphics pipeline, and as each of these features four ROPs, there are now a whopping 64 in total, which is again twice that of the HD 7970 and R9 280X GPU. Consequently, the card can process almost twice as many pixels per second as these GPUs, 64Gpixels/sec to be precise (64 pixels per clock, 1GHz clock speed), which emphasises AMD’s focus on multi-monitor and 4K set-ups here.
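The ROP count and fill rate can be checked the same way. This is a rough sketch using only the figures in the paragraph above; the function name is illustrative.

```python
def pixel_fill_rate(shader_engines, rbes_per_engine, rops_per_rbe, core_clock_ghz):
    """Return (total ROPs, peak fill rate in Gpixels/sec), one pixel per ROP per clock."""
    total_rops = shader_engines * rbes_per_engine * rops_per_rbe
    return total_rops, total_rops * core_clock_ghz


# Hawaii: 4 shader engines, 4 render back-ends each, 4 ROPs per back-end, 1GHz.
rops, gpixels_per_sec = pixel_fill_rate(4, 4, 4, 1.0)
print(f"{rops} ROPs, {gpixels_per_sec:.0f} Gpixels/sec")
```

This is a theoretical peak; real-world throughput also depends on memory bandwidth and the workload.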

The jump from six to eight 64-bit memory controllers takes Tahiti’s already high 384-bit memory interface to a massive 512-bit one. On the silicon, this is also at a higher density than before, so it actually takes up 20 percent less area despite being a wider bus. It’s connected to 4GB of GDDR5, which is clocked at 5GHz for a total memory bandwidth of 320GB/sec – a 32GB/sec advantage over the GTX 780, GTX Titan, and Radeon HD 7970/R9 280X. The 1GHz lower memory clock speed is a result of the memory interface’s higher density, but the figure AMD is keen to highlight is 50 percent more memory bandwidth per mm2 than the HD 7970 GHz Edition.

Crossfire burns its bridge

The R9 290X also comes with a new CrossFire DMA (direct memory access) engine in the CrossFire compositing block. This allows GPUs in such set-ups to fully communicate over PCIe without the need for an external CrossFire bridge. AMD also assures us that no performance has been lost in the transition to a bridge-less design; indeed, the bandwidth available over PCIe is far greater than over the existing bridge implementations. The company also boasts of scaling somewhere between 1.8x and 2.0x in modern games when using two R9 290X cards versus one.

Finally, also embedded within the Hawaii silicon is AMD’s dedicated audio pipeline, TrueAudio, which you can read about in more detail in our R7 260X coverage. Naturally, thanks to the GCN architecture, Mantle is also supported, with AMD having recently released a blog post outlining some clarifications regarding its new API.

AMD Radeon R9 290X Review

by XbitLabs Team

Last update 29 May 2021

Finally we can talk about the Radeon R9 290X’s performance. AMD’s top graphics card now competes with very powerful rivals: the Nvidia GeForce GTX 780 and GeForce GTX 780 Ti.

AMD’s flagship Radeon R9 290X graphics card with the new Hawaii XT core was released a few months ago. Since then, several beta versions and one official version of the Catalyst driver suite have been released; they were supposed not only to improve the new Radeon’s performance but also to optimize its cooling and noise parameters, which had been criticized in early reviews.

The graphics card market has changed, too. Nvidia seems to have been prepared for AMD’s Radeon R9 290X/290 release, so besides cutting the price of the GeForce GTX 780 and GTX 770 they rolled out the faster GeForce GTX 780 Ti. The market situation for nearly all of the Radeons has also been worsened by the cryptocurrency craze. Coupled with the traditional Christmas excitement, it has provoked a considerable rise in prices and even shortage of AMD-based products. So the bottom price of a reference Radeon R9 290X is about one fourth higher than the price of an original GeForce GTX 780 with high-efficiency cooler and factory overclocking as of the time of our writing this.

Anyway, AMD fans don’t lose hope that the prices will stabilize and the shortage will end. We are also looking forward to getting original versions of AMD’s new cards with better and quieter coolers. Until then we have to content ourselves with testing the reference Radeon R9 290X and comparing it with its current market opponents using new drivers and an extended set of benchmarks.

Specifications and Recommended Price

The following table helps you compare the AMD Radeon R9 290X specifications with the reference versions of AMD Radeon R9 280X, Nvidia GeForce GTX 780 and Nvidia GeForce GTX 780 Ti:

This review has been late due to the sheer lack of test samples, so we won’t delve into details about the architectural differences of the new GPU and the whole graphics card from their predecessors. Suffice it to say that the new Hawaii XT features the same Graphics Core Next architecture as the Tahiti XT but has more muscle. To be specific, it incorporates 2816 instead of 2048 unified shader processors, 176 instead of 128 texture-mapping units, and 64 instead of 32 raster operators. Still manufactured on 28nm tech process, the GPU consists of about 6.2 billion transistors (instead of 4.313 billion) and has grown in size from 365 to 438 sq. mm. The clock rate has remained the same at 1000 MHz (in 3D mode). The memory frequency is lower compared to the Radeon HD 7970 GHz Edition: 5000 vs. 6000 MHz. However, the memory bus is 512-bit now, so the memory bandwidth has grown from 288 to 320 GB/s. Instead of 3 GB, the AMD Radeon R9 290X comes with 4 GB of onboard memory.
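The generational differences listed above are easier to compare as percentages. The snippet below is a quick sketch that uses only the Tahiti XT and Hawaii XT figures from the paragraph; the dictionary layout and the helper name are our own.

```python
def percent_change(old, new):
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


# (Tahiti XT, Hawaii XT) pairs taken from the paragraph above.
specs = {
    "shader processors": (2048, 2816),
    "texture-mapping units": (128, 176),
    "raster operators": (32, 64),
    "transistors (billions)": (4.313, 6.2),
    "die area (sq. mm)": (365, 438),
    "memory data rate (MHz)": (6000, 5000),
    "memory bandwidth (GB/s)": (288, 320),
    "onboard memory (GB)": (3, 4),
}

for name, (tahiti, hawaii) in specs.items():
    print(f"{name}: {percent_change(tahiti, hawaii):+.1f}%")
```

The memory data rate is the only figure that goes down, which the wider 512-bit bus more than offsets in total bandwidth.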

In the software department, the new Mantle API must be noted in the first place. It is supposed to minimize the effect of the API on the game engine code and reduce CPU load, yet its benefits are still debatable. Time will show how useful it really is. Besides it, we can note the DirectX 11.2 support, the exclusive TrueAudio technology and the well-known AMD Eyefinity feature.

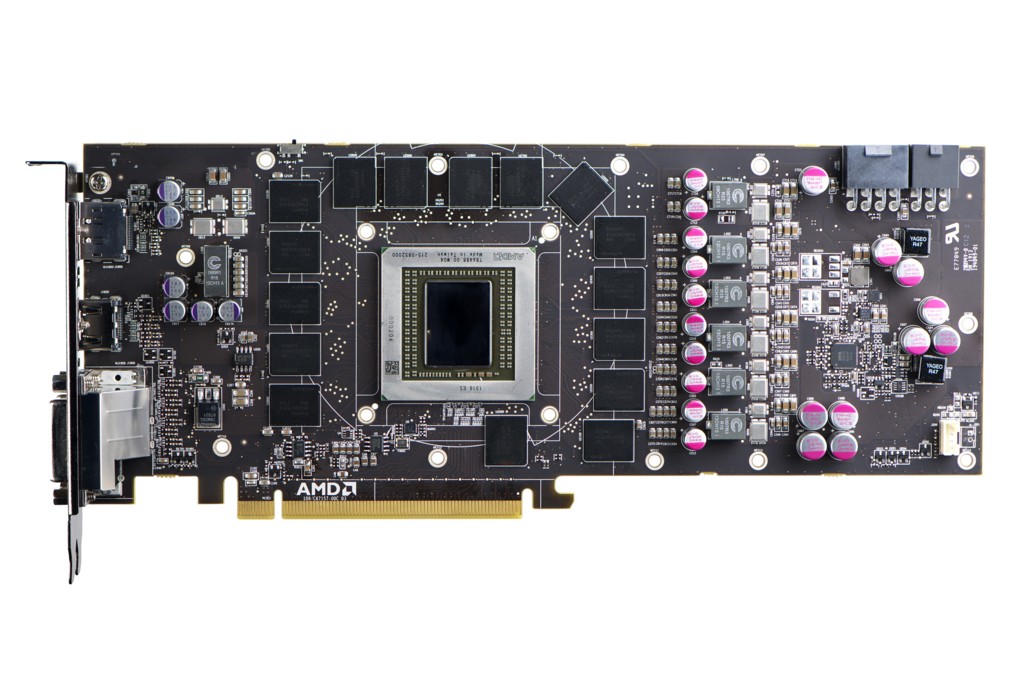

PCB Design and Features

The new AMD Radeon R9 290X follows the classic dual-slot design where the face side of the PCB is covered by the cooling system with a radial fan. The device’s rather boring brick-like appearance is somewhat enlivened by a few sculpted red lines:

The card measures 275x99x39 mm. Its reverse side is exposed.

The AMD Radeon R9 290X has dual-link DVI-I and DVI-D outputs, one HDMI 1.4a connector and one DisplayPort version 1.2.

There’s a vent grid in the card’s mounting bracket to exhaust the hot air from the cooler out of the computer case.

As opposed to its predecessors, the new AMD Radeon R9 290X lacks CrossFireX connectors. Multi-GPU configurations are now built using the PCI Express bus.

Additional power is delivered to the card via one 6-pin and one 8-pin connector. The peak power draw is specified to be 275 watts, which is a mere 25 watts more than required by the Radeon R9 280X.

A BIOS switch can be found in its traditional location:

It allows booting from a different BIOS chip and choosing between two operation modes for the card’s cooler: Uber Mode and Quiet Mode. We’ll tell you more about them shortly in our description of the cooling system.

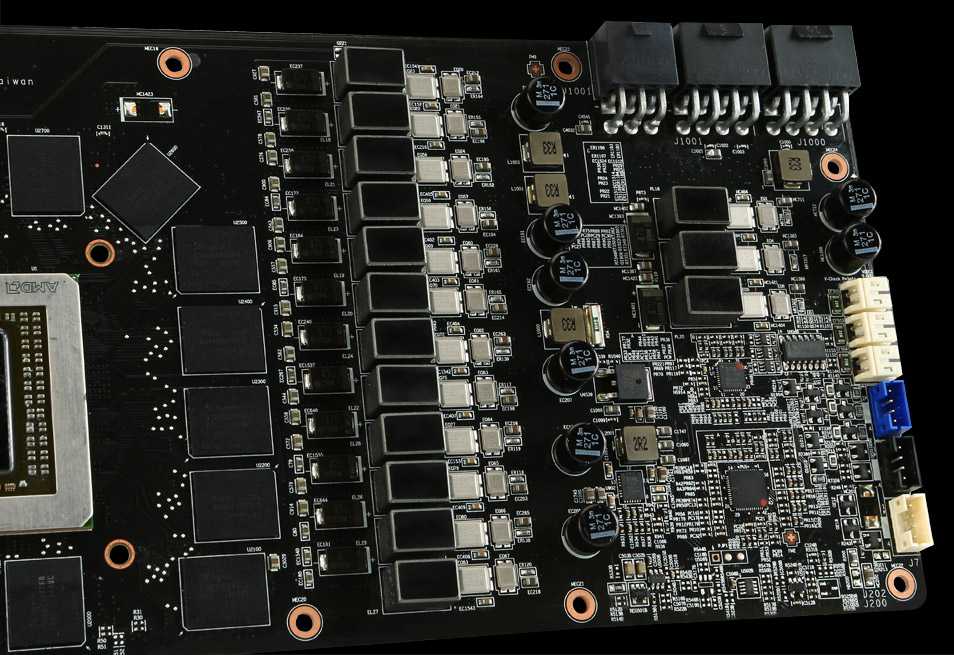

The PCB design is overall similar to that of the Radeon R9 280X and of the earlier Radeon HD 7970 GHz Edition:

The power system includes 5 phases for the GPU, 1 phase for the memory, and 1 phase for PLL. It uses state-of-the-art DirectFET transistors.

The GPU voltage regulator is based on an International Rectifier 3567B controller:

AMD puts an emphasis on it, claiming that the controller helps improve AMD’s PowerTune technology. The voltage regulator now has a resolution of 6.25 mV, so there are as many as 255 possible values in the range of 0 to 1.55 volts.

The GPU looks impressive with its 438 sq. mm die which is 20% larger than the Tahiti. Well, it is still smaller than the GK110 (561 sq. mm). Our sample of the GPU was manufactured on the 31st week of 2013 in Taiwan. Its marking is printed around the chip on the protective metal frame:

The GPU incorporates 2816 unified shader processors, 176 texture-mapping units, and 64 raster operators. It is expected to work at clock rates up to 1000 MHz in 3D applications, depending on load and temperature. The clock rate is dropped to 300 MHz in 2D mode while the voltage is lowered from 1.195 to 0.945 volts.

The ASIC quality is rather high for an engineering sample at 76.2%:

The card comes with 4 gigabytes of GDDR5 memory in FCBGA chips soldered to the face side of the PCB. These are H5GQ2h34AFR R0C components from SK Hynix:

The chips are rated for 6000 MHz but clocked at 5000 MHz on the Radeon R9 290X, so we can expect them to overclock well. The memory bandwidth is anyway quite high with the 512-bit bus: 320 GB/s. That’s higher compared to the GeForce GTX 780 but somewhat worse compared to the GeForce GTX 780 Ti.

Thus, the Radeon R9 290X has the following specs:

Now we can check out its cooling system, temperature and noisiness.

Cooling System: Efficiency and Noise Level

The AMD Radeon R9 290X is equipped with a cooler whose design hasn’t changed much since AMD’s earlier reference coolers. The plastic casing fastened with several screws around the base frame covers a large aluminum heatsink, a steel heat-spreading plate and a radial fan:

The heatsink is soldered to the base which has contact with the memory chips and power components via thermal pads.

The heatsink consists of slim aluminum fins soldered to a copper base:

The base is a large vapor chamber with too much thick, viscous thermal grease in the middle.

The fan drives the air through the heatsink and exhausts it out of the computer case. The 70mm blower is made by FirstD (the FD7525U12D model).

Its speed is PWM-regulated in a range of 1000 to 5500 RPM. The fan has a peak output power of 20.4 watts at 1.7 amperes.

To measure the temperature of the graphics card we ran Aliens vs. Predator (2010) five times at the maximum visual quality settings, at a resolution of 2560×1440 pixels, with 16x anisotropic filtering and with 4x MSAA.

We used MSI Afterburner 3.0.0 beta 17 and GPU-Z version 0.7.4 to monitor temperatures inside the closed computer case. The computer’s configuration is detailed in the following section of our review. All tests were performed at 25°C room temperature.

As we noted above, the reference Radeon R9 290X has two BIOS versions with different fan settings. There are two modes: Silent and Normal (or Quiet and Uber). They differ in how the fan’s speed depends on the GPU’s temperature and clock rate. Here’s what we have in the Silent mode during the looped Aliens vs. Predator test:

At the beginning of the second test cycle the GPU grew as hot as 94°C, triggering thermal throttling (similar to what was first implemented in Intel CPUs a few years ago). The clock rate was lowered whereas the speed of the fan didn’t exceed 2300 RPM (44% of the fan’s full power). Although the noise level is out of the comfort zone, AMD calls this mode quiet. Of course, the card’s performance is lower in this mode since the clock rate can drop down to 700 MHz, which is about 30% lower than the GPU’s default frequency. Thus, the reference design doesn’t seem to be attractive for practical purposes.
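To put the throttling into numbers, here is a short Python sketch. The helper names and the sample log are hypothetical; only the 1000 MHz default clock, the 700 MHz floor and the 94°C threshold come from the test described above.

```python
def clock_drop_percent(default_mhz: float, throttled_mhz: float) -> float:
    """How far the clock has fallen below its default value, in percent."""
    return (default_mhz - throttled_mhz) * 100.0 / default_mhz


def throttled_samples(log, temp_limit_c=94.0, default_mhz=1000.0):
    """Return the (temperature, clock) samples where the GPU sits at the
    thermal limit and runs below its default clock."""
    return [(temp, clock) for temp, clock in log
            if temp >= temp_limit_c and clock < default_mhz]


# Hypothetical monitoring log: (GPU temperature in °C, GPU clock in MHz)
sample_log = [(78, 1000), (90, 1000), (94, 870), (94, 700), (93, 1000)]

print(clock_drop_percent(1000, 700))   # drop at the lowest clock rate mentioned above
print(throttled_samples(sample_log))   # samples that count as throttled
```

A filter like this could be applied to a monitoring log exported from a tool such as GPU-Z, although the log format would have to be parsed first.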

But the main problem is that when you switch to another BIOS and enable the Normal mode, the fan can accelerate up to 49% or 2800 RPM, which is still not enough to avoid the frequency drop. The GPU gets 94°C hot again, just not so quickly – during the fourth test cycle. That’s why we tried to find out the fan’s speed which would prevent the GPU from slowing down. We almost did it at 55% and 3020 RPM, yet the frequency would still sag down to 978 MHz occasionally:

So we sped the fan up to 60% or 3340 RPM:

The GPU was no hotter than 87°C then and didn’t suffer any frequency drops. The card was too noisy at that speed of the fan, of course.

After we took the card apart and replaced its default thermal grease with Arctic MX-4, we carried out our temperature test once again and saw that there were no frequency drops at 55% fan speed.

Replacing the default thermal interface seems to do the card some good. The peak temperature was close to the threshold, though. It is quite possible that the frequency would have dropped if the test had lasted longer.

We measured the noise level with a CENTER-321 electronic noise-level meter in a closed and quiet room of about 20 sq. meters. The noise-level meter was set on a tripod at a distance of 15 centimeters from the graphics card which was installed on an open testbed. The mainboard with the graphics card was placed at an edge of a desk on a foam-rubber tray. The bottom limit of our noise-level meter is 29.8 dBA whereas the subjectively comfortable (not low, but comfortable) level of noise when measured from that distance is about 36 dBA. The speed of the graphics card’s fans was adjusted by means of a controller that changed the supply voltage in steps of 0.5 V.

We’ve included the results of the reference Nvidia GeForce GTX 780 and two original cards, EVGA GeForce GTX 780 Superclocked ACX and MSI Radeon R9 280X Gaming, in the diagram below for the sake of comparison. We’ll also use these cards in our performance tests. The vertical dotted lines mark the top speed of the fans in the automatic regulation mode. There are two such lines for the AMD Radeon R9 290X: for the automatic Silent mode and for 55% fan speed.

Here are the results:

The Radeon R9 290X is the noisiest reference card. We had expected that even before the test, judging by our subjective impressions. The high heat dissipation of AMD’s new flagship means that its noise in the quiet mode is about as high as with the reference GeForce GTX 780 but the latter doesn’t drop its GPU frequency by 30%. When running 3D games at a stable 1000 MHz, the Radeon R9 290X has 55% fan speed, which is unbearably noisy. This graphics card is just not meant for home users.

Overclocking Potential

Our temperature tests make it clear that overclocking the reference Radeon R9 290X in the automatic fan regulation mode is pointless as the clock rate would just drop down as soon as the GPU temperature hit 94°C. That’s why we fixed the fan speed at 65% or 3660 RPM and didn’t increase the core voltage. We managed to increase the GPU and memory clock rates by 130 and 760 MHz, respectively.

The resulting clock rates were 1130/5760 MHz:

The fan worked at 65% of its full speed and roared mercilessly, yet the GPU was as hot as 90°C.

Even though we achieved some success in our overclocking experiment, we have to admit that the reference Radeon R9 290X is no good for overclocking unless you are completely deaf.

Testbed and Methods

Here is the list of components we use in our testbed.

- Mainboard: Intel Siler DX79SR (Intel X79 Express, LGA 2011, BIOS 0590 dated 17.07.2013)

- CPU: Intel Core i7-3970X Extreme Edition 3.5/4.0 GHz (Sandy Bridge-E, C2, 1.1 V, 6x256KB L2 cache, 15MB L3 cache)

- CPU cooler: Phanteks PH-TC14P (2x Corsair AF140 fans, 900 RPM)

- Thermal grease: ARCTIC MX-4

- Graphics cards:

  - Nvidia GeForce GTX 780 Ti 3GB (876/928/7000 MHz)

  - EVGA GeForce GTX 780 Superclocked ACX 3GB (863/916/6008 MHz; and overclocked to 1032/1085/7348 MHz)

  - AMD Radeon R9 290X 4GB (1000/5000 MHz and overclocked to 1130/5760 MHz)

  - MSI Radeon R9 280X Gaming 3GB (1050/6000 MHz)

- System memory: DDR3 4x8GB G.SKILL TridentX F3-2133C9Q-32GTX (2133 MHz, 9-11-11-31, 1.6 V)

- System disk: SSD 256GB Crucial m4 (SATA 6 Gbit/s, CT256M4SSD2, BIOS v0009)

- Games/software disk: Western Digital VelociRaptor (SATA-2, 300 GB, 10000 RPM, 16 MB cache, NCQ) in a Scythe Quiet Drive 3.5″ enclosure

- Backup disk: Samsung Ecogreen F4 HD204UI (SATA-2, 2 TB, 5400 RPM, 32 MB cache, NCQ)

- Computer case: Antec Twelve Hundred (front panel: three Noiseblocker NB-Multiframe S-Series MF12-S2 fans at 1020 RPM; back panel: two Noiseblocker NB-BlackSilentPRO PL-1 fans at 1020 RPM; top panel: one preinstalled 200mm fan at 400 RPM)

- Control & monitoring panel: Zalman ZM-MFC3

- Power supply: Corsair AX1200i (1200 W), 120mm fan

- Monitor: 27″ Samsung S27A850D (DVI-I, 2560×1440, 60 Hz)

As we are going to check out the performance growth within AMD’s own model range, we will compare the Radeon R9 290X with a Radeon R9 280X (previously known as Radeon HD 7970 GHz Edition) in its version from MSI.

The MSI card’s GPU is pre-overclocked by 50 MHz, but we guess this is a negligible thing for such top-end graphics solutions.

The second opponent we’ve chosen for our Radeon R9 290X is, of course, a GeForce GTX 780. We don’t have any reference versions of that card left, so we take an EVGA GeForce GTX 780 Superclocked ACX 3GB and run it in two modes: at the clock rates of the reference GTX 780 and at the highest clock rates supported by our sample of the EVGA card.

We will also compare the Radeon R9 290X with a GeForce GTX 780 Ti which is currently somewhat more expensive, yet can be viewed as the Radeon’s market opponent. We will use a reference GTX 780 Ti with default clock rates:

In order to lower the dependence of the graphics cards’ performance on the overall platform speed, we overclocked our 32nm six-core CPU to 4.8 GHz by setting its frequency multiplier at x48 and enabling Loadline Calibration. The CPU’s voltage was increased to 1.38 volts in the mainboard’s BIOS:

Hyper-Threading was turned on. We used 32 GB of system memory at 2.133 GHz with timings of 9-11-11-20_CR1 and voltage of 1.6125 volts.

The testbed ran Microsoft Windows 7 Ultimate x64 SP1 with all critical updates installed. We used the following drivers:

- Intel Chipset Drivers – 9.4.4.1006 WHQL dated 21.09.2013

- DirectX End-User Runtimes, dated 30 November 2010

- AMD Catalyst 13.12 WHQL (13.251.0.0) dated 18.12.2013

- GeForce 331.93 Beta dated 27.11.2013

We benchmarked the graphics cards’ performance at two display resolutions: 1920×1080 and 2560×1440 pixels. There were two visual quality modes: “Quality+AF16x” means the default texturing quality in the drivers + 16x anisotropic filtering whereas “Quality+ AF16x+MSAA 4x(8x)” means 16x anisotropic filtering and 4x or 8x antialiasing. We enabled anisotropic filtering and full-screen antialiasing from the game’s menu. If the corresponding options were missing, we changed these settings in the Control Panels of the Catalyst and GeForce drivers. We also disabled Vsync there. There were no other changes in the driver settings.

The graphics cards were tested in two benchmarks and 15 games updated to the latest versions. Batman: Arkham Origins returns to our test programs whereas Battlefield 4 debuts in it:

- 3DMark (2013) (DirectX 9/11) – version 1.2.250.0; Cloud Gate, Fire Strike and Fire Strike Extreme scenes.

- Unigine Valley Bench (DirectX 11) – version 1.0, maximum visual quality settings, 16x AF and/or 4x MSAA, 1920×1080.

- Total War: SHOGUN 2 – Fall of the Samurai (DirectX 11) – version 1.1.0, integrated benchmark (the Sekigahara battle) with the maximum visual quality settings and 8x MSAA.

- Battlefield 3 (DirectX 11) – version 1.4, Ultra settings, two successive runs of a scripted scene from the beginning of the “Going Hunting” mission (110 seconds long).

- Sniper Elite V2 Benchmark (DirectX 11) – version 1.05, we used Adrenaline Sniper Elite V2 Benchmark Tool v1.0.0.2 BETA with maximum graphics quality settings (“Ultra” profile), Advanced Shadows: HIGH, Ambient Occlusion: ON, Stereo 3D: OFF, Supersampling: OFF, two sequential runs of the test.

- Sleeping Dogs (DirectX 11) – version 1.5, we used Adrenaline Sleeping Dogs Benchmark Tool v1.0.2.1 with maximum image quality settings, Hi-Res Textures pack installed, FPS Limiter and V-Sync disabled, two consecutive runs of the built-in benchmark with quality antialiasing at Normal and Extreme levels.

- Hitman: Absolution (DirectX 11) – version 1.0.447.0, built-in test with Ultra settings, enabled tessellation, FXAA and global lighting.

- Crysis 3 (DirectX 11) – version 1.2.0.1000, maximum visual quality settings, Motion Blur – Medium, lens flares – on, FXAA and MSAA 4x, two consecutive runs of a scripted scene from the beginning of the “Swamp” mission (110 seconds long).

- Tomb Raider (2013) (DirectX 11) – version 1.1.748.0, we used Adrenaline Benchmark Tool, all image quality settings set to “Ultra”, V-Sync disabled, FXAA and 2x SSAA antialiasing enabled, TessFX technology activated, two consecutive runs of the in-game benchmark.

- BioShock Infinite (DirectX 11) – version 1.1.24.21018, we used Adrenaline Action Benchmark Tool with “Ultra” and “Ultra+DOF” quality settings, two consecutive runs of the in-game benchmark.

- Metro: Last Light (DirectX 11) – version 1.0.0.15, we used the built-in benchmark for two consecutive runs of the D6 scene. All image quality and tessellation settings were at “Very High”, Advanced PhysX technology was enabled, we tested with and without SSAA antialiasing.

- GRID 2 (DirectX 11) – version 1.0.85.8679, we used the built-in benchmark, the visual quality settings were all at their maximums, the tests were run with and without MSAA 8x antialiasing with eight cars on the Chicago track.

- Company of Heroes 2 (DirectX 11) – version 3.0.0.11811, two consecutive runs of the integrated benchmark at maximum image quality and physics effects settings.

- Total War: Rome II (DirectX 11) – version 1.8.0 build 8891.481024, Extreme quality, V-Sync disabled, SSAA enabled, two consecutive runs of the integrated benchmark.

- ArmA III (DirectX 11) – version 1.08.0.113494, we used the ArmA3Mark benchmark with the Ultra quality, V-Sync disabled, two consecutive runs of the integrated benchmark.

- Batman: Arkham Origins (DirectX 11) – version 1.0 update 8, Ultra visual quality, V-Sync disabled, all the effects enabled, all DX11 Enhanced features enabled, Hardware Accelerated PhysX = Normal, two consecutive runs of the in-game benchmarks.

- Battlefield 4 (DirectX 11) – version 1.4, Ultra settings, two successive runs of a scripted scene from the beginning of the “Tashgar” mission (110 seconds long).

We publish the bottom frame rate for games that report it. Each test was run twice, the final result being the best of the two if they differed by less than 1%. If we had a larger difference, we reran the test at least once to get repeatable results.
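The selection rule can be sketched in Python as follows. This is only one reading of the procedure: the function names, the cap on reruns and the choice to compare the two most recent runs are assumptions made for illustration.

```python
from typing import Callable


def relative_difference(a: float, b: float) -> float:
    """Difference between two results as a fraction of the larger one."""
    return abs(a - b) / max(a, b)


def settle_result(run_benchmark: Callable[[], float],
                  tolerance: float = 0.01,
                  max_runs: int = 6) -> float:
    """Run a benchmark twice and keep the better result if the two runs
    agree within `tolerance`; otherwise rerun until two consecutive runs agree."""
    results = [run_benchmark(), run_benchmark()]
    while relative_difference(results[-2], results[-1]) >= tolerance:
        if len(results) >= max_runs:
            raise RuntimeError("results did not become repeatable")
        results.append(run_benchmark())
    return max(results[-2], results[-1])


# Usage with made-up frame rates standing in for real benchmark runs
fake_runs = iter([50.0, 55.0, 55.2])
print(settle_result(lambda: next(fake_runs)))
```

In this made-up example the first two runs differ by about 9%, so a third run is taken, and the better of the last two results is kept.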

Performance

The results of the AMD Radeon R9 290X at its default and overclocked frequencies are indicated in red. The color of the Nvidia GeForce GTX 780 Ti is green. The EVGA GeForce GTX 780 Superclocked ACX is turquoise and the MSI Radeon R9 280X Gaming is lilac.

3DMark 2013

AMD-based cards have always been strong in Futuremark’s tests, so it is no wonder that the Radeon R9 290X feels at its ease in the newest 3DMark:

At the default clock rates the Radeon R9 290X is up to 31% faster than the MSI Radeon R9 280X Gaming and is also ahead of the GeForce GTX 780. When overclocked, it can challenge the slightly more expensive GeForce GTX 780 Ti. Of course, the latter can be easily overclocked as well, yet the first results of the Radeon R9 290X look encouraging.

Unigine Valley Bench

This benchmark shows us a different picture:

The Radeon R9 290X still enjoys a hefty advantage over the MSI Radeon R9 280X Gaming – 34 to 36%, yet this is barely enough to compete with the more affordable GeForce GTX 780. When both cards are overclocked, the GTX 780 is superior.

Now let’s turn to our gaming tests.

Total War: SHOGUN 2 – Fall of the Samurai

Except at the most resource-consuming settings (2560×1440 with 8x antialiasing), the Radeon R9 290X proves to be a little faster than the GeForce GTX 780, both working at their standard clock rates. But thanks to the higher frequency potential of the EVGA card, the overclocked GTX 780 is just as good as the overclocked R9 290X. Original Radeon R9 290X cards will probably overclock much better, yet Nvidia has the advantage of time and also an ace up its sleeve in the form of the GeForce GTX 780 Ti.

Note also that the Radeon R9 290X is 24 to 29% ahead of the MSI Radeon R9 280X Gaming here.

Battlefield 3

The standings are overall the same as in the previous game:

We can just note that the Radeon R9 290X enjoys a larger advantage over the MSI Radeon R9 280X Gaming: 28 to 32%.

Sniper Elite V2 Benchmark

As opposed to the two previous tests, it is the Nvidia-based cards that are ahead here.

The Radeon R9 290X is 8 to 11% behind the GeForce GTX 780 at the standard clock rates and only manages to bridge the gap by means of overclocking. The new card from AMD can’t compete with the GeForce GTX 780 Ti in this game and outperforms the MSI Radeon R9 280X Gaming by 22 to 25%. That doesn’t seem to be as large an advantage as we expected from the specs of the new Hawaii XT GPU.

Sleeping Dogs

The AMD Radeon R9 290X is unrivaled in this game:

It is only at 2560×1440 with antialiasing that the GeForce GTX 780 and the GeForce GTX 780 Ti can fight back.

Hitman: Absolution

This game is yet another example of an application where AMD-based cards are traditionally stronger than their Nvidia-based opponents.

The Radeon R9 290X is 14 to 32% faster than the GeForce GTX 780. Its advantage over its predecessor Radeon R9 280X (or Radeon HD 7970 GHz Edition) reaches 46%.

Crysis 3

The Radeon R9 290X is always a little faster than the GeForce GTX 780 when both work at their default clock rates. When overclocked, they are roughly equal to each other and ahead of the GeForce GTX 780 Ti. The MSI Radeon R9 280X Gaming is a typical 29 to 31% behind the new AMD solution.

Tomb Raider (2013)

The gap between the Radeon R9 290X and the MSI Radeon R9 280X Gaming is smaller than average in this game: 22 to 24%.

The Radeon R9 290X is 5 to 11% ahead of the GeForce GTX 780 in every test mode. When overclocked, it even beats the standard GeForce GTX 780 Ti!

BioShock Infinite

This game’s rendering engine is favorable towards AMD GPUs, therefore the Radeon R9 290X is always better than the GeForce GTX 780. It must be noted that the new card from AMD isn’t far ahead when we enable antialiasing, but each of the AMD-based solutions can deliver higher bottom speed, which is important for smooth gameplay.

Metro: Last Light

First, let’s check this game out with the Advanced PhysX option turned on:

The GeForce cards are unrivaled in this case. The Radeon R9 290X is left to compete against the MSI Radeon R9 280X Gaming, beating it by 29 to 44%.

It’s different when we disable Advanced PhysX:

The Radeon R9 290X is superior in every test mode, enjoying a large advantage over the GeForce GTX 780 when SSAA is turned off. When overclocked, it even beats the standard GeForce GTX 780 Ti.

GRID 2

GRID 2 runs faster on the AMD-based solutions. The Radeon R9 290X is 8 to 15% ahead of the GeForce GTX 780 and gets very close to the GTX 780 Ti in some of the test modes. Its advantage over the MSI Radeon R9 280X Gaming amounts to 20-28%.

Company of Heroes 2

The Radeon R9 290X is even more impressive in this game:

It beats the GeForce GTX 780 by 20-36% and leaves the GTX 780 Ti behind, too. Its Tahiti XT-based predecessor is slower by 25 to 39%.

Total War: Rome II

Here is yet another game where the Radeon R9 290X beats the GeForce GTX 780:

The gap isn’t large, yet AMD is superior. The overclocked Radeon R9 290X is comparable to the standard GeForce GTX 780 Ti again.

ArmA III

Top-end graphics cards can only be compared at the most resource-consuming settings in this benchmark. Otherwise, their speed is limited by the overall performance of the platform.

Batman: Arkham Origins

Returning to our testing program after a recent update, the game suggests that the Radeon R9 290X is overall slower than the GeForce GTX 780.

However, we can note that the Radeon R9 290X is just as good as the GeForce GTX 780 when we enable antialiasing. And most gamers are likely to enable it on such top-end graphics cards. The Radeon R9 290X even beats its opponent and the GeForce GTX 780 Ti in the heaviest test mode. It is in this game that we observe the largest gap between the Radeon R9 290X and the Radeon R9 280X. It is as large as 47%!

Battlefield 4

This is the first time we have used Battlefield 4 for our benchmarking, so we want to show you the graphics settings we use (we vary the resolution and the antialiasing mode, of course):

As you can see, we choose the maximum visual quality possible. But you should lower the numbers by 20-25% to estimate the real-life frame rate because the intro scene is not actual gameplay with its numerous explosions and shootouts.
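As a rough illustration of that correction, the Python sketch below turns a benchmark result into an expected gameplay range. The function name is our own and the 80 fps input is a made-up number, not one of our results.

```python
def gameplay_fps_range(benchmark_fps: float,
                       min_cut: float = 0.20,
                       max_cut: float = 0.25) -> tuple:
    """Expected real-life frame rate range after lowering the benchmark
    result by 20-25%."""
    return benchmark_fps * (1 - max_cut), benchmark_fps * (1 - min_cut)


low, high = gameplay_fps_range(80.0)  # 80 fps is a made-up benchmark result
print(f"Expected in gameplay: {low:.0f}-{high:.0f} fps")
```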

Here are the results:

The Radeon R9 290X beats the GeForce GTX 780 once again. The new Radeon is fast indeed and can outperform the standard GeForce GTX 780 Ti when overclocked. Its advantage over the Radeon R9 280X amounts to 26-28% here.

Now we can proceed to our performance summary charts.

Performance Summary Charts

First of all, let’s see how much faster the new single-GPU flagship Radeon R9 290X is in comparison with its predecessor (Radeon HD 7970 GHz Edition) as represented by the MSI Radeon R9 280X Gaming. The latter serves as the baseline:

The average performance growth is 23-26% without antialiasing and 29-31% with antialiasing across all the tests. We can recall the GeForce GTX Titan which was 34-55% faster than the GeForce GTX 680 at the time of its release, but the Titan costs $1000 even now. Moreover, the Gaming card from MSI is pre-overclocked by 50 MHz, which adds 2-3% to the performance of the reference Radeon R9 280X.
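For reference, a summary figure of this kind can be derived from per-game results roughly as shown in the Python sketch below. The frame rates are made up, and the use of a plain arithmetic mean over the games is an assumption for illustration rather than a description of how our charts were built.

```python
from statistics import mean


def gain_percent(card_fps: float, baseline_fps: float) -> float:
    """Advantage of a card over the baseline card in one test, in percent."""
    return (card_fps - baseline_fps) * 100.0 / baseline_fps


def average_gain_percent(card: dict, baseline: dict) -> float:
    """Arithmetic mean of the per-game advantages."""
    return mean(gain_percent(card[game], baseline[game]) for game in baseline)


# Made-up frame rates for two hypothetical games
baseline_results = {"Game A": 50.0, "Game B": 40.0}
card_results = {"Game A": 65.0, "Game B": 48.0}

print(average_gain_percent(card_results, baseline_results))
```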

The second pair of our summary charts helps compare the Radeon R9 290X and the GeForce GTX 780 at their default clock rates, the latter card serving as the baseline.

The GeForce GTX 780 wins in Sniper Elite V2, Metro: Last Light (with Advanced PhysX) and in Batman: Arkham Origins (without antialiasing). In the rest of the games the Radeon R9 290X is at least as good as the GeForce GTX 780 and even beats the latter in Sleeping Dogs, Hitman: Absolution, GRID 2, Company of Heroes 2, Total War: Rome II and Battlefield 4. The average advantage of the Radeon R9 290X across all the games is 7-8% without antialiasing and 6-10% with antialiasing.

As we mentioned in the Introduction, today even original GeForce GTX 780s with high-efficiency coolers and factory overclocking are considerably cheaper than reference Radeon R9 290X cards, so the new card should also be compared with the slightly more expensive GeForce GTX 780 Ti. Here are the diagrams:

Nvidia wins this round. The Radeon R9 290X is only ahead in Hitman: Absolution, Company of Heroes 2, and in some test modes of Sleeping Dogs and Batman: Arkham Origins. In the rest of the games the GeForce GTX 780 Ti remains the fastest single-GPU gaming card.

The last pair of diagrams indicate the performance scalability of the Radeon R9 290X. We overclocked our sample’s GPU and memory by 130 MHz (13%) and 760 MHz (15.2%) and here are the benefits:

So the overclocked Radeon R9 290X is 9-10% faster at 1920×1080 and 10-11% faster at 2560×1440. That’s normal scalability for a top-end graphics card.
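The relation between the clock increase and the performance gain can be checked with a few lines of Python. The helper names are ours, and the efficiency ratio is simply our way of expressing the scalability; it compares the frame rate gain with the GPU clock gain only.

```python
def percent_increase(base: float, added: float) -> float:
    """Increase expressed as a percentage of the base value."""
    return added * 100.0 / base


def scaling_efficiency(performance_gain_pct: float, clock_gain_pct: float) -> float:
    """Fraction of the clock increase that shows up as extra performance."""
    return performance_gain_pct / clock_gain_pct


gpu_gain = percent_increase(1000, 130)     # GPU: 1000 -> 1130 MHz
memory_gain = percent_increase(5000, 760)  # memory: 5000 -> 5760 MHz
print(f"GPU +{gpu_gain:.1f}%, memory +{memory_gain:.1f}%")

for fps_gain in (9, 10, 11):
    ratio = scaling_efficiency(fps_gain, gpu_gain)
    print(f"{fps_gain}% faster -> {ratio:.2f} of the GPU clock increase")
```

By this measure, roughly 70 to 85% of the GPU clock increase turns into extra frame rate.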

Power Consumption

We measured the power consumption of computer systems with different graphics cards using a multifunctional panel Zalman ZM-MFC3 which can report how much power a computer (the monitor not included) draws from a wall socket. There were two test modes: 2D (editing documents in Microsoft Word and web surfing) and 3D (the intro scene of the Swamp level from Crysis 3 running four times in a loop at 2560×1440 with maximum visual quality settings but without MSAA). Here are the results:

The peak power consumption of the Radeon R9 290X configuration is 69 watts (14.4%) higher than that of the MSI Radeon R9 280X Gaming and only 20 watts (3.8%) higher than that of the standard GeForce GTX 780. The GeForce GTX 780 Ti configuration needs about as much power as the AMD Radeon R9 290X. When the latter is overclocked, the computer’s power draw rises to 574 watts (by 4.6%). Any of these configurations can be powered by a 600-watt PSU.
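Since mostly differences are quoted here, the approximate absolute figures can be recovered from them. The Python sketch below does that; the resulting wattages are back-calculated estimates based on the rounded percentages, not separate measurements, and the function name is our own.

```python
def value_before_increase(value_after: float, increase_percent: float) -> float:
    """Original value, given the value after a percentage increase."""
    return value_after / (1 + increase_percent / 100.0)


r9_290x = value_before_increase(574, 4.6)  # overclocked system: 574 W, 4.6% more
r9_280x = r9_290x - 69                     # the R9 290X system draws 69 W more
gtx_780 = r9_290x - 20                     # the R9 290X system draws 20 W more

print(f"R9 290X system: ~{r9_290x:.0f} W")
print(f"R9 280X system: ~{r9_280x:.0f} W")
print(f"GTX 780 system: ~{gtx_780:.0f} W")

# These are wall-socket readings, so the PSU's actual output is somewhat lower.
print(f"Overclocked draw relative to 600 W: {574 / 600:.0%}")
```

The estimates come out at about 549, 480 and 529 watts, which agrees with the quoted 14.4% and 3.8% differences.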

Conclusion

At the time of its announcement in October 2013 the AMD Radeon R9 290X made a worthy opponent to the Nvidia GeForce GTX 780 despite all the problems with noise and overheating. However, Nvidia was quick to react by cutting the price of its single-GPU flagship (we don’t count the Titan, and the GTX 780 Ti hadn’t been released yet). Today, in early 2014, the reference Radeon R9 290X costs more than original GeForce GTX 780s. Even though it is faster on average, the small difference in performance doesn’t make up for the higher noise level and potential GPU frequency drops due to overheating.

If you want to buy a Radeon R9 290X, you should wait for original versions from first-tier brands as all of them have already announced their Hawaii XT based products. If priced lower than the GeForce GTX 780 Ti, they will be quite an attractive buy. We’ll test one such product very soon. We’re also preparing a review of the AMD Radeon R9 290 (without the “X”), so stay tuned to us in the new year!

AMD Radeon R9 review: 280X, 285, 290 & 290X

OVERVIEW

We’ve tested four AMD chipsets for gaming enthusiasts. First up is the Radeon R9 280X. This has a Tahiti XTL or XT2 core, depending on the manufacturer, with 2,048 GPU cores and stock 850MHz core and 1,000MHz boost speeds. The Tahiti XTL and XT2 chips both have the same number of GPU cores and the same clock speeds, but the XT2 variant apparently has some power efficiency improvements.

The Radeon R9 285 cards are based on AMD’s newest Tonga PRO GPUs, and are the first cards to support AMD’s Graphics Core Next (GCN) 1.2 architecture. According to Wikipedia, this brings «improved tessellation performance, lossless delta colour compression in order to reduce memory bandwidth usage, [and] an updated and more efficient instruction set». The R9 285 cards we’ve tested all have 2GB of GDDR5 RAM, compared to the 3GB in the mid-range R9 280, and have the same 1,792 GPU cores. AMD’s stock R9 285 speed is 918MHz.

The R9 290 is significantly more expensive than the R9 285, but is a big step up in terms of specification. You get 2,560 GPU cores, 4GB of GDDR5 memory and a 947MHz core clock speed. Finally, there’s the most powerful card we’ve tested: the R9 290X. This has a huge 2,816 GPU cores running at 1,080MHz, and is a rival for the top-end Nvidia GTX 970 cards.

| Card | Rating | Award | Price inc VAT | Supplier |
|---|---|---|---|---|
| XFX Radeon R9 280X Double Dissipation Black Edition | 5 | Best Buy | £186 | |
| Asus Strix R9 285 | 3 | | £207 | www.scan.co.uk |
| Club3D Radeon R9 285 royalQueen | 3 | | £187 | www.morecomputers.com |
| Sapphire R9 285 2GB GDDR5 ITX Compact Edition | 4 | | £185 | |
| XFX AMD Radeon R9 290 Black Double Dissipation Edition | 5 | Recommended | £242 | www.ebuyer.com |
| Sapphire VAPOR-X R9 290X 4GB GDDR5 TRI-X OC | 4 | | £273 | www.dabs.com |

INDIVIDUAL CARDS

Radeon R9 280X

The XFX Radeon R9 280X is a very big card; at 295mm long it’s only slightly shorter than the huge Sapphire R9 290X, so measure your case carefully. The card needs both six-pin and eight-pin PCI Express power connectors, so you’ll need a decent power supply. The R9 280X has one dual-link and one single-link DVI port, HDMI and twin Mini DisplayPort sockets, so you may need a Mini DisplayPort to DisplayPort adaptor (around £10 from www.scan.co.uk) to plug into your DisplayPort monitor.

The R9 280X was seriously impressive in our tests. It could play Dirt Showdown smoothly at 3,840×2,160 at almost 42fps, had no problem with Tomb Raider at 2,560×1,440 with Ultra quality and demanding SSAA switched on, and could just about play Tomb Raider smoothly at 3,840×2,160 using the FXAA rather than SSAA anti-aliasing technique, with a 30.5fps average. In the highly challenging Metro: Last Light Redux benchmark, we saw a playable 35.3fps average at 1,920×1,080 with Very High quality and SSAA enabled. Disabling SSAA at this resolution gave us a silky-smooth 59.8fps, and even at 2,560×1,440 we still saw a playable 39.8fps.

It may be over a year old, but the AMD R9 280X is still an impressive card, and is very good value for its performance. It wins a Best Buy award.

Radeon R9 285

We have three cards based on AMD’s newer R9 285 chipset. The Asus Strix R9 285 is the largest model, at 269mm long, and also the tallest, at 126mm high. This is due to a huge twin-fan cooler, which is almost silent when the graphics card is running at idle, but builds to a low rush under heavy load. The Club3D R9 285 royalQueen is smaller, at 218mm long, and also has twin cooling fans. However, this is a much noisier card than the Asus model under load when the twin fans spin up. Both the Asus and Club3D R9 285 cards have the standard twin DVI, HDMI and DisplayPort outputs.

The Sapphire R9 285 2GB GDDR5 ITX Compact Edition is something a bit different, as it’s a single-fan design in a short 172mm-long package. This is amazingly short for a card of this class, meaning it will fit in even compact Mini-ITX cases, as its name suggests. The Sapphire card has two Mini DisplayPort outputs, so you may need an adaptor for your monitor (see the XFX Radeon R9 280X review above). The Sapphire R9 285 also requires a single 8-pin PCI Express power plug, compared to the twin 6-pin PCI Express plugs on the other two R9 285-based cards. Despite its single fan, the card is very quiet indeed, even under load.

All three cards are mildly overclocked compared to AMD’s reference R9 285 speed. The Asus card runs at 954MHz, the Club3D model at 945MHz and the Sapphire card 928MHz. The cards have broadly similar performance in all three game tests; they produced very high, perfectly smooth frame rates in Dirt Showdown and Tomb Raider at 1,920×1,080 with Ultra detail, and maintained a just-playable average of 29fps (28fps for the Sapphire card) in Metro: Last Light Redux. This is a bit close for comfort, so you should turn off SSAA to get properly smooth frame rates in this challenging title.

All three cards managed around a playable 34fps in Dirt Showdown at 3,840×2,160 with Ultra detail, and in Tomb Raider at 2,560×1,440 we saw a playable 34fps from the Club3D and Sapphire cards and 35fps from the Asus model. To get a playable 2,560×1,440 frame rate in Metro: Last Light Redux we just had to turn off SSAA, whereupon we saw smooth 45fps averages from all three cards.

Among the R9 285 cards, the Sapphire model is our favourite, as it has similar performance to the other models but is smaller and quieter. The MSI GTX 960 Gaming 2G is cheaper and just as quick, so is a credible Nvidia-based rival, but our pick at this price is the XFX Radeon R9 280X, which is far quicker than all three Radeon R9 285 models.

Radeon R9 290

The last two AMD-based cards are very much in the hardcore gamer price range. The £242 XFX AMD Radeon R9 290 Black Double Dissipation Edition is overclocked to 980MHz, and the low roar its twin fans make under load isn’t intrusive.

The card is very quick indeed, with a smooth 44.1fps in Metro: Last Light Redux at 1,920×1,080, with Very High detail and SSAA enabled. This is very close to the scores managed by the more expensive Nvidia GTX 970-based cards. The card was quicker than the GTX 970 models in the Tomb Raider benchmark, too, with a huge 84.3fps.

48.6fps in Dirt Showdown at 3,840×2,160 shows the card isn’t troubled by this benchmark, and we even saw a smooth 34fps average in Tomb Raider at this huge resolution once we’d swapped the resource-hungry SSAA for the lighter FXAA anti-aliasing technique. In the Metro benchmark, leaving quality on Very High but turning off SSAA led to a smooth 49fps at 2,560×1,440, and we saw a just-playable 33fps frame rate at 3,840×2,160 by turning detail down to High.

The XFX AMD Radeon R9 290 Black Double Dissipation Edition is expensive, but incredibly powerful for the money. The Nvidia GTX 970 cards can’t match it for bang for buck, and it makes the Sapphire Vapor-X R9 290X look like overkill. If you’re going to play games at up to 2,560×1,440, the much cheaper XFX Radeon R9 280X is a better buy, but if you want to dabble with 4K gaming the R9 290 is a great way to do it.

Radeon R9 290X

At the top of the AMD tree is the Sapphire VAPOR-X R9 290X 4GB GDDR5 TRI-X OC. This card’s grandiose title is matched by its appearance. It’s huge, at 300mm long, heavy, and has three big fans and fancy metallic turquoise paint. It also needs two 8-pin PCI Express power connectors.

The card is very quiet at idle, as the two outer fans power down completely. It makes a low roar under load, but the noise’s low pitch makes it unobtrusive. This is easily the fastest card we tested, on either the AMD or Nvidia side. The 1,920×1,080 Dirt Showdown and Tomb Raider benchmarks were dispatched without a problem, and 49fps in Metro: Last Light Redux with Very High detail and SSAA is a couple of frames per second better than the more expensive Nvidia GTX 970-based cards managed.

Dirt Showdown and Tomb Raider weren’t a problem at 2,560×1,440 either, and turning off SSAA in Metro gave us 54fps at this resolution, even with the game set to Very High detail. We also saw a playable 37fps at 3,840×2,160 once we’d dropped detail to High.

The Sapphire VAPOR-X R9 290X 4GB GDDR5 TRI-X OC is a highly impressive card, which manages to be faster than the Nvidia GTX 970-based models despite being slightly cheaper. However, the XFX AMD Radeon R9 290 Black Double Dissipation Edition does most of what the R9 290X can do at a lower price, so is a better buy overall.
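The value argument can be made concrete with a rough frames-per-pound figure. The sketch below is only an illustration, not a metric used in the review: it takes the two prices and the 1,920×1,080 Metro: Last Light Redux results quoted in this article, and the dictionary layout and the `fps_per_100_gbp` helper are hypothetical names chosen for the example.

```python
# Prices and 1,920x1,080 Metro: Last Light Redux results (Very High, SSAA on)
# as quoted in the review. The "fps per 100 GBP" metric is an assumption made
# for this illustration, not something the reviewers calculated.
cards = {
    "XFX R9 290": {"price_gbp": 242, "metro_fps": 44.1},
    "Sapphire R9 290X": {"price_gbp": 273, "metro_fps": 49.0},
}


def fps_per_100_gbp(card):
    """Average frames per second obtained for every 100 GBP spent."""
    return card["metro_fps"] / card["price_gbp"] * 100


for name, card in cards.items():
    print(f"{name}: {fps_per_100_gbp(card):.1f} fps per 100 GBP")
```

On these figures the R9 290 comes out slightly ahead per pound, which is consistent with the verdict above, although a single benchmark is a narrow basis for comparison.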

| Award | Best Buy | Recommended | ||||
|---|---|---|---|---|---|---|
| Manufacturer | XFX | Asus | Club3D | Sapphire | XFX | Sapphire |
| Model | Radeon R9 280X Double Dissipation Black Edition | Strix R9 285 | Radeon R9 285 royalQueen | R9 285 2GB GDDR5 ITX Compact Edition | AMD Radeon R9 290 Black Double Dissipation Edition | VAPOR-X R9 290X 4GB GDDR5 TRI-X OC |
| Rating | 5 | 3 | 3 | 4 | 5 | 4 |
| Hardware | ||||||
| Slots taken up | 2 | 2 | 2 | 2 | 2 | 3 |
| GPU | AMD Radeon R9 280X | AMD Radeon R9 285 | AMD Radeon R9 285 | AMD Radeon R9 285 | AMD Radeon R9 290 | AMD Radeon R9 290X |
| GPU cores | 2,048 | 1,792 | 1,792 | 1,792 | 2,560 | 2,816 |
| GPU clock speed | 1,080MHz | 954MHz | 945MHz | 928MHz | 980MHz | 1,080MHz |
| Memory | 3GB GDDR5 | 2GB GDDR5 | 2GB GDDR5 | 2GB GDDR5 | 4GB GDDR5 | 4GB GDDR5 |
| Memory interface | 384-bit | 256-bit | 256-bit | 256-bit | 512-bit | 512-bit |
| Max memory bandwidth | | 176GB/s | 176GB/s | 176GB/s | 320GB/s | 361GB/s |
| Memory speed | 1,550MHz | 1,375MHz | 1,375MHz | 1,375MHz | 1,250MHz | 1,410MHz |
| Graphics card length | 295mm | 269mm | 218mm | 172mm | 283mm | 300mm |
| DVI outputs | 2 | 2 | 2 | 1 | 2 | 2 |
| D-sub outputs | 0 | 0 | 0 | 0 | 0 | 0 |
| HDMI outputs | 1 | 1 | 1 | 1 | 1 | 1 |
| Mini HDMI outputs | 0 | 0 | 0 | 0 | 0 | 0 |
| DisplayPort outputs | 0 | 1 | 1 | 0 | 1 | 1 |
| Mini DisplayPort outputs | 2 | 0 | 0 | 2 | 0 | 0 |
| Power leads required | 1x 6-pin, 1x 8-pin PCI Express | 2x 6-pin PCI Express | 2x 6-pin PCI Express | 1x 8-pin PCI Express | 1x 6-pin, 1x 8-pin PCI Express | 2x 8-pin PCI Express |
| Accessories | Mini DisplayPort to DisplayPort adaptor, 2x Molex to 8-pin PCI Express adaptors | 2x Molex to 6-pin PCI Express adaptors | None | DVI to VGA adaptor, DisplayPort cable, Mini DisplayPort to DisplayPort adaptor, 2x 6-pin to 8-pin PCI Express adaptors | 1x twin Molex to 6-pin PCI Express adaptor, 1x twin 6-pin to 8-pin PCI Express adaptor | 2x twin Molex to 8-pin PCI Express adaptors |
| Buying information | ||||||
| Price including VAT | £186 | £207 | £187 | £185 | £242 | £273 |
| Warranty | Two years RTB | Two years RTB | Two years RTB | Two years RTB | Two years RTB | Two years RTB |
| Supplier | www.scan.co.uk | www.morecomputers.com | www.ebuyer.com | www.ebuyer.com | www.dabs.com | |
| Details | uk.msi.com | www.asus.com | www.club-3d.com | www.sapphiretech.com | www.xfxforce.com | www.sapphiretech.com |
| Part code | R9-280X-TDBD | STRIX-R9285-DC2OC-2GD5 | CGAX-R92856 | 11235-06-20G | R9-290A-EDBD | 11227-04-40G |

AMD Radeon R9 290X video card review and testing GECID.com. Page 1

11/22/2013

It’s no secret that the announcement of AMD’s new Volcanic Islands family of graphics accelerators, with rather low recommended prices for the flagship models, surprised not only users but also AMD’s main competitor. NVIDIA’s answer to the $549 price of the AMD Radeon R9 290X, the subject of this review, was to cut the prices of two of its three top graphics cards. At the end of October 2013, the recommended price of the NVIDIA GeForce GTX 780 was reduced from $649 to $499, and that of the NVIDIA GeForce GTX 770 from $399 to $329. Only the flagship, the NVIDIA GeForce GTX TITAN, held firm at $999.

Let’s see what exactly made the competitor react so quickly to the release of the AMD Radeon R9 290X. After all, the announcement of a flagship video card priced at $549 is remarkable in itself, but without a matching level of performance it could not have stirred up the market so much.

The new card’s graphics core, called AMD Hawaii XT, is built on a 28 nm process using the AMD Graphics Core Next microarchitecture and consists of four unified blocks called Shader Engines. Compared with the AMD Radeon HD 7970, the L2 cache has grown from 384 KB to 1 MB and the L1-L2 bus bandwidth from 700 GB/s to 1 TB/s.

In turn, each Shader Engine contains, in addition to a separate geometry processing unit, 11 compute units and 16 raster operation units (ROPs).

Each compute unit consists of 64 stream processors and 4 texture units.
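The whole-GPU unit counts follow directly from this hierarchy. A minimal sketch of the arithmetic, using only the per-block figures quoted above; the constant and function names are illustrative, and the 16 rasterization units per Shader Engine are assumed to be the ROPs listed in the comparison table:

```python
# Per-block figures quoted in the text for Hawaii XT.
SHADER_ENGINES = 4
COMPUTE_UNITS_PER_ENGINE = 11
ROPS_PER_ENGINE = 16            # assumed to be the "rasterization units"
STREAM_PROCESSORS_PER_CU = 64
TEXTURE_UNITS_PER_CU = 4


def hawaii_xt_totals():
    """Multiply the per-block counts up to whole-GPU totals."""
    compute_units = SHADER_ENGINES * COMPUTE_UNITS_PER_ENGINE
    return {
        "compute_units": compute_units,
        "stream_processors": compute_units * STREAM_PROCESSORS_PER_CU,
        "texture_units": compute_units * TEXTURE_UNITS_PER_CU,
        "rops": SHADER_ENGINES * ROPS_PER_ENGINE,
    }


print(hawaii_xt_totals())
```

The results (44 compute units, 2816 stream processors, 176 texture units and 64 ROPs) agree with the figures given for the R9 290X in the comparison table.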

Another advantage of the AMD Radeon R9 290X is the wider 512-bit memory bus. This increases throughput by 20% compared to the AMD Radeon HD 7970: with an effective video memory frequency of 5000 MHz, the card can now move an impressive 320.0 GB of data per second.
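The 320 GB/s figure can be checked from the bus width and the effective memory frequency alone. A minimal sketch, assuming the usual peak-bandwidth formula (bytes per transfer multiplied by transfers per second); the function name is illustrative:

```python
def memory_bandwidth_gb_s(bus_width_bits, effective_mhz):
    """Peak memory bandwidth: bytes per transfer x transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = effective_mhz * 1e6
    return bytes_per_transfer * transfers_per_second / 1e9


# R9 290X: 512-bit bus at an effective 5000 MHz.
print(memory_bandwidth_gb_s(512, 5000))
```

This is a theoretical peak; real-world throughput is lower.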

As a result, the specifications of the new card compare with those of other models as follows:

| | AMD Radeon R9 290X | AMD Radeon R9 280X | AMD Radeon HD 7970 GHz Edition |
|---|---|---|---|
| Codename | Hawaii XT | Tahiti XTL | Tahiti XT2 |
| Number of stream processors | 2816 | 2048 | 2048 |
| Texture blocks | 176 | 128 | 128 |
| ROPs | 64 | 32 | 32 |
| GPU frequency, MHz | 1000 | 1000 | 1050 |
| Effective video memory frequency, MHz | 5000 | 6000 | 6000 |
| Memory size, MB | 4096 | 3072 | 3072 |
| Video memory interface, bit | 512 | 384 | 384 |

Features of the new flagship

The AMD Radeon R9 290X has received an updated mechanism for controlling power consumption and overclocking, AMD PowerTune.

The card uses a special VID interface with a dedicated telemetry channel, which is designed to cut the time needed to process incoming data and so allow more efficient voltage control in the range from 0 to 1.55 V. The automatic voltage adjustment step is only 6.25 mV, which allows the performance and TDP of the video card to be regulated more precisely.
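To give a sense of how fine this granularity is, the range and step size quoted above can be turned into a step count. The sketch below is illustrative only: the names are hypothetical, and rounding a requested voltage down to the nearest step is an assumption, since the text does not describe how PowerTune rounds or encodes voltages.

```python
# Figures quoted in the text, kept in microvolts so the arithmetic stays exact.
V_MAX_UV = 1_550_000   # 1.55 V upper bound of the control range
V_STEP_UV = 6_250      # 6.25 mV adjustment step


def step_count():
    """Number of 6.25 mV steps between 0 V and 1.55 V."""
    return V_MAX_UV // V_STEP_UV


def quantize_uv(requested_uv):
    """Clamp a requested voltage to the range and snap it down to a step.

    Rounding down is an assumption made for this sketch.
    """
    clamped = max(0, min(requested_uv, V_MAX_UV))
    return (clamped // V_STEP_UV) * V_STEP_UV


print(step_count())             # steps across the whole range
print(quantize_uv(1_203_000))   # a 1.203 V request snapped to the step grid
```

The range divides into 248 steps, so a request for 1.203 V would land on 1.200 V under the round-down assumption.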

The AMD Radeon R9 290X is also notable for having no connectors for AMD CrossFireX bridges, which makes it the first flagship with a purely software implementation of this function. According to AMD, the bandwidth of the PCI Express 3.0 bus is more than enough to keep the frame buffers in sync. Even over a PCI Express 2.0 connection, there should be no significant drop in performance. In the future, we will definitely test the AMD Radeon R9 290X in this mode, but for now we can only take the manufacturer’s word for it.
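A back-of-the-envelope estimate suggests why AMD’s claim is plausible. The sketch below compares the theoretical one-direction bandwidth of an x16 link with the traffic needed to copy finished frames across the bus. The link rates and encodings are the standard PCI Express figures; the workload (every 3840 x 2160 frame copied at 60 frames per second with 4 bytes per pixel) is a hypothetical worst case chosen for illustration, not a description of how CrossFireX actually moves data.

```python
def pcie_x16_gb_s(gigatransfers_per_s, payload_bits, encoded_bits, lanes=16):
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    return gigatransfers_per_s * payload_bits / encoded_bits * lanes / 8


def frame_traffic_gb_s(width, height, frames_per_s, bytes_per_pixel=4):
    """Bandwidth needed to copy finished frames across the bus."""
    return width * height * bytes_per_pixel * frames_per_s / 1e9


pcie2 = pcie_x16_gb_s(5.0, 8, 10)      # PCIe 2.0: 5 GT/s, 8b/10b encoding
pcie3 = pcie_x16_gb_s(8.0, 128, 130)   # PCIe 3.0: 8 GT/s, 128b/130b encoding
# Hypothetical workload: 3840x2160, 32-bit colour, 60 frames copied per second.
needed = frame_traffic_gb_s(3840, 2160, 60)

print(f"PCIe 2.0 x16: {pcie2:.2f} GB/s")
print(f"PCIe 3.0 x16: {pcie3:.2f} GB/s")
print(f"4K frames at 60 fps: {needed:.2f} GB/s")
```

Under these assumptions the frame traffic is roughly 2 GB/s, well below the 8 GB/s of a PCI Express 2.0 x16 link, although protocol overhead and other bus traffic are ignored here.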

An interesting feature is support for the VESA DisplayID v1.3 standard, which lets displays with resolutions up to 4K (Ultra HD) be connected and configured in plug-and-play mode without user intervention, greatly simplifying setup.

Thanks to its TrueAudio DSP, the AMD Radeon R9 290X graphics card can output processed sound (processed by the developer or automatically using the built-in middleware libraries) not only via the HDMI interface, but also to any other audio device connected via USB or a 3.5mm audio jack.

Naturally, the card supports the DirectX 11.2 and AMD Mantle APIs, which we will not dwell on here, since their features are covered in our article on the announcement of the AMD Volcanic Islands family.

Now let’s move from theory to practice and examine the reference AMD Radeon R9 290X video card in more detail.

| Model | AMD Radeon R9 290X |
|---|---|
| Graphics core | AMD Hawaii XT |
| Number of universal shader processors | 2816 |
| Supported APIs and technologies | DirectX 11.2, OpenGL 4.3, AMD Mantle, AMD Eyefinity, AMD App Acceleration, AMD HD3D, AMD CrossFireX, AMD PowerPlay, AMD PowerTune, AMD ZeroCore, AMD TrueAudio |
| Graphics core frequency, MHz | 1000 |
| Memory frequency (effective), MHz | 1250 (5000) |
| Memory capacity, GB | 4 |
| Memory type | GDDR5 |
| Memory bus width, bit | 512 |
| Memory bandwidth, GB/s | 320.0 |
| Bus type | PCI Express 3.0 x16 |
| Maximum resolution | Digital: up to 4096 x 2160; analog: up to 2048 x 1536 |
| Image output interfaces | 2 x DVI-D, 1 x HDMI, 1 x DisplayPort |
| Support for HDCP and HD video decoding | Yes |
| Minimum power supply unit, W | 750 |
| Dimensions from the official website (measured in our test lab), mm | Not specified (290 x 112) |
| Drivers | Fresh drivers can be downloaded from the GPU manufacturer’s website |
| Manufacturer website | AMD |

Appearance and element base

The new card is built on a completely new black printed circuit board. As you can see, it cannot boast compact dimensions, which is hardly surprising for a flagship solution. All sixteen memory chips are located on the front side of the PCB, soldered around the graphics core.

The AMD Radeon R9 290X uses a power delivery scheme familiar from the AMD Radeon HD 7970, with seven phases: five for the GPU and one each for the video memory chips and the PLL. As for the components, we note the solid capacitors, ferrite-core chokes and metal-cased field-effect transistors.