Overclocking the GeForce 8800 Series


Introduction

Overclocking can take on many forms, and the practice can range from minor product improvements to a re-engineering project that completely alters the product. In this article, I will concentrate my efforts on achieving the most gain with the least amount of effort.

Some industry voices have called overclocking a hobby, while others have compared it to product misuse. However, I believe that if you are reading this article, you are probably one of the many computer enthusiasts who believe it is perfectly acceptable to get something more out of a product without it costing more money. When I think about it, everyone enjoys getting something for nothing; it’s human nature.

Additionally, it is human nature to blame someone else if something goes wrong. This is where I warn you, the reader of this article, that neither I nor Bjorn3d.com recommend that you overclock your video card. This article explains how I conducted these experiments on my own property. Bjorn3d.com and the author of this article will not be responsible for damages or injury resulting from experiments you choose to conduct on your own property. If you read beyond this point, you are accepting responsibility for your own actions and hold Olin Coles and the staff of Bjorn3d.com harmless.

For this project I have selected the FOXCONN NVIDIA GeForce 8800 GTS as my test subject, which I reviewed here at Bjorn3D back in November 2006. Presently, the 640MB version of the GeForce 8800 GTS is the second-best video card available on the market. Gamers and computer enthusiasts alike have already speculated on how the GTS could be made to perform at the same level as the GeForce 8800 GTX with some tweaking. Unfortunately, this just isn’t possible. What is possible, though, is taking a great product, making it even better, and doing it all for free.


Test Subject: FOXCONN 640MB GeForce 8800 GTS

By default, the FOXCONN 8800 GTS operates with a 575MHz G80 GPU core clock speed, a 1188MHz shader clock, and a 900MHz (1800MHz effective) GDDR3 RAM speed. I will use the free overclocking utility ATITool v0.26 to search out the best clock speeds and to simulate heavy graphics loading to establish stability. I will then use NiBiTor v3.2, another free tool, to create a custom flashable video BIOS. After creating the new custom video BIOS with NiBiTor, I will use yet another free application, nvFlash, to program it onto the GeForce 8800 GTS.

ATITool v0.26

Sure, the name says “ATI” Tool, but the author has made this a great tool for both ATI and NVIDIA products for several years now. I have personally used many different overclocking tools in the past, but this one has proven to be the best for all my needs. After a straightforward installation and reboot (to complete the installation of the driver-level service), I open ATITool and am confronted by a lot of options. Try not to be overwhelmed, even though this many options could strike fear into the hearts of the inexperienced.


Getting Started

The first screen displayed will be the only screen that is really needed. While memory clock adjustments are copied across all three performance levels (2D, 3D low power, and 3D performance), the software lets the user set the GPU core clock individually for each level. Initially, the default values are saved in the profile named “default”, but as you make changes you may save and delete profiles as needed. For this project, I kept raising my speeds and saving them into a profile named “MAX”.

ATITool: Artifacts Indicate Unstable Settings

To begin the overclocking process, I start by raising the temperature of the GPU core, using the “Show 3D View” button to display a rotating fuzzy cube. It is critical that the video card reach its highest operating temperature before overclocking, because settings found on a cold card may prove unstable under gaming conditions. Once the card reaches its loaded operating temperature, I switch to the “Scan for Artifacts” view by pressing that button. Although I could have used the “Find Max Core & Mem” buttons to have ATITool work out the best settings automatically, I prefer to test each incremental improvement manually.
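Deciding when the card has actually reached its loaded temperature comes down to waiting for the readings to stop climbing. The sketch below is a hypothetical illustration, not a feature of ATITool: it assumes you already have a list of GPU temperature samples (in °C) from some monitoring source, and the window size and 1°C tolerance are arbitrary example values.

```python
from collections.abc import Sequence


def has_plateaued(samples: Sequence[float], window: int = 5, tolerance: float = 1.0) -> bool:
    """Hypothetical warm-up check: True once the last `window` temperature
    samples stay within `tolerance` degrees of each other, i.e. the card
    under load has stopped heating up and testing can begin."""
    if len(samples) < window:
        return False  # not enough readings yet to judge
    recent = samples[-window:]
    return max(recent) - min(recent) <= tolerance

# Example: keep the 3D view running and poll until has_plateaued(readings) is True.
```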


Find the Best Speeds

Experience has taught me that the memory is the best starting point, since overclocking it has a very small impact on operating temperatures compared to overclocking the GPU. I have also learned that clock speed improvements can be made in larger steps on the RAM (10MHz steps) than on the GPU (3MHz steps). Adjust each clock in small increments, alternating between the two. Do not find the maximum RAM clock first and then set out to find the best GPU clock; this will skew the results at either end.
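The alternating approach above can be sketched as a simple search loop. This is a hypothetical illustration, not ATITool’s own algorithm: `is_stable` is a placeholder for whatever stability check you actually run (ATITool’s artifact scan, a round of gaming), and each candidate step is kept only if it passes. The 10MHz memory and 3MHz core step sizes come from the text; everything else is an assumption.

```python
from typing import Callable

MEM_STEP_MHZ = 10   # memory step size from the text
CORE_STEP_MHZ = 3   # GPU core step size from the text


def alternating_search(core: int, mem: int,
                       is_stable: Callable[[int, int], bool]) -> tuple[int, int]:
    """Raise memory and core clocks one small step at a time, alternating
    between them, and keep a step only if the new combination is stable.
    `is_stable(core, mem)` is a hypothetical stand-in for a real test."""
    mem_ok, core_ok = True, True
    while mem_ok or core_ok:
        if mem_ok:
            if is_stable(core, mem + MEM_STEP_MHZ):
                mem += MEM_STEP_MHZ
            else:
                mem_ok = False  # memory has hit its limit at this core clock
        if core_ok:
            if is_stable(core + CORE_STEP_MHZ, mem):
                core += CORE_STEP_MHZ
            else:
                core_ok = False  # core has hit its limit at this memory clock
    return core, mem

# Example: alternating_search(575, 900, my_stability_test)
```

Because every step is tested with the other clock already raised, the loop never maxes out one clock in isolation, which is the trap the paragraph above warns about.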

In my testing, I found that this particular card could operate at a maximum RAM clock speed of 1060MHz (2120MHz effective) with the default GPU core clock. Alternatively, the maximum GPU clock could be raised up to 618MHz while maintaining the default RAM clock speed. However, the best combination of the two yielded a stable 600MHz GPU core clock (25MHz improvement) with a final RAM clock speed of 1030MHz (130MHz improvement). These settings were saved to the profile I named “MAX”, and tested for ten minutes using the “Scan for Artifacts” function. After stability was successfully tested, I played some of my favorite video games for an hour to confirm real-world stability. Now that I have my numbers, it is time to program them into the card.

Programming

Once the maximum stable speeds for both GPU and RAM have been found and tested, there is a choice ahead: either continue using ATITool to maintain these speeds, or flash them onto the video card’s BIOS and make them permanent. Since I have already tested my overclocking results with both artificial loading and real-world usage, it is now safe enough for me to modify the video card BIOS file with my new overclocked settings, so that the FOXCONN 8800 GTS operates with enhanced performance without additional software. This effectively makes the card behave like a factory-overclocked version.


Unfortunately, part of this process requires booting the system into MS-DOS from a 3.5″ floppy disk drive, which I normally don’t have installed in my computer because it is considered obsolete legacy hardware. USB flash drives and recordable optical drives have proven to be very good replacements, so it may become difficult to locate a 3.5″ floppy disk drive in the future. For the remainder of this project, a bootable USB flash drive or a properly created bootable CD/DVD could have been substituted, but I chose not to reinvent the wheel and used a spare floppy disk drive instead.

NiBiTor v3.2 Video Card BIOS Editor

To begin the (simple) process of creating a custom BIOS file, I utilized the free NiBiTor v3.2 program to save a copy of my original BIOS. There are two steps for this process:

- Tools → Read BIOS → Select Device (Choose the video card here)

- Tools → Read BIOS → Read Into File (Choose a safe backup location to save the original BIOS)

Save a backup

I should only continue after creating a backup of the original video BIOS, since this file will allow me to restore the factory defaults if it is ever required. It is highly recommended that this backup BIOS be copied and renamed so there will be both a working copy and the original backup file available. I named the original BIOS file “BACKUP.ROM”, and then created a copy of it which I named “8800GTS2.ROM”.
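The article makes this copy by hand in Windows, but the same copy-and-verify idea can be expressed in a few lines of Python. This is a minimal sketch, assuming the backup was saved as “BACKUP.ROM” as described above; comparing SHA-256 hashes is our illustrative way of confirming the working copy is byte-for-byte identical before any editing starts.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def make_working_copy(backup: Path, working: Path) -> None:
    """Copy the untouched backup BIOS to a working file and confirm the
    two files are identical, so the original stays safe for restoring."""
    shutil.copyfile(backup, working)
    if sha256_of(backup) != sha256_of(working):
        raise OSError(f"copy of {backup} does not match the original")

# Example: make_working_copy(Path("BACKUP.ROM"), Path("8800GTS2.ROM"))
```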

Up to this point, I have saved my modified video BIOS “8800GTS2.ROM” onto one floppy disk. I will save the nvFlash program, which is available as a free download, onto this same floppy disk. On a second floppy disk, I will use Windows XP to format and create an MS-DOS startup disk. Keeping the project files and nvFlash separate from the MS-DOS startup disk may not be required, given the file sizes and available space, but it is a safe practice that also decreases the chance of media problems.


Now that a backup of the video BIOS has been copied and stored for safe keeping, the next step for reading the working file and making changes begins with: File → Open BIOS (Choose the renamed copy “8800GTS2.ROM” of the video BIOS here).

New Speed Values are Added

Once again, the novice could become very concerned about the many tabs and options available in NiBiTor, but for my purposes I will only use the Clockrates tab to change the values for 3D speeds.

Using the values discovered and tested to be stable in my previous steps, I enter them into the appropriate 3D fields, replacing the original values. Once I have typed in the new Core and Memory values, I save this modified video BIOS file by choosing: File → Save BIOS (save this modified file onto a formatted floppy disk and give it a simple name of no more than eight characters plus the extension, such as “8800GTS2.ROM”).
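MS-DOS expects file names in the 8.3 format: up to eight characters, a dot, and up to three characters of extension, and “8800GTS2.ROM” uses exactly eight. A quick check before copying the file to the floppy could look like the sketch below; the allowed character set is a deliberately conservative subset of what DOS accepts, chosen for illustration.

```python
import re

# Simplified 8.3 rule: 1-8 character name, optional dot plus 1-3 character
# extension, restricted to letters, digits, underscore and hyphen.
_DOS_83 = re.compile(r"[A-Z0-9_\-]{1,8}(?:\.[A-Z0-9_\-]{1,3})?")


def is_dos_83(filename: str) -> bool:
    """Return True if the name fits the (simplified) MS-DOS 8.3 format."""
    return _DOS_83.fullmatch(filename.upper()) is not None

# is_dos_83("8800GTS2.ROM")   -> True
# is_dos_83("8800GTS512.ROM") -> False (name part is ten characters)
```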

Flash the BIOS with nvFlash v5.40

Now that I have prepared the new modified video BIOS file and saved it to a floppy disk, I am ready to make my video card operate as if it came from the factory with my new settings. I will now flash my working copy of the modified video BIOS “8800GTS2.ROM” onto the FOXCONN 8800 GTS using nvFlash.

Flash the new BIOS

Flashing a video BIOS is a very simple process, yet extra care and precaution must be taken or the hardware being flashed may be rendered non-operational. I have taken steps to ensure my computer system’s stability will not be compromised by removing any system component overclocking (CPU, RAM, bus speeds), and have placed my system on a 1500VA battery backup UPS. With stability ensured, I am ready to move forward and flash the video BIOS.

The video card BIOS is flashed by:

- Boot into MS-DOS mode from a pre-formatted floppy disk

- Insert the floppy disk containing the BIOS file and nvFlash

- Type “nvflash 8800GTS2.ROM” (where “8800GTS2.ROM” is the name of the BIOS file)

- Press “Y” to confirm that the system is ready to flash the firmware

That’s it! The hard part is done. Once the system reboots after the successful BIOS flash, the video card is programmed with the new enhanced performance settings. But am I finished?

Cooling Improvements

With all this new power there will come increased heat output, which is something the GeForce 8800 series already knows plenty about.

NVIDIA nTune Performance Application

The GeForce 8800 GTS already runs close to 90° C when it is under full load, so I have taken an extra step to make sure my temperatures don’t turn this product into a personal space heater. Using the NVIDIA nTune Performance Application, available for free, I can adjust the fan speed from the default 60% output up to the desired 100% output.

Don’t be fooled

A closer look at the nTune utility will reveal that it offers the opportunity to overclock the video card through the GPU clock settings interface; but I had to discover the hard way that this was a very unstable and unsafe method which always resulted in system crashes. I have since avoided every feature offered in this utility except the GPU fan settings feature.


nTune: a necessary evil

If I could find a better program with a smaller footprint which would enable me to manually adjust 8800 series blower fan speeds, I would be using it. But since this is the only one I am aware of, it is a necessary evil.

With the NVIDIA nTune utility, I can manually raise (or lower) the fan controller output. The blower fan on the GeForce 8800 series is fairly quiet at the default 60% output, but it hums noticeably at 100% output, which means that noise may become a concern.

Conclusion

For the cost of the product and about an hour of time spent, I was able to take my FOXCONN 8800 GTS and overclock the G80 GPU up to 600MHz (575MHz default) along with a GDDR3 RAM speed increase up to 1030MHz (900MHz default). This amounts to a 25MHz GPU improvement and a 130MHz RAM improvement; and all for free!

Sure, these results did not transform my 8800 GTS into a GTX, but as I mentioned before, that is simply impossible because of architectural differences. When all was said and done, I did improve the 8800 GTS enough to bring it a little closer to the 8800 GTX. My video card was already a product pushed close to the limit at the factory, and now I have it operating and performing as well as it can. Just imagine what could be done to the GeForce 8800 GTX!


With this in mind, it may well be possible to find more performance available for the taking in other NVIDIA video cards. Just remember, what you do with your property is your own business. At least now you know how I did it.

Releasing the Beasts — Overclocking the GeForce 8800’s


It’s time to Release the Beasts — we overclock a couple of GeForce 8800 graphics cards and share our results with you!

Published Nov 15, 2006 11:00 PM CST | Updated Tue, Nov 3 2020 7:04 PM CST


Introduction

It’s clear that the power on offer from the new GeForce 8800GTS and GTX beasts is more than enough for most people, though honestly — who cares? There is nothing wrong with wanting more power and this is where overclocking comes in.

We did intend to do a little piece on overclocking in our XFX article, but we thought that we would dive into it more. We took some extra time to see exactly what we can expect from the GeForce 8800 graphics cards when it comes to overclocking. Since practically all cards are coming out of the same factory, you would think it’s safe to assume that most cards will sit quite close together when it comes to overclocking.

The main thing we want to know today: can the 8800 GTS, which is significantly cheaper, be overclocked to match or even beat GTX performance at stock speeds? And if it does, how much further can the 8800 GTX go to make it worth spending those extra hard-earned dollars on it rather than on the GTS variant?

In this article we’re not going to look at the graphics cards themselves; we’ve already done that. Instead we will have a quick look at our test system setup and what we got with our overclocks. From there it’s straight into the benchmarks, as that’s all we really need to see here today.

Let’s get overclocking!

Benchmarks — Test System Setup and 3DMark05

Test System Setup

Processor(s): Intel Core 2 Duo E6600 @ 3150MHz (350MHz FSB with memory @ 1:1)

Motherboard(s): ASUS P5B Deluxe (Supplied by ASUS)

Memory: 2 X 1GB G.Skill HZ PC8000 @ 350MHz 4-4-4-12 (Supplied by Bronet)

Hard Disk(s): Hitachi 80GB 7200RPM SATA 2

Operating System: Windows XP Professional SP2

Drivers: nVidia ForceWare 96.97 (Reviewer Driver) and DX9c

With Coolbits not working on the latest version of the nVidia drivers, we had to go looking for another program so we could start overclocking our XFX graphics cards.

Surprisingly, the current beta version of ATI Tool worked almost without a hitch. We thought we would be slack and try the auto detect feature but as soon as it went about 4MHz up on the core, it crapped out and we had artifacts everywhere. Using the old manual method — increase the clock speeds, run a 3DMark, rinse and repeat until we crashed something or got artifacts, we got our maximum speed on both the GeForce 8800 GTX and GTS.


At default, the 8800 GTS comes in at 500MHz on the core and 1600MHz DDR on the memory. The core increased to a truly outstanding 643MHz (a 143MHz increase, or about 29%), and the memory got a significant boost up to 1824MHz DDR (a 224MHz DDR increase, or about 14%).

The 8800 GTX comes in at 575MHz on the core and 1800MHz DDR on the memory. We got the core to 654MHz (a 79MHz increase, or about 14%), which is just above what we got out of the GTS, and the memory had no problems breaking the 2GHz DDR mark, coming in at 2020MHz DDR (a 220MHz DDR increase, or about 12%).
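The percentage gains can be checked against the stock clocks with a few lines of Python. This is simple arithmetic on the clock figures quoted above; note that dividing the increase by the overclocked speed instead of the stock speed gives noticeably smaller numbers (143/643 is about 22%, for example).

```python
def percent_gain(stock_mhz: float, overclocked_mhz: float) -> float:
    """Percentage increase measured against the stock clock."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100


# (stock, overclocked) clocks in MHz, as reported above
results = {
    "8800 GTS core": (500, 643),
    "8800 GTS memory (DDR)": (1600, 1824),
    "8800 GTX core": (575, 654),
    "8800 GTX memory (DDR)": (1800, 2020),
}

for name, (stock, oc) in results.items():
    print(f"{name}: +{oc - stock}MHz, {percent_gain(stock, oc):.1f}% over stock")
```

Run as-is, it reports gains of 28.6% and 14.0% for the GTS core and memory, and 13.7% and 12.2% for the GTX.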

3DMark05

Version and / or Patch Used: Build 120

Developer Homepage: http://www.futuremark.com

Product Homepage: http://www.futuremark.com/products/3dmark05/


3DMark05 is now the second-latest version in the popular 3DMark «Gamers Benchmark» series. It includes a complete set of DX9 benchmarks that test Shader Model 2.0 and above.


For more information on the 3DMark05 benchmark, we recommend you read our preview here.

In our first benchmark, we can see that the massive overclock on the GTS brings it to performance similar to that of the stock-clocked GTX.

The GTX overclock wasn’t as significant as the GTS overclock, so while we do see an increase, it isn’t nearly as big as the gain on offer from the GTS, which makes the GTS result even more impressive considering the price difference between the two cards.

Benchmarks — 3DMark06

3DMark06

Version and / or Patch Used: Build 102

Developer Homepage: http://www.futuremark.com

Product Homepage: http://www.futuremark.com/products/3dmark06/


3DMark06 is the very latest version of the «Gamers Benchmark» from Futuremark. The newest version of 3DMark expands on the tests in 3DMark05 by adding graphical effects using Shader Model 3.0 and HDR (High Dynamic Range lighting), which will push even the best DX9 graphics cards to the extremes.


3DMark06 also tests not just the GPU but also the CPU, using the AGEIA PhysX software physics library to effectively test single- and dual-core processors.

The more intensive 3DMark06 also sees the significant overclock stand out for the GTS. While it does come close to the GTX, when we overclock the most expensive card, we see another nice boost in performance for the top dog.

Benchmarks — Half Life 2 (Lost Coast)

Half Life 2 (Lost Coast)

Version and / or Patch Used: Unpatched

Timedemo or Level Used: Custom Timedemo

Developer Homepage: http://www.valvesoftware.com

Product Homepage: http://www.half-life2.com


By taking the suspense, challenge and visceral charge of the original, and adding startling new realism, responsiveness and new HDR technology, Half-Life 2 Lost Coast opens the door to a world where the player’s presence affects everything around him, from the physical environment to the behaviors and even the emotions of both friends and enemies.

We benchmark Half Life 2 Lost Coast with our own custom timedemos to avoid possible driver optimizations, recording them with the «record demo_name» command and playing them back with the «timedemo demo_name» command.

We can see in our non-HDR tests that the GTS wasn’t quite hitting the CPU wall but when we overclocked, it was sitting up with the GTX.

We can also see that the overclocked GTX shows no performance gains at all here. As soon as we turn on HDR and start moving up the resolutions again, though, the difference is much easier to see, with the overclocked GTS trailing just behind a stock-clocked GTX.

Benchmarks — PREY

PREY

Version and / or Patch Used: Unpatched

Timedemo or Level Used: HardwareOC Custom Benchmark

Developer Homepage: http://www.humanhead.com

Product Homepage: http://www.prey.com


PREY is one of the newest games to be added to our benchmark line-up. It is based on the Doom 3 engine, offers stunning graphics surpassing what we’ve seen in Quake 4, and puts quite a lot of strain on our test systems.

The GTX saw no gains from overclocking, though the GTS, on the other hand, rocks along and gets some very nice gains across the board.

Benchmarks — F.E.A.R.

F.E.A.R.

Version and / or Patch Used: Unpatched

Timedemo or Level Used: Built-in Test

Developer Homepage: http://www.vugames.com

Product Homepage: http://www.whatisfear.com/us/


F.E.A.R. (First Encounter Assault Recon) is an intense combat experience with rich atmosphere and a deeply intense paranormal storyline presented entirely in first person. Be the hero in your own spine-tingling epic of action, tension, and terror…and discover the true meaning of F.E.A.R.

We see gains in minimum frame rates on both cards, but in average frame rates the GTX doesn’t move much at 1280 x 1024. It’s only at the higher resolutions that we really see a difference.

Benchmarks — Quake 4

Quake 4

Version and / or Patch Used: 1.2

Timedemo or Level Used: HardwareOC Custom Benchmark

Developer Homepage: http://www.idsoftware.com

Product Homepage: http://www.quake4game.com


Quake 4 is one of the latest games to be added to our benchmark suite. It is based on the popular Doom 3 engine and as a result uses many of the features seen in Doom 3. However, Quake 4 graphics are more intensive than Doom 3’s and should put more strain on different parts of the system.


For some reason, apart from 1600 x 1200, the overclock had little effect on either card. Both saw a significant increase at 1600 x 1200, but at 1280 and 1920 the stock and overclocked cards score almost identically.

Benchmarks — Company of Heroes

Company of Heroes

Version and / or Patch Used: Demo

Timedemo or Level Used: Built-in Test

Developer Homepage: http://www.relic.com

Product Homepage: http://www.companyofheroesgame.com


Company of Heroes, or COH as we’re calling it, is one of the latest World War II games to be released and also one of the newest in our lineup of benchmarks. It is a super-realistic real-time strategy (RTS) game with plenty of cinematic detail and great effects. Because of its detail, it will help stress out even the most impressive computer systems with the best graphics cards, especially when you turn up all the detail. We use the built-in test to measure the frame rates.


The overclock for the GTX doesn’t see too much happening in COH though the GTS shows significant gains across the board especially when it comes to the minimum FPS, which is clearly the most important.

Benchmarks — High Quality AA and AF

High Quality AA and AF

Our high-quality tests let us separate the men from the boys and the ladies from the girls. If the cards weren’t struggling before, they will start to now. ATI cards and the GeForce 8800 series can offer HDR and AA at the same time, unlike older nVidia cards.

When we start to increase the detail, we see a similar picture to 3DMark06: the overclocked GTS is just trailing a stock-clocked GTX, and the overclocked GTX is still consistently ahead.

In Lost Coast, where we aren’t seeing a CPU limitation, both cards are able to make use of their new-found performance.

While PREY only saw gains at 1280 x 1024 with regular quality settings, when we turn on AA and AF, we can see at 1920 x 1200 both cards offer a good increase in performance.

Final Thoughts

The GeForce 8800 GTS offers an absolutely huge overclock thanks to how generous nVidia and its partners have been with the core. Generally speaking, the overclock brings it pretty close to the performance of a stock-clocked GeForce 8800 GTX. That said, the GTX doesn’t have any problems with overclocking either, which lets it go above and beyond what it was doing at default.

If you were thinking about getting a GTX and have thought, «Oh, maybe I will get a GTS instead, due to the overclock on offer…», then if you can afford the GTX, we would recommend that you go ahead and purchase it, because it’s nice to have this kind of performance without having to overclock your graphics card.

One of the biggest things that you have to take note of is the frame rates you’re getting with these overclocked cards. A GTX at default speeds with AA, AF and HDR on when running at 1920 x 1200 is getting an average of 104 FPS and when overclocked 114FPS. Is it worth the mucking around for an extra 10FPS? Yes, it is roughly 10% faster but you’re honestly not going to see a real-world difference in game and all you’re doing is increasing the amount of work your GPU is doing. Heck, you could probably even underclock the GTX if you wanted… but that’s just silly.
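One way to put that extra 10FPS in perspective is to convert frame rates into frame times. The short worked example below uses only the GTX averages quoted above (104FPS stock, 114FPS overclocked at 1920 x 1200 with AA, AF and HDR); the frame-time framing is an illustration, not an additional measurement.

```python
def frame_time_ms(fps: float) -> float:
    """Average time spent on each frame, in milliseconds."""
    return 1000.0 / fps


stock_fps, oc_fps = 104, 114  # GTX averages quoted in the text
gain_pct = (oc_fps - stock_fps) / stock_fps * 100
saved_ms = frame_time_ms(stock_fps) - frame_time_ms(oc_fps)

print(f"{gain_pct:.1f}% more frames, {saved_ms:.2f} ms less per frame")
```

For these figures it prints a gain of about 9.6% and a saving of roughly 0.84 ms per frame, which is less than a millisecond of difference in how long each frame stays on screen.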

You have to understand our point, though: look past 3DMark, look past an FPS difference such as 150FPS versus 175FPS average, and ask yourself whether it is really worth even contemplating overclocking your graphics card. It’s pretty safe to say most of you will conclude that it’s not, but others will want to overclock anyway.

Yes, overclocking can be done with ease and you do get a nice speed bump on the GTS, though honestly, you have to decide if it’s even worth it. If a GTS is all you can afford and you want to bump the AA up another level but it runs a little too slow, then do some overclocking. However, unless you’re gaming at 1920 x 1200 and above, stock performance is clearly going to be enough for the majority of the latest games for the time being.


Let’s wait for some new games to be released, such as Crysis or UT2007, and then you’ll probably want to overclock as far as you can as these games will be able to stress out the GPU more than the current batch of games.


Shawn Baker

Shawn takes care of all of our video card reviews. From 2009, Shawn is also taking care of our memory reviews, and from May 2011, Shawn also takes care of our CPU, chipset and motherboard reviews. As of December 2011, Shawn is based out of Taipei, Taiwan.

BFG Tech GeForce 8800 GTS OC 512MB

Overclocking:

Since the current version of RivaTuner doesn’t work with Nvidia’s latest drivers, we chose to use Nvidia nTune for our overclocking endeavours with BFG Tech’s GeForce 8800 GTS OC 512MB card.

As a quick reminder, BFG’s factory-overclocked 8800 GTS 512MB comes with default speeds of 675MHz core, 1,674MHz stream processor and 1,940MHz (effective) memory.

After a couple of hours of tweaking and stability testing using Crysis (it’s a hard life... I know), we found that we were able to increase these frequencies relatively successfully, with the core clocked at 719MHz and memory at 2142MHz (effective).

This represents increases of 101MHz (202MHz effective) on the memory and 44MHz on the core. If you factor in that the BFG Tech card already has a 25MHz core speed increase by default, the overclock represents a 69MHz increase, which is not too bad in the grand scheme of things. Sadly, we are unable to report the shader clock increase at the moment because RivaTuner version 2.06 doesn’t work correctly with the drivers used: none of the ForceWare-specific options are available.

Final Thoughts…

With the recent price drops on Nvidia’s GeForce 8800 GT, GeForce 8800 GTS 512MB and GeForce 8800 GTX, there are some interesting options on the market for gamers. At the same time we can’t forget the Radeon HD 3870 X2 either, but as we stated during our review earlier this week, we’re hesitant to give it a solid recommendation because of its reliance on drivers.

The cheapest GeForce 8800 GTS 512MB we’ve found is priced at £194.17, including VAT and it’s clocked at Nvidia’s reference speeds. BFG Tech’s card, on the other hand, costs about £10 more at £205.50 (inc. VAT) and comes with a fairly modest factory overclock, but it’s an overclock nevertheless. The result is a relatively unnoticeable performance increase, but what it should mean is that there is the potential for a higher overclock as the chips are qualified to run at higher-than-standard speeds.

Update: it’s also available on OcUK for £204.44 (inc. VAT), but remember that you’ll get free delivery from Scan if you’re a regular contributor in the bit-tech forums.

In addition to that, BFG sweetens the deal with its 10-year warranty in Europe (and a Lifetime warranty in North America) – and there are few graphics board partners that match BFG on this front.

We couldn’t talk about the GeForce 8800 GTS 512MB without mentioning the alternatives—such as the GeForce 8800 GT and GeForce 8800 GTX—that are tentatively priced either side of the card we’re looking at here today. Ultimately, the choice on whether you need to spend £150, £200 or £250 will depend on your requirements.


1680×1050 seems to be the optimal resolution for the GeForce 8800 GTS 512MB, but performance doesn’t tail off too much at 1920×1200. If you’re gaming on a screen with a higher resolution than that, we’d recommend plumping for a GeForce 8800 GTX or Radeon HD 3870 X2. However, if you’ve got a 1280×1024 screen you should probably save the cash and opt for a GeForce 8800 GT. It’s also worth mentioning that the GeForce 8800 GT is a pretty capable card at 1680×1050 as well, although it’s not as competent as the 8800 GTS 512MB: it’s around 15 percent slower on average.

You’re probably wondering why I’ve not mentioned the alternatives from ATI yet – that’s because there really isn’t any alternative at this price point. The newly-released Radeon HD 3870 X2 typically retails for around £270 (inc. VAT)—some £65 more than the BFG Tech GeForce 8800 GTS 512MB. And at the other end of the scale, the Radeon HD 3870 is available for around £130 (inc. VAT) – that’s about £65 less than the cheapest 8800 GTS 512MB and it’s in a different performance class.


So, BFG Tech’s GeForce 8800 GTS OC 512MB appears to have hit a price point that can’t be matched by anything other than stock-clocked GeForce 8800 GTS 512MB cards and as such it earns a recommendation from us. However, it’s important to make sure that it’s going to be connected to a 1680×1050 or 1920×1200 display, as that will show the card in its best possible light. You can get away with running the BFG Tech GeForce 8800 GTS 512MB on higher or lower resolution screens, but the benefits of the card aren’t going to be quite so profound.

- Features: 8/10

- Performance: 9/10

- Value: 9/10

- Overall: 9/10



The MSI NX8800GTX-T2D768E-HD videocard is pretty darn quick in its own right, without anyone messing around with it. That doesn’t mean PCSTATS is going to spare this flagship videocard from the overclocking tests.

We’ll start today’s overclocking tests with the nVidia ‘G80’ GPU. It is clocked at a default speed of 576 MHz, and that frequency will be increased in 5 MHz increments.

Using RivaTuner, the GeForce 8800GTX core turned out to be a pretty good overclocker and easily cracked 600 MHz. Shortly thereafter we passed 610, 620 and 630 MHz without any trouble. nVIDIA may not want videocard manufacturers to factory overclock the G80, but that doesn’t mean it doesn’t overclock well!

The MSI NX8800GTX-T2D768E-HD hit a top speed of 665 MHz before RivaTuner started to complain about it not passing the internal test. If we disabled the internal test, the core could probably go higher, but with stock cooling, I didn’t want to push too hard. There are reports online that the G80 scales well with watercooling, TEC or phase change cooling solutions, but unfortunately none of those types of cooling were available at the time of this review.

Next up was the 768MB of Samsung GDDR3 memory. This videocard memory is clocked at 1800 MHz by default, or 1.8GHz. We were a bit more aggressive when it came to overclocking the videocard’s memory, and took it in 20 MHz strides.

In the past we have seen Samsung GDDR3 memory overclock well this way, so we were anticipating some really fast results this time.

The MSI NX8800GTX-T2D768E-HD’s memory didn’t disappoint. We broke through 1900 MHz in no time, and closed in on 2000MHz just as fast. The 2 GHz plateau came and went, as did the 2100 MHz barrier!

A few moments later, we reached the end of the road, with the memory at its maximum speed of 2132 MHz. RivaTuner started to complain about failing the internal driver test, so we didn’t try for anything faster.

One of the interesting results of all this overclocking was that it didn’t actually improve the benchmark results of the MSI NX8800GTX-T2D768E-HD videocard across the board. However, as you saw from the power draw tests, overclocking does have a noticeable impact on total system power consumption.

Aside from reaching for 3DMark records, the NX8800GTX-T2D768E-HD is fast enough for hardcore gamers at its stock speeds, even those of you who play at 1600×1200+ with AA/AF. It’s hard to believe, but overclocking really isn’t all that necessary with this GPU.

Prelude to Benchmarks

The details of how the MSI NX8800GTX-T2D768E-HD test system was configured for benchmarking (the specific hardware, software drivers, operating system and benchmark versions) are indicated below. In the second column are the general specs for the reference platforms this nVIDIA GeForce 8800GTX based videocard is to be compared against. Please take a moment to look over PCSTATS’ test system configurations before moving on to the individual benchmark results on the next page.

PCSTATS Test System Configurations

© 2022 PCSTATS.

Seven GeForce 8800 series graphics cards compared

NVIDIA’S GEFORCE 8800 SERIES is a jaw-dropping marriage of performance and image quality that has raised the bar for PC graphics substantially. Not since ATI’s Radeon 9700 Pro have we been so impressed by a single graphics card. The G80 GPU is simply a marvel, and if you’re looking to buy a high-end graphics card today, it’s the only chip you want.

Of course, your quest for the best graphics card won’t end there; you also have to choose between GTS and GTX flavors of the GeForce 8800. And you’re still not done, because GeForce 8800 GTS and GTX cards are available from a wide variety of manufacturers, each of which tries to bring something unique to the table, be it through bundled extras, tweaked clock speeds, or exotic cooling.

As daunting as the selection of GeForce 8800 series graphics cards may be, choice is a good thing. To help you wade through the options, we’ve rounded up a collection of GeForce 8800 series cards from BFG Tech, EVGA, Foxconn, MSI, OCZ, PNY, and XFX to see how they stack up. Read on to see which cards rise to the top and which get lost in the reference card shuffle.

GT to the S… or X

Before diving into card-specific attributes, it’s worth taking a moment to highlight some of the key differences between GTS and GTX flavors of the GeForce 8800. If you haven’t already, I’d strongly suggest reading our initial coverage of the GeForce 8800, which explores the intricate details of the G80 architecture and why it’s such a radical departure from current GPU designs. We’ll stick to the basics here, starting with a breakdown of what Nvidia has lopped off the GeForce 8800 GTX to make the GTS.

| | Stream processors | ROPs | Core clock | Memory bus width | Memory clock |
|---|---|---|---|---|---|
| GeForce 8800 GTS | 96 | 5 | 500MHz | 320-bit | 1.6GHz |
| GeForce 8800 GTX | 128 | 6 | 575MHz | 384-bit | 1.8GHz |

The GeForce 8800 GTS only retains 96 of the GTX’s 128 stream processors, cutting the chip’s shader power by 25%. Nvidia further handicaps the GTS by trimming the number of ROPs from six to five, and by reducing the memory bus width from 384 to 320 bits.

Not content to rely solely on microsurgery to separate the GTS from the GTX, Nvidia also uses clock speeds to differentiate the two. The GTX’s 575MHz core clock is reduced to just 500MHz for the GTS, and effective memory speeds drop from 1.8GHz to 1.6GHz. Those clock speeds aren’t set in stone, though. The first wave of GeForce 8800 series cards may have stuck with stock speeds, but several of the cards we’ll be looking at today offer higher out-of-the-box frequencies.
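The narrower bus and the lower memory clock compound each other. The following Python sketch tabulates the figures from the spec table and computes theoretical peak memory bandwidth with the standard formula (bus width in bytes multiplied by the effective data rate). The formula and the resulting GB/s figures are our addition rather than numbers quoted in this article, and peak bandwidth is an upper bound, not a measured result.

```python
from dataclasses import dataclass


@dataclass
class G80Config:
    name: str
    stream_processors: int
    rops: int
    core_mhz: int
    bus_width_bits: int
    mem_effective_ghz: float  # DDR effective rate, i.e. twice the actual memory clock

    def peak_bandwidth_gb_s(self) -> float:
        """Theoretical peak bandwidth in decimal GB/s: bytes per transfer x transfer rate."""
        return self.bus_width_bits / 8 * self.mem_effective_ghz


gts = G80Config("GeForce 8800 GTS", 96, 5, 500, 320, 1.6)
gtx = G80Config("GeForce 8800 GTX", 128, 6, 575, 384, 1.8)

# How much of each GTX resource the GTS keeps.
for field in ("stream_processors", "rops", "core_mhz", "bus_width_bits"):
    share = getattr(gts, field) / getattr(gtx, field)
    print(f"{field}: GTS keeps {share:.0%} of the GTX figure")

for card in (gts, gtx):
    print(f"{card.name}: {card.peak_bandwidth_gb_s():.1f} GB/s")
```

The GTS keeps 75% of the stream processors and 83% of the bus width, but because its memory also runs slower, its 64.0 GB/s peak is only about 74% of the GTX’s 86.4 GB/s.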

We should note that there are now two versions of the GeForce 8800 GTS: the original with 640MB of memory, and a new model with 320MB. The 320MB card can be had for around $300, which is about $90 cheaper than the most affordable 640MB card. However, we’ve found that the GeForce 8800 GTS 320MB’s reduced memory size can be a liability with newer games at higher resolutions. All the GTS cards we’ll be looking at today are 640MB models.

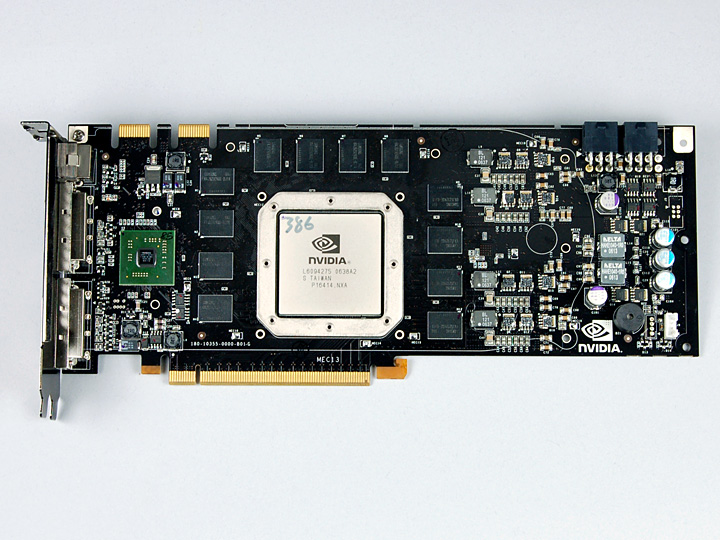

Referencing seven designs

GeForce 8800 series graphics cards revolve around the same Nvidia reference designs, and apart from the unique heatsink stickers offered by each manufacturer, you’d be hard-pressed to tell one card from another. Board vendors can’t be blamed for sticking to the reference designs, though. Instead of supplying its add-in board partners with graphics chips, Nvidia has GeForce 8800 series cards built by a contract manufacturer. Those cards are then sold to Nvidia’s board partners, effectively eliminating custom or tweaked board designs.

Nvidia says that add-in-card partners are free to offer their own customization, but that customization is effectively limited to coolers, clock speeds, and bundles. Interestingly, though, Nvidia won’t confirm whether it sells factory overclocked cards directly to board vendors or the vendors are doing the overclocking on their own. Nvidia apparently doesn’t release that much detail regarding its arrangements with add-in board partners.

So board partners may not have much freedom when it comes to card-specific features, but there’s still a little room for differentiation. Exhibit one:

| Card | GTS/GTX | Core clock | Memory clock | Memory size | Sticker | Warranty length | Street price |
|---|---|---|---|---|---|---|---|
| BFG GeForce 8800 GTS | GTS | 513MHz | 1.58GHz | 640MB | Brooding Mr. Clean | Lifetime | |
| EVGA GeForce 8800 GTX ACS³ | GTX | 626MHz | 2.0GHz | 768MB | NA | Lifetime* | |
| Foxconn FV-N88SMBD2-ONOC | GTS | 575MHz | 1.8GHz | 640MB | 3D space scene | 2 years | |
| MSI NX8800GTX | GTX | 576MHz | 1.8GHz | 768MB | Fairy princess | 3 years parts, 2 years labor | |
| OCZ GeForce 8800 GTX | GTX | 576MHz | 1.8GHz | 768MB | Sports car | Lifetime | |
| PNY XLR8 GeForce 8800 GTS | GTS | 513MHz | 1.58GHz | 640MB | XLR8 logo | 5 years* | |
| XFX GeForce 8800 GTX | GTX | 576MHz | 1.8GHz | 768MB | Armored wolfman | “Double lifetime” | |

Today’s contestants are split between three GTS cards and four GTXs, most of which are running at stock speeds (513MHz appears to be the actual stock clock speed for the 8800 GTS). There are a couple of exceptions, though. EVGA’s GeForce 8800 GTX ACS³ pushes the GTX’s clocks to 626MHz core and an effective 2.0GHz memory, while Foxconn’s FV-N88SMBD2-ONOC cranks the GTS up to GTX speeds.

Fortunately, so-called factory “overclocking” doesn’t affect warranty coverage—all cards are covered at their shipping clock speeds, regardless of whether those are higher than Nvidia’s default clocks for the GTS and GTX. There’s plenty of variety when it comes to warranties, too; some manufacturers only offer a few years of coverage, while others pledge lifetime support.

As one might expect, prices vary from card to card, as well. Higher-clocked models tend to be more expensive, but that’s not always the case. Bundled extras also alter the value proposition, and we’ll be detailing all the extra goodies you get in the box in a moment. We’ll also be taking a closer look at those always-exciting heatsink stickers.

BFG’s GeForce 8800 GTS


No OC, for a change

BFG Tech has made a name for itself by offering higher-than-stock clock speeds on virtually all of its graphics cards. Curiously, though, the company’s GeForce 8800 GTS isn’t “overclocked in the box.” Yes, the GPU’s 513MHz core clock is just a smidge higher than the 500MHz Nvidia recommends, but its memory actually runs a tad slower than the 800MHz prescribed by Nvidia, at 792MHz—an effective 1.58GHz once we take DDR’s clock-doubling effect into account. Don’t get too excited, though; that looks to be the de facto standard for GTS cards. Our stock-clocked PNY GeForce 8800 GTS also has a 513MHz core and 792MHz memory clock, and we’d expect all GTS cards to follow suit.

With Nvidia selling finished reference cards to its add-in board partners, BFG’s GeForce 8800 GTS looks just like everyone else’s. Well, apart from the brooding Mr. Clean sticker on the heatsink, that is. I’m not quite sure what BFG is getting at with the sticker (perhaps that the card’s performance is so far beyond your comprehension that it will give you a headache), but it definitely sets the card apart.

Like every other GTS, this BFG model has a single six-pin PCIe power connector, a pair of dual-link DVI outputs, and a video port capable of standard and high-definition output.

BFG complements the card’s output ports with a small collection of cables, including a couple of DVI-to-VGA adapters, a molex power adapter, and a video dongle. The video dongle only features component outputs, but you can plug an S-Video cable directly into the card’s video output port.

Of course, the gravy train of extras doesn’t end there. BFG also throws in a pack of Teflon mouse feet and a rather nice black t-shirt. I’ve actually used BFG’s Teflon pads on some older mice, and they work pretty well. I’d wear the shirt, too, if it weren’t an extra large. That’s a little big for my frame, but probably just right for the stereotypical North American gamer.

The average gamer should also know what BFG stands for, but for those who don’t, there’s a helpful sticker in the box. Unfortunately, I’m far too out of touch with today’s 1337 gamers to have any clue what OMGWTFBFGSAUCE is, but I assume it’s spicy.

While BFG includes plenty of extras with its GeForce 8800 GTS, you don’t get much in the way of software—just a driver CD. There’s also an interesting note suggesting that instead of returning a defective card to the place of purchase, you should contact BFG directly. Apparently, BFG would rather you deal with their customer support than that of a retailer, and based on the experiences I’ve had with retailer support, that’s probably a good idea. BFG offers free 24/7 technical support with the card via a toll-free number, and when combined with the company’s lifetime warranty, that’s quite a lifeline.

EVGA’s GeForce 8800 GTX ACS³ Edition


Shrouding the stock cooler

We’ve seen enough Special Ultra Xtreme XXX Golden Sample Edition graphics cards to last a lifetime, but EVGA’s GeForce 8800 GTX ACS³ Edition is unique, and not because it has some fancy superscript in its name. No, the ACS³ is distinctive because it’s the only card in this round-up that’s done something really different with Nvidia’s stock cooler, and we don’t just mean another sticker.

In addition to its unique cooler, the ACS³ also has the distinction of being the fastest card in the roundup. EVGA pushes the card’s core clock speed to 626MHz, which is nearly a 9% jump. The memory clock speed has also been increased from an effective 1.8GHz to an even 2.0GHz, which works out to an 11% boost.
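The percentages quoted for EVGA’s factory overclock can be checked with a one-line formula. This Python sketch assumes the 576MHz core and 1.8GHz memory clocks of the stock GTX cards in this round-up as the baseline; measured against Nvidia’s nominal 575MHz instead, the core gain works out to about 8.9%, which is still “nearly 9%.”

```python
def percent_increase(shipped: float, baseline: float) -> float:
    """Percentage by which a shipping clock exceeds its baseline clock."""
    return (shipped - baseline) / baseline * 100


# EVGA GeForce 8800 GTX ACS3 shipping clocks vs. the 576MHz core / 1.8GHz memory
# that the stock-clocked GTX cards in this round-up run at (assumed baseline).
core_gain = percent_increase(626, 576)     # MHz
memory_gain = percent_increase(2.0, 1.8)   # effective GHz

print(f"core: +{core_gain:.1f}%, memory: +{memory_gain:.1f}%")
```

This reports a core gain of about 8.7% and a memory gain of about 11.1%, in line with the figures above.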

But it’s the ACS³ that really grabs your attention. This is the third revision of EVGA’s Advanced Cooling System, and the company actually has a patent on the design, which is described as follows:

Graphics card apparatus with improved heat dissipation and including a planar metallic cover plate having an external perimeter configuration that generally corresponds to the plan-form of the printed circuit board used in the graphics card assembly, a plurality of thermal transfer blocks that can be selectively affixed to sources of thermal energy on the graphics card assembly and thermally coupled to the cover plate, and a fan and carriage therefor comprised of a heat sink and flow directing structure.


And also, it looks menacing. If Darth Vader had a graphics card, this would be it.

Flipping the card reveals that there’s a little ACS action on the underside, too. A beefy, finned back plate sits directly below the graphics and memory chips, allowing for better heat dissipation on both sides of the card.

One might be tempted to assume that the ACS³ is a radical departure from Nvidia’s stock GeForce 8800 series cooler, but that would be a mistake. The ACS³ is really more of a metal replacement for the plastic shroud that directs airflow with the stock cooler. It is a larger shroud, though.

As you can see in the picture above, a standard GeForce 8800 GTX cooler stops short of the edge of the card. The ACS³, however, extends the entire length of the card, with plenty of vents cut to encourage airflow.

Removing the ACS³ reveals a standard GeForce 8800 series cooler under the hood. Normally, we’d encourage graphics card makers to experiment with exotic heatsinks that deviate from the reference design, but Nvidia’s latest high-end graphics card coolers are among the best we’ve ever used. They do a heck of a job dumping warm air out the back of a case, and they barely make a sound in the process. EVGA hasn’t messed with what works here; they’ve just added a little twist of ACS to the equation.

Although not nearly as exciting as the Darth Vader cooler shroud, the EVGA card’s assortment of bundled extras is reasonably complete. In addition to a pair of DVI-to-VGA adapters, you also get two molex adapters for the card’s dual PCIe power plugs, a component video output dongle, and an S-Video cable.

EVGA throws in the requisite driver CD and a copy of Dark Messiah of Might and Magic, too. Most vendors are selling the game for around $40, so it’s a decent addition to the bundle, if adventure games are your thing. If they’re not, the game is new enough that you should be able to sell it on eBay and make a few bucks.

Like BFG, OCZ, and XFX, EVGA offers a lifetime warranty for its new graphics cards. However, to get the lifetime warranty, you have to register the card within 30 days of purchase, or you get stuck with only one year of coverage. Registration really isn’t a big deal, but setting a 30-day deadline is a little harsh, especially when no one else has a similar registration cut-off for their lifetime warranties.

To EVGA’s credit, the company offers its customers a unique step-up program that provides a measure of upgrade incentive protection. After buying an EVGA graphics card, you have 90 days to decide whether it’s fast enough. If you get the upgrade itch during that time—either to keep up with the Joneses or because a graphics chip refresh has juggled prices enough to let you jump up in performance without laying out too much cash—EVGA will let you trade in your card toward a more expensive model. You can’t get your money back, and you can only “step-up” once for each card you purchase. Still, it’s nice to know the option is there.

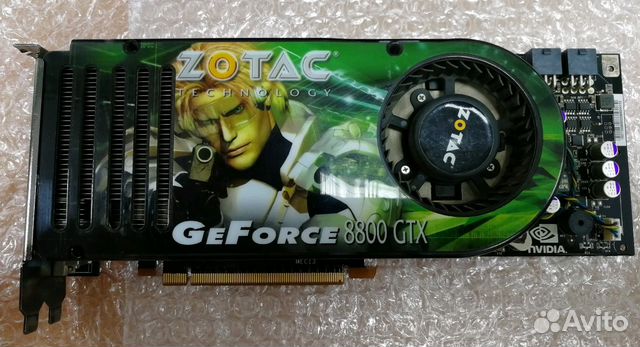

Foxconn’s FV-N88SMBD2-ONOC


Making a statement

Foxconn only recently got into the retail graphics card game, and despite the fact that the company is best known for relatively bland budget and OEM designs, its take on the GeForce 8800 GTS is anything but. Of course, the card can still be identified as a Foxconn; the company is known for incredibly awkward and cryptic product names, and FV-N88SMBD2-ONOC fits the bill on that front beautifully.

We have no clue what FV-N88SMBD2-ONOC actually means, but the OC at the end hints that the normally reserved and buttoned-down Foxconn is doing a little factory “overclocking.” Indeed, a rather innocuous little sticker on the card’s box indicates that this is an “Over-Clocking Version,” whatever over-clocking is.

Impressively, Foxconn isn’t messing around with modest clock speed hikes, either. The FV-N88SMBD2-ONOC is essentially a GeForce 8800 GTS running at GTX speeds: the core clock has been bumped from 500MHz to 575MHz, and the memory from 1.6GHz to an effective 1.8GHz. Don’t expect GTX performance, though; Foxconn can’t make up for the GTS’ fewer stream processors, fewer ROPs, and narrower memory bus.

Outside of its clock frequencies, this card is about as bland as the rest. I’m not even sure what to make of the heatsink sticker, other than that it looks like something an intern whipped up in 3D Studio in about 10 minutes, rendering time included. That makes the FV-N88SMBD2-ONOC the ugliest GeForce 8800 in this round-up. After seeing the EVGA card naked, exposing the reference heatsink in all its glory, I think Foxconn would’ve been better off with a clear plastic shroud and no sticker at all.

Things start to perk up for the Foxconn card when we begin digging around in the box, though. In addition to a couple of DVI-to-VGA adapters and a molex plug adapter for the card’s PCIe power connector, you also get a video output dongle with component, composite, and S-Video outputs. And that’s not all.

Dig deeper, and you’ll find a USB game controller with dual analog sticks and loads of buttons. We’ve seen graphics cards bundled with game controllers before, but perhaps not nearly often enough. I’d certainly rather have a game controller than a T-shirt that doesn’t fit or a game I’m not particularly interested in playing.

Speaking of software that doesn’t particularly interest me, Foxconn also throws in copies of RestoreIT 7 and VirtualDrive Pro 10. They make a big deal about it on the box, too, claiming that with the game controller, there’s $180 worth of extras included. The only problem is that VirtualDrive and RestoreIT are currently selling for $30 and $40, respectively. According to Foxconn, that makes what feels like a $25 game controller worth closer to $110. Maybe it’s the new math.

Perhaps that same new arithmetic is responsible for the FV-N88SMBD2-ONOC’s uninspired two-year warranty, as well. That’s the shortest coverage period in the bunch and the Foxconn card’s real Achilles’ heel. When competitors are offering variations on a lifetime warranty with their graphics cards, two years looks pretty shabby.

MSI’s NX8800GTX


Exactly as expected

Although better known for its motherboards, MSI has long offered a wide range of graphics cards. The company is also one of only a handful that sell cards based on GPUs from both Nvidia and ATI (now part of AMD). What’s even more impressive than MSI’s penchant for playing both sides is the fact that the company’s graphics card lineup is peppered with interesting products and features, including factory “overclocked” cards, custom coolers, and HDMI output.

Unfortunately, you won’t find any of those exotic features on the NX8800GTX, which, despite a unique name, is about as bone stock as the GeForce 8800 GTX comes.

Surprisingly, though, the NX8800GTX is the only card in the bunch to leverage sex appeal on its heatsink sticker. At least MSI has been subtle about it; the fairy princess (RPG aficionados feel free to correct me here) is rather restrained in comparison to the leather-clad and half-naked ladies we’ve seen showcased by ATI and Nvidia. Heck, she almost looks angelic, making this card a far cry from an XXX Edition.

Even so, MSI takes care to cover each of the card’s DVI outputs with a plastic cap, presumably for, er, protection. That doesn’t strike us as entirely necessary, especially since MSI hasn’t bothered to protect the card’s video output port with a similar cap. If anything, we think that’s the port most likely to go unused.

If you do want to tap the video output port, MSI supplies a dongle with component and S-Video outputs. There’s also an S-Video cable in the box alongside a couple of DVI-to-VGA adapters.

On the software front, MSI packs in a number of little applications, including one that allows for automatic graphics card overclocking. Unfortunately, that app requires that you use MSI’s graphics drivers, so you can’t just run the latest ForceWare release. Since Nvidia has pledged to update its graphics drivers every month from here on out, you probably don’t want to lose out on fresh drivers just to use MSI’s overclocking utility.

We’re not particularly enamored with many of the little MSI-branded apps included with the NX8800GTX, but there are a few third-party software titles you might want to play with. CyberLink’s PowerCinema and Power2Go apps come in the box, and you also get a copy of Serious Sam II. The older Serious Sam SE was a staple of graphics card bundles long after the game hit the bargain bin, but Serious Sam II is at least recent enough to be selling online for around $25 still.

As entertaining as Serious Sam II is to play, I’d trade it in a heartbeat for a longer warranty on the NX8800GTX. Parts are covered for three years, but MSI only takes care of labor for the first two, making this the second-shortest warranty of the bunch.

OCZ’s GeForce 8800 GTX


Back in the game

The last time we had an OCZ graphics card in-house for testing was way back in 2001 with the Titan 3. Powered by a then-cutting-edge GeForce3 graphics chip, the Titan 3 was a revelation at the time; you got factory “overclocking,” an aftermarket Blue Orb cooler, and chunky aluminum ramsinks.

My, how things have changed.

OCZ is riding the GeForce 8800 GTX for its return to the graphics game, and the card bears little resemblance to the Titan 3 that defined what an enthusiast graphics card should be so many years ago. The OCZ GeForce 8800 GTX is, in fact, just another re-badged reference design. You won’t find any custom cooling solutions here, and the clock speeds are bone stock. OCZ does say that its cards have been “hand-selected,” though, and that it is committed to delivering “the highest possible headroom for overclocking.” We’ll see how that commitment pans out in our overclocking tests, but first, have a gander at the card.

Yeah, that’s definitely no Titan 3. This shrouded design no doubt offers better airflow than a naked heatsink, but it doesn’t look as good, at least not to those of us with a tendency to get hot and bothered by heatpipes and cooling fins.

In an attempt to give you something to look at through that case window, OCZ adorns the 8800 GTX heatsink with its own sticker depicting a bright green sports car that’s just ambiguous enough to avoid identification. This is the first heatsink sticker we’ve seen make a car analogy, and although bright green is an almost inhumane color for a sports car, at least they didn’t slap a spoiler onto the card. A carbon fiber heatsink shroud would have been trick, though.

What the OCZ card lacks in carbon it makes up with cables. Loads of them. Alongside a pair of DVI-to-VGA adapters and those all-important PCIe power connectors, you also get a video output dongle that provides component and S-Video outputs, and composite and S-Video cables. The composite video cable’s a little out of place, though; both the card and output dongle lack a composite output port, so there’s nothing to plug the cable into.

OCZ may give you a cable you don’t actually need, but they haven’t loaded the box up with software you’re not going to use. A simple driver CD is all that’s included, and that suits us just fine.

What we can’t provide on our own is warranty coverage, so it’s a good thing that OCZ offers a lifetime guarantee with the card. OCZ’s warranty support page doesn’t demand registration within a set time period to take advantage of the lifetime warranty coverage, either. A lifetime warranty doesn’t actually mean that one GeForce 8800 GTX is going to be more reliable than another—especially not with Nvidia’s contract manufacturer building all the cards—but it at least entitles you to a replacement anytime.

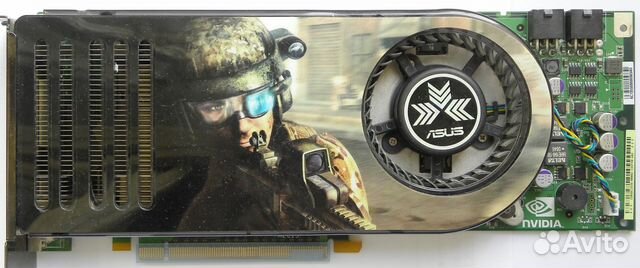

PNY’s XLR8 GeForce 8800 GTS


Affordable simplicity

PNY is pushing its latest GeForce 8800 series graphics cards under a new XLR8 performance brand that manages to capture a couple of well-worn enthusiast clichés with just four characters. If there’s one thing enthusiasts need, it’s more capital Xs and numbers masquerading as letters.

Of course, we tend to scoff at most branding exercises, so it’s not surprising that XLR8 isn’t resonating with us. The fact that the card doesn’t offer much in the way of actual acceleration doesn’t help, either; this is a standard GeForce 8800 GTS running at 513MHz core and 1.58GHz memory. For some reason, those stock clock speeds warrant a “Performance Edition” moniker that PNY proudly scribes across the card’s heatsink sticker.

XLR8 branding is really all there is to the sticker, which looks like it was ripped from an iPod nano commercial. Not that looks matter much. Even with a case window, admirers are going to have a hard time seeing the heatsink sticker when the card is installed in a tower enclosure. Board vendors might as well put their logos along the top edge of the card, since that’s all you’re really going to see once the card is installed in a system.

Interestingly, the PNY card is one of only a few in this round-up to come on a green printed circuit board. Either Nvidia has more than one contract manufacturer, or they’ve switched board colors somewhere along the way.

Sifting through the array of goodies PNY has included in the box reveals a standard collection of adapters and an S-Video cable. A video output dongle is also included that provides component, composite, and S-Video outputs in a single block.

Things are pretty simple on the software front, with a driver CD and download coupon for System Mechanic 7. Since this is the least expensive card of the bunch, we don’t mind the lack of bundled extras. We don’t even mind the fact that there’s an extra step if you want to maximize your warranty coverage. The card is covered by a standard three-year warranty, but users can add two years to that just by registering their card with PNY. Registration effectively brings the XLR8 card’s warranty up to five years, which might not match the lifetime terms offered by some manufacturers, but should last the useful life of the card.

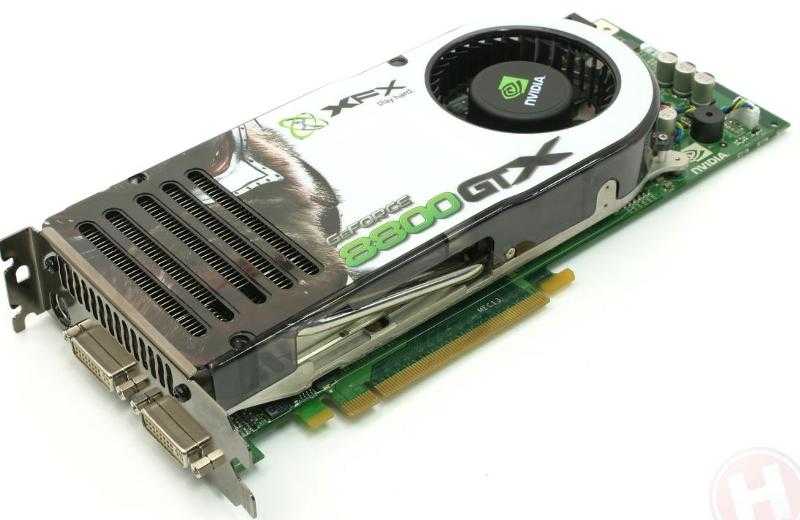

XFX’s GeForce 8800 GTX

PG-13 edition

We’ve poked fun at XFX’s “XXX Edition” cards on more than one occasion, but we have a decidedly more conservative GeForce 8800 GTX in today’s round-up. This particular model runs at stock clock speeds and sits at the bottom of XFX’s GTX range, just below Extreme and XXX versions that boast faster core and memory clocks. All three flavors feature the same G80 GPU, board design, and Nvidia cooler, though, and you’re free to overclock this stock version on your own. We certainly did, and with impressive results.

You’ve seen essentially the same 8800 GTX card four times now, and with the exception of EVGA’s fancy ACS³ aluminum shroud, not much has changed other than the sticker on the heatsink.

XFX’s artistic statement features some sort of armored wolfman. Or maybe it’s just a wolf, minus the man—vents on the cooler make it hard to tell one way or another.

Like all the others in this round-up, the XFX card’s back plate has a classy pewter finish that’s a pleasant departure from the boring black and silver, and gaudy gold, that dominate the PC world.

After seeing a game controller bundled with Foxconn’s GTS, I almost expected one to appear in the XFX GeForce 8800 GTX’s box. After all, it was a bundled game controller that won us over when we compared XFX’s GeForce 7800 GTX Overclocked with a couple of other 7800 GTXs a year and a half ago. Sadly, though, XFX doesn’t offer many extras with its standard GeForce 8800 GTX. You do get a couple of DVI-to-VGA adapters, a component output dongle, and an S-Video cable, but there are no PCIe power adapters in the box. Since the GeForce 8800 GTX has two six-pin PCIe power plugs, you’ll want to make sure your power supply has enough connectors or pick up some power adapters on your own.
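For readers checking whether their power supply is up to the task, a quick power-budget sketch may help. It assumes the nominal PCI Express ceilings of 75W from an x16 graphics slot and 75W per six-pin auxiliary connector; these are specification limits, not measured draw for any card in this round-up, and the function name is our own.

```python
# Nominal PCI Express ceilings (assumed here, not measured):
# an x16 graphics slot and each six-pin auxiliary plug can deliver up to 75W.
SLOT_WATTS = 75
SIX_PIN_WATTS = 75


def max_board_power(six_pin_plugs: int) -> int:
    """Return the in-spec power ceiling, in watts, for a card with the given six-pin plugs."""
    return SLOT_WATTS + six_pin_plugs * SIX_PIN_WATTS


# The GeForce 8800 GTX carries two six-pin PCIe power plugs.
print(max_board_power(2))  # 225
```

That ceiling is only reachable with both auxiliary plugs fed, which is why the connector count on your power supply matters.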

A plain driver CD rounds out the card’s bundle in unspectacular fashion, but XFX does have an ace up its sleeve. While BFG, EVGA, and OCZ boast lifetime warranties, XFX offers what it calls a “double lifetime” warranty on its GeForce 8800 GTX. Double lifetime coverage doesn’t actually last two lifetimes; instead, it covers the second owner of the card, should it ever be resold. Registration is necessary for the card’s original and second owners, but that’s a small price to pay for what continued warranty coverage can add to the card’s resale value down the road.

Our testing methods

Since we’ve narrowed today’s focus to the unique attributes offered by each of Nvidia’s add-in board partners, we won’t spend too much time testing 3D gaming performance. For a more in-depth look at how the performance of the GeForce 8800 GTS and GTX compare to each other and a wide range of competitors, check out our initial review of the cards.

All tests were run at least twice, and their results were averaged, using the following test systems.

| Component | Details |
|---|---|
| Processor | Core 2 Duo E6700 2.67GHz |
| System bus | 1066MHz (266MHz quad-pumped) |
| Motherboard | EVGA 122-CK-NF68 |
| BIOS revision | P24 |
| North bridge | Nvidia nForce 680i SLI SPP |
| South bridge | Nvidia nForce 680i SLI MCP |
| Chipset drivers | ForceWare 9. |
| Memory size | 2GB (2 DIMMs) |
| Memory type | Corsair TWIN2X2048-8500C5 DDR2 SDRAM at 800MHz |
| CAS latency (CL) | 4 |
| RAS to CAS delay (tRCD) | 4 |
| RAS precharge (tRP) | 4 |
| Cycle time (tRAS) | 12 |
| Audio | Integrated nForce 680i SLI MCP/ALC885 with Realtek HD 1.54 drivers |
| Graphics | BFG GeForce 8800 GTS 640MB PCI-E, EVGA GeForce 8800 GTX ACS³ 768MB PCI-E, Foxconn FV-N88SMBD2-ONOC 640MB PCI-E, MSI NX8800GTX 768MB PCI-E, OCZ GeForce 8800 GTX 768MB PCI-E, PNY XLR8 GeForce 8800 GTS 640MB PCI-E, XFX GeForce 8800 GTX 768MB PCI-E |
| Graphics driver | ForceWare 97. |
| Hard drive | Western Digital Caviar RE2 400GB |
| OS | Windows XP Professional |
| OS updates | Service Pack 2 |

Thanks to Corsair for providing us with memory for our testing. 2GB of RAM seems to be the new standard for most folks, and Corsair hooked us up with some of its 1GB DIMMs for testing.

Also, all of our test systems were powered by OCZ GameXStream 700W power supply units. Thanks to OCZ for providing these units for our use in testing.

We used the following versions of our test applications:

- Futuremark 3DMark06 Build 1.02

- F.E.A.R. 1.08

- The Elder Scrolls IV: Oblivion 1.1

The test systems’ Windows desktop was set at 1280×1024 in 32-bit color at an 85Hz screen refresh rate. Vertical refresh sync (vsync) was disabled for all tests.

All the tests and methods we employed are publicly available and reproducible. If you have questions about our methods, hit our forums to talk with us about them.
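Our averaging rule is simple, but for anyone reproducing these numbers, here is a minimal sketch of it. The function name is hypothetical, and the scores in the usage line are made-up placeholders, not results from this article.

```python
from statistics import mean


def averaged_score(runs: list[float]) -> float:
    """Average repeated runs of one benchmark; each test must be run at least twice."""
    if len(runs) < 2:
        raise ValueError("each test must be run at least twice")
    return mean(runs)


# Placeholder frame rates from two hypothetical runs, not measured data.
print(averaged_score([60.0, 62.0]))  # 61.0
```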

Graphics performance

We’ve narrowed our performance testing to 3DMark06’s Shader Model 3.0 tests and F.E.A.R.’s built-in performance benchmark. Both were run at 1920×1440—the highest resolution supported by our test monitor—with 4X antialiasing and 16X anisotropic filtering.

Obviously, the GeForce 8800 GTX cards have a considerable lead over the GTS models. What’s more interesting, however, is how far the higher-clocked models manage to outpace their competition. The EVGA ACS³ gets more out of its clock speed advantage over the rest of the GTX field than the Foxconn does over the other GTS cards. That’s notable because it’s the Foxconn card that actually enjoys the greater clock speed jump, at least percentage-wise, over its stock-clocked compatriots.
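Comparing clock speed jumps percentage-wise means dividing each increase by its own baseline, so the same MHz bump counts for more on a lower-clocked card. A minimal sketch; the function name is our own, and the 500MHz and 575MHz baselines are illustrative round numbers.

```python
def percent_gain(base_mhz: float, new_mhz: float) -> float:
    """Clock increase as a percentage of the baseline clock."""
    return (new_mhz - base_mhz) / base_mhz * 100.0


# An identical 50MHz bump on two illustrative baselines.
print(round(percent_gain(500, 550), 1))  # 10.0
print(round(percent_gain(575, 625), 1))  # 8.7
```

Applied to the PNY card, which runs its core at 513MHz out of the box and reached 653MHz in our overclocking tests, `percent_gain(513, 653)` works out to roughly 27.3%.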

Overclocking

The handy automatic overclocking utility built into Nvidia’s graphics drivers and then relocated to its nTune system utility doesn’t seem to be working properly with the GeForce 8800 series, so we had to kick it old-school with manual slider manipulation and loads of trial-and-error testing. Each of our overclocked configurations had to loop successfully through three iterations of 3DMark’s Shader Model 3.0 tests at 1920×1440 with 4X antialiasing and 16X aniso, and then endure ten minutes of Oblivion at the same graphics settings.
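Our trial-and-error routine was manual, but its logic amounts to a simple step search: raise the clock, rerun the stability checks, and keep the last setting that passed. The sketch below is a hypothetical illustration only; `is_stable` stands in for the human-run 3DMark06 and Oblivion checks, and no real nTune or driver API is implied.

```python
from typing import Callable


def find_max_stable_clock(
    start_mhz: int,
    step_mhz: int,
    limit_mhz: int,
    is_stable: Callable[[int], bool],
) -> int:
    """Raise the clock in fixed steps until the stability check fails.

    `is_stable` is a hypothetical callback standing in for the manual checks
    (three loops of 3DMark06's SM3.0 tests, then ten minutes of Oblivion).
    `start_mhz` is assumed to be stable; the highest passing clock is returned.
    """
    best = start_mhz
    clock = start_mhz + step_mhz
    while clock <= limit_mhz and is_stable(clock):
        best = clock
        clock += step_mhz
    return best


# Simulated check that passes up to 653MHz and fails above it.
print(find_max_stable_clock(513, 10, 800, lambda mhz: mhz <= 653))  # 653
```

Finer steps trade more test runs for a closer estimate of each card’s ceiling.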

We were able to hit the following core and memory clock speeds with each card (doubling the memory clock speed gives you the effective memory clock):

- BFG GeForce 8800 GTS — 653MHz core, 962MHz memory

- EVGA GeForce 8800 GTX ACS3 — 643MHz core, 1048MHz memory

- Foxconn FV-N88SMBD2-ONOC — 650MHz core, 1060MHz memory

- MSI NX8800GTX — 629MHz core, 1053MHz memory

- OCZ GeForce 8800 GTX — 622MHz core, 1047MHz memory

- PNY XLR8 GeForce 8800 GTS — 653MHz core, 1059MHz memory

- XFX GeForce 8800 GTX — 659MHz core, 1060MHz memory

The XFX GeForce 8800 GTX managed a higher core clock speed than any other card, by 6MHz, and it also shared the memory clock crown with Foxconn’s FV-N88SMBD2-ONOC, albeit by only 1MHz over PNY’s GTS. Note that, with only a couple of exceptions, all the cards hit about the same core and memory clock speeds, regardless of their GTS or GTX designation.
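The results list is easy to tabulate. This sketch doubles each memory clock to get the effective rate, as noted before the list, and computes the leaders’ margins over the runner-up for core and memory clocks. The helper names are our own.

```python
# Overclocked results from the list above: (card, core MHz, memory MHz).
RESULTS = [
    ("BFG GeForce 8800 GTS", 653, 962),
    ("EVGA GeForce 8800 GTX ACS3", 643, 1048),
    ("Foxconn FV-N88SMBD2-ONOC", 650, 1060),
    ("MSI NX8800GTX", 629, 1053),
    ("OCZ GeForce 8800 GTX", 622, 1047),
    ("PNY XLR8 GeForce 8800 GTS", 653, 1059),
    ("XFX GeForce 8800 GTX", 659, 1060),
]


def effective_memory_mhz(memory_mhz: int) -> int:
    """Effective memory clock: the reported memory clock doubled."""
    return memory_mhz * 2


def lead_over_runner_up(values: list[int]) -> int:
    """Gap between the highest value and the next-highest distinct value."""
    top, second = sorted(set(values), reverse=True)[:2]
    return top - second


for card, core, memory in RESULTS:
    print(f"{card}: {core}MHz core, {effective_memory_mhz(memory)}MHz effective memory")

core_clocks = [core for _, core, _ in RESULTS]
memory_clocks = [memory for _, _, memory in RESULTS]
print(lead_over_runner_up(core_clocks))    # 6
print(lead_over_runner_up(memory_clocks))  # 1
```

Using distinct values means two cards sharing the top memory clock still yield a meaningful gap to the next card down.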

As always, overclocking success is never guaranteed and often comes down to the luck of the draw. Because Nvidia takes care of the manufacturing for all of these cards, there probably isn’t much the add-in board partners can do to ensure greater overclocking success.

Pushing these cards to their limits changes how they stack up a little, with the factory “overclocked” cards no longer sitting in the lead. These results suggest that the overclocking potential of stock-clocked cards isn’t being eroded by cherry-picking GPUs for faster models.

Noise levels

Noise levels were measured using an Extech 407727 Digital Sound Level meter placed along the edge of the motherboard 1″ from the graphics card and out of the direct path of airflow. We recorded noise levels after 10 minutes idling at the Windows desktop, and again after 10 minutes rendering this stunning scene from Oblivion at 1920×1440 with 4X antialiasing and 16X anisotropic filtering, and all the in-game eye candy cranked. Cards were tested at both their default and overclocked speeds.

At idle, only a decibel separates the quietest card from the loudest. As one might expect, the overclocked cards tend to run a little louder, but the GeForce 8800 series cooler is so quiet at idle that it’s hard to tell.
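Decibels are logarithmic, so even small numeric gaps represent real differences in sound intensity. A minimal sketch of the standard conversion from a level difference in dB to an intensity ratio; the function name is our own.

```python
def intensity_ratio(delta_db: float) -> float:
    """Sound-intensity ratio for a level difference of delta_db decibels."""
    return 10 ** (delta_db / 10)


print(round(intensity_ratio(1.0), 2))   # 1.26
print(round(intensity_ratio(10.0), 2))  # 10.0
```

By this measure, a one-decibel spread corresponds to roughly a 26% difference in sound intensity.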

Things spread out a little under load, and this time it’s the GTS cards that prove to be the quietest. What’s particularly interesting here is that even the overclocked GTS cards are running quieter than the best of our GTX crowd. GTX noise levels are reasonably consistent at idle, regardless of whether the cards are overclocked.

Power consumption

System power consumption was tested, sans monitor and speakers, at the wall outlet using a Watts Up power meter. We used the same idle and load conditions as our noise level tests.

It turns out that some GeForce 8800 series cards draw a little less power than others. The GTS models are predictably the most frugal when it comes to power consumption, but overclocking also makes a difference.

GPU temperature

We tracked GPU temperatures using Nvidia’s nTune system utility, which can log temperatures to a text file. Again, we used the same idle and load conditions as our noise level tests.

Perhaps the most striking thing about these results is the fact that none of the cards saw much of a change in GPU temperature from idle to load. That’s curious to say the least, and it’s not like nTune can’t track changes in temperature—the app had no problem logging higher GPU temperatures when the cards were overclocked.

Since these cards are using the same graphics chips and reference coolers, we can’t draw too many conclusions beyond the fact that G80 operating temperature seems to vary from chip to chip. EVGA’s ACS³ cooling shroud doesn’t appear to have a significant impact on GPU temperatures, either.

Update: Several readers have written in to tell us that they’re seeing much higher load temperatures with GeForce 8800 series graphics cards when monitored by third-party apps like RivaTuner than we saw with Nvidia’s nTune system utility. It appears that nTune’s GPU temperature tracking isn’t working properly with the GeForce 8800 series, and we’ve contacted Nvidia regarding the issue.

Conclusions

If you’re struggling to decide between a GeForce 8800 GTS and a GTX, I suggest reading through our more detailed coverage of how the models compare. In terms of performance per dollar, both offer exceptional value; it’s just a question of how much you want to spend, and what resolution you want to run.

Then there’s the matter of selecting a board vendor. You’re essentially getting the same reference hardware regardless of which you choose. That kills diversity in the market a little, but it also implies consistent production quality. Picking favorites then becomes a matter of comparing warranties, pricing, extras, and clock speeds. I’ve selected a couple of stand-outs from the cards we’ve looked at today.

On the GTS front, I was first torn between the BFG and Foxconn cards. The Foxconn’s factory “overclocking” and bundled game controller weigh heavily in its favor, and it is $10 cheaper than the BFG. However, Foxconn’s warranty spoils the deal—a measly two years of coverage in a market filled with lifetime warranties is a joke. So surely the BFG, with its lifetime warranty, excellent tech support, and freebie t-shirt and mouse feet, would win me over. But not quite. You see, the BFG card is also the most expensive GeForce 8800 GTS of the bunch, but it runs at stock speeds, and our card’s memory didn’t overclock nearly as well as that of the others.