| GPU / Test system | 1920×1080 Medium Preset | 1920×1080 High Preset AA:SM | 1920×1080 Very High Preset AA:SM |
| --- | --- | --- | --- |
| NVIDIA GeForce RTX 2080 Ti (Desktop), MSI GeForce RTX 2080 Ti, i9-9900K | 179 (45.2min, 70.8P0.1, 113P1) fps ∼30% | 106 (44.4min, 47.7P0.1, 59.7P1) fps ∼23% | 71.3 (37.9min, 40.7P0.1, 45.3P1) fps ∼20% |
| NVIDIA GeForce RTX 2080 (Desktop), MSI GeForce RTX 2080, i9-9900K | 165.6 (17.1min, 64.1P0.1, 80.8P1) fps ∼28% | 96.4 (11.9min, 37P0.1, 47.1P1) fps ∼21% | 61.6 (31.2min, 31.7P0.1, 35P1) fps ∼17% |
| NVIDIA GeForce RTX 2080 Mobile, MSI GT76 Titan DT 9SG, i9-9900K | 160 (28.5min, 42.3P0.1, 60. … | 89.3 (29.3min, 33.9P0.1, 48P1) fps ∼19% | 61.1 (29.7min, 33.7P0.1, 41.5P1) fps ∼17% |
| NVIDIA GeForce RTX 2070 (Desktop), MSI GeForce RTX 2070, i9-9900K | 153 (14.1min, 38.6P0.1, 50.9P1) fps ∼26% | 81.4 (20.6min, 40.3P0.1, 57.8P1) fps ∼17% | 56.3 (37.4min, 41.1P0.1, 44P1) fps ∼16% |
| NVIDIA GeForce RTX 2060 (Desktop), MSI GeForce RTX 2060, i9-9900K | 138 (61.5min, 66.9P0.1, 104P1) fps ∼23% | 70.8 (27.1min, 38.1P0.1, 49P1) fps ∼15% | 49.6 (33.6min, 34.9P0.1, 40.1P1) fps ∼14% |
| NVIDIA GeForce RTX 2070 Mobile, MSI GP65 Leopard 9SF, i7-9750H | 133 (54.9min, 61.3P0.1, 94. … | 70.8 (15.9min, 29.9P0.1, 33.7P1) fps ∼15% | 50.2 (32.7min, 34P0.1, 41.2P1) fps ∼14% |
| NVIDIA GeForce GTX 1080 Mobile, Alienware 17 R4, i7-7820HK | 126 (22.2min, 38.4P0.1, 62P1) fps ∼21% | 72.9 (35.3min, 38.2P0.1, 45.7P1) fps ∼16% | 47.4 (21.9min, 29P0.1, 33.9P1) fps ∼13% |
| NVIDIA GeForce RTX 2060 Mobile, MSI GE65 Raider 9SE, i7-9750H | 112 (20.7min, 24.8P0.1, 28.5P1) fps ∼19% | 61.1 (10.4min, 30.1P0.1, 46.9P1) fps ∼13% | 46.5 (33.6min, 35.1P0.1, 38.9P1) fps ∼13% |
| NVIDIA GeForce GTX 1070 Mobile, Asus G752VS, 6820HK | 102 (26min, 26.5P0.1, 30.4P1) fps ∼17% | 62. … | 40.1 (23.3min, 23.8P0.1, 28.7P1) fps ∼11% |
| NVIDIA GeForce GTX 1660 Ti Mobile, MSI GP65 Leopard 9SD, i7-9750H | 99.5 (22.3min, 22.7P0.1, 24.8P1) fps ∼17% | 55.1 (17.3min, 34.8P0.1, 40.9P1) fps ∼12% | 41.7 (28.8min, 32.2P0.1, 33.9P1) fps ∼12% |
| AMD Radeon RX 5500M, MSI Alpha 15, R7 3750H | 76.5 (27.1min, 29.5P0.1, 36.1P1) fps ∼13% | 42.5 (18.8min, 21.3P0.1, 26.6P1) fps ∼9% | 31.5 (17.9min, 20.7P0.1, 24.6P1) fps ∼9% |
| NVIDIA GeForce GTX 1650 Ti Mobile, MSI GP75, i7-10750H | 69.5 (15.4min, 16P0.1, 17.6P1) fps ∼12% | 38.1 (25.9min, 27. … | 24.8 (15.7min, 17P0.1, 20.2P1) fps ∼7% |
| NVIDIA GeForce GTX 1650 Mobile, MSI GL75, i7-10750H | 62.8 (13.7min, 14.3P0.1, 15.8P1) fps ∼11% | 35.1 (24.2min, 26P0.1, 28.4P1) fps ∼8% | 25.9 (11.4min, 14.3P0.1, 21.1P1) fps ∼7% |
| NVIDIA GeForce MX350, MSI Prestige 14, i7-10710U | 28.4 (6.9min, 7.02P0.1, 8.03P1) fps ∼5% | 15.1 (10.7min, 11P0.1, 12.1P1) fps ∼3% | 11.7 (7.57min, 7.82P0.1, 8.74P1) fps ∼3% |
| NVIDIA GeForce MX330, MSI Prestige 14, i7-10510U | 22.8 (4.99min, 5.02P0.1, 5.7P1) fps ∼4% | 11.9 (7.5min, 8.11P0.1, 9.5P1) fps ∼3% | 8.86 (5. … |
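The result cells above share one compact notation: an average frame rate, then three values in parentheses, then a percentage. The sketch below shows one way to split such a cell into numbers. It assumes that `min` is the minimum frame rate, that `P0.1` and `P1` are the 0.1% and 1% percentile lows, and that the trailing percentage is a relative score; the source does not define these terms, and the names `FpsCell` and `parse_cell` are made up for illustration.

```python
import re
from typing import NamedTuple, Optional


class FpsCell(NamedTuple):
    """One benchmark cell; the field meanings are assumptions, see the lead-in."""
    avg: float
    minimum: float
    p0_1: float
    p1: float
    relative_pct: int


# Matches e.g. "179 (45.2min, 70.8P0.1, 113P1) fps ∼30%".
# \u223c is the tilde operator used in the table; a plain "~" is accepted too.
_NUM = r"\d+(?:\.\d+)?"
_CELL = re.compile(
    rf"(?P<avg>{_NUM})\s*"
    rf"\((?P<min>{_NUM})min,\s*"
    rf"(?P<p01>{_NUM})P0\.1,\s*"
    rf"(?P<p1>{_NUM})P1\)\s*fps\s*"
    r"[\u223c~](?P<pct>\d+)%"
)


def parse_cell(text: str) -> Optional[FpsCell]:
    """Return the parsed cell, or None if the cell is truncated or malformed."""
    match = _CELL.search(text)
    if match is None:
        return None
    return FpsCell(
        avg=float(match.group("avg")),
        minimum=float(match.group("min")),
        p0_1=float(match.group("p01")),
        p1=float(match.group("p1")),
        relative_pct=int(match.group("pct")),
    )
```

Truncated cells, such as the first result for the RTX 2080 Mobile, deliberately return `None` instead of a guessed value.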

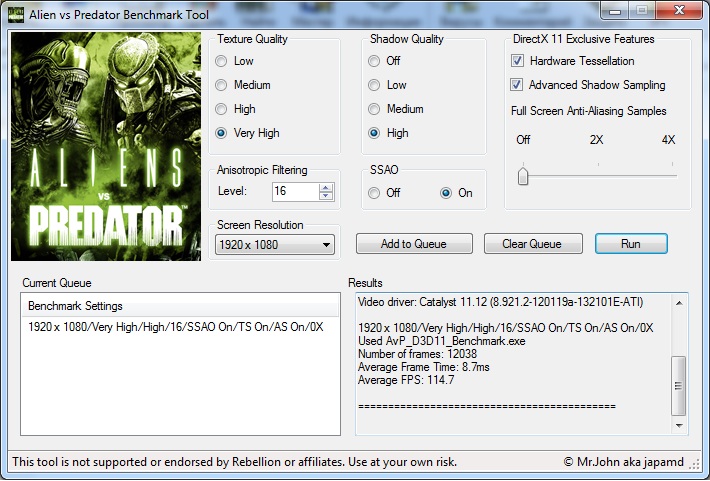

Crysis 2 Benchmark Tool (new DX11 version)

Crysis 2 Benchmark Tool

Quote:

«Adrenaline Crysis 2 Benchmark Tool» offers a quick and easy way for measuring your hardware performance using Crytek’s Crysis 2. Supporting several maps, image quality settings and resolutions with a simple and intuitive interface, several tests can be executed sequentially, making life easier for hardcore users and professional reviewers.

The tool also supports Ranked mode tests, where quality settings are verified against changes in order to allow a more fair comparison between several hardware configurations. The results can be sent to Adrenaline BenchZone, where you can share and compare your results against several other results of BenchZone users, using an exclusive ranking system classified by global result and by video card used.

Key features:

•Portuguese and English versions

•Supports several queued tests

•Supports image quality settings (HIGH, VERY HIGH, EXTREME and ULTRA)

•Supports Anti-Aliasing filters (experimental)

•Supports 3 timedemos (Central Park, Downtown and Times Square)

Minimum Requirements:

•Windows XP SP2 or Windows Vista/Windows 7 (32-bit or 64-bit)

•Microsoft .NET Framework 2.0 or newer

•Microsoft C++ 2008 Runtime SP2 or newer

•Requires game update to patch 1.9 (DirectX 11)

Notes:

•The tool is being updated regularly to fix reported bugs

•Requires Crysis 2 (full game)

•Requires admin rights (run the program as administrator)

Source and download link 1

Source and download link 2

Updates

======

1.0.0.13 — 09/28/2011

— Fix for login problem due to domain change

1.0.1.12 — 07/15/2011

— Added quality presets for BenchZone’s Hall of Fame (Performance, Quality and Xtreme)

1.0.1.11 — 07/08/2011

— Fix for multi-display resolutions (AMD Eyefinity, NVIDIA Surround)

1.0.1.10 — 07/07/2011

— Workaround for multi-GPU systems issues (flickering/multi-gpu not working) found on build 1.0.1.9

1.0.1.9 — 07/04/2011

— Added new internal verification of quality settings.

— Added extra procedures for restoration of files after the benchmark tool is terminated.

1.0.1.8 — 07/03/2011

— New benchmark engine handling

1.0.1.7 — 07/02/2011

— Removed external dependency of a program’s module

— Removed timedemo Downtown/Autotest

1.0.1.6 — 07/02/2011

— Added support for game patch 1.9.

— Introduced support for DX11 and Ultra quality mode.

— New interface.

— Improved timedemos.

— Added support for submitting results to Adrenaline BenchZone.

— Improved Steam support (special thanks to Guilherme Sollero aka SHAFT for his help)

— Version numbering changed to full version.

0.15 — 15/04/2011

— New installer.

— New timedemos.

— Removed unavailable AA modes (8xQ, etc.)

— The program now checks for newer version when it’s started.

0.14 — 14/04/2011

— Savegames created during the benchmark are removed after the test finishes.

0.13 — 14/04/2011

— Autoexec.cfg was kept even if it didn’t exist before. The program now deletes the file so the game doesn’t enter benchmark mode the next time it’s opened.

0.12 — 13/04/2011

— AA modes changed to reflect changes from Crysis/Crysis Warhead.

0.11 — 13/04/2011

— Program title changed.

— Fixed game config path.

0.10 — 13/04/2011

— First release.

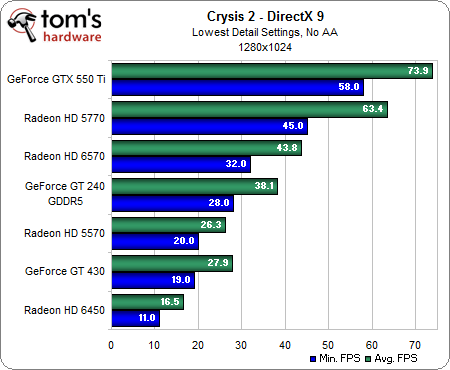

But Can It Run Crysis? 10 Years Later — Tom’s Hardware 2017

A Decade Of GPUs, Benchmarked

“But can it run Crysis?” It’s a question that’s still asked jokingly when new graphics cards are introduced, even if the answer nowadays is almost always affirmative. But exactly 10 years ago, experiencing Crysis in its full glory simply wasn’t possible. Resolutions as low as 1680×1050 were enough to overwhelm the likes of Radeon HD 3870 and the once-mighty GeForce 8800 GTX. Let that sink in. The fastest graphics cards available were too slow for the game’s most taxing settings on relatively mainstream monitors.

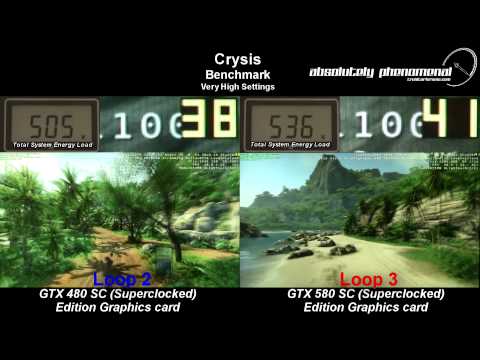

Subsequent GPUs continued struggling for playable frame rates, and it took years before the averages approached what most of us would consider acceptable. By the time Tom’s Hardware stopped testing with Crysis, we were well into 2010, GeForce GTX 480 was taking on Radeon HD 5870, and not a single card averaged more than 30 FPS at 2560×1600 in this game.

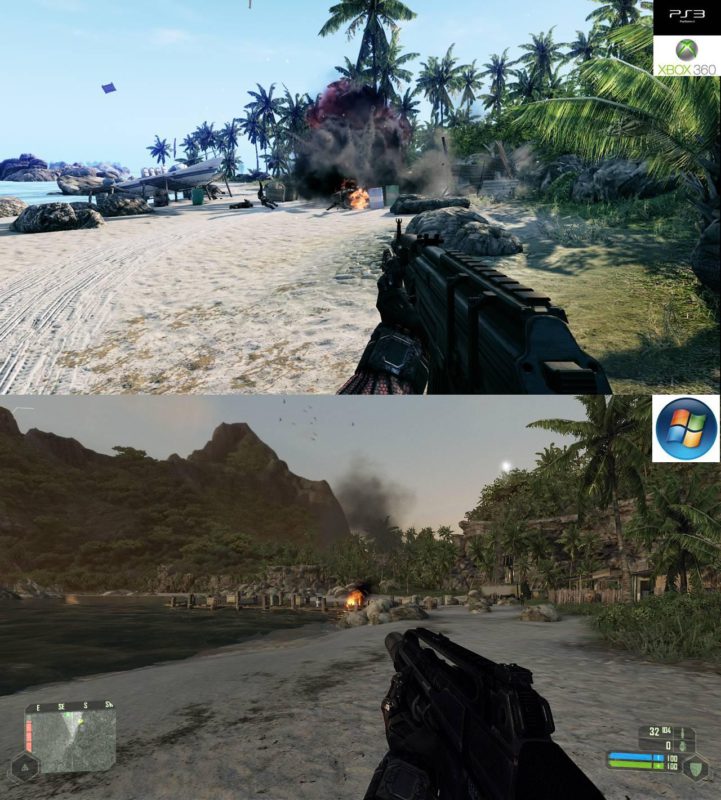

Why emphasize Crysis’ top quality presets? Because the game still looks gorgeous when you lock its options to Very High. Let’s compare:

Low/Medium

Selecting the Low preset destroys texture quality, disables procedurally-drawn vegetation, eliminates shadows, handicaps the physics engine, applies a static sky, imposes unrealistic water, dials back effects quality to a minimum, and disables depth of field. This isn’t how we’d want to play Crysis, for sure.

As you can see, just one step up from Low to Medium changes Crysis’ ambiance completely.

Medium/High

Perhaps the most dramatic difference between Medium and High quality is the former’s lack of motion blur. The effect is especially apparent in this captured frame, where that rock in the ocean and the trees behind it pan left to right quickly. HDR lighting is missing from the Medium quality screenshot, creating a universally brighter and less authentic scene.

Memory use jumps more going from Medium to High quality than any other transition available in Crysis’ settings.

High/Very High

The Very High preset has a subtler effect on quality than going from Low to Medium or Medium to High. However, a side-by-side comparison reveals clear differences in lighting, shadows, water rendering, foliage density, and texture quality.

DX9/DX10

And how about the difference between DirectX 9 and DirectX 10? You’d probably have a hard time distinguishing one from the other without the in-game info. And the performance delta that once existed between modes is far less prevalent after the work Crytek did to patch Crysis in the months following its introduction.

All of the benchmarks we’re running today invoke DirectX 10 in a 64-bit environment.

How We Tested Crysis

We threw around a number of different ideas for the 10-year anniversary of Crysis, and over the next few days, you’re going to see us benchmark the game in a couple of ways. To start, though, we’re taking flagship graphics cards from AMD and Nvidia spanning the last decade, and comparing their performance.

On the AMD side, this gives us:

- Radeon HD 3870

- Radeon HD 4870

- Radeon HD 5870

- Radeon HD 6970

- Radeon HD 7970

- Radeon R9 290X

- Radeon R9 Fury X

- Radeon RX 580

- Radeon RX Vega 64

We had most of these cards in our library. The couple we lacked were quickly snatched up from eBay auctions.

Next, we gathered up a gaggle of GeForces:

- GeForce 8800 GTX

- GeForce 9800 GTX

- GeForce GTX 280

- GeForce GTX 480

- GeForce GTX 580

- GeForce GTX 680

- GeForce GTX 780 Ti

- GeForce GTX 980 Ti

- GeForce GTX 1080 Ti

Again, we had most of these. Gaps in our line-up were graciously filled by Nvidia, which pulled from its own library of cards.

While we debated pairing each GPU to its era-appropriate platform, that would have over-complicated our little exhibition, simultaneously adding variables to our graphics benchmarks. So, we dropped all of these cards onto our MSI Z270 Gaming Pro Carbon motherboard, which was recently upgraded to host a Core i7-7700K CPU. The processor is complemented by G.Skill’s F4-3200C14Q-32GTZ memory kit. Crucial’s MX200 SSD remains, as does the Corsair h210i cooler and be quiet! Dark Power Pro 10 850W power supply.

MSI Z270 Gaming Pro Carbon

Intel Core i7-7700K

G.Skill F4-3200C14Q-32GTZ

Crucial MX200 1TB

Corsair h210i

be quiet! Dark Power Pro 10 850W

This platform runs the latest version of Windows 10 with all of its updates and patches installed. And that’s a bit of an issue when you’re trying to test graphics cards that stopped receiving driver updates more than four years ago, before Windows 10’s time. Nevertheless, we used a bit of creative engineering to get as many AMD and Nvidia cards as possible working under the latest drivers.

From there, we improvised by forcing AMD’s older boards to accept known-good driver packages. For instance, Catalyst 13.4 can be pushed through the Device Manager, even if the installer won’t allow you past a certain point. Nvidia’s support for legacy products is far superior. GeForce 342.01 adds official Windows 10 support for Tesla-based cards, including our GeForce 8800 GTX, 9800 GTX, and GTX 280.

We would have loved to use the old Crysis Benchmarking Tool for faster testing, but a desire for results at 1920×1080, 2560×1440, and 3840×2160 forced us to control each run by editing the benchmark_gpu.cfg file and running Crytek’s built-in GPU metric. Why pick that test, specifically, when other timedemos yield such different results? Even with a GeForce GTX 1080 Ti installed, the most taxing CPU test pulled frame rates into single digits at times, making it clear that we wouldn’t be isolating graphics. Fly-bys are rarely representative benchmarks. But in this case, the Island GPU benchmark was commonly utilized during Crysis’ heyday, so we’ll stick with it for comparing graphics cards to each other.

Chris Angelini is an Editor Emeritus at Tom’s Hardware US. He edits hardware reviews and covers high-profile CPU and GPU launches.

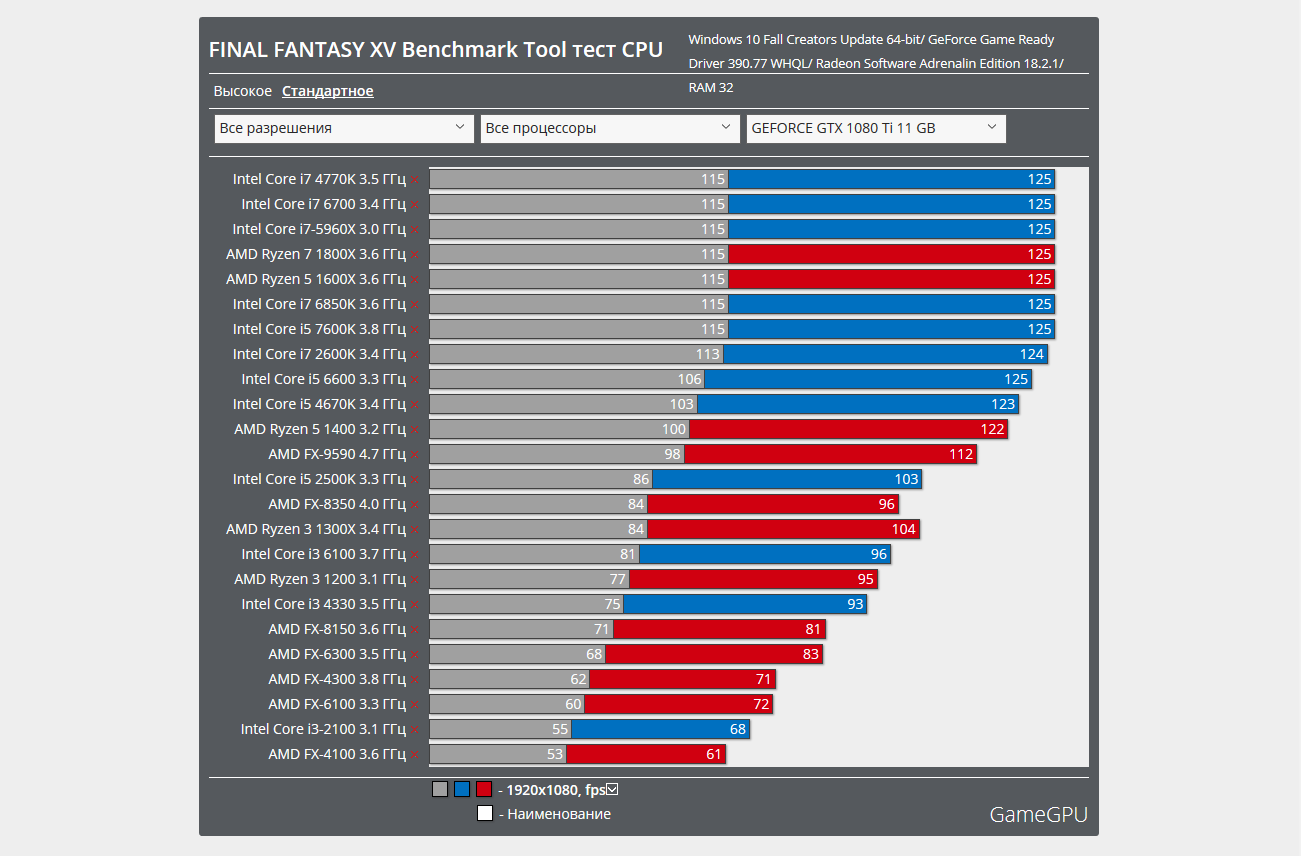

Processor Intel Core i7 980X — six-core extreme / Processors and memory

Testing

Let’s start, by tradition, with the Everest Ultimate synthetic test package.

The first results are alarming: without overclocking, the Core i7 980X loses to the Core i7 870 running at a lower frequency. However, this is understandable: due to the lower frequency of Uncore, the latency of the memory subsystem increased, which led to such low results.

In the memory write test, the Core i7 980X is already behind both competitors, and no amount of overclocking lets it catch up with them. You will have to come to terms with this: working with memory cannot be called the strong point of the new processor.

Copying to memory once again confirms the above. The Core i7 980X again lags behind the rest of the participants, and overclocking does not change the situation.

The high latency partly explains the slow performance of the memory subsystem. When overclocked, the Core i7 980X almost catches up with the youngest processor for the LGA 1366 platform, but it is still far from the faster Core i7 870.

But enough of the sad stuff: CPU Queen is the first test to show the huge advantage of the six-core Core i7 980X over the rest. The large gap comes from both the higher frequency and the increased number of cores. An almost threefold lead is, of course, possible only in synthetic tests, but it is gratifying all the same.

In this test, the Core i7 980X no longer leads the other participants by a multiple, but it still stands out clearly. Overclocking does not add much: the processor gets most of its gain from the increased number of physical and virtual cores.

And again, almost a twofold advantage in favor of the protagonist of testing. Overclocking increases the gap a little more, but does not play a key role.

Now we get to the fun part: the additional AES-NI instructions show what they can do. The top processor for the LGA 1156 platform is almost 15 times slower than the Core i7 980X. Think about it: a 15-fold gap is more than you would see even when comparing any other modern processor with an aging Pentium 4. If all encryption-related tasks get the same speedup, running them will barely burden the system. Let’s hope that Intel adds the new instruction set to all of its new processors, which would give them a significant advantage in encryption workloads.
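Whether a given machine has these instructions can be checked in software. As a minimal sketch, assuming a Linux system on x86, where the kernel reports AES-NI as the `aes` entry on the `flags` line of `/proc/cpuinfo`, the check could look like this. The function name `has_cpu_flag` is made up for illustration, and other operating systems expose this information differently.

```python
def has_cpu_flag(cpuinfo_text: str, flag: str = "aes") -> bool:
    """Return True if `flag` appears as a whole word on the first 'flags' line.

    `cpuinfo_text` is expected to be the contents of /proc/cpuinfo (Linux, x86).
    """
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            # Split on whitespace so "aes" does not match "vaes".
            return flag in value.split()
    return False


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo", encoding="utf-8") as handle:
            print("AES-NI reported:", has_cpu_flag(handle.read()))
    except OSError:
        print("/proc/cpuinfo is not available on this system")
```

Only the first `flags` line is inspected, which assumes that all cores report the same feature set.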

A twofold lead, of course, does not look so good against the background of a fifteenfold lead obtained in the CPU AES test, but it also says a lot about the performance of the Core i7 980X processor in comparison with younger models.

Similar results are obtained in the CPU Mandel and CPU SinJulia tests — about a twofold superiority of the Core i7 980X over its rivals.

The CineBench R10 synthetic benchmark is not as kind to the new processor as Everest, but the Core i7 980X still outperforms the other processors in it by a noticeable margin. Overclocking widens the gap significantly, since CineBench results depend heavily on processor frequency.

The Fritz Chess benchmark does not support more than eight threads, so the advantage of the additional cores in the Core i7 980X is effectively cancelled out. However, the higher frequency and large L3 cache do their part, and the Core i7 980X leaves the competition far behind. The results at 4.2 GHz are slightly higher, but the cache certainly played the decisive role.

The WPrime test also shows a significant superiority of the Core i7 980X over the rest of the test participants. At the same time, a significant increase in frequency during overclocking does not greatly affect the results — the processor wins due to additional cores and an increased amount of L3 cache.

In the built-in benchmark of the WinRAR archiver, the six-core processor lags behind its rivals at stock frequencies because of its slow memory performance, but overclocking lets it leave the Core i7 920 and Core i7 870 far behind.

The new processor also handles video encoding better than its predecessors, but in the x264 Benchmark the difference is far smaller than in the previous tests, and the gain from overclocking falls within the margin of error.

The popular 3DMark Vantage benchmark shows a noticeable advantage for the Core i7 980X in the overall score. The lead is not a multiple here, but it was with the new processor that new world records were set in this discipline.

The situation is somewhat different when it comes to evaluating the performance of the processor: the Core i7 980X again outperforms older models by several times. This does not greatly affect the overall result, since the video card plays a key role in it.

Let’s move on to real applications, in this case games. In Far Cry 2 at optimal settings and low resolution, the processor at stock frequencies is only slightly ahead of its rivals, despite the higher clock frequency and larger cache. But overclocking puts everything in its place, and the Core i7 980X pulls 30% ahead, which is no small margin.

If you set high settings for detail and image quality, as well as increase the resolution, then the difference between Gulftown and quad-core processors will be the same 30%, and overclocking increases this difference to one and a half times.
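The review mixes two ways of stating a lead: as a percentage ("30%") and as a multiple ("one and a half times"). The short sketch below converts between the two. The frame rates in it are hypothetical values picked only to reproduce those two figures; they are not measurements from this review, and the function names are made up for illustration.

```python
def lead_percent(fps: float, baseline_fps: float) -> float:
    """Lead of `fps` over `baseline_fps`, in percent of the baseline."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (fps / baseline_fps - 1.0) * 100.0


def lead_ratio(percent: float) -> float:
    """Convert a percentage lead into a multiple of the baseline."""
    return 1.0 + percent / 100.0


# Hypothetical frame rates, chosen only to illustrate the arithmetic.
baseline = 50.0
for label, fps in (("stock", 65.0), ("overclocked", 75.0)):
    pct = lead_percent(fps, baseline)
    print(f"{label}: {pct:.0f}% ahead, i.e. {lead_ratio(pct):.2f} times the baseline")
```

A 30% lead is a factor of 1.3, and "one and a half times" corresponds to a 50% lead.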

In the shooter Crysis, now in its third year of service as a benchmarking tool, the Core i7 980X is just three frames per second ahead of the Core i7 870 at low resolution. Overclocking adds another frame per second to this gap, but such a small difference will not be noticeable in play.

When the resolution is increased, the Core i7 980X at stock frequencies even loses to the Core i7 870; high memory latency again seems to be the cause. Crysis is an old game, written long before twelve threads were a consideration, so it is natural that the extra cores of Intel’s Gulftown bring no particular advantage here.

The strategy game World in Conflict can be called the most CPU-sensitive of all the games we chose. At low resolution, the Core i7 980X without overclocking outperforms its rivals by about the same 30%, and overclocking increases the lead significantly, to about 50%.

In high resolution with better detail, that is, in fact, when switching from DirectX 9 to DirectX 10, the advantage of the new processor is almost lost — the difference of three frames per second is very difficult to notice. Overclocking adds another two frames per second, but this is very insignificant. Obviously, the performance in this game at high quality settings is limited by the capabilities of the video card.

Conclusion

The suggested price for this six-core processor is $999. The same price goes for the Intel Core i7 975 processor, based on a less advanced 45nm process, running at the same frequency, but with just four cores and less L3 cache.

But the test results of the Intel Core i7 980X speak for themselves: in synthetic applications, the gain from additional cores, increased L3 cache, and a new set of instructions is huge. In real applications, which are not as well optimized for multithreading, the advantages of the new processor are less pronounced but still substantial. Another point in favor of the Intel Core i7 980X is that it ships with a good cooler that can keep the processor stable even under significant overclocking.

Benchmarking: Essence, Stages and Tools

Effective business development in today’s environment of fierce competition «on all fronts» is possible only with the experience of leading companies. Studying the achievements of leaders, comparing them with your own, and finally implementing the results obtained is the key to overcoming the crisis. In economics and practice, this process is called benchmarking. Let us consider in detail the mechanism that opens up a second wind to any business.

The theory has not yet settled on a single set of definitions. Each researcher interprets the scope of benchmarking in their own way, but it is generally agreed that the concept was born in Japan in the 1950s. After the Second World War, this small country needed, like no other, to rebuild from ruins, and it went on to become one of the leading economies in the world.

Despite the fact that the term “benchmarking” was methodically specified in 1972 by American scientists, its interpretation continues to develop.

Benchmarking is understood as:

- Finding good business practices

- Continuous or systematic process for measuring self and competitive performance

- Studying the management best practices of competitors or partners

- Borrowing efficient production or management technologies

- An ongoing process of qualitatively comparing the performance of an individual company with industry leaders

- Marketing Intelligence

- Competitive analysis

- The process of increasing the competitiveness of the enterprise.

Taken together, these different interpretations help clarify the essence of the phenomenon. The most complete definition is the following:

Benchmarking is a systematic activity (a continuous process) in which an economic entity searches for a reference enterprise in order to study its positive experience. Based on a comparative analysis, the practices of that enterprise are adopted to increase the competitiveness of the enterprise conducting the study.

If the enterprise does not have a specialist who performs benchmarking on a permanent and continuous basis, this function is performed by a group of specialists that is assembled or invited periodically. It is more cost-effective and convenient to bring in specialized firms, both to carry out the analytical work itself and to train your own specialists.

Goals and objectives of benchmarking

Benchmarking as a process has one goal: to improve the situation in any area of activity.

To achieve this goal, the economic entity solves the following tasks:

- Research own indicators in the problem area

- Find leaders, that is, the best of the best on the subject under study in the industry

- Access required information

- Compare own indicators with those of the leaders

- Implement the experience gained from the leaders in its own operations.

Determining and applying the best method for further development is the essence, purpose and objectives of benchmarking.

Types of benchmarking

Benchmarking has many definitions for a reason: its varied focus makes it versatile. In practice, however, five types of benchmarking have emerged:

- General

- Functional

- Internal

- Competitive

- Process Benchmarking.

General Benchmarking

General benchmarking is a quantitative and qualitative comparison of your enterprise’s production, sales, and services with the similar activities of companies that have achieved strong results. The comparison should yield a direction for development and the adoption of advanced technologies. Sometimes it also helps to intensify investment activity. This is considered the most difficult type, because the necessary information is hard to obtain.

Functional benchmarking

Functional benchmarking is a quantitative and qualitative comparison of the parameters of individual enterprise functions (processes, operations, methods) with the corresponding parameters of more successful peers working under identical conditions. It has several advantages over the previous type: leaders are easy to identify, significant indicators are accessible, and there is broad scope for discovering useful approaches and technologies. It also has one drawback: sometimes the results of the study cannot be applied, implemented, or adapted.

Internal benchmarking

Internal benchmarking is the comparative analysis of a company’s various departments and divisions in order to identify the best performance and the most efficient business processes within a single organization. This type is very common because it is simple to carry out and the required information is readily available.

Competitive benchmarking

Competitive benchmarking is carried out on the basis of a database of competitors in their industry or partner companies from another industry.

Process Benchmarking

Process benchmarking is scoped to a specific issue. If it is aimed at reducing costs, the cost-forming factors are determined, and the differences between the leading company’s cost structure and one’s own are identified. As a result, one specific, narrowly defined problem is solved.

Benchmarking steps and practical aspects

The benchmarking methodology consists of a series of incremental steps. The process of identifying, understanding and adapting the effective functioning of leading companies in order to improve the quality of their own work is conditionally divided into eight stages. Let’s briefly characterize each of them.

Stage 1. Identify functions and business processes that need improvement

To identify your own shortcomings, it is enough to look at yourself from the outside; everyone has known this simple truth since childhood. The same approach applies when looking for the functions and business processes in the organization that need improvement. Methods of competitive intelligence are appropriate here. Benchmarking managers often overlook information that «lies under their feet» and plan large-scale research instead.

At this stage, it is important to prioritize functions. First, identify the processes that play a major role in forming production costs. Then identify the functions with the greatest potential for adjustment. You should also systematize the incentives that motivate positively. Finally, determine the indicator that contributes most to success.

Stage 2. Determining the indicators to be analyzed

In the second stage, the business plan should be examined to identify a set of quantitative and qualitative variables.

These include:

- labor indicators (overheads, wages)

- distinguishing factors (product quality and variety)

- data on the market share occupied by the company

- financial strategy and its results.

Stage 3. Identification of the best economic actors inside and outside the industry under study

At the third stage, leaders in the industry of interest and outside it are identified. The choice should not be limited to obvious, direct competitors. Often the achievements of parallel competitors that do not put pressure on the enterprise under study are useful. On the other hand, parallel competitors always have the potential to become direct in the long run.

It is interesting to study companies from other business areas. Undoubtedly, information about world experience will be invaluable.

Ideally, the list of leading companies should contain no more than a dozen entries, or even fewer. But it should be assembled creatively: it is better to select successful enterprises on the principle of diversity.

Stage 4. Evaluation of the collected indicators in the selected direction

At the stage of collecting and evaluating information about the processes of interest, various sources are used. In your own company, this is not a problem, but to study an outside company, you need to show ingenuity, especially for obtaining hard-to-find data.

Various (formal and informal) surveys (of employees, suppliers, wholesalers, retail customers, firm applicants, industry consultants) can help here. Invaluable assistance is provided by internships of employees in partner companies, attendance at conferences, specialized exhibitions. At this stage, it is important to observe moderation.

Stage 5. Preparation of measurement information

The collected information should be systematized and brought to a comparable form.

Stage 6. Comparison and analysis of the leaders’ indicators and your own

Data collected from outside should be compared with the company’s own. The differences revealed show which points need closer study.
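Stages 5 and 6 can be sketched in a few lines of code. The example below is only an illustration under stated assumptions: every indicator is a single number, each indicator is marked as "higher is better" or "lower is better", and the gap to the best peer is expressed in percent so that different indicators become comparable. The company names, indicator names, and values are hypothetical, as are the function names.

```python
from typing import Dict, List, Tuple


def gap_to_leader(own: float, peers: List[float], higher_is_better: bool = True) -> float:
    """Gap between our value and the best peer value, in percent of the best.

    A positive result means we lag behind the leader on this indicator.
    """
    if not peers:
        raise ValueError("need at least one peer value")
    best = max(peers) if higher_is_better else min(peers)
    if best == 0:
        raise ValueError("leader value must be non-zero")
    diff = (best - own) if higher_is_better else (own - best)
    return diff * 100.0 / abs(best)


def rank_gaps(
    own: Dict[str, float],
    peers: Dict[str, Dict[str, float]],
    higher_is_better: Dict[str, bool],
) -> List[Tuple[str, float]]:
    """Return (indicator, gap in percent) pairs, largest gap first."""
    gaps = []
    for name, better_high in higher_is_better.items():
        peer_values = [company[name] for company in peers.values()]
        gaps.append((name, gap_to_leader(own[name], peer_values, better_high)))
    return sorted(gaps, key=lambda item: item[1], reverse=True)


# Hypothetical data, for illustration only.
own = {"unit_cost": 12.0, "market_share": 9.0}
peers = {
    "Leader A": {"unit_cost": 10.0, "market_share": 10.0},
    "Leader B": {"unit_cost": 11.0, "market_share": 9.5},
}
direction = {"unit_cost": False, "market_share": True}

for name, gap in rank_gaps(own, peers, direction):
    print(f"{name}: {gap:.1f}% behind the best peer")
```

Sorting by gap size puts the indicators with the largest lag first, which is one simple way to decide what to study more closely at Stage 7.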

Stage 7. Work on the mistakes based on the conducted research

Now the task is to eliminate the mistakes in our own work. To improve the quality of work, it is necessary to identify weaknesses (logistical problems, high costs, low level of the implementation system). Then you can copy the style and method of work of the leader, but here it is important to remain yourself.

Stage 8. Implementation, development and summing up

Most companies, based on the results of benchmarking, use the Twenty Keys revolutionary transformation program developed by Japanese professor Iwao Kobayashi.

Implementation of the «Twenty Keys» system

Based on the results of benchmarking, it is necessary to systematize the tasks of enterprise management. The figure shows twenty keys to business excellence. In real life, the program can be implemented at an enterprise of any industry, size and form of ownership. It is aimed at improving the quality of production in general. At your enterprise, you can develop your own modification, taking this as a basis.

- Cleanliness and order

- Management system rationalization

- Small group activities

- Reduction of inventories

- Quick changeover technology

- Cost analysis of manufacturing operations

- Manufacture without constant supervision

- Consolidated production

- Maintenance of machinery and equipment

- Accounting and distribution of working time

- Quality system

- Helping suppliers improve the quality of their products

- Elimination of losses

- Empowering workers to make improvements

- Teaching related professions

- Production planning

- Labor productivity management

- Use of information systems

- Saving energy and materials

- General production technology

Benchmarking should not be understood as blind imitation. Its main task is to study and adapt the experience of the best. One recommendation to the reader: not all the information received will seem usable at first glance. Some things need to be rethought.

EVGA GTX 1050 Ti SC Video Card Review

At that very moment in time and space when I had to set 60% resolution to play Survarium comfortably, I knew that I would buy a new video card and would do so soon. But what I didn’t expect was that Palit’s replacement for my GTX 650 2 GB would be none other than the EVGA GTX 1050 Ti SC 4GB.

1. Would you like to read? See:

2. Introduction about EVGA GTX 1050 Ti SC 4GB

2.1. Appearance

2.2. Parameters

2.3. Comparison with other models

2.4. Test bench

2.5. Synthetic tests

2.6. Gaming benchmarks

2.7. Tests in games

2.8. Multiplayer projects

2.9. Problem projects

2.10. Results for EVGA GTX 1050 Ti SC 4GB

Don’t want to read? Look:

I made my choice by chance: on Amazon, where I had to make the purchase, this card cost 115 (!) US dollars and was the most powerful one I could buy with limited finances, and limited they were. And yes, I know that the budget sector is now almost dominated by AMD, and that the RX 460 performs roughly on par with the GTX 1050 while costing less.

The trouble lies in two points. First, for the price of a 1050, I actually got a 1050 Ti with 4 GB of video memory. The second is ShadowPlay, aka GeForce Experience. I have not found, and will hardly find, a better tool for recording video than this software. And the last time I picked up a Radeon, AMD’s analogue of the program, ReLive, worked as if it had a boiled potato instead of a processor. No way. I couldn’t take risks, because my work was tied to screen capture and my processor was too weak, so there wasn’t much choice.

I have to say a separate word about the delivery, because the people who handled it made an impression on me comparable only to Sergey Redkov from Drimsim: maximum convenience, a truly individual approach (and my payment was very specific), and pleasant speed. There was also an Amazon Prime account, thanks to which the purchase and delivery from the USA cost $140 in total. This is not an advertisement, since I don’t plan to buy more on Amazon (for now, anyway), so take it as a sincere recommendation.

In general, the 1050 Ti paired with the Pentium G4560 is currently the most recommended budget gaming build on YouTube. The retail price of versions from different manufacturers hovers around $170 in the CIS, which is a bit much, mainly because it comes close to the GTX 1060 with 3 GB of video memory, which has a better chip, a wider bus, and more CUDA cores.

The video card is perfectly packed, the box itself arrived in a bigger box with a bunch of internal … elements that I would call only synthetic swim bladders. But they coped with their task, and the box in the box reached me safe and sound.

Appearance

Externally, the EVGA GTX 1050 Ti SC looks surprisingly modest. It is short (153 mm), smaller than the GTX 650; a 90 mm fan takes up most of the shroud in the center, and the light hints of an aggressive design are lost in the shadows. Under the shroud, besides the fan, there is an aluminum heatsink, and this combination, as it turned out later, is quite enough.

Size comparison. Palit GTX 650 2 GB bottom.

Inside the box, in addition to the video card, there is a driver on a mini-DVD (which, given that I have no drive, is extremely relevant, of course), as well as a manual and several nice badges, including a metal EVGA enthusiast one. This is my second most important award, right after my medal for shooting from a slingshot into the damp ground. I then hit many, many times … Oh, there was a time.

The manufacturer designed the SC version of the video card as a single-fan model with 4 GB of memory. The dual-fan versions, including the SSC options, have 2 GB of video memory. At the same time, unlike the GTX 650 and GTX 1060, the EVGA GTX 1050 Ti is powered exclusively through the PCI Express slot and does not need an additional 6-pin power connector. By the way, this applies to all video cards of this model (thanks to Pyatkovsky for the data), and the versions with a power connector are rather a placebo. Another interesting feature of my 1050 Ti is the lack of an idle fan-stop mode: dual-fan models stop their fans at minimum load, while mine keeps spinning. It copes very well, though, even at a minimum speed of 200 rpm; in fact, even the CPU cooler turned out to be louder.

Parameters

The specifications of the EVGA GTX 1050 Ti SC are as follows. The graphics card has 768 CUDA cores and runs at a base frequency of 1354 MHz and 1468 MHz in Boost mode. It carries 4 GB of GDDR5 video memory operating at an effective frequency of 7008 MHz on a 128-bit memory bus, with a memory speed of 0.28 nanoseconds and a bandwidth of 112.16 GB/s. It is installed in a PCI Express 3.0 slot.
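The bandwidth figure follows from the effective memory frequency and the bus width. Here is a minimal sketch of that arithmetic; the function name is my own, and the small difference from the quoted 112.16 is presumably rounding in the spec sheet.

```python
def memory_bandwidth_gb_s(effective_mhz, bus_width_bits):
    """Peak bandwidth in GB/s: transfers per second times bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9


# EVGA GTX 1050 Ti SC: 7008 MHz effective, 128-bit bus.
print(round(memory_bandwidth_gb_s(7008, 128), 2))

# A card with 5000 MHz effective memory on the same 128-bit bus.
print(round(memory_bandwidth_gb_s(5000, 128), 2))
```

The same formula shows why bus width matters as much as memory clock: widening the bus scales the result linearly.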

It supports a wide range of technologies, including NVIDIA GameStream, G-Sync, Ansel, and GPU Boost 3.0, as well as OpenGL 4.5, DirectX 12, and Vulkan. The maximum resolution is 7680×4320, and the video outputs are HDMI 2.0b, DisplayPort 1.4 and, fortunately for all mankind, DVI-D Dual Link, which I consider the best connector of the lot.

The ACX 2.0 Single Fan system is responsible for cooling, and it is enough to keep the video card within the bounds of temperature decency even at above-stock frequencies. The EVGA GTX 1050 Ti SC draws up to 75 watts and requires at least a 300-watt power supply. The figures are modest given what the device can do in battle, and I remind you that it doesn’t even need an additional power connector!

Comparison with other models

For comparison, the GTX 650 has a 128-bit bus, a memory frequency of 5000 MHz, and a bandwidth of 80 GB/s. It requires an additional 6-pin power connector, eats up to 82 watts, and needs a PSU of at least 400 watts. I will not test it in the games that I lined up for the 1050 Ti: NVIDIA itself says that it is three times weaker than the regular version of the new card (meaning the GTX 1050), so feel free to divide the FPS in the 1050 Ti tests by four to get its approximate performance. At the same time, the video card costs about $70, that is, about twice as much as the frame-rate ratio would justify. This, if anything, is a compliment to the 1050 Ti, not a criticism of the 650.

As for the GTX 1060, the reference model has 1152 CUDA cores, a base frequency of 1506 MHz and 1708 MHz in Boost, 192 GB/s of memory bandwidth, a 192-bit bus, and 120 watts of power consumption. With all its advantages, the video card costs on the same Amazon, although not in the reference version, an average of $200, or even $180 if you catch Amazon Prime by the tail. That is $60 more, excluding shipping and customs clearance.

Test bench

Since I was lucky enough to upgrade my hardware, the current test PC looks like this:

- processor Intel Pentium G4560 (Kaby Lake generation)

- cooler Titan Dragonfly 4

- motherboard MSI B250M PRO-VDH

- RAM: one 8 GB GeIL DDR4-2400 module

- SSD Goodram CX300 256GB

- hard drive WDC WD10EZEX-00BN5A0 1TB

- case Aerocool Aero-500

- power supply be quiet! Pure Power 10

- additional system cooler Titan Kukri 120×30 mm

The combination of a G4560 and a 1050/1050 Ti has long been considered the best budget option for games, and crypto miners choose it for its L3 cache. The processor feeds the video card quite adequately, but it is worth remembering that despite its power (it is practically a Core i3, with two cores and four threads) it is held back by its cache.

In most of the other games we tested, the video card strained.

Synthetic tests

Now let’s go through 3DMark. In the Time Spy test on DirectX 12, the video card earned 2258 performance points, showing 14.95 FPS in the first test and 12.77 FPS in the second. In the Fire Strike test, the video card scored 7247 points, 33.40 FPS in the first test and 29.82 FPS in the second.

AIDA64 Extreme showed interesting results in the GPGPU test:

- memory read 12057 MB/s

- memory write 11678 MB/s

- memory copy 91678 MB/s

- single-precision performance 2602 GFLOPS

- double-precision performance 83.01 GFLOPS
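The two GFLOPS figures can be sanity-checked against the card's specifications. The sketch below assumes the usual peak estimate of two floating-point operations (one fused multiply-add) per CUDA core per clock; the function names are my own, and the core count and boost clock are the ones quoted in this review.

```python
def peak_fp32_gflops(cuda_cores, clock_mhz):
    """Theoretical single-precision peak: 2 FLOPs (one FMA) per core per clock."""
    return 2 * cuda_cores * clock_mhz / 1000


def implied_clock_mhz(measured_gflops, cuda_cores):
    """Clock the GPU must have sustained to reach a measured FP32 result."""
    return measured_gflops * 1000 / (2 * cuda_cores)


# Peak at the rated 1468 MHz Boost clock with 768 CUDA cores.
print(peak_fp32_gflops(768, 1468))

# The measured 2602 GFLOPS is higher, so the card ran above its rated Boost.
print(implied_clock_mhz(2602, 768))

# Ratio of the single- to double-precision results from the list above.
print(2602 / 83.01)
```

The implied clock lands near 1.7 GHz, which fits the frequencies seen under load later in the review, and the ratio of the two results comes out a little under 32, close to the 1:32 double-precision rate of consumer Pascal chips.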

They are interesting in contrast to the Sapphire RX580 Nitro + 4GB, which I tested earlier, and which will be reviewed in a few days, along with a little comparison.

In the AIDA stress test, the graphics card warmed up to 58 degrees Celsius after 5 minutes; at idle the temperature did not exceed 40 degrees Celsius. The video card turned out to be very quiet: only under maximum load does it start to make noticeable noise, and in all other cases it is quieter than a mouse.

The Heaven benchmark at Ultra quality and Extreme tessellation gave 872 points and an average FPS of 34.6. The minimum was 7.5 FPS, the maximum was 75.1. During the benchmark, the video card also heated up to 65 degrees, and its frequency jumped to 1974 MHz. In the AIDA64 stress test, the frequency rose to 1771 MHz — and given that I am far from an adept in overclocking sciences, we will consider such a sharp jump as the frequency ceiling.

Upd. A second check with FPS Monitor turned on made it perfectly clear that the card’s frequency does not exceed 1750 MHz. The number above comes from somewhere else and is not the frequency of the chip itself.

There was a small oddity with the power consumption measurement: without the EVGA GTX 1050 Ti SC 4GB, the system consumed 57 watts, and with the video card … 55 watts. As for the maximum load, in the stress test the system with the video card drew 96 watts, from which it follows that at minimum load the heroine of the review draws almost no power at all.
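A quick way to read these numbers is to look at the difference between load and idle. The sketch below assumes the readings are whole-system figures, so the delta also contains whatever extra the rest of the system draws during the stress test; the variable names are my own, and 75 W is the most a PCI Express x16 slot supplies to a card without an extra connector.

```python
PCIE_SLOT_LIMIT_W = 75  # maximum a PCIe x16 slot supplies to a graphics card

idle_without_card_w = 57
idle_with_card_w = 55
load_with_card_w = 96

# Idle readings differ by 2 W in the "wrong" direction: measurement noise.
idle_difference_w = idle_with_card_w - idle_without_card_w

# Extra power drawn under the stress test, relative to idle with the card.
load_delta_w = load_with_card_w - idle_with_card_w

print(idle_difference_w, load_delta_w, load_delta_w <= PCIE_SLOT_LIMIT_W)
```

Even taken as a rough estimate, the load delta stays well inside the slot budget, which is consistent with the card needing no 6-pin connector.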

Game Benchmarks

An important point: these are the standardized benchmarks, which sometimes run even outside the game itself, as was the case with Metro Last Light. Running the video card in the games themselves comes later.

With the settings turned to maximum, including NVIDIA GameWorks and PhysX, the game Batman: Arkham Knight took 3982 MB of video memory out of 4050 available. Benchmarks showed 22 FPS on average and 11 FPS at a minimum, but during the test it turned out that the video card was almost idling while the G4560 worked at full speed. The EVGA GTX 1050 Ti SC 4GB frequency rose to 1759 MHz, and the temperature stayed at 57 degrees.

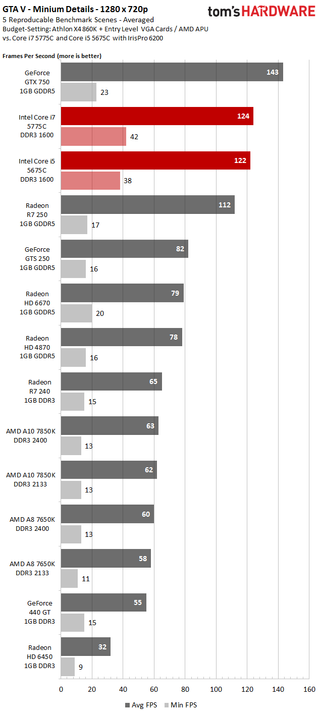

A similar situation happened with GTA V. It is sad that most gaming benchmarks lean on the processor more than on the video card. In the end, at very high graphics settings with anti-aliasing and other eye candy, the benchmark showed an average of 35 FPS, rarely dropping to 25 and often rising to 40-50. At the same time, stutters and texture pop-in were often noticeable. The occupied video memory was 2700 MB out of 4050 available, but it is worth remembering that the processor holds back the video card, so there was no point in raising the settings.

Benchmarking Metro Last Light with the settings turned up to the ceiling started out fun, with visual glitches, as if lightning were illuminating the map every half second. This went away after a couple of mouse clicks, and after three runs of the standard scene the computer showed an average of 25 FPS, a maximum of 55 FPS, and a minimum of 0.97 FPS. As we will see in the game itself, this is still a good result. Paradoxically, neither the processor nor the video card was loaded above 60%, and it is not clear why.

Tests in games

Let’s start with the same Metro Last Light. In the first mission at the maximum available settings, we get 22 FPS on average; after Artyom wakes up, the frame rate drops to a very stable and very low 11 FPS, while the load on both the processor and the video card does not exceed 60%. Why, I don’t know, I’ll say that right away. On medium settings, though, you can already play and safely get 60 FPS.

GTA V in the game itself was bottlenecked by the processor, because of which I constantly observed stutters and loading pauses when moving quickly around the city. The processor worked at 100%, but I can’t say that the card was idling at the same time: it was loaded at 90% almost constantly and often worked at 100%.

Batman: Arkham Knight at the same maximum settings tried with all its might to stay at 30 FPS, and on foot it almost succeeded: the frame rate dropped to 20 very rarely. In the car, however, it went down much more often, and the minimum was 13 FPS. But here the video card is not to blame: during the drops it sat at 20% load, so I shift all the blame to the processor.

Another heavyweight open world, Far Cry 4, showed me the difference between the minimum and maximum settings. With the graphics turned to the maximum in all respects, I got from 10 to 40 FPS, 25 on average. It was worst when there were a lot of people on the screen, where the processor was straining, and with a lot of burning grass.

But turning everything down to the minimum did not really help: the FPS gain was about 15, and I would not call it stable anyway. If I understand correctly, at the minimum settings the CPU processes some additional effects, which loads it even more, while at the maximum and medium settings the video card takes on some of the work. In total: barely playable, but because of the processor, not the video card. Let me tell you a secret: on my old PC with a Core i3-4130, at medium settings with the same EVGA GTX 1050 Ti SC 4GB, I got a stable 50 FPS.

Next is DOOM. The first test was done on Vulkan on ultra settings wherever possible. Nightmare settings require 5 GB or more of video memory, they are not available to us. Testing was carried out in three stages: the first was the initial map with all weapons and infinite ammo. The frame rate in this case was at 50 FPS, occasionally rising to 60 and dropping to 40.

Next up is the Foundry test with a bunch of eye candy. During shooting and special effects, the frame rate occasionally dropped to 33 FPS, staying at around 50 almost constantly. The video card was loaded to the limit, and the CPU was only slightly strained. The third stage was a test with bots in multiplayer: the same 50 FPS with rare drops to 30. And these, I remind you, are ultra settings on fairly budget hardware. My compliments, id Software!

The second DOOM test was on OpenGL 4.5, and the frame rate dropped by an average of 10 FPS in each test. Stutters also became noticeable, and the processor began to take over almost all the work. In multiplayer, it was also not very comfortable after the stability of Vulkan.

Multiplayer projects

Counter-Strike: Global Offensive held 60 FPS on the current build at maximum settings in all situations, no matter how much smoke and fire I threw and no matter how many rounds I fired at my comrade’s head.

DotA 2 at maximum graphics settings in cheat mode sank to 55 FPS only with a huge number of special effects on the screen and nine Axes trying to cut down the immortal hero.

World of Tanks/World of Warplanes held 60 FPS on maximum settings and with HD clients. There were stutters, but they were rare and barely noticeable.

War Thunder held a rock-solid 60 FPS in aircraft battles at the same maximum settings, but in tank battles the counter stayed at 50 FPS and sometimes dropped to 30 FPS when there was a lot of smoke on the screen and physics in action.
