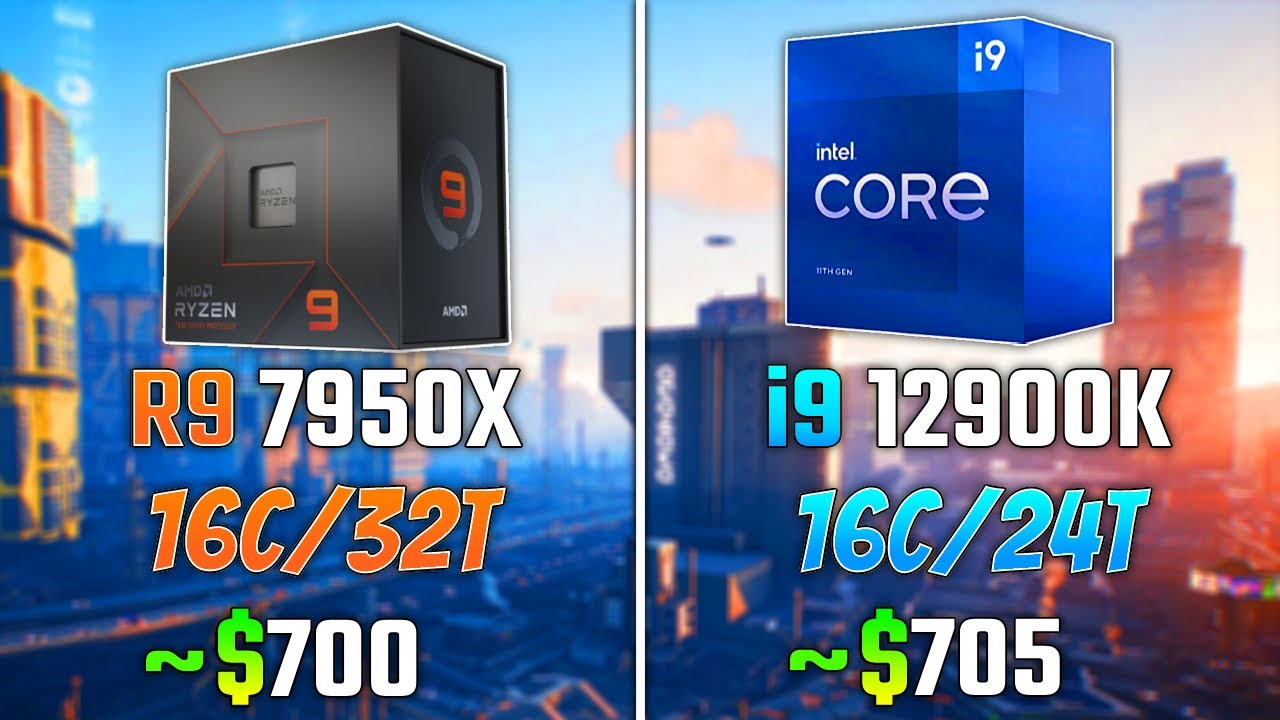

Radeon R9 370 [in 2 benchmarks]

Radeon R9 370


- Interface PCIe 3.0 x16

- Core clock speed 925 MHz

- Max video memory 4096 MB

- Memory type GDDR5

- Memory clock speed 5600 MHz

- Maximum resolution

Summary

AMD started Radeon R9 370 sales on 5 May 2015. This is a GCN 1.0 architecture desktop card based on a 28 nm manufacturing process and primarily aimed at gamers. It carries 4 GB of GDDR5 memory clocked at 5.6 GHz, which together with a 256-bit memory interface gives a bandwidth of 179.2 GB/s.
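The bandwidth figure follows directly from the bus width and the effective memory clock. A minimal sketch of that arithmetic is below; the function name `memory_bandwidth_gbs` is hypothetical and only illustrates the calculation.

```python
def memory_bandwidth_gbs(bus_width_bits: int, effective_clock_mhz: float) -> float:
    """Peak memory bandwidth in GB/s: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8           # 256 bits -> 32 bytes
    transfers_per_second = effective_clock_mhz * 1e6  # 5600 MHz -> 5.6e9 transfers/s
    return bytes_per_transfer * transfers_per_second / 1e9


# Figures quoted in the summary above: 256-bit bus, 5600 MHz effective clock.
r9_370_bandwidth = memory_bandwidth_gbs(bus_width_bits=256, effective_clock_mhz=5600)
print(f"{r9_370_bandwidth:.1f} GB/s")
```

With the listed values this reproduces the 179.2 GB/s quoted for the card.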

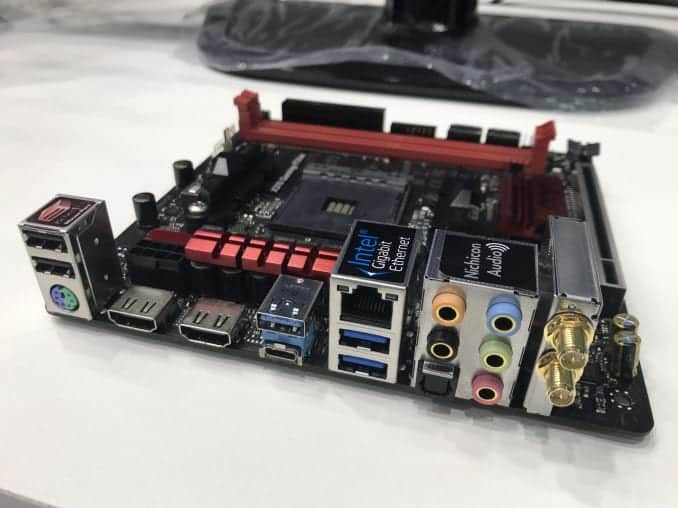

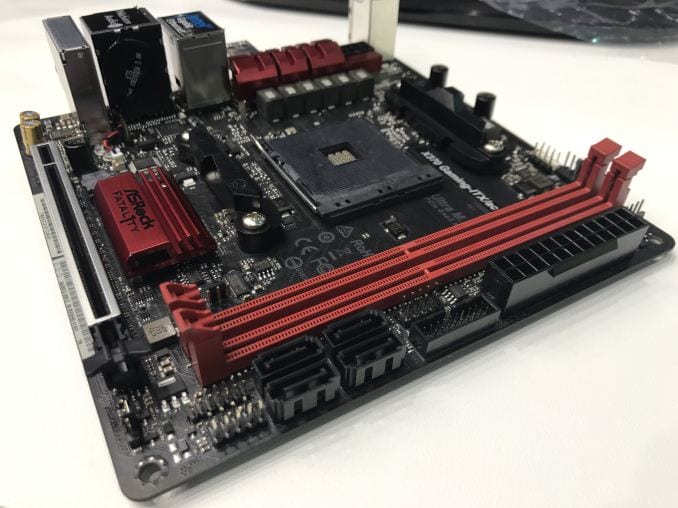

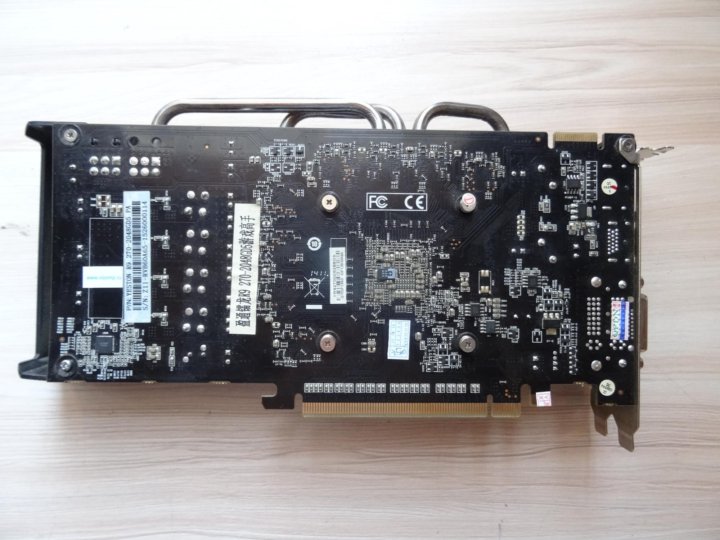

Compatibility-wise, this is a dual-slot card attached via a PCIe 3.0 x16 interface. The manufacturer’s default version has a length of 221 mm. One 6-pin power connector is required, and power consumption is 110 Watt.
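As a rough sanity check on the power figures, the sketch below compares the 110 Watt rating with the power a PCIe x16 slot plus one 6-pin connector can deliver. The 75 W slot, 75 W 6-pin and 150 W 8-pin limits are the commonly cited PCIe values and are not taken from this page; the helper name `power_budget_w` is hypothetical.

```python
# Commonly cited PCIe power limits (assumed here, not stated on this page).
SLOT_POWER_W = 75
CONNECTOR_POWER_W = {"6-pin": 75, "8-pin": 150}


def power_budget_w(connectors: list[str]) -> int:
    """Total power available from the slot plus the listed supplementary connectors."""
    return SLOT_POWER_W + sum(CONNECTOR_POWER_W[name] for name in connectors)


budget = power_budget_w(["6-pin"])  # slot + 1x 6-pin, as listed for the R9 370
headroom = budget - 110             # 110 Watt is the card's listed power consumption
print(f"budget {budget} W, headroom {headroom} W")
```

Under these assumptions the single 6-pin connector leaves some margin above the card's rated consumption.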

It provides poor gaming and benchmark performance at 16.08% of the leader’s, which is the NVIDIA GeForce RTX 3090 Ti.


General info

Of Radeon R9 370’s architecture, market segment and release date.

| Place in performance rating | 286 | |

| Value for money | 4.04 | |

| Architecture | GCN 1.0 (2012−2020) | |

| GPU code name | Trinidad | |

| Market segment | Desktop | |

| Release date | 5 May 2015 (7 years ago) | |

| Current price | $337 | of 49999 (A100 SXM4) |

Value for money

To get the index we compare the characteristics of video cards and their relative prices.


Technical specs

Radeon R9 370’s general performance parameters such as number of shaders, GPU base clock, manufacturing process, texturing and calculation speed. These parameters indirectly speak of Radeon R9 370’s performance, but for precise assessment you have to consider its benchmark and gaming test results.

| Pipelines / CUDA cores | 1280 | of 18432 (AD102) |

| Core clock speed | 925 MHz | of 2610 (Radeon RX 6500 XT) |

| Boost clock speed | 975 MHz | of 2903 (Radeon Pro W6600) |

| Number of transistors | 2,800 million | of 14400 (GeForce GTX 1080 SLI Mobile) |

| Manufacturing process technology | 28 nm | of 4 (h200 PCIe) |

| Thermal design power (TDP) | 110 Watt | of 900 (Tesla S2050) |

| Texture fill rate | 78.00 | of 939.8 (h200 SXM5) |

| Floating-point performance | 2,496 gflops | of 16384 (Radeon Pro Duo) |
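The floating-point figure in the table can be reproduced from the shader count and the boost clock. The sketch below assumes each shader retires one fused multiply-add (two floating-point operations) per clock, which is the usual convention for such ratings but is not stated on this page; `peak_gflops` is a hypothetical helper name.

```python
def peak_gflops(shaders: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical single-precision throughput in GFLOPS.

    flops_per_clock=2 assumes one fused multiply-add per shader per cycle.
    """
    return shaders * flops_per_clock * clock_mhz / 1000


at_boost = peak_gflops(shaders=1280, clock_mhz=975)  # boost clock from the table
at_base = peak_gflops(shaders=1280, clock_mhz=925)   # base clock from the table
print(f"boost: {at_boost:.0f} GFLOPS, base: {at_base:.0f} GFLOPS")
```

The boost-clock result matches the 2,496 gflops listed above, which suggests the rating is quoted at boost rather than base clock.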

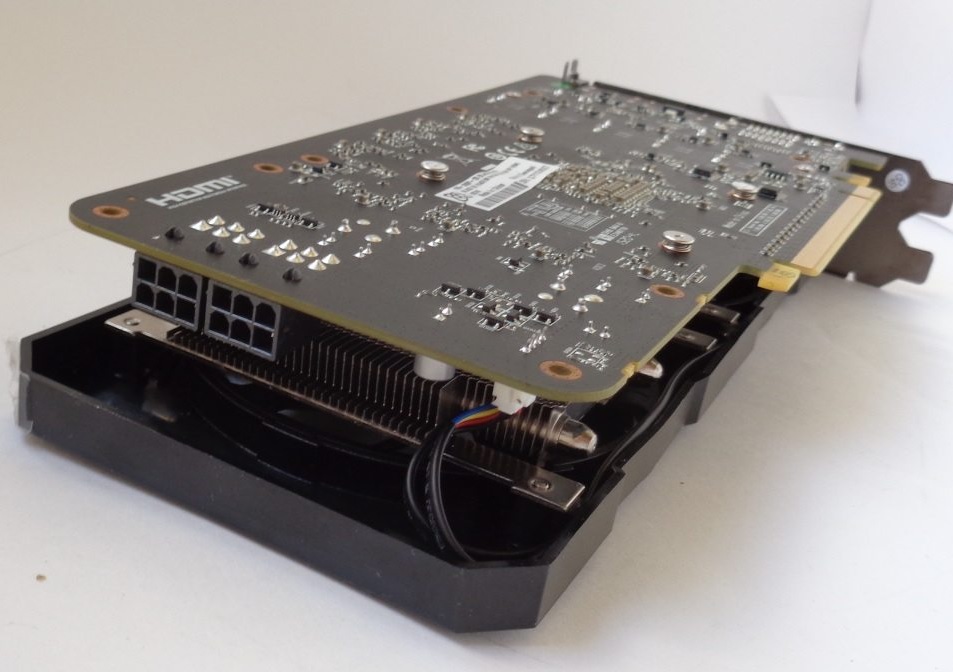

Compatibility, dimensions and requirements

Information on Radeon R9 370’s compatibility with other computer components. Useful when choosing a future computer configuration or upgrading an existing one. For desktop video cards it’s interface and bus (motherboard compatibility), additional power connectors (power supply compatibility).

| Interface | PCIe 3.0 x16 | |

| Length | 221 mm | |

| Width | 2-slot | |

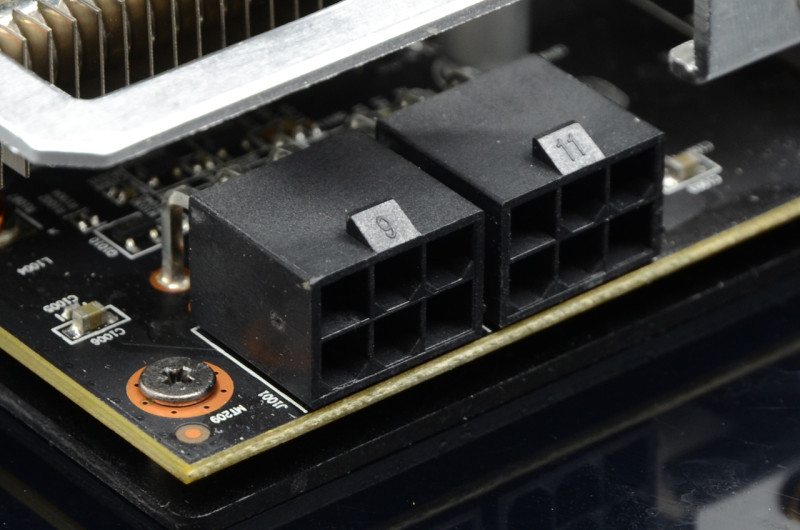

| Supplementary power connectors | 1x 6-pin |

Memory

Parameters of memory installed on Radeon R9 370: its type, size, bus, clock and resulting bandwidth. Note that GPUs integrated into processors don’t have dedicated memory and use a shared part of system RAM.

| Memory type | GDDR5 | |

| Maximum RAM amount | 4 GB | of 128 (Radeon Instinct MI250X) |

| Memory bus width | 256 Bit | of 8192 (Radeon Instinct MI250X) |

| Memory clock speed | 5600 MHz | of 21000 (GeForce RTX 3090 Ti) |

| Memory bandwidth | 179.2 GB/s | of 14400 (Radeon R7 M260) |

Video outputs and ports

Types and number of video connectors present on Radeon R9 370. As a rule, this section is relevant only for desktop reference video cards, since for notebook ones the availability of certain video outputs depends on the laptop model.

| Display Connectors | 2x DVI, 1x HDMI, 1x DisplayPort | |

| HDMI | + |

API support

APIs supported by Radeon R9 370, sometimes including their particular versions.

| DirectX | 12 (11_1) | |

| Shader Model | 5.1 | |

| OpenGL | 4.6 | |

| OpenCL | 1.2 | |

| Vulkan | 1.2.131 |

Benchmark performance

Non-gaming benchmark performance of Radeon R9 370. Note that overall benchmark performance is measured in points in 0-100 range.

Overall score

This is our combined benchmark performance rating. We are regularly improving our combining algorithms, but if you find some perceived inconsistencies, feel free to speak up in comments section, we usually fix problems quickly.

R9 370

16.08

- Passmark

- 3DMark Fire Strike Graphics

Passmark

This is probably the most ubiquitous benchmark, part of the Passmark PerformanceTest suite. It gives the graphics card a thorough evaluation under various loads, providing four separate benchmarks for Direct3D versions 9, 10, 11 and 12 (the last being done in 4K resolution if possible), and a few more tests engaging DirectCompute capabilities.

Benchmark coverage: 26%

R9 370

4722

3DMark Fire Strike Graphics

Fire Strike is a DirectX 11 benchmark for gaming PCs. It features two separate tests displaying a fight between a humanoid and a fiery creature seemingly made of lava. Using 1920×1080 resolution, Fire Strike shows off some realistic enough graphics and is quite taxing on hardware.

Benchmark coverage: 14%

R9 370

5249

Mining hashrates

Cryptocurrency mining performance of Radeon R9 370. Usually measured in megahashes per second.

| Bitcoin / BTC (SHA256) | 336 Mh/s |

Game benchmarks

Let’s see how good Radeon R9 370 is for gaming. Particular gaming benchmark results are measured in frames per second. Comparisons with game system requirements are included, but remember that sometimes official requirements may reflect reality inaccurately.


Average FPS

Here are the average frames per second in a large set of popular modern games across different resolutions:

| Full HD | 45 |

Popular games

Relative performance

Overall Radeon R9 370 performance compared to nearest competitors among desktop video cards.

NVIDIA GeForce GTX 760

101.12

AMD Radeon HD 7950

100.93

AMD Radeon Sky 500

100.31

AMD Radeon R9 370

100

NVIDIA GeForce GTX 1630

98.88

AMD Radeon HD 7870

98.82

NVIDIA GeForce GTX 580

95.71

Conclusion

Radeon R9 370 is a middle-level video card based on an outdated GCN 1.0 core and released only in OEM variant, so it never was actually sold directly to end users.


NVIDIA equivalent

We believe that the nearest equivalent to Radeon R9 370 from NVIDIA is GeForce GTX 1630, which is slower by 1% and lower by 4 positions in our rating.

GeForce GTX

1630

Compare

Here are some closest NVIDIA rivals to Radeon R9 370:

NVIDIA GeForce GTX 670

112

NVIDIA GeForce GTX 1050

107.4

NVIDIA GeForce GTX 760

101.12

AMD Radeon R9 370

100

NVIDIA GeForce GTX 1630

98.88

NVIDIA GeForce GTX 580

95.71

NVIDIA P104-100

94.22

Similar GPUs

Here is our recommendation of several graphics cards that are more or less close in performance to the one reviewed.

Radeon Sky

500

Compare

Radeon HD

7870 XT

Compare

P104

100

Compare

Radeon R9

270

Compare

GeForce GTX

670

Compare

GeForce GTX

760 Ti OEM

Compare

Recommended processors

These processors are most commonly used with Radeon R9 370 according to our statistics.

Xeon E5

2650 v2

3.4%

Core i3

10100F

3.2%

FX

6300

2.6%

Xeon E5

2420

2.1%

Core i5

3470

2%

Xeon E5

2689

1.8%

Ryzen 3

1200

1.6%

Xeon E5

2620 v3

1.6%

Core i5

4460

1.5%

Core i5

10400F

1.5%


GPU Compare | Graphics Card Comparison

The Radeon R9 370 will run 89% of the top 10,000 PC games. It will also run 63% of these games at the recommended or best experience levels.

- Manufacturer: AMD
- Generation: 9 generations old
- Category: High Performance
- Dedicated RAM: 2.0 GB
- DirectX: 12
- Rank: 85th percentile of AMD GPUs
- Rank in Power: 74th of AMD GPUs
- Rank in Popularity: 202nd of AMD GPUs

Can the Radeon R9 370 run the Top PC games? You can see a GPU comparison by choosing another video card. How many games can your GPU run?

| Rank | Game | Radeon R9 370 | Radeon RX 6950 XT |
|---|---|---|---|
| 1 | Cyberpunk 2077 | | |
| 2 | Grand Theft Auto V | | |
| 3 | VALORANT | | |
| 4 | Call of Duty: Warzone | | |
| 5 | Elden Ring | | |
| 6 | Marvel’s Spider-Man Remastered | | |
| 7 | Red Dead Redemption 2 | | |
| 8 | Call of Duty: Modern Warfare II | | |
| 9 | God of War | | |
| 10 | Fortnite | | |
| 11 | FIFA 23 | | |
| 12 | Minecraft | | |
| 13 | The Witcher 3: Wild Hunt | | |
| 14 | Apex Legends | | |
| 15 | Forza Horizon 5 | | |
| 16 | Genshin Impact | | |
| 17 | League of Legends | | |
| 18 | Assassin’s Creed Valhalla | | |
| 19 | Counter-Strike: Global Offensive | | |
| 20 | The Sims 4 | | |
| 21 | FIFA 22 | | |
| 22 | PLAYERUNKNOWN’S BATTLEGROUNDS | | |
| 23 | Battlefield 2042 | | |
| 24 | Far Cry 6 | | |
| 25 | WARZONE | | |
| 26 | 1982 | | |
| 27 | Dying Light 2 Stay Human | | |
| 28 | GRID Autosport | | |
| 29 | Call of Duty: Modern Warfare | | |
| 30 | Horizon Zero Dawn | | |
| 31 | ARK: Survival Evolved | | |
| 32 | Assassin’s Creed: Origins | | |
| 33 | Phasmophobia | | |
| 34 | Grand Theft Auto IV | | |
| 35 | Escape from Tarkov | | |
| 36 | Devil May Cry 5 | | |
| 37 | Days Gone | | |
| 38 | Battlefield 1 | | |
| 39 | Sea of Thieves | | |
| 40 | Battlefield 5 | | |
| 41 | Assassin’s Creed Odyssey | | |
| 42 | Battlefield 4 | | |
| 43 | Assassin’s Creed Unity | | |
| 44 | NotGTAV | | |
| 45 | Call of Duty: WW2 | | |
| 46 | Hogwarts Legacy | | |
| 47 | Rust | | |
| 48 | Forza Horizon 4 | | |
| 49 | Monster Hunter: World | | |
| 50 | Fallout 4 | | |
| 51 | Overwatch | | |
| 52 | Dota 2 | | |
| 53 | Call of Duty: Black Ops II | | |
| 54 | UNCHARTED Legacy of Thieves Collection | | |
| 55 | Assassin’s Creed IV Black Flag | | |
| 56 | Tom Clancy’s Rainbow Six: Siege | | |
| 57 | Rocket League | | |
| 58 | Fall Guys: Ultimate Knockout | | |
| 59 | SEKIRO: SHADOWS DIE TWICE | | |
| 60 | Deathloop | | |
| 61 | Destiny 2 | | |
| 62 | Fortnite: Battle Royale | | |
| 63 | Team Fortress 2 | | |
| 64 | The Elder Scrolls V: Skyrim | | |
| 65 | Metal Gear Rising: Revengeance | | |
| 66 | Dark Souls 3 | | |
| 67 | Return to Monkey Island | | |
| 68 | GTA 5 Thor Mod | | |
| 69 | PUBG Lite | | |
| 70 | Far Cry 5 | | |
| 71 | MONSTER HUNTER RISE | | |
| 72 | Call of Duty: Modern Warfare 3 | | |
| 73 | Assassin’s Creed III | | |
| 74 | GTA 5 Premium Online Edition | | |
| 75 | Stray | | |
| 76 | Far Cry 3 | | |
| 77 | Assetto Corsa | | |
| 78 | Assassin’s Creed Syndicate | | |
| 79 | Assassin’s Creed | | |
| 80 | Call of Duty: Black Ops III | | |
| 81 | The Forest | | |
| 82 | Batman: Arkham Knight | | |
| 83 | Civilization 6 | | |
| 84 | GUNDAM EVOLUTION | | |
| 85 | Tekken 7 | | |
| 86 | Far Cry 4 | | |
| 87 | Assassin’s Creed Rogue | | |
| 88 | DayZ | | |
| 89 | Assassin’s Creed II | | |
| 90 | Age of Empires 4 | | |
| 91 | Dead by Daylight | | |
| 92 | Watch Dogs 2 | | |
| 93 | Arma III | | |
| 94 | Forza Horizon 3 | | |
| 95 | Dying Light | | |
| 96 | Cities: Skylines | | |
| 97 | Warframe | | |
| 98 | Terraria | | |
| 99 | Project Zomboid | | |
| 100 | Watch Dogs | | |


AMD Radeon R9 370 — review.

GPU Benchmark & Specs


The AMD Radeon R9 370 graphics card (GPU) ranks 208th in the performance rating. This is a good result. The card runs at a base clock speed of 925 MHz and can boost up to 975 MHz. It is equipped with 2 GB of memory with a clock speed of 5600 MHz and a bandwidth of 179.2 GB/s.

The power consumption of the graphics card is 110 Watt, and the fabrication process is 28 nm. Below you will find the main data on compatibility, dimensions, technologies and gaming performance test results. You can also read and leave comments.

Let’s take a closer look at the most important specifications of the graphics card. To get a good idea of which graphics card is the best, we recommend using the comparison service.

4.6

Out of 14

Hitesti score


General info

The basic set of information will help you find out the graphics card AMD Radeon R9 370 release date and its purpose (laptops or PCs), as well as the price at the time of the release and the average current price. This data also includes the architecture employed by the producer, and the chip’s codename.


| Place in performance rating: | 267 |

| Architecture: | GCN 1.0 |

| Code name: | Trinidad |

| Type: | Desktop |

| Release date: | 5 May 2015 (6 years ago) |

| Price now: | $248 |

| Value for money: | 13.35 |

Technical specs

This is the important information that defines the graphics card’s capability. The smaller the manufacturing process, the better. The core clock frequency determines the card’s speed (direct correlation), while signal processing is performed by the transistors (the more transistors, the faster the computations are carried out).

| Pipelines: | 1280 |

| Core clock speed: | 925 MHz |

| Boost clock speed: | 975 MHz |

| Transistor count: | 2,800 million |

| Manufacturing process technology: | 28 nm |

| Power consumption (TDP): | 110 Watt |

| Texture fill rate: | 78.00 |

| Floating-point performance: | 2,496 gflops |

Compatibility, dimensions and requirements

Today there are numerous form factors for PC cases, so it is extremely important to know the length of the graphics card and the types of its connection. This will help facilitate the upgrade process.

| Interface: | PCIe 3.0 x16 |

| Length: | 221 mm |

| Supplementary power connectors: | 1x 6-pin |

Memory

The onboard memory is used for storing data while conducting computations. Contemporary games and professional graphics apps place high demands on memory capacity and bandwidth. The higher these parameters, the more powerful and faster the graphics card is. The memory type, capacity and bandwidth of the AMD Radeon R9 370 are listed below.

| Memory type: | GDDR5 |

| Maximum RAM amount: | 2 GB |

| Memory bus width: | 256 Bit |

| Memory clock speed: | 5600 MHz |

| Memory bandwidth: | 179.2 GB/s |

Video outputs and ports

As a rule, all contemporary graphics cards feature several connection types and additional ports. Knowing these peculiarities is crucial for avoiding problems with connecting the graphics card to the monitor or other peripheral devices.

| Display Connectors: | 2x DVI, 1x HDMI, 1x DisplayPort |

| HDMI: | + |

API support

All APIs supported by the AMD Radeon R9 370 are listed below.

| DirectX: | 12 (11_1) |

| OpenGL: | 4.6 |

Overall gaming performance

All tests are based on an FPS counter. Let’s have a look at the place the AMD Radeon R9 370 has taken in the gaming performance test (the calculation has been made in accordance with the game developers’ recommended system requirements; it can differ from real-world results).


| Game | low 1280×720 | med. 1920×1080 | high 1920×1080 | ultra 1920×1080 | QHD 2560×1440 | 4K 3840×2160 |
|---|---|---|---|---|---|---|
| Horizon Zero Dawn (2020) | | | | | | |
| Death Stranding (2020) | | | | | | |
| F1 2020 (2020) | | | | | | |
| Gears Tactics (2020) | | | | | | |
| Doom Eternal (2020) | | | | | | |

| Legend | |
|---|---|
| 5 | Stutter – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to stutter and show low frame rates. |
| | May Stutter – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to stutter and show low frame rates. |
| 30 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 25fps or more |
| 40 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 35fps or more |
| 60 | Fluent – According to all known benchmarks with the specified graphical settings, this game is expected to run at 58fps or more |
| | May Run Fluently – The performance of this graphics card with this game is not well explored yet. According to interpolated information obtained from graphics cards of similar efficiency levels, the game is likely to show fluent frame rates. |
| ? | Uncertain – The testing of this graphics card on this game showed unexpected results. A slower card might be able to produce higher and more consistent frame rates when running the same benchmark scene. |
| | Uncertain – The performance of this graphics card with this game is not well explored yet. No reliable data interpolation can be made based on the performance of similar cards of the same category. |
| | The value in the fields reflects the average frame rate across the entire database. To obtain individual results, move your cursor over the value. |
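The numeric legend labels map onto frame-rate thresholds, which can be sketched as a small classifier. The 25, 35 and 58 fps cut-offs come from the legend; treating everything below 25 fps as the "5" (Stutter) bucket is an assumption, and `legend_bucket` is a hypothetical name.

```python
def legend_bucket(avg_fps: float) -> str:
    """Map an average frame rate to the legend label it would fall under."""
    if avg_fps >= 58:
        return "60"  # Fluent, expected to run at 58fps or more
    if avg_fps >= 35:
        return "40"  # Fluent, expected to run at 35fps or more
    if avg_fps >= 25:
        return "30"  # Fluent, expected to run at 25fps or more
    return "5"       # assumed: anything slower is shown as Stutter


for fps in (20, 28, 45, 75):
    print(fps, "->", legend_bucket(fps))
```

The sketch covers only the measured categories; the interpolated "May Stutter", "May Run Fluently" and "Uncertain" entries depend on data availability rather than on the frame rate alone.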

Benchmark

Benchmarks help determine the performance of the AMD Radeon R9 370 in standard tests. We have listed the world’s most famous benchmarks so that you can obtain accurate results in each (see the description). Preliminary testing of a graphics card is especially important under high loads, so that the user can see how well the graphics processing unit copes with computations and data processing.

Overall benchmark performance

NVIDIA GeForce GTX 760

21.93%

AMD Radeon HD 6990M Crossfire

21.93%

AMD Radeon R9 370

21.87%

AMD Radeon Pro Vega 16

21.68%

AMD Radeon HD 7870

21.38%

PassMark is a great benchmark that gets updated regularly and shows relevant information on the graphics card’s performance.

AMD Radeon HD 7950

NVIDIA GeForce GTX 760

AMD Radeon R9 370

AMD Radeon Pro Vega 16

AMD Radeon HD 7870



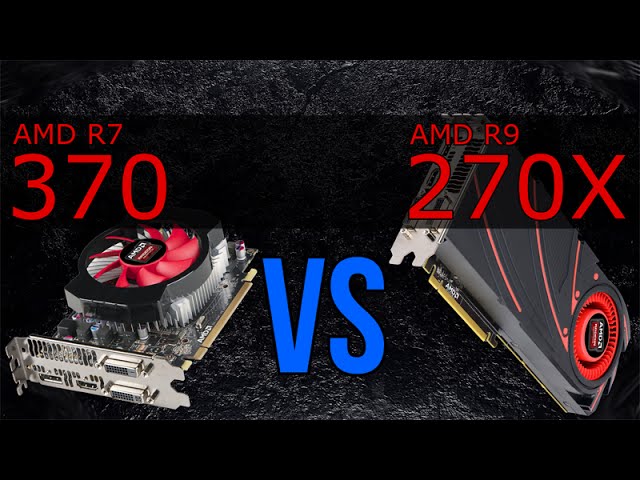

AMD Radeon R7 370 vs AMD Radeon R9 280: What is the difference?

44 points

AMD Radeon R7 370

37 points

AMD Radeon R9 280

Comparison winner

vs

54 facts in comparison

AMD Radeon R7 370

AMD Radeon R9 280

Why is AMD Radeon R7 370 better than AMD Radeon R9 280?

- 98MHz faster GPU clock speed? 925MHz vs 827MHz
- 3.1 GPixel/s higher pixel rate? 29.6 GPixel/s vs 26.5 GPixel/s
- 90W lower TDP? 110W vs 200W
- 150MHz faster memory clock speed? 1400MHz vs 1250MHz
- 600MHz higher effective memory clock speed? 5600MHz vs 5000MHz
- 0.8 newer version of DirectX? 12 vs 11.2
- 0.8 newer version of OpenCL? 2 vs 1.2
- Has Double Precision Floating Point (DPFP)?

Why is AMD Radeon R9 280 better than AMD Radeon R7 370?

- 1.07 TFLOPS higher floating-point performance? 2.96 TFLOPS vs 1.89 TFLOPS
- 33.4 GTexels/s higher texture rate? 92.6 GTexels/s vs 59.2 GTexels/s
- 1.5x more VRAM? 3GB vs 2GB
- 61GB/s more memory bandwidth? 240GB/s vs 179GB/s
- 128bit wider memory bus width? 384bit vs 256bit
- 768 more shading units? 1792 vs 1024
- 1513 million more transistors? 4313 million vs 2800 million
- 48 more texture mapping units (TMUs)? 112 vs 64

Which are the most popular comparisons?

- AMD Radeon R7 370 vs AMD Radeon RX 550
- AMD Radeon R9 280 vs AMD Radeon R9 280X
- AMD Radeon R7 370 vs AMD Radeon R9 270X
- AMD Radeon R9 280 vs Gigabyte Radeon RX 550
- AMD Radeon R7 370 vs AMD Radeon R9 370X
- AMD Radeon R9 280 vs AMD Radeon RX 570
- AMD Radeon R7 370 vs Nvidia GeForce GTX 1050
- AMD Radeon R9 280 vs AMD Radeon Vega 8
- AMD Radeon R7 370 vs MSI GeForce GTX 980 Armor 2X OC
- AMD Radeon R9 280 vs Nvidia GeForce GTX 750 Ti
- AMD Radeon R7 370 vs MSI GeForce GTX 1050 Ti
- AMD Radeon R9 280 vs AMD Radeon RX 470
- AMD Radeon R7 370 vs AMD Radeon RX 470
- AMD Radeon R9 280 vs Gigabyte GeForce GTX 1050 Ti
- AMD Radeon R7 370 vs Nvidia GeForce GTX 750 Ti
- AMD Radeon R9 280 vs Nvidia GeForce GTX 960
- AMD Radeon R7 370 vs Nvidia GeForce GTX 960
- AMD Radeon R9 280 vs Nvidia GeForce MX330
- AMD Radeon R7 370 vs AMD Radeon R5

Price comparison

User reviews

Overall Rating

AMD Radeon R7 370

1 User reviews

AMD Radeon R7 370

5.0/10

1 User reviews

AMD Radeon R9 280

0 User reviews

AMD Radeon R9 280

0.0/10

0 User reviews

Features

Value for money

5.0/10

1 votes

No reviews yet

Gaming

5.0/10

1 votes

No reviews yet

Performance

5.0/10

1 votes

No reviews yet

Fan noise

10.0/10

1 votes

No reviews yet

Reliability

6.0/10

1 votes

No reviews yet

Performance

1.GPU clock speed

925MHz

827MHz

The graphics processing unit (GPU) has a higher clock speed.

2.GPU turbo

975MHz

933MHz

When the GPU is running below its limitations, it can boost to a higher clock speed in order to give increased performance.

3.pixel rate

29.6 GPixel/s

26.5 GPixel/s

The number of pixels that can be rendered to the screen every second.

4.floating-point performance

1.89 TFLOPS

2.96 TFLOPS

Floating-point performance is a measurement of the raw processing power of the GPU.

5.texture rate

59.2 GTexels/s

92.6 GTexels/s

The number of textured pixels that can be rendered to the screen every second.

6.GPU memory speed

1400MHz

1250MHz

The memory clock speed is one aspect that determines the memory bandwidth.

7.shading units

Shading units (or stream processors) are small processors within the graphics card that are responsible for processing different aspects of the image.

8.texture mapping units (TMUs)

TMUs take textures and map them to the geometry of a 3D scene. More TMUs will typically mean that texture information is processed faster.

9.render output units (ROPs)

The ROPs are responsible for some of the final steps of the rendering process, writing the final pixel data to memory and carrying out other tasks such as anti-aliasing to improve the look of graphics.
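The texture rates listed above are consistent with multiplying the TMU count by the GPU clock speed. The sketch below reproduces them from the figures on this page (64 and 112 TMUs, 925 MHz and 827 MHz); the helper name `texture_rate_gtexels` is hypothetical, and using the base clock rather than the turbo clock is an assumption that happens to match the quoted values.

```python
def texture_rate_gtexels(tmus: int, gpu_clock_mhz: float) -> float:
    """Theoretical texture rate in GTexels/s: one texel per TMU per clock."""
    return tmus * gpu_clock_mhz / 1000


r7_370_rate = texture_rate_gtexels(tmus=64, gpu_clock_mhz=925)
r9_280_rate = texture_rate_gtexels(tmus=112, gpu_clock_mhz=827)
gap = r9_280_rate - r7_370_rate

print(f"R7 370: {r7_370_rate:.1f} GTexels/s")
print(f"R9 280: {r9_280_rate:.1f} GTexels/s")
print(f"difference: {gap:.1f} GTexels/s")
```

The R9 280 has the lower clock but 48 more TMUs, which is why it still leads on texture rate.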

Memory

1.effective memory speed

5600MHz

5000MHz

The effective memory clock speed is calculated from the size and data rate of the memory. Higher clock speeds can give increased performance in games and other apps.

2.maximum memory bandwidth

179GB/s

240GB/s

This is the maximum rate that data can be read from or stored into memory.

3.VRAM

VRAM (video RAM) is the dedicated memory of a graphics card. More VRAM generally allows you to run games at higher settings, especially for things like texture resolution.

4.memory bus width

256bit

384bit

A wider bus width means that it can carry more data per cycle. It is an important factor of memory performance, and therefore the general performance of the graphics card.


5.version of GDDR memory

Newer versions of GDDR memory offer improvements such as higher transfer rates that give increased performance.

6.Supports ECC memory

✖AMD Radeon R7 370

✖AMD Radeon R9 280

Error-correcting code memory can detect and correct data corruption. It is used when it is essential to avoid corruption, such as in scientific computing or when running a server.
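The memory figures in this section relate to each other in a simple way. The sketch below assumes both cards use GDDR5, whose effective data rate is four times the listed memory clock; that assumption matches the 1400 MHz to 5600 MHz and 1250 MHz to 5000 MHz pairs quoted above. The function names are hypothetical.

```python
GDDR5_TRANSFERS_PER_CLOCK = 4  # assumed memory type; matches the ratios listed above


def effective_clock_mhz(memory_clock_mhz: float) -> float:
    """Effective memory speed derived from the listed GPU memory speed."""
    return memory_clock_mhz * GDDR5_TRANSFERS_PER_CLOCK


def max_bandwidth_gbs(memory_clock_mhz: float, bus_width_bits: int) -> float:
    """Maximum memory bandwidth in GB/s from memory clock and bus width."""
    return effective_clock_mhz(memory_clock_mhz) * (bus_width_bits / 8) / 1000


cards = {
    "AMD Radeon R7 370": (1400, 256),  # (memory clock in MHz, bus width in bits)
    "AMD Radeon R9 280": (1250, 384),
}
results = {
    name: (effective_clock_mhz(clock), max_bandwidth_gbs(clock, width))
    for name, (clock, width) in cards.items()
}
for name, (speed, bandwidth) in results.items():
    print(f"{name}: {speed:.0f} MHz effective, {bandwidth:.1f} GB/s")
```

The R9 280 ends up with more bandwidth despite its slower memory because its bus is 128 bits wider.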

Features

1.DirectX version

DirectX is used in games, with newer versions supporting better graphics.

2.OpenGL version

OpenGL is used in games, with newer versions supporting better graphics.

3.OpenCL version

Some apps use OpenCL to apply the power of the graphics processing unit (GPU) for non-graphical computing. Newer versions introduce more functionality and better performance.


4.Supports multi-display technology

✔AMD Radeon R7 370

✔AMD Radeon R9 280

The graphics card supports multi-display technology. This allows you to configure multiple monitors in order to create a more immersive gaming experience, such as having a wider field of view.

5.load GPU temperature

Unknown. Help us by suggesting a value. (AMD Radeon R7 370)

Unknown. Help us by suggesting a value. (AMD Radeon R9 280)

A lower load temperature means that the card produces less heat and its cooling system performs better.

6.supports ray tracing

✖AMD Radeon R7 370

✖AMD Radeon R9 280

Ray tracing is an advanced light rendering technique that provides more realistic lighting, shadows, and reflections in games.

7.Supports 3D

✔AMD Radeon R7 370

✔AMD Radeon R9 280

Allows you to view in 3D (if you have a 3D display and glasses).

8.supports DLSS

✖AMD Radeon R7 370

✖AMD Radeon R9 280

DLSS (Deep Learning Super Sampling) is an upscaling technology powered by AI. It allows the graphics card to render games at a lower resolution and upscale them to a higher resolution with near-native visual quality and increased performance. DLSS is only available on select games.

9.PassMark (G3D) result

Unknown. Help us by suggesting a value. (AMD Radeon R9 280)

This benchmark measures the graphics performance of a video card. Source: PassMark.

Ports

1.has an HDMI output

✔AMD Radeon R7 370

✔AMD Radeon R9 280

Devices with an HDMI or mini HDMI port can transfer high definition video and audio to a display.

2.HDMI ports

Unknown. Help us by suggesting a value. (AMD Radeon R9 280)

More HDMI ports mean that you can simultaneously connect numerous devices, such as video game consoles and set-top boxes.

3.HDMI version

Unknown. Help us by suggesting a value. (AMD Radeon R7 370)

Unknown. Help us by suggesting a value. (AMD Radeon R9 280)

Newer versions of HDMI support higher bandwidth, which allows for higher resolutions and frame rates.

4. DisplayPort outputs

Unknown. Help us by suggesting a value. (AMD Radeon R9 280)

Allows you to connect to a display using DisplayPort.

5. DVI outputs

Allows you to connect to a display using DVI.

6. Mini DisplayPort outputs

Allows you to connect to a display using mini-DisplayPort.

AMD Radeon R9 370 review: GPU specs, performance benchmarks

The Radeon R9 370 video card was released by AMD on 5 May 2015. It is designed for desktop computers and is based on the GCN 1.0 microarchitecture, codenamed Trinidad.

Core clock speed: 925 MHz. Boost clock speed: 975 MHz. Texture fill rate: 78 GTexel/s. Pipelines: 1280. Floating-point performance: 2,496 GFLOPS. Manufacturing process technology: 28 nm. Transistor count: 2,800 million. Power consumption (TDP): 110 W.

Memory type: GDDR5. Maximum RAM amount: 2 GB. Memory bus width: 256 bit. Memory clock speed: 5600 MHz. Memory bandwidth: 179.2 GB/s.
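The floating-point, texture and bandwidth figures listed here follow from the unit counts and clocks. The sketch below reproduces them under assumptions not stated in this spec list: two floating-point operations per shader per clock (one fused multiply-add), and the usual GCN layout of 64 shaders and 4 texture units per compute unit. The function names are illustrative only.

```python
# Peak rates derived from the Radeon R9 370 figures listed above.
SHADERS = 1280          # "Pipelines"
BOOST_CLOCK_MHZ = 975   # boost clock speed
MEM_RATE_MHZ = 5600     # memory clock speed (effective data rate)
BUS_WIDTH_BITS = 256    # memory bus width

# Assumptions, not stated in the spec list itself:
FLOPS_PER_SHADER_PER_CLOCK = 2   # one fused multiply-add per clock
SHADERS_PER_CU = 64              # GCN compute unit size
TMUS_PER_CU = 4                  # texture units per compute unit


def peak_gflops(shaders: int, clock_mhz: float) -> float:
    """Peak single-precision rate in GFLOPS."""
    return shaders * FLOPS_PER_SHADER_PER_CLOCK * clock_mhz / 1000


def texture_rate_gtexels(shaders: int, clock_mhz: float) -> float:
    """Peak texture fill rate in GTexel/s."""
    tmus = shaders // SHADERS_PER_CU * TMUS_PER_CU
    return tmus * clock_mhz / 1000


def memory_bandwidth_gbs(bus_width_bits: int, rate_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (bytes per transfer times data rate)."""
    return bus_width_bits / 8 * rate_mhz / 1000


print(peak_gflops(SHADERS, BOOST_CLOCK_MHZ))                  # 2496.0
print(texture_rate_gtexels(SHADERS, BOOST_CLOCK_MHZ))         # 78.0
print(memory_bandwidth_gbs(BUS_WIDTH_BITS, MEM_RATE_MHZ))     # 179.2
```

All three results match the listed 2,496 GFLOPS, 78 GTexel/s and 179.2 GB/s, which suggests the listed values are theoretical peaks at the boost clock rather than measurements.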

Benchmarks

| Name | Value |
|---|---|
| PassMark G3D Mark | 4722 |
| PassMark G2D Mark | 781 |
| Geekbench OpenCL | 75346 |
| CompuBench 1.5 Desktop Face Detection | 64.576 mPixels/s |
| CompuBench 1.5 Desktop Bitcoin Mining | 336.491 mHash/s |
| GFXBench 4.0 Car Chase Offscreen | 6096 Frames |
| GFXBench 4.0 Manhattan | 3718 Frames |
| GFXBench 4.0 T-Rex | 3357 Frames |
| GFXBench 4.0 Car Chase Offscreen | 6096.000 Fps |
| GFXBench 4.0 Manhattan | 3718.000 Fps |
| GFXBench 4.0 T-Rex | 3357.000 Fps |
| 3DMark Fire Strike Graphics Score | 0 |

Specifications (specs)

| Parameter | Value |
|---|---|
| Architecture | GCN 1.0 |
| Code name | Trinidad |
| Launch date | 5 May 2015 |
| Place in performance rating | 289 |
| Type | Desktop |
| Boost clock speed | 975 MHz |
| Core clock speed | 925 MHz |
| Floating-point performance | 2,496 GFLOPS |
| Manufacturing process technology | 28 nm |
| Pipelines | 1280 |
| Texture fill rate | 78 GTexel/s |
| Thermal Design Power (TDP) | 110 Watt |
| Transistor count | 2,800 million |
| Display Connectors | 2x DVI, 1x HDMI, 1x DisplayPort |
| Interface | PCIe 3.0 x16 |
| Length | 221 mm |
| Supplementary power connectors | 1x 6-pin |
| DirectX | 12.0 (11_1) |
| OpenGL | 4.5 |
| Maximum RAM amount | 2 GB |
| Memory bandwidth | 179.2 GB/s |
| Memory bus width | 256 Bit |
| Memory clock speed | 5600 MHz |
| Memory type | GDDR5 |

Compare AMD Radeon R9 370 with others

- AMD Radeon R9 370 vs ATI Radeon X800 SE

- AMD Radeon R9 370 vs ATI Mobility Radeon HD 540v

- AMD Radeon R9 370 vs AMD Radeon HD 7990

- AMD Radeon R9 370 vs AMD Radeon RX 560X

- AMD Radeon R9 370 vs AMD Radeon RX 5500M

- AMD Radeon R9 370 vs NVIDIA Quadro T1000 Max-Q

Radeon R7 370 vs Radeon R9 370 Graphics cards Comparison

Find out whether it is worth upgrading your current GPU setup by comparing Radeon R7 370 and Radeon R9 370. Here you can take a closer look at graphics card specs such as core clock speed, memory type and size, and display connectors. Price, overall benchmark results, and gaming performance are usually the defining factors when choosing between Radeon R7 370 and Radeon R9 370. Make sure that the graphics card has compatible dimensions and will fit in your new or current computer case. These graphics cards may also have different system power recommendations, so take that into consideration and upgrade your PSU if necessary.

Main Specs

| | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| Power consumption (TDP) | 110 Watt | 110 Watt |
| Interface | PCIe 3.0 x16 | PCIe 3.0 x16 |
| Supplementary power connectors | 1x 6-pin | 1x 6-pin |
| Memory type | GDDR5 | GDDR5 |
| Maximum RAM amount | 4 GB | 2 GB |
| Display Connectors | 2x DVI, 1x HDMI, 1x DisplayPort | 2x DVI, 1x HDMI, 1x DisplayPort |

- Both graphics cards have the same power consumption of 110 Watt.

- Both video cards use a PCIe 3.0 x16 interface to connect to the motherboard.

- Radeon R7 370 has 2 GB more memory than Radeon R9 370.

- Both cards are used in desktops.

- Radeon R7 370 and Radeon R9 370 are both built on the GCN 1.0 architecture.

- Radeon R7 370 and Radeon R9 370 are both manufactured on a 28 nm process.

- Radeon R9 370 is 69 mm longer than Radeon R7 370.

- The listed memory clock speed of Radeon R9 370 (5600 MHz) is 4625 MHz higher than that of Radeon R7 370 (975 MHz). The two figures appear to use different conventions, since both cards are listed with the same 256-bit bus and 179.2 GB/s memory bandwidth.

Game benchmarks

All values are frames per second (FPS).

Assassin’s Creed Odyssey

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 21−24 | 35−40 |
| ultra / 1080p | 14−16 | 21−24 |
| QHD / 1440p | 8−9 | 16−18 |
| 4K / 2160p | 5−6 | 10−11 |
| low / 720p | 40−45 | 60−65 |
| medium / 1080p | 27−30 | 40−45 |

The average gaming FPS of Radeon R9 370 in Assassin’s Creed Odyssey is 60% higher than that of Radeon R7 370.

Battlefield 5

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 35−40 | 55−60 |
| ultra / 1080p | 30−35 | 45−50 |
| QHD / 1440p | 14−16 | 35−40 |
| 4K / 2160p | 9−10 | 18−20 |
| low / 720p | 75−80 | 100−110 |
| medium / 1080p | 40−45 | 60−65 |

The average gaming FPS of Radeon R9 370 in Battlefield 5 is 54% higher than that of Radeon R7 370.

Call of Duty: Warzone

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 50−55 | 50−55 |
| QHD / 1440p | 0−1 | 0−1 |

Radeon R7 370 and Radeon R9 370 have the same average FPS in Call of Duty: Warzone.

Counter-Strike: Global Offensive

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 230−240 | 250−260 |
| medium / 768p | 210−220 | 220−230 |
| ultra / 1080p | 130−140 | 180−190 |
| QHD / 1440p | 100−110 | 110−120 |
| 4K / 2160p | 55−60 | 70−75 |
| high / 768p | 170−180 | 210−220 |

The average gaming FPS of Radeon R9 370 in Counter-Strike: Global Offensive is 15% higher than that of Radeon R7 370.

Cyberpunk 2077

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 60−65 | 60−65 |
| ultra / 1080p | 55−60 | − |
| medium / 1080p | 55−60 | 55−60 |

Radeon R7 370 and Radeon R9 370 have the same average FPS in Cyberpunk 2077.

Dota 2

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 120−130 | 120−130 |
| medium / 768p | 110−120 | 110−120 |
| ultra / 1080p | 85−90 | 100−110 |

The average gaming FPS of Radeon R9 370 in Dota 2 is 5% higher than that of Radeon R7 370.

Far Cry 5

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 27−30 | 45−50 |
| ultra / 1080p | 24−27 | 40−45 |
| QHD / 1440p | 21−24 | 27−30 |
| 4K / 2160p | 9−10 | 14−16 |
| low / 720p | 55−60 | 80−85 |
| medium / 1080p | 30−33 | 45−50 |

The average gaming FPS of Radeon R9 370 in Far Cry 5 is 48% higher than that of Radeon R7 370.

Fortnite

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 35−40 | 60−65 |
| ultra / 1080p | 27−30 | 45−50 |
| QHD / 1440p | 16−18 | 27−30 |
| 4K / 2160p | − | 27−30 |
| low / 720p | 130−140 | 180−190 |
| medium / 1080p | 80−85 | 110−120 |

The average gaming FPS of Radeon R9 370 in Fortnite is 45% higher than that of Radeon R7 370.

Forza Horizon 4

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 35−40 | 60−65 |
| ultra / 1080p | 27−30 | 45−50 |
| QHD / 1440p | 16−18 | 30−35 |
| 4K / 2160p | 14−16 | 24−27 |
| low / 720p | 75−80 | 100−110 |
| medium / 1080p | 40−45 | 65−70 |

The average gaming FPS of Radeon R9 370 in Forza Horizon 4 is 55% higher than that of Radeon R7 370.

Grand Theft Auto V

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 110−120 | 140−150 |
| medium / 768p | 100−105 | 120−130 |
| high / 1080p | 45−50 | 70−75 |
| ultra / 1080p | 18−20 | 30−35 |
| QHD / 1440p | 9−10 | 21−24 |

The average gaming FPS of Radeon R9 370 in Grand Theft Auto V is 36% higher than that of Radeon R7 370.

Metro Exodus

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 14−16 | 24−27 |
| ultra / 1080p | 12−14 | 20−22 |
| QHD / 1440p | 10−12 | 16−18 |
| 4K / 2160p | 3−4 | 8−9 |
| low / 720p | 45−50 | 65−70 |
| medium / 1080p | 20−22 | 30−35 |

The average gaming FPS of Radeon R9 370 in Metro Exodus is 55% higher than that of Radeon R7 370.

Minecraft

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 120−130 | 130−140 |
| medium / 1080p | 110−120 | 120−130 |

The average gaming FPS of Radeon R9 370 in Minecraft is 8% higher than that of Radeon R7 370.

PLAYERUNKNOWN’S BATTLEGROUNDS

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| ultra / 1080p | 14−16 | 14−16 |
| low / 720p | 75−80 | 100−110 |
| medium / 1080p | 18−20 | 18−20 |

The average gaming FPS of Radeon R9 370 in PLAYERUNKNOWN’S BATTLEGROUNDS is 24% higher than that of Radeon R7 370.

Red Dead Redemption 2

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| high / 1080p | 16−18 | 24−27 |
| ultra / 1080p | 10−12 | 16−18 |
| QHD / 1440p | 3−4 | 10−11 |
| 4K / 2160p | 2−3 | 7−8 |
| low / 720p | 40−45 | 65−70 |
| medium / 1080p | 21−24 | 35−40 |

The average gaming FPS of Radeon R9 370 in Red Dead Redemption 2 is 68% higher than that of Radeon R7 370.

The Witcher 3: Wild Hunt

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 80−85 | 130−140 |
| medium / 768p | 50−55 | 85−90 |
| high / 1080p | 27−30 | 45−50 |
| ultra / 1080p | 16−18 | 24−27 |
| 4K / 2160p | 9−10 | 16−18 |

The average gaming FPS of Radeon R9 370 in The Witcher 3: Wild Hunt is 63% higher than that of Radeon R7 370.

World of Tanks

| Settings | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| low / 768p | 90−95 | 90−95 |
| medium / 768p | 60−65 | 60−65 |
| ultra / 1080p | 40−45 | 50−55 |
| high / 768p | 55−60 | 60−65 |

The average gaming FPS of Radeon R9 370 in World of Tanks is 6% higher than that of Radeon R7 370.

Full Specs

| | Radeon R7 370 | Radeon R9 370 |
|---|---|---|
| Architecture | GCN 1.0 | GCN 1.0 |
| Code name | Trinidad (Pitcairn) | Trinidad |
| Type | Desktop | Desktop |
| Release date | 5 May 2015 | 5 May 2015 |
| Pipelines | 1024 | 1280 |
| Core clock speed | 925 MHz | |
| Boost Clock | 975 MHz | 975 MHz |
| Transistor count | 2,800 million | 2,800 million |
| Manufacturing process technology | 28 nm | 28 nm |
| Texture fill rate | 62.40 | 78.00 |
| Floating-point performance | 1,997 gflops | 2,496 gflops |
| Length | 152 mm | 221 mm |
| Memory bus width | 256 Bit | 256 Bit |
| Memory clock speed | 975 MHz | 5600 MHz |
| Memory bandwidth | 179.2 GB/s | 179.2 GB/s |
| Shared memory | - | |
| DirectX | 12 (11_1) | |
| Shader Model | 5.1 | 5.1 |
| OpenGL | 4.6 | 4.6 |
| OpenCL | 2.0 | 1.2 |
| Vulkan | + | 1.2.131 |
| Monero / XMR (CryptoNight) | 0.42 kh/s | |
| FreeSync | + | |
| Bus support | PCIe 3.0 | |
| HDMI | + | |
| Bitcoin / BTC (SHA256) | 359 Mh/s | 336 Mh/s |
| Eyefinity | + | |
| TrueAudio | + | |
| Mantle | + | |
| Design | reference | |
| Number of Eyefinity displays | 6 | |
| DisplayPort support | + | |
| CrossFire | + | |
| VCE | + | |
| DDMA audio | + | |
| Decred / DCR (Decred) | 0.52 Gh/s | |
| Ethereum / ETH (DaggerHashimoto) | 14 Mh/s | |
| Zcash / ZEC (Equihash) | 150 Sol/s | |
| AppAcceleration | + | |

Similar compares

- Radeon R7 370 vs P104 100

- Radeon R7 370 vs Radeon R9 M390X

- Radeon R9 370 vs P104 100

- Radeon R9 370 vs Radeon R9 M390X

- Radeon R7 370 vs Radeon HD 7950

- Radeon R7 370 vs GeForce GTX 670MX SLI

- Radeon R9 370 vs Radeon HD 7950

- Radeon R9 370 vs GeForce GTX 670MX SLI

AMD Radeon Video Card Families Reference

- Radeon X Family Reference

- Radeon X1000 Family Reference

- Radeon HD 2000 Family Reference

- Radeon HD 4000 Family Reference

- Radeon HD 5000 Family Reference

- Radeon HD 6000 Family Reference

- Radeon HD 7000 Family Reference

- Radeon 200 Family Reference

- Radeon 300 Family Reference

Graphic processors specifications

GPUs covered: Fiji, Hawaii, Antigua, Trinidad / Curacao / Pitcairn, Tobago / Bonaire. Empty cells mark values that could not be recovered from the source table.

| Model | GPU | ALU/TMU/ROP | Core clock, MHz | Memory clock, MHz | Memory, GB | Memory bandwidth, GB/s (bus width, bits) | Texturing, GTex/s | Fill rate, GPix/s | TDP, W |
|---|---|---|---|---|---|---|---|---|---|
| Radeon R9 Fury X | Fiji | 4096/256/64 | 1050 | 1000 | 4 HBM | 512 (4096) | 269 | 67 | 275 |
| Radeon R9 Fury | Fiji | 3584/224/64 | 1000 | 1000 | 4 HBM | 512 (4096) | 224 | 64 | 275 |
| Radeon R9 Nano | Fiji | 4096/256/64 | | | 4 HBM | | | 64 | 175 |
| Radeon R9 390X | Hawaii | 2816/176/64 | 1050 | 1500 (6000) | 8 GDDR5 | 384 (512) | 185 | 67 | 275 |
| Radeon R9 390 | Hawaii | 2560/160/64 | 1000 | 1500 (6000) | 8 GDDR5 | 384 (512) | 160 | 64 | 275 |
| Radeon R9 380X | Tonga | 2048/128/32 | 970 | 1425 (5700) | 4 GDDR5 | 182 (256) | 124.2 | 31.0 | |
| Radeon R9 380 | Tonga | 1792/112/32 | 970 | 1425 (5700) | 2-4 GDDR5 | 182 (256) | 108.6 | | 190 |
| Radeon R7 370 | Pitcairn | 1024/64/32 | 975 | 1400 (5600) | 2-4 GDDR5 | (256) | 62.4 | 31.2 | 110 |
| Radeon R7 360 | Bonaire | 768/48/16 | 1050 | 1625 (6500) | 2 GDDR5 | 104 (128) | 50.4 | 16.8 | 100 |
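The texturing and fill-rate columns in this table appear to be theoretical peaks: the TMU or ROP count multiplied by the core clock. The following sketch checks that reading against a few rows that survive intact in the table. The tuple layout and the 0.5 tolerance (to allow for rounding in the listed values) are choices made for this example.

```python
# (label, TMUs, ROPs, core clock in MHz, listed GTex/s, listed GPix/s)
ROWS = [
    ("R9 Fury (Fiji)", 224, 64, 1000, 224, 64),
    ("R9 390X (Hawaii)", 176, 64, 1050, 185, 67),
    ("R9 390 (Hawaii)", 160, 64, 1000, 160, 64),
    ("360 (Bonaire)", 48, 16, 1050, 50.4, 16.8),
]


def peak_rates(tmus: int, rops: int, clock_mhz: float) -> tuple[float, float]:
    """Return (texturing in GTex/s, fill rate in GPix/s) at one op per unit per clock."""
    return tmus * clock_mhz / 1000, rops * clock_mhz / 1000


def matches_table(row, tolerance: float = 0.5) -> bool:
    """True when the computed peaks agree with the listed values within rounding."""
    _, tmus, rops, clock, listed_tex, listed_fill = row
    tex, fill = peak_rates(tmus, rops, clock)
    return abs(tex - listed_tex) <= tolerance and abs(fill - listed_fill) <= tolerance


for row in ROWS:
    print(row[0], peak_rates(row[1], row[2], row[3]), matches_table(row))
```

Every row checked this way agrees with the listed figures, so the same formula can be used to sanity-check the cells of the table that were damaged.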

Graphics accelerator AMD Radeon R9 Fury X

6 floating point ALUs (integer and floating point formats supported, with FP32 and FP64 precision)

With this model, AMD opened a new subfamily of elite Fury video cards, nominally part of the Radeon 300 line; this one is a single-chip solution. The company did not explain the choice of the name Fury. It was probably taken from the once successful ATI Rage Fury family of video cards, released at the end of the 1990s. In addition, the Furies are the goddesses of vengeance in ancient Roman mythology, while the Titans are gods from ancient Greek mythology.

The Radeon R9 Fury X sits at the very top of the company’s product line with an MSRP of $649, exactly on par with its direct competitor, Nvidia’s GeForce GTX 980 Ti, which was announced at the end of May as a preemptive strike against the then-upcoming AMD solution. The GTX 980 Ti offered performance close to that of the high-end GeForce GTX Titan X for much less money, and it is the card the Fury X now has to compete with.

One of the most controversial points in the specifications of the new model is that it has only 4 GB of video memory. That is enough even for high resolutions at maximum quality settings, but a number of modern games at 4K rendering resolution with full-screen anti-aliasing and high quality settings already need more. AMD would be happy to offer an 8 GB option, but the first generation of HBM memory simply does not allow it. The subtleties of HBM memory are covered in more detail below.

The graphics card itself is very compact: the Radeon R9 Fury X PCB is only 7.5 inches (about 190 mm) long, well short of typical high-end reference cards. The small board is paired with a large liquid-cooling radiator. The combination of liquid cooling and HBM memory made it possible to reduce the physical dimensions and the number of components on the board (in the power circuitry, in particular). You can compare GPU and RAM areas on Radeon R9 290X and R9 Fury X:

Under typical gaming conditions, the Radeon R9 Fury X consumes about 275 W, but since it is equipped with a pair of 8-pin PCI-E power connectors, it can draw up to 375 W from the power supply, which leaves considerable headroom. In terms of I/O interfaces, the Radeon R9 Fury X can drive up to six displays (using a DisplayPort 1.2 MST hub) connected via DVI (adapter required), HDMI 1.4a and DisplayPort 1.2a.
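The 375 W ceiling follows from the nominal limits of the card's power inputs. A minimal sketch, assuming the commonly cited PCI Express limits of 75 W from the x16 slot, 75 W per 6-pin connector and 150 W per 8-pin connector (these limits are not stated in the article):

```python
# Nominal power limits, assumed from the PCI Express specifications.
SLOT_W = 75        # PCIe x16 slot
SIX_PIN_W = 75     # each 6-pin auxiliary connector
EIGHT_PIN_W = 150  # each 8-pin auxiliary connector


def max_board_power(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Maximum nominal power a card can draw through the slot and its connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


fury_x_limit = max_board_power(eight_pin=2)
print(fury_x_limit)          # 375
print(fury_x_limit - 275)    # 100 W of headroom over typical gaming draw
```

By the same arithmetic, a card with a single 6-pin connector, such as the 110 W Radeon R9 370 described earlier, has a nominal ceiling of 150 W.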

The board has one HDMI video output and three DisplayPort outputs. AMD decided to drop the outdated DVI connector altogether, although the Radeon HD 7970 and Radeon R9 290X still had one, and sometimes two. Users of older monitors with DVI inputs will now have to use adapters: passive ones if Single Link is enough, and more expensive ones for Dual Link connections.

Alas, due to the lack of support for HDMI 2.0, the new product supports image output in 4K resolution at 60 Hz only via DisplayPort. It is likely that over time it will also be possible to use active adapters from DisplayPort to HDMI 2.0, but so far such configurations do not work.

Architectural and functional features

Since the Radeon R9 Fury X is based on the Fiji GPU, which belongs to the long-established Graphics Core Next (GCN) architecture, many of the details are covered in our earlier articles. This architecture is the basis of all modern AMD solutions, and even the latest GPUs differ only in some modifications to their compute capabilities and in additional graphics features that matter for DirectX 12 support.

Like the previous high-end Hawaii chip, the new GPU does not introduce a completely new architecture; it uses the latest version of the current Graphics Core Next, which can be called GCN 1.2 or third-generation GCN. Fiji makes only a small number of changes compared to last year’s Tonga, so it too belongs to the GCN 1.2 generation. The basic changes in Fiji are everything we have already seen in the Tonga chip, on which the Radeon R9 285 video card is based.

The new top-end graphics processor includes all the GCN 1.2 improvements: better geometry processing and tessellation performance (in this respect Fiji is on par with Hawaii and Tonga and faster than Tahiti), new lossless frame buffer compression methods, some 16-bit multimedia instructions, and an L2 cache enlarged to 2 MB. On the compute side, the new GPU has received improved scheduling and task distribution and several new instructions for parallel data processing.

The most prominent architectural improvement is the significantly improved lossless frame buffer compression, for which the ROP blocks have been specially modified. Frame buffer operations (mostly writes) are the most demanding on memory bandwidth, because the GPU writes a very large number of pixels to the buffer each frame. Making this work more efficient therefore reduces memory bandwidth requirements and increases the so-called effective memory bandwidth.

In GCN 1.2 chips, the new frame buffer compression methods provide compression ratios of up to 8:1, which on average results in a 40% improvement in bandwidth efficiency. For example, the Radeon R9 285 with a 256-bit bus has an effective bandwidth similar to that of the Radeon R9 280 with a 384-bit memory bus. In the top-end Fiji chip, the effective memory bandwidth reaches very high values, since the chip uses 4096-bit HBM memory, but more on that later.

The GCN 1.2 architecture also brought some changes to the compute units: improved scheduling and distribution of tasks between execution units within the heterogeneous HSA architecture, new 16-bit instructions that increase speed and reduce power consumption, and improvements in parallel data processing, which matter most for the top Fiji GPU. Like other GCN 1.2 products, the new GPU supports limited data exchange between different SIMD lanes, opening up the possibility of new efficient algorithms in OpenCL programs.

For ordinary enthusiasts, the most important architectural changes are the already mentioned frame buffer compression and the acceleration of geometry processing and tessellation. The latter improvement was made back in Hawaii, and nothing changed in Tonga. In Fiji, however, the geometry pipeline has been further optimized, which should have a positive effect in tasks with a large amount of geometry and tessellation.

The general layout of the Fiji GPU is very similar to that of the Hawaii chip, released back in 2013. Both GPUs are divided into four Shader Engines, each with its own geometry processor and rasterizer, as well as four enlarged ROP blocks, each capable of processing 16 pixels per clock (for a total of 64 ROPs in each of these chips). The GPU has a single command processor and eight Asynchronous Compute Engines, which have been modified to reflect the changes in GCN 1.2.

In terms of organization, AMD engineers changed almost nothing in Fiji compared to Hawaii: they simply placed more Compute Units in each Shader Engine (16 instead of 11) while leaving unchanged both the number of engines (probably an architectural limitation of GCN in its current form) and the number of other execution units in them.

Given that each CU contains 64 ALUs, there are 1024 ALUs per Shader Engine and 4096 stream processors for the whole of Fiji. Accordingly, the number of texture units was also increased, because for each CU in the video chips of the GCN architecture there are four TMUs, so there are 256 of them in the new GPU, unlike 176 TMUs in Hawaii.
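The unit counts quoted here follow directly from the per-engine layout. The sketch below recomputes them for Fiji and Hawaii from the figures given in the text (four Shader Engines, 64 ALUs and four TMUs per CU); the function and key names are illustrative.

```python
SHADER_ENGINES = 4   # unchanged between Hawaii and Fiji
ALUS_PER_CU = 64     # stream processors per Compute Unit
TMUS_PER_CU = 4      # texture units per Compute Unit


def gpu_units(cus_per_engine: int) -> dict[str, int]:
    """Total CU, ALU and TMU counts for a GCN chip with the given CUs per Shader Engine."""
    cus = SHADER_ENGINES * cus_per_engine
    return {
        "cus": cus,
        "alus_per_engine": cus_per_engine * ALUS_PER_CU,
        "alus": cus * ALUS_PER_CU,
        "tmus": cus * TMUS_PER_CU,
    }


fiji = gpu_units(16)
hawaii = gpu_units(11)
print(fiji)    # {'cus': 64, 'alus_per_engine': 1024, 'alus': 4096, 'tmus': 256}
print(hawaii)  # {'cus': 44, 'alus_per_engine': 704, 'alus': 2816, 'tmus': 176}
```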

Accordingly, the theoretical compute and texturing rates of Fiji have increased, but the peak geometry processing rate and fill rate (ROP performance) remain at almost the same level, differing only because of GPU clock speed. In some cases, overall performance may therefore be limited by fill rate or geometry processing, but this depends heavily on conditions such as scene complexity and overdraw, resolution, and full-screen anti-aliasing.

AMD representatives claim, however, that in the case of Hawaii memory bandwidth is most often the limiting factor, that ROP performance is sufficient in most cases, and that rendering speed is rarely limited by the ROPs. But Fiji uses faster HBM memory with a wide bus as well as new frame buffer compression methods, and its ROP blocks have gained more capabilities for working with data at 16 bits per color component. So the number of cases in which ROP throughput limits overall performance will most likely only increase. It was not possible to increase the number of ROP blocks in the new chip, since the GPU turned out quite large as it is.

With 4096 ALUs and a maximum GPU frequency of 1050 MHz, the theoretical single-precision (FP32) performance is 8.6 teraflops. But double-precision performance in the new chip is much worse than in Hawaii. Here AMD had to go in about the same direction that Nvidia chose for its top-end Maxwell chip, shifting the focus towards gaming to the detriment of professional computing.

Although different GCN chips can perform FP64 calculations at 1/2 to 1/16 of the FP32 rate, for Fiji AMD chose the minimum value (1/16), which gives an FP64 rate of about 538 gigaflops. Compare this to Hawaii, which can perform double-precision calculations at half the single-precision rate. Even simpler, cheaper GCN chips have a 1/8 rate! So Fiji has become as gaming-oriented as the GM200. It seems that AMD took its cue (for better or worse, depending on the point of view) from Nvidia, cutting down its top-end GPU even further. In the end, both top chips from AMD and Nvidia are aimed more at gaming than at professional computing.
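The FP32 and FP64 figures can be reproduced with a short calculation. This sketch assumes two floating-point operations per ALU per clock (one fused multiply-add), which the article does not state explicitly; the ALU count, clock and FP64 ratios come from the text.

```python
FLOPS_PER_ALU_PER_CLOCK = 2  # assumption: one fused multiply-add per clock


def fp32_gflops(alus: int, clock_mhz: float) -> float:
    """Theoretical peak single-precision rate in GFLOPS."""
    return alus * FLOPS_PER_ALU_PER_CLOCK * clock_mhz / 1000


def fp64_gflops(alus: int, clock_mhz: float, fp64_ratio: float) -> float:
    """Theoretical peak double-precision rate for a given FP64:FP32 ratio."""
    return fp32_gflops(alus, clock_mhz) * fp64_ratio


fiji_fp32 = fp32_gflops(4096, 1050)
print(round(fiji_fp32 / 1000, 1))                    # 8.6 (TFLOPS)
print(round(fp64_gflops(4096, 1050, 1 / 16), 1))     # 537.6 (GFLOPS)
print(round(fp64_gflops(4096, 1050, 1 / 2), 1))      # 4300.8 if Fiji had a 1/2 rate
```

The 1/16 result rounds to the roughly 538 gigaflops quoted for Fiji; the last line only illustrates what a 1/2 rate would have meant on the same chip.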

In other respects, too, Fiji is not quite a typical video chip for AMD. This time the company released a rather large GPU, almost 600 mm² in area! For several years AMD had tried to avoid such large and hot video chips, since they are too expensive to develop and manufacture and take longer to get from the start of development to market because of design complexity and lower yields. Not to mention that with larger GPUs, the risk of failure is higher. By now, though, the 28 nm process technology is thoroughly mature and causes no particular problems for video chip manufacturers.

Even Hawaii was rather big in its day at 438 mm², and with Fiji AMD has, for the first time in several years, produced a GPU that is only slightly less complex than the competing Nvidia chip in terms of transistor count and die size. Fiji has a die size of 596 mm², which is only 5 mm² less than Nvidia’s GM200. The figure of about 600 mm² is itself interesting: it seems that Taiwan’s TSMC is simply not capable of mass-producing even larger chips, and both companies aimed to get the maximum within this limitation. It is all the more interesting to see how much each achieved in terms of speed and functionality.

The reduction in FP64 rate greatly simplified the compute units in Fiji, and the number of CUs increased from 44 to 64, so the new GPU has exactly 4096 ALUs instead of 2816. While compute and texturing performance increased compared to Hawaii, other performance parameters have not changed much. For example, the number of geometry engines and the theoretical geometry processing rate remain the same (the latter is slightly higher because of the higher GPU clock of the Radeon R9 Fury X versus the Radeon R9 290X). But the GCN 1.2 architecture also includes improvements that speed up geometry processing, so Fiji should be faster than Hawaii in this regard even at equal peak performance. We will definitely check this in our synthetic tests.

Although the GPU itself has changed very little architecturally, there are several changes associated with the new type of memory. The Fiji GPU includes eight HBM memory controllers, each serving half of an HBM stack (there are four stacks in total), and each controller is associated with its own eight ROPs and a 256 KB L2 cache partition.

The Fury X has 60% more video memory bandwidth than the R9 290X (a 4096-bit bus at 512 GB/s vs. a 512-bit bus at 320 GB/s). Together with the improvements in frame buffer color compression, this gives roughly twice the effective bandwidth, which is one of the key indicators for modern GPUs in real applications. Compression works well in 3D rendering but hardly at all in compute tasks; in any case, HBM memory provides a good increase in memory bandwidth. Even with such high bandwidth, the cache is still many times faster, so the L2 cache was also enlarged in the new GPU: Fiji has 2 MB of L2 cache, compared to 1 MB in the previous top solution.
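The bandwidth and cache figures in this part of the article can be tied together with a few lines of arithmetic. The sketch below uses only numbers from the text, with one assumption made for illustration: the average 40% compression gain is applied to the Fury X only, on the reading that the R9 290X (Hawaii) lacks the GCN 1.2 compression.

```python
FURY_X_GBS = 512          # raw bandwidth, 4096-bit HBM
R9_290X_GBS = 320         # raw bandwidth, 512-bit GDDR5
COMPRESSION_GAIN = 1.40   # average bandwidth efficiency gain quoted for GCN 1.2

HBM_CONTROLLERS = 8
ROPS_PER_CONTROLLER = 8
L2_KB_PER_CONTROLLER = 256


def bandwidth_ratio(new_gbs: float, old_gbs: float, gain: float = 1.0) -> float:
    """Ratio of (optionally compression-adjusted) new bandwidth to old bandwidth."""
    return new_gbs * gain / old_gbs


raw = bandwidth_ratio(FURY_X_GBS, R9_290X_GBS)
effective = bandwidth_ratio(FURY_X_GBS, R9_290X_GBS, COMPRESSION_GAIN)
print(round(raw, 2))        # 1.6, i.e. 60% more raw bandwidth
print(round(effective, 2))  # 2.24, roughly twice the effective bandwidth

print(HBM_CONTROLLERS * ROPS_PER_CONTROLLER)           # 64 ROPs
print(HBM_CONTROLLERS * L2_KB_PER_CONTROLLER / 1024)   # 2.0 MB of L2 cache
```

The per-controller totals agree with the 64 ROPs and 2 MB of L2 cache given elsewhere in the article.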

Important changes have taken place in video processing: the Unified Video Decoder (UVD) block has the same capabilities as in the Carrizo family APUs and provides full hardware acceleration of H.265 (HEVC) video decoding, making Fiji the first discrete GPU with such support. The video encoding capabilities of the VCE unit in Fiji have not changed: it can still encode video in H.264 format.

AMD also notes the improved scaler and Eyefinity technology, which can output images to six displays. Unfortunately, the HDMI 2.0 support that many expected is missing from the new GPU. This is a rather significant drawback, because the most affordable 4K devices are TVs, which most often have HDMI 2.0 ports and no DisplayPort. Recall that competing Nvidia graphics cards received HDMI 2.0 support in all GPUs of the second generation of the Maxwell architecture.

Among the advantages of Fiji, it remains to note the support for TrueAudio technology, which the Fiji chip also has. Introduced in the GCN 1.1 family of GPUs, this technology offers hardware-accelerated audio processing on several Tensilica DSPs similar to those included in the main chip of the Sony PlayStation 4 console. Despite all the delights of hardware audio processing in the form of offloading the main CPU from these tasks, TrueAudio support in games is limited to a few games released under the auspices of a special AMD technical and marketing program, and the likelihood of seeing it in other games is not very high.

Design Features and Cooling System

It’s no surprise that AMD chose to build the Radeon R9 Fury X, the flagship of its elite Fury series, to the same high standards that Nvidia set for the Titan. The shroud of the video card is made of several parts assembled around the printed circuit board, using aluminum alloys with different surface finishes. As a result, the card looks and feels solid, as expected of a top-end solution.

The Radeon R9 Fury X front panel, which covers the components on the board, is removable. It is fixed with four screws and can be replaced with a panel made of a different material and with its own pattern to give the card a personalized look. Since a video card usually sits inside the case with its front surface out of sight, this is not so important, but in general the idea is original and interesting.

For greater visual effect, AMD placed several LEDs on the board along with a glowing red logo: like the previous-generation dual-chip Radeon R9 295X2, the new card carries an illuminated red RADEON inscription. The new top-end video card from AMD also has several LEDs that signal the GPU operating mode, located above the PCI Express auxiliary power connectors.

A bar of eight GPU Tach LEDs shows the current GPU load: under gaming load all eight LEDs are lit, while on the desktop only one is. The color of these LEDs can be set to red or blue using a switch on the back of the board. Another, green LED located next to them indicates that the AMD ZeroCore low-power mode is active.

To keep its most powerful GPU cool, AMD decided to use a water cooling system that holds the GPU at around 50 degrees under a typical gaming load. Water cooling has long been the norm in enthusiast systems, and the Radeon R9 295X2 was the first graphics card to feature a closed-loop water cooler as its reference design.

Since the new GPU is very demanding on power and generates a lot of heat, it is no wonder that a similar system, manufactured by Cooler Master, was chosen for the Radeon R9 Fury X. The cooler has a 120 mm radiator and fan with a combined thickness of 60 mm, which is quite a lot. As a result, the liquid cooling system can easily handle up to 500 watts of heat, far exceeding the Fury X’s typical power consumption of 275 watts, which leaves a very large headroom.

We have already seen more than one water-cooled video card, but it was the combination of water cooling with HBM memory that made it possible to significantly reduce the physical dimensions of the printed circuit board and the card itself. The new memory also reduces the number of components in the board’s power system. As a result, the new product is very different from the usual top-end air-cooled video cards, which occupy a couple of slots along their entire length. The Radeon R9 Fury X PCB measures just 7.5 inches (around 190 mm), which is much shorter than typical reference cards in the upper price range.

All components on the board (GPU, VRM and memory chips) are cooled by a single system equipped with a 120 mm radiator and a high-quality fan of matching size from Nidec. The cooler handles the GPU and the related components, including the MOSFETs in the voltage regulator module (VRM), for which a dedicated pipe is routed. The GPU package itself, together with its HBM chips, is cooled by the main pump unit.

The cooler can dissipate up to 500 W of heat. The card is powered by a pair of 8-pin PCI-E connectors, which together with the slot can deliver up to 375 W, and the six-phase voltage regulator module (VRM) can supply up to 400 A of current. This leaves a large headroom for overclocking enthusiasts, since AMD rates the Fury X’s typical power draw much lower, at just 275 W.
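The headroom figures quoted here can be checked with a few lines of arithmetic. The sketch below is only an illustration: the 75 W slot limit and the 150 W per 8-pin connector limit come from the PCI Express specification rather than from this review, and the function names are invented for the example.

```python
# Rough power-budget check for the figures quoted in the text.
# Assumed (not from the review): PCIe slot = 75 W, each 8-pin plug = 150 W.
PCIE_SLOT_W = 75
PCIE_8PIN_W = 150

COOLER_CAPACITY_W = 500   # cooler dissipation quoted in the text
TYPICAL_BOARD_W = 275     # typical Fury X power draw quoted in the text


def board_power_limit(num_8pin: int) -> int:
    """Maximum in-spec power delivery: slot plus auxiliary connectors."""
    return PCIE_SLOT_W + num_8pin * PCIE_8PIN_W


def headroom(limit_w: float, typical_w: float) -> tuple[float, float]:
    """Return (absolute headroom in watts, limit as a multiple of typical)."""
    return limit_w - typical_w, limit_w / typical_w


if __name__ == "__main__":
    delivery = board_power_limit(2)
    for label, limit in (("power delivery", delivery),
                         ("cooler capacity", COOLER_CAPACITY_W)):
        watts, ratio = headroom(limit, TYPICAL_BOARD_W)
        print(f"{label}: {limit} W, headroom {watts} W ({ratio:.2f}x typical)")
```

Under these assumptions the connectors, not the cooler, are the tighter limit: power delivery leaves about 100 W above the typical draw, while the cooler leaves about 225 W.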

Since the cooling system of the Radeon R9 Fury X uses a large, high-quality fan, the cooler achieves a fairly low noise level of 32 dBA, significantly less than the 40-45 dBA typical of air cooling. The first Fury X users did complain about noise, but it came from the pump rather than the fan, and AMD promised to solve this problem in subsequent production batches.

Most enthusiasts who buy such video cards are interested in overclocking, including extreme overclocking. AMD has made that task easier by equipping its top-end single-chip card with a powerful cooler and a generous power delivery system. Overclocking is most often limited by insufficient cooling or power, and the Radeon R9 Fury X has been designed to minimize these limitations, which should please overclockers.

On the AMD Overdrive page of the AMD Catalyst Control Center, the user can set clock speeds, target temperature, fan speed, and power limit to control the performance of the graphics card. Overclocking of the new HBM video memory is not yet fully understood, but raising its clock from 500 to 600 MHz gives a noticeable speedup in games.
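To put the 500 to 600 MHz memory overclock in perspective, peak bandwidth can be estimated from the bus width and the clock. This is a rough sketch, assuming the Fury X's 4096-bit HBM interface and two transfers per clock; neither figure is stated in this section, and the function name is hypothetical.

```python
# Peak memory bandwidth estimate: bytes per transfer x transfers per second.
# Assumed (not from this section): 4096-bit HBM bus, double data rate.
HBM_BUS_WIDTH_BITS = 4096
TRANSFERS_PER_CLOCK = 2


def memory_bandwidth_gbs(bus_width_bits: int, clock_mhz: float,
                         transfers_per_clock: int = TRANSFERS_PER_CLOCK) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    # MHz * bytes gives MB/s; divide by 1000 to get GB/s.
    return bytes_per_transfer * clock_mhz * transfers_per_clock / 1000


if __name__ == "__main__":
    stock = memory_bandwidth_gbs(HBM_BUS_WIDTH_BITS, 500)
    overclocked = memory_bandwidth_gbs(HBM_BUS_WIDTH_BITS, 600)
    gain = (overclocked / stock - 1) * 100
    print(f"stock: {stock:.1f} GB/s, overclocked: {overclocked:.1f} GB/s "
          f"(+{gain:.0f}%)")
```

With these assumptions the overclock raises theoretical peak bandwidth by 20%, in proportion to the clock; the actual gain in games depends on how bandwidth-bound each title is.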

At the time of the card's release, its overclocking capabilities were seriously limited: AMD did not allow users to raise the voltage or overclock the HBM memory, so only the GPU frequency and the total power limit could be increased, although there is substantial headroom there. As in the top-end cards of the previous generation, a Dual BIOS switch also helps, letting you choose between a fixed reference BIOS image and a modified one.

The new High Bandwidth Memory standard

As we already mentioned, the main innovation of the AMD Radeon R9 Fury X video card is its use of a new video memory standard, High Bandwidth Memory (HBM). Until now, video cards have relied on GDDR5 memory, an evolutionary development of well-known standards; although it improved performance and power consumption compared to GDDR3/GDDR4, these improvements were not very significant.

The foundations of the old DRAM standards are many years old, and successive modifications have delivered bandwidth gains that fall far short of the growth in GPU performance. In twenty years, improvements in standards have raised memory bandwidth (BW) by only about 50 times, while GPU computing speed has grown much more over the same period. The industry therefore needed new types of memory that would offer fundamentally different capabilities.
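The "about 50 times in twenty years" figure is easier to judge as a yearly rate. The following is a minimal sketch of that conversion, assuming steady compound growth, which is a simplification; the function names are made up for the illustration.

```python
import math


def annual_growth_rate(total_factor: float, years: float) -> float:
    """Compound annual growth rate implied by an overall growth factor."""
    return total_factor ** (1 / years) - 1


def doubling_time_years(rate: float) -> float:
    """Years needed to double at a given compound annual rate."""
    return math.log(2) / math.log(1 + rate)


if __name__ == "__main__":
    rate = annual_growth_rate(50, 20)  # ~50x over 20 years, from the text
    print(f"annual growth: {rate * 100:.1f}%")
    print(f"doubling time: {doubling_time_years(rate):.1f} years")
```

Under this assumption, memory bandwidth grew by a little over 20% per year, doubling roughly every three and a half years.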